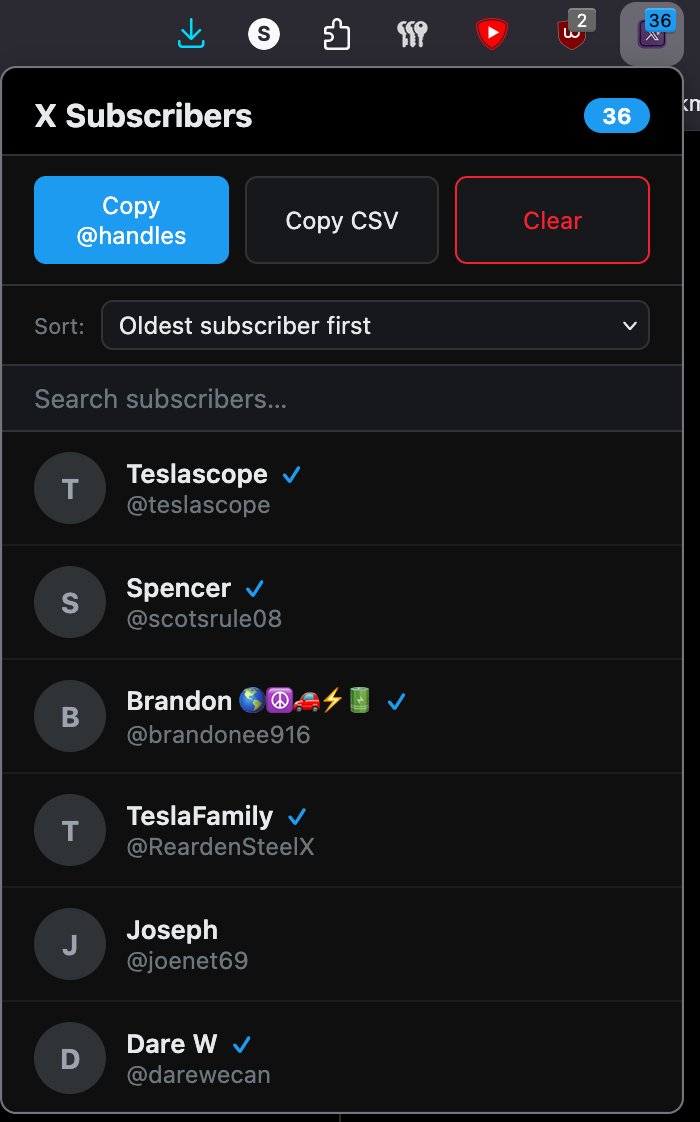

Teslascope @teslascope

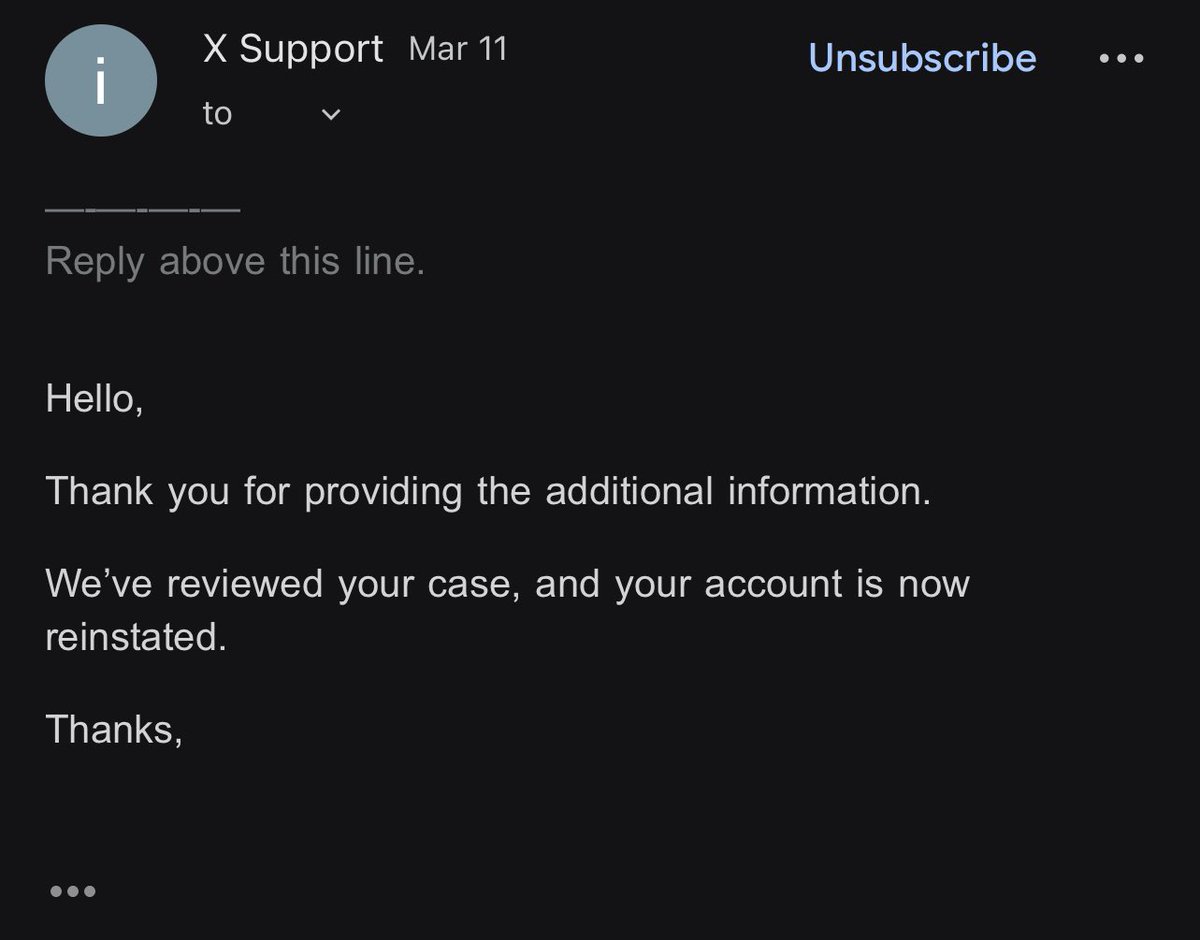

We review cases like this all the time; our entire career has been spent managing and studying data from these vehicles through our platform (either with the vehicle owner's consent or in de-identified form).

Within the past 24 hours, multiple test drives were taken at this location on both the latest and older FSD lane-assist stacks (including versions older than the one installed in the vehicle involved in this incident).

In both cases, the vehicle traveled more slowly (35-49 mph) than it did in the incident (54-68 mph at the mark 4 seconds before the crash).

This suggests that the driver was pressing the accelerator, forcing the vehicle to go faster than it normally would. Doing so does not deactivate lane assist/FSD, but the system treats it as a manual override (which it is).

Given the speed of travel, the turn ahead, and the posted speed signs (the vehicle was traveling at 3-4x the posted speed), all of this supports the narrative that the driver was not paying attention to the road and was potentially driving recklessly.

If the vehicle had been operating normally at its own suggested speed, it would likely have handled the corner with ease, as our tests on the same vehicle, as well as on other models, have shown.

All speculative, and all opinion, of course.