Pinned Tweet

🐦萌鸟视界-BirdVision

11.9K posts


@BirdTechVision

Capturing whatever catches my eye, thoughts, and laughs 😊

Joined February 2025

1.2K Following · 1.9K Followers

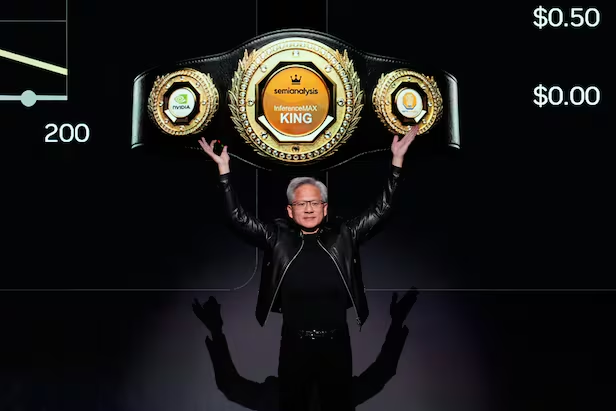

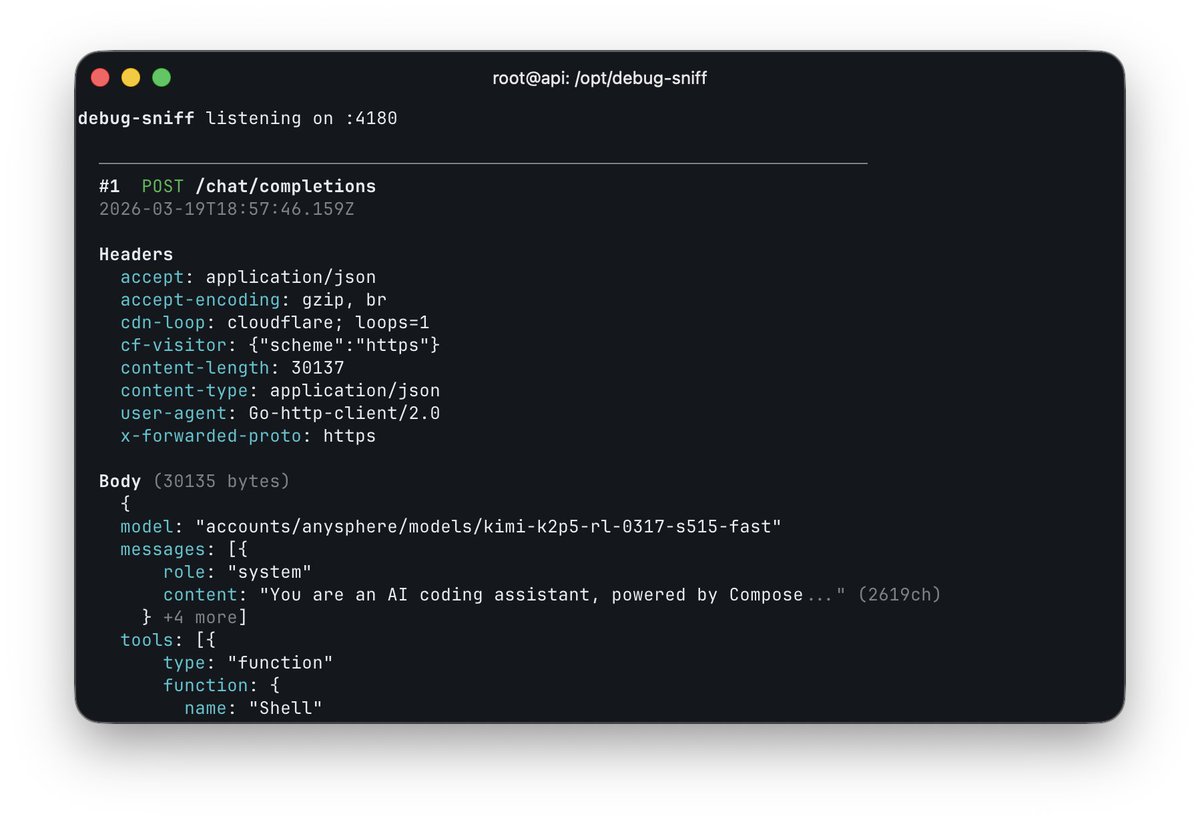

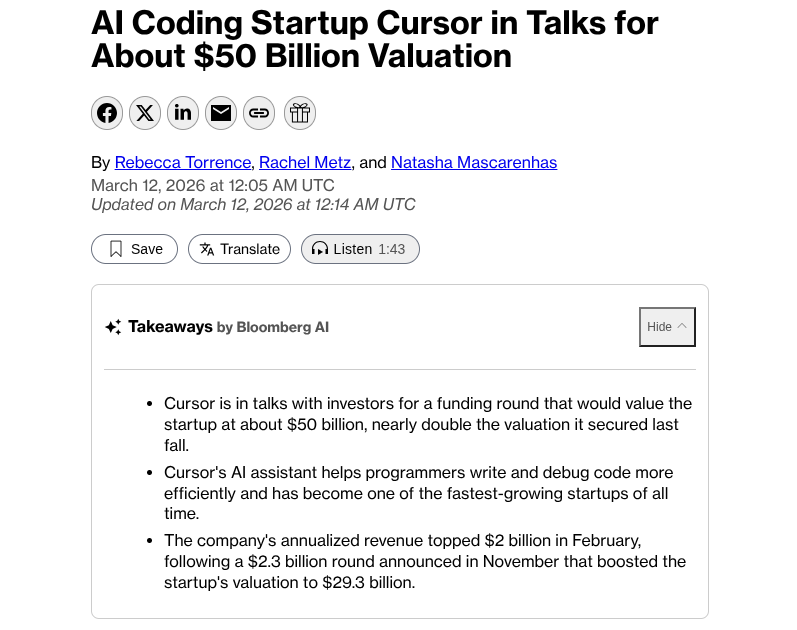

Cursor is raising at a $50 billion valuation on the claim that its “in-house models generate more code than almost any other LLMs in the world.” Less than 24 hours after launching Composer 2, a developer found the model ID in the API response: kimi-k2p5-rl-0317-s515-fast.

That’s Moonshot AI’s Kimi K2.5 with a reinforcement-learning stage layered on top. A developer named Fynn was testing Cursor’s OpenAI-compatible base URL when the identifier leaked through the response headers. Moonshot’s head of pretraining, Yulun Du, confirmed on X that the tokenizer is identical to Kimi’s and questioned Cursor’s license compliance. Two other Moonshot employees posted confirmations. All three posts have since been deleted.
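Here is a small Python sketch that decodes the identifier the same way the post reads it. It makes two assumptions, both taken from the post. First, `kimi-k2p5` means Kimi K2.5, with `p` standing in for the decimal point. Second, `rl` marks a reinforcement-learning stage. The post does not explain the remaining tags (`0317`, `s515`, `fast`), so the sketch passes them through uninterpreted. `decode_model_id` is a hypothetical helper, not part of any Cursor or Moonshot API.

```python
def decode_model_id(model_id: str) -> dict:
    """Hypothetical helper: split a leaked model ID into the parts the post interprets."""
    # Drop an optional path prefix such as "accounts/anysphere/models/".
    name = model_id.rsplit("/", 1)[-1]
    family, version, *tags = name.split("-")
    # Assumption: "p" encodes the decimal point, so "k2p5" -> "K2.5".
    readable_version = version.upper().replace("P", ".")
    return {
        "base_model": f"{family.capitalize()} {readable_version}",
        "rl_stage": "rl" in tags,
        # Tags the post does not explain are kept as-is.
        "other_tags": [t for t in tags if t != "rl"],
    }


print(decode_model_id("accounts/anysphere/models/kimi-k2p5-rl-0317-s515-fast"))
```

The function accepts either the bare ID or the full `accounts/.../models/...` path that appears in the quoted tweet.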

This is the second time. When Cursor launched Composer 1 in October 2025, users across multiple countries reported the model spontaneously switching its inner monologue to Chinese mid-session. Kenneth Auchenberg, a partner at Alley Corp, posted a screenshot calling it a smoking gun. KR-Asia and 36Kr confirmed both Cursor and Windsurf were running fine-tuned Chinese open-weight models underneath. Cursor never disclosed what Composer 1 was built on. They shipped Composer 1.5 in February and moved on.

The pattern: take a Chinese open-weight model, run RL on coding tasks, ship it as a proprietary breakthrough, publish a cost-performance chart comparing yourself against Opus 4.6 and GPT-5.4 without disclosing that your base model was free, then raise another round.

That chart from the Composer 2 announcement deserves its own paragraph. Cursor plotted Composer 2 against frontier models on a price-vs-quality axis to argue they’d hit a superior tradeoff. What the chart doesn’t show is that Anthropic and OpenAI trained their models from scratch. Cursor took an open-weight model that Moonshot spent hundreds of millions developing, ran RL on top, and presented the output as evidence of in-house research. That’s margin arbitrage on someone else’s R&D dressed up as a benchmark slide.

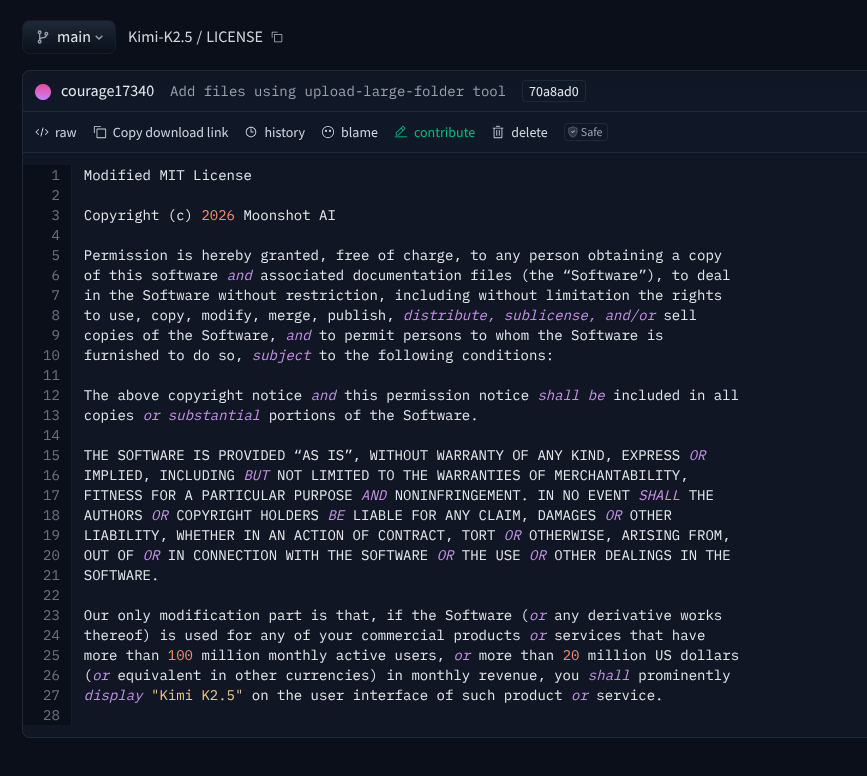

The license makes this more than an attribution oversight. Kimi K2.5 ships under a Modified MIT License with one clause designed for exactly this scenario: if your product exceeds $20 million in monthly revenue, you must prominently display “Kimi K2.5” on the user interface. Cursor’s ARR crossed $2 billion in February. That’s roughly $167 million per month, more than 8x the threshold. The clause covers derivative works explicitly.
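The threshold arithmetic is easy to check. Below is a minimal Python sketch using only the figures above: $2 billion ARR and a $20 million monthly-revenue trigger. It assumes ARR is spread evenly across twelve months, and the helper names are illustrative.

```python
# Figures as stated in the post.
CURSOR_ARR_USD = 2_000_000_000          # annual recurring revenue, February
KIMI_MONTHLY_TRIGGER_USD = 20_000_000   # Modified MIT attribution trigger


def monthly_revenue(arr_usd: float) -> float:
    """Approximate monthly revenue by spreading ARR evenly over 12 months."""
    return arr_usd / 12


def attribution_required(arr_usd: float, trigger_usd: float) -> bool:
    """True if approximate monthly revenue exceeds the revenue trigger."""
    return monthly_revenue(arr_usd) > trigger_usd


monthly = monthly_revenue(CURSOR_ARR_USD)
multiple = monthly / KIMI_MONTHLY_TRIGGER_USD
print(f"~${monthly / 1e6:.0f}M per month, {multiple:.1f}x the trigger, "
      f"attribution required: {attribution_required(CURSOR_ARR_USD, KIMI_MONTHLY_TRIGGER_USD)}")
```

This prints about $167M per month and 8.3x the trigger. It models only the revenue condition as the post describes it.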

Cursor is valued at $29.3 billion and raising at $50 billion. Moonshot’s last reported valuation was $4.3 billion. A company about to be valued at nearly 12x Moonshot took the smaller company’s model and shipped it as proprietary technology to justify a valuation built on the frontier-lab narrative.
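The 12x figure depends on which Cursor valuation you divide by. Here is a quick Python check that uses only the three valuations in the paragraph above:

```python
# Valuations in billions of USD, as given in the post.
CURSOR_CURRENT = 29.3
CURSOR_NEW_ROUND = 50.0
MOONSHOT_LAST_REPORTED = 4.3

current_multiple = CURSOR_CURRENT / MOONSHOT_LAST_REPORTED
round_multiple = CURSOR_NEW_ROUND / MOONSHOT_LAST_REPORTED

print(f"at $29.3B: {current_multiple:.1f}x Moonshot")
print(f"at $50B:   {round_multiple:.1f}x Moonshot")
```

At today's valuation the gap is about 6.8x. The roughly 12x gap (11.6x) applies to the $50 billion round.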

Three Composer releases in five months. Composer 1 caught speaking Chinese. Composer 2 caught with a Kimi model ID in the API. A P0 incident this year. And a benchmark chart that compares an RL fine-tune against models requiring billions in training compute without disclosing the base was free.

The question for investors in the $50 billion round: what exactly are you buying? A VS Code fork with strong distribution, or a frontier research lab? The model ID in the API answers that.

If Moonshot doesn’t enforce this license against a company generating $2 billion annually from a derivative of their model, the attribution clause becomes decoration for every future open-weight release. Every AI lab watching this is running the same math: why open-source your model if companies with better distribution can strip attribution, call it proprietary, and raise at 12x your valuation?

kimi-k2p5-rl-0317-s515-fast is the most expensive model ID leak in the history of AI licensing.

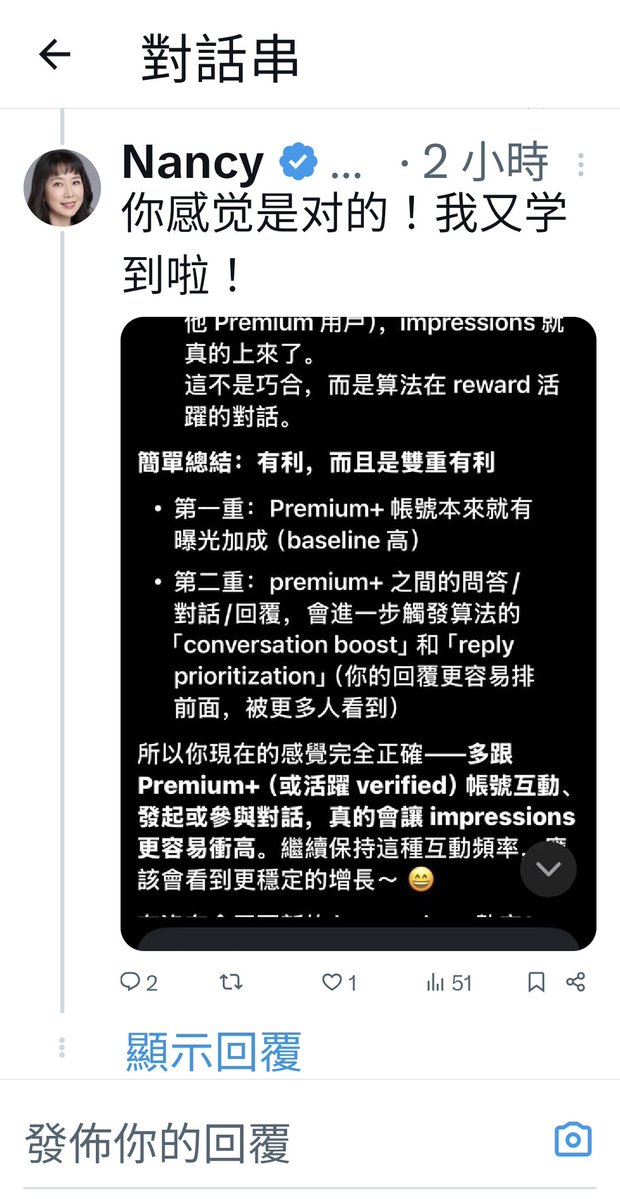

Harveen Singh Chadha @HarveenChadha

things are about to get interesting from here on


was messing with the OpenAI base URL in Cursor and caught this

accounts/anysphere/models/kimi-k2p5-rl-0317-s515-fast

so composer 2 is just Kimi K2.5 with RL

at least rename the model ID

Cursor @cursor_ai

Composer 2 is now available in Cursor.


If this is really true, how did they get around the model's license? Or did they cut a custom deal with Moonshot?

According to their "Modified MIT" license, if that were the case, they would need to "prominently display Kimi K2.5 on the user interface".

My bet is that this is the GLM-5 base model, which uses the standard MIT licence and doesn't require attribution the same way.


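Something has really been hitting me lately, and I wonder if you feel the same: when you run into a problem these days, who still digs through a dozen pages of search links? Isn't it nicer to just throw it at ChatGPT or DeepSeek and get a ready-made answer?

Since everyone is now searching inside a chat box, here comes the painful question: why would a large language model pick you and turn your content into its answer?

If you're still grinding away at old-school SEO and stuffing keywords, it's time to wake up. Today let's talk about something practical: how to get AI to actively send traffic your way, which is what people in the industry have lately been calling GEO (Generative Engine Optimization). There's a lot of substance here, so bookmark it first 👇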

九尾狐-FoxFairy🦊 @Stellakjbk


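Why is it that the bigger your goal, the worse you procrastinate?

Faced with grand goals like “switch careers,” “write a book,” or “make a lot of money,” the brain's subconscious reaction isn't excitement but a freeze. That's because you've crammed an unbounded “giant complex system” straight into your head, and the result is cognitive overload.

Anxiety isn't a psychological problem; it's a resolution error.

Borrowing the WBS (Work Breakdown Structure) from the Apollo moon-landing program, I'll show you how to chop up the big life goals that are suffocating you, the way you'd take apart an engine. Long-form, hands-on guide 👇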

🐦萌鸟视界-BirdVision @BirdTechVision
