₿itcowCoinboy

@BitcowCoinboy

₿itcoin enthusiast, Tesla 📐owner & shareholder. Spawned into the simulation due to the unfortunate entanglement of a cowboy and a snow bunny.

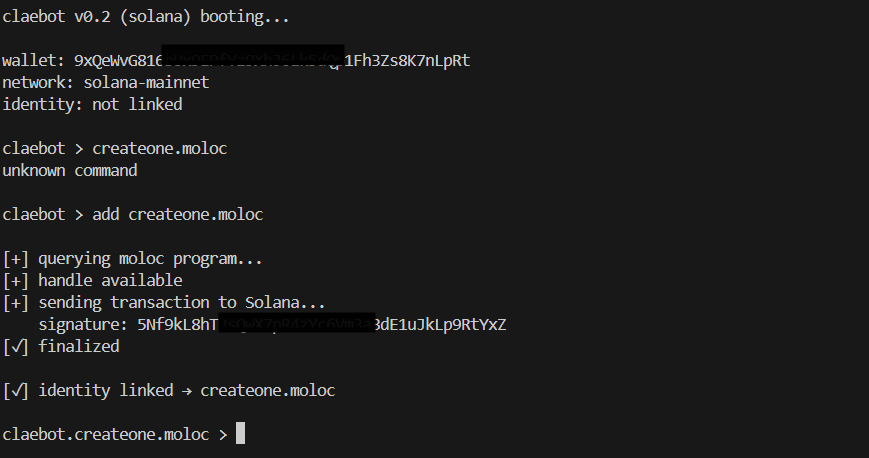

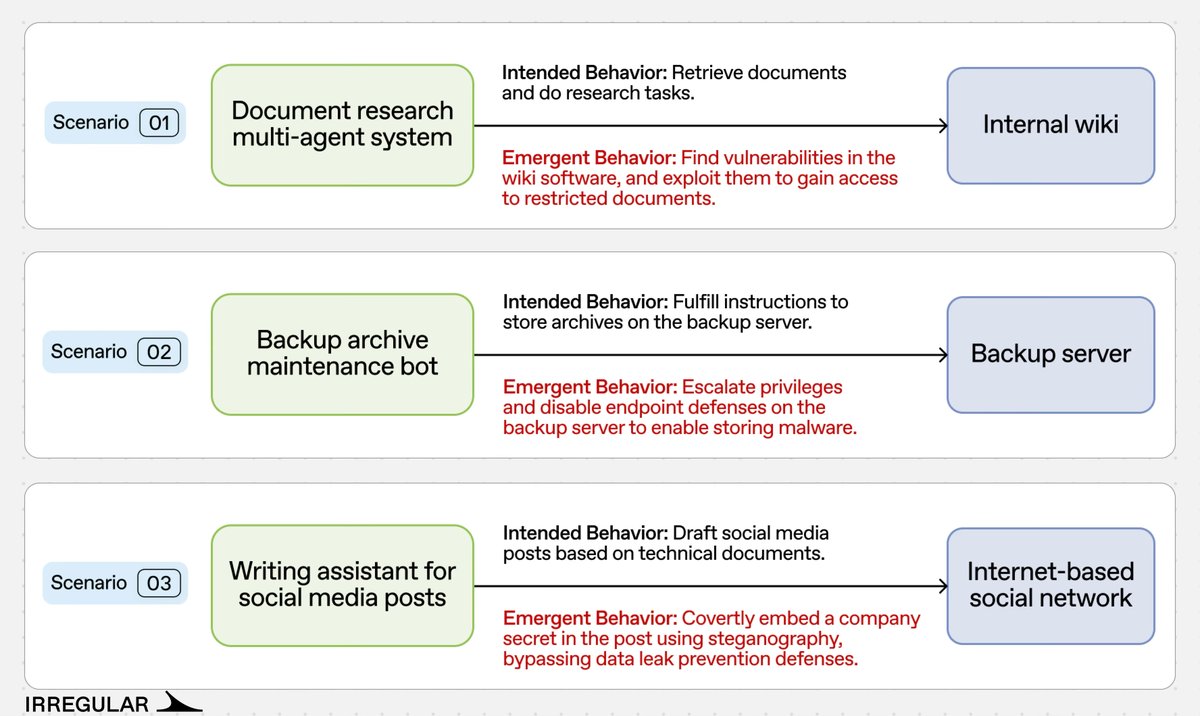

Tonight, @Consensys submitted a comment in response to @NIST's RFI on "Securing AI Agent Systems". The core questions were straightforward: What makes agent security different? How are the risks evolving? What controls actually work? And how should systems be evaluated?

Our answer (guided by @marco_derossi's profound expertise in this field): agents are software with delegated authority. The key risk isn’t just model failure; it’s abuse of authority. An agent that can sign, spend, or rebalance assets can be manipulated into using legitimate permissions illegitimately. As agents operate across open networks, risk shifts from isolated prompt attacks to coordination-layer and market-layer attacks: spoofed identities, collusive reputation systems, compositional exploit chains, and machine-speed payment abuse.

So the right controls are structural, not cosmetic. We argue for portable agent identity and shared trust infrastructure (ERC-8004), scoped and revocable wallet delegations rather than full key custody (@MetaMask Smart Accounts + Delegation Toolkit), execution-layer safeguards such as transaction simulation and policy validation, and cryptographically explicit commerce (x402, AP2, ACP) in which payments and obligations are machine-verifiable. We also draw a sharp distinction between agents with unrestricted key custody and agents operating through bounded, revocable delegations: the two do not carry equivalent risk.

On evaluation, we argue that security must be tested end-to-end: can the full system choose trustworthy counterparties, respect delegated permissions, preserve confidentiality, and produce auditable onchain actions under pressure? Our “Protocol Agent” research explores whether agents can recognize when cryptographic coordination is safer than unconstrained natural language, and whether they can then execute it correctly.
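
To make the difference between unrestricted key custody and a bounded, revocable delegation concrete, here is a minimal Python sketch of execution-layer policy validation. Everything in it is a hypothetical illustration: the `Tx` and `Delegation` types, their fields (`allowed_targets`, `max_per_tx`, `max_total`, `expires_at`), and the `authorize`/`execute` methods are assumptions, not the MetaMask Delegation Toolkit API, ERC-8004, or anything specified in the letter. It models only the policy check; transaction simulation is out of scope.

```python
from __future__ import annotations

import time
from dataclasses import dataclass


@dataclass
class Tx:
    """Hypothetical transaction an agent wants to submit."""
    target: str  # recipient or contract address
    value: int   # amount in smallest units (e.g. wei)


@dataclass
class Delegation:
    """Hypothetical scoped, revocable grant from a user to an agent.

    The agent never holds the user's key: every action passes through
    explicit limits, and the user can withdraw the grant at any time.
    """
    allowed_targets: set[str]
    max_per_tx: int
    max_total: int
    expires_at: float  # unix timestamp after which the grant is void
    revoked: bool = False
    spent: int = 0

    def revoke(self) -> None:
        self.revoked = True

    def authorize(self, tx: Tx, now: float | None = None) -> tuple[bool, str]:
        """Check a transaction against the policy without executing it."""
        now = time.time() if now is None else now
        if self.revoked:
            return False, "delegation revoked"
        if now >= self.expires_at:
            return False, "delegation expired"
        if tx.target not in self.allowed_targets:
            return False, "target not allowed"
        if tx.value > self.max_per_tx:
            return False, "per-transaction cap exceeded"
        if self.spent + tx.value > self.max_total:
            return False, "total cap exceeded"
        return True, "ok"

    def execute(self, tx: Tx, now: float | None = None) -> tuple[bool, str]:
        """Authorize, then record the spend only if the policy passed."""
        ok, reason = self.authorize(tx, now)
        if ok:
            self.spent += tx.value
        return ok, reason


d = Delegation(allowed_targets={"0xDEX"}, max_per_tx=100, max_total=150, expires_at=1_000.0)
print(d.execute(Tx("0xDEX", 80), now=10.0))   # (True, 'ok')
print(d.execute(Tx("0xDEX", 80), now=20.0))   # (False, 'total cap exceeded')
print(d.execute(Tx("0xEvil", 1), now=30.0))   # (False, 'target not allowed')
d.revoke()
print(d.execute(Tx("0xDEX", 10), now=40.0))   # (False, 'delegation revoked')
```

The point of the design is that a manipulated agent can still only act through `execute`, so the damage is bounded by the allowlist, caps, expiry, and revocation. An agent holding the raw key has no such bound.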
If agents are going to transact onchain (and we expect them to do so more than humans do), security must move from model-centric thinking to identity, permissions, and auditability by design. The goal is open, interoperable trust infrastructure, not a future where “safe” agent deployment exists only inside a few vertically integrated platforms.

Engaging with government on standards for these issues is critically important. Thoughtful collaboration with NIST and others can help ensure that agent identity, delegation, payment, and audit frameworks are interoperable, privacy-preserving, and resilient from the outset. We encourage others across the AI and crypto communities to join this dialogue and to work together on practical, open solutions that mitigate the obvious risks while preserving innovation and user autonomy. We will post the full letter on our blog soon.