EmPRISE Lab

@EmpriseLab

EmPRISE Lab in CS at Cornell University. We are a full-stack robotics lab. Our vision is to EMpower People with Robots and Intelligent Shared Experiences.

UPDATE: The regular registration deadline has been extended to Wednesday, September 10th! Join us at Cornell on Oct 11 for a day of robotics talks, posters, and connections across the Northeast. 🔗 events.ces.scl.cornell.edu/event/NERC

Most assistive robots live in labs. We want to change that. FEAST enables care recipients to personalize mealtime assistance in-the-wild, with minimal researcher intervention across diverse in-home scenarios. 🏆 Outstanding Paper & Systems Paper Finalist @RoboticsSciSys 🧵1/8

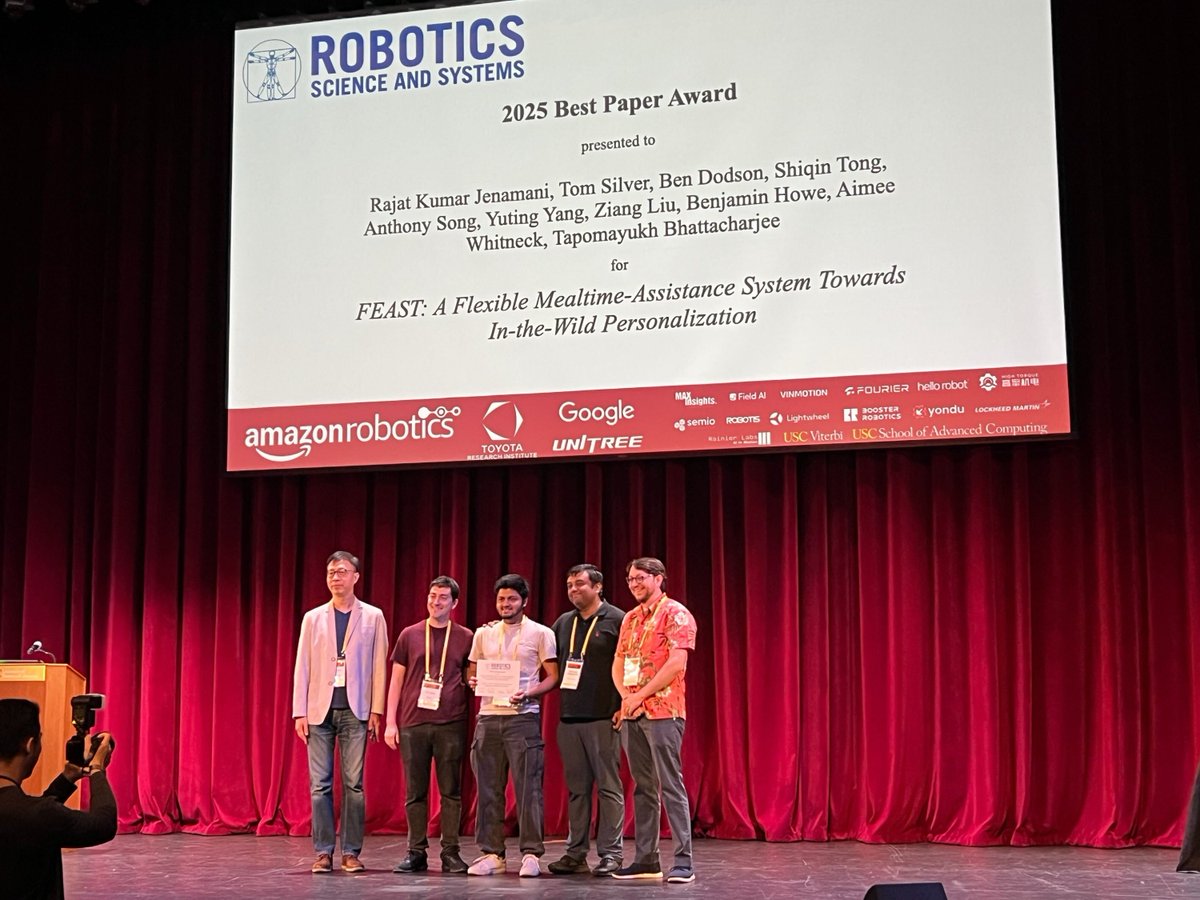

Congrats @rkjenamani and the entire team @EmpriseLab on this impressive accomplishment and on being nominated for the Best Paper Award and Best Systems Paper Award at #RSS 2025! This project took almost 2.5 years to get to this stage, and I am incredibly proud of what we have achieved in terms of real-world deployment of a meal-assistance system with real users in their homes, with minimal researcher intervention, leveraging in-the-wild personalization. The key insight is that for in-the-wild deployment of user-centered systems, adaptation and personalization need to go hand-in-hand with transparency and safety. More technical details are in the thread 🧵 below. @rkjenamani will be presenting this work on Monday (June 23, 2025) @RoboticsSciSys 2025 in the #HRI session. Do attend :-) Website: emprise.cs.cornell.edu/feast @CornellCIS @Cornell_CS @EmpriseLab

🚀 Wrapping up an incredible journey at the PhyRC Challenge (emprise.cs.cornell.edu/rcareworld/cha…) at #ICRA2025! Over the past few months, teams from around the world have been tackling challenging tasks in assistive robotics: dressing and bed-bathing.

The journey began in RCareWorld (emprise.cs.cornell.edu/rcareworld/), our human-centric simulation platform, where teams first demonstrated their solutions virtually. The top performers then advanced to the real-world phase at ICRA 2025, executing the tasks with a manikin in a physical setting.

The winners were awarded real robots as prizes, including a Kinova Gen 3 robot sponsored by @KinovaRobotics for the dressing winners, and a Stretch 3 robot from @hellorobotinc for the bed-bathing winners. A huge thank you to the sponsors!

🏆 Congratulations to our winning teams:

RoboNotts, Bed-Bathing Track (Jialin Chen, Koyo Fujii, Kalu Stephen, Areeb Akhter, Zakaria Taghi, Liz Felton, Luis Figueredo, @praminda, Aly Magassouba from University of Nottingham)

UniChAMPions, Dressing Track (Maria Fernanda Paulino Gomes, César Bastos da Silva, Elton Cardoso do Nascimento, Ervin Bolivar H., Esther Luna Colombini, Paula Dornhofer Paro Costa, Leonardo Rocha Olivi, and Eric Rohmer from Universidade Estadual de Campinas, @FEECUnicamp, and HIAAC UNICAMP)

We’d also like to recognize the incredible efforts of the RALLA Team (Eunice Firewood, John Bateman, @jihongzhu, Jian Zhao, and @kefhuang from University of York) and the UWMTR Team (Cheng Tang, Hao Tian, Hasan Khan, Siha Pyo, Eddy Zhang, and Chao Tang from @UWaterloo) for their participation throughout the challenge.

Thank you to all the organizing team members who made the PhyRC Challenge possible! @tomssilver @rishabhmadan96 @rohanbbanerjee @ZhanxinWu0725 @TapoBhat

Check out the highlight video below to relive some of the incredible moments!

Happy to share a new preprint: "Coloring Between the Lines: Personalization in the Null Space of Planning Constraints" w/ @rkjenamani, @RealZiangLiu, Ben Dodson, and @TapoBhat. TLDR: We propose a method for continual, flexible, active, and safe robot personalization. Links 👇

The @EmpriseLab is thrilled to announce the PhyRC Challenge, a competition to facilitate innovation in physical robotic caregiving. The competition has two tracks (Track 1: Fixed-base Manipulation for Robot-assisted Dressing and Track 2: Mobile Manipulation for Robot-assisted Bed Bathing), each evaluated in two phases: Phase 1 (Simulation Phase) and Phase 2 (Real Robot Phase). We would like to thank @KinovaRobotics for generously sponsoring a Gen 3 7-DoF robot arm for the Track 1 winning team and @hellorobotinc for generously sponsoring a Stretch 3 robot for the Track 2 winning team. (1/4)