Tom Silver

440 posts

@tomssilver

Assistant Professor @Princeton. Developing robots that plan and learn to help people.

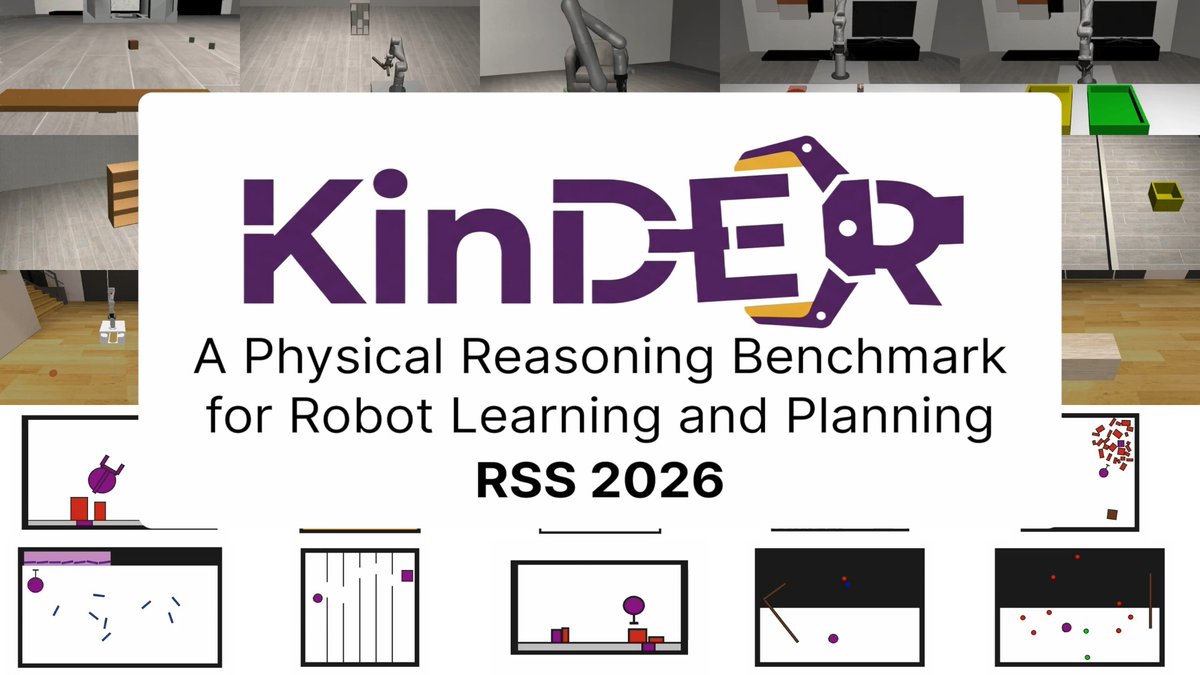

Meet KinDER: a stress test for robot physical reasoning. All 13 methods failed 😈

- 🌎 25 environments
- ♾️ Infinite tasks
- 🏋️ Gymnasium API
- ⚒️ Over 20 parameterized skills
- 🪧 Human demonstrations
- 📊 13 baselines (planning and learning)

From @Princeton @CMU_Robotics @ICatGT @CambridgeMLG @nvidia @MIT_CSAIL 🧵 1/n

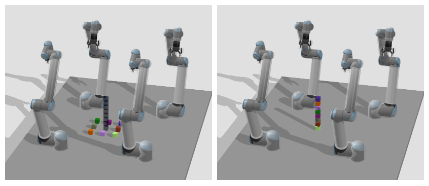

KinDER introduces five core physical reasoning challenges in dynamic and kinematic environments, in both 2D and 3D. These are bottlenecks for state-of-the-art robot planning and manipulation. Progress on KinDER will mean real progress for robotics. 🧵 2/n
