Tapomayukh "Tapo" Bhattacharjee

486 posts

@TapoBhat

Assistant Professor at CS, Cornell. Leads @EmpriseLab. Interested in Assistive Robotics, Human-Robot Interaction, Robot Manipulation, Haptic Perception.

Ithaca, NY · Joined May 2011

354 Following · 1.6K Followers

Pinned Tweet

Physical caregiving is one of robotics' hardest frontiers: it is contact-rich, physically intensive, long-horizon, safety-critical, and full of deformable objects.

Physical caregiving tasks such as bathing, dressing, transferring, toileting, and grooming require professional training and considerable practical experience. Yet no existing dataset captures how expert caregivers perceive, interact, and adapt in real time while performing these tasks, in a form that robots can learn from.

✨ We introduce OpenRoboCare at #IROS2025, the first expert-collected, multi-task, multimodal dataset for physical robot caregiving, featuring:

🩺 21 expert occupational therapists demonstrating caregiving procedures

🛠️ 15 caregiving tasks across 5 Activities of Daily Living (bathing, dressing, transferring, toileting, grooming)

🧍 2 hospital-grade manikins for safety and repeatability

🎥 5 synchronized sensing modalities: RGB-D, pose tracking, eye gaze, tactile sensing, and expert task & action annotations

📂 315 sessions · 19.8 hrs · 31,185 samples

Beyond raw data, OpenRoboCare distills core physical caregiving insights:

- 3 core principles followed by occupational therapists: pre-positioning, anticipation of body mechanics, and task efficiency.

- 4 key physical techniques: the bridge strategy, segmental rolling, wheelchair recline, and stabilization of key control points.

- Quantitative patterns in task duration, predictive gaze behavior that precedes physical contact, and the timing, magnitude, and spatial distribution of contact forces across body regions and task phases.

The dataset will be made openly accessible through the AWS Open Data Sponsorship Program soon.

🌐 Check out our project website for more visuals and insights: emprise.cs.cornell.edu/robo-care/

This work is led by: @xiaoyul14, @RealZiangLiu, and Kelvin Lin. This is a collaboration with Harold Soh's group from NUS and @DimitropoulouDr from CUIMC.

@EmpriseLab @Cornell_CS @IROS2025 @awscloud

Check out @rohanbbanerjee's work on confidence- and workload-aware robot failure recovery methods for modular robot systems at #HRI2026.

In a robot system, failures can stem from errors anywhere in the pipeline, from perception and planning to control. In user-facing settings, it makes sense to leverage the user, who is already present, to recover from errors that the robot cannot recover from autonomously. However, instead of treating users as "tools" to improve robot performance whenever the robot is uncertain, let's make human factors central to these algorithms. In this paper, @rohanbbanerjee considers one such factor: the user's querying workload.

The key insight is that by combining "module selectors" (which decide what to query) with "querying algorithms" (which decide when to query), it is possible to balance robot task performance against user workload across a wide variety of modular human-robot collaboration settings, and this leads to higher user satisfaction.
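To make the "what to query" / "when to query" split concrete, here is a toy sketch. It is not the paper's algorithm: the least-confident-module selector, the fixed confidence threshold, and the query budget used as a crude proxy for user workload are all illustrative assumptions, and `ModuleOutput`, `select_module`, `should_query`, and `decide` are hypothetical names.

```python
from dataclasses import dataclass

@dataclass
class ModuleOutput:
    name: str          # hypothetical module name, e.g. "perception"
    confidence: float  # hypothetical confidence score in [0, 1]

def select_module(outputs):
    """Module selector ("what to query"): pick the least-confident module."""
    return min(outputs, key=lambda m: m.confidence)

def should_query(module, threshold, queries_so_far, query_budget):
    """Querying rule ("when to query"): ask the user only if the selected
    module is below the confidence threshold AND the query budget, a crude
    stand-in for user workload, is not yet used up."""
    return module.confidence < threshold and queries_so_far < query_budget

def decide(outputs, threshold=0.6, queries_so_far=0, query_budget=3):
    """Return ("query_user", module_name) or ("proceed", None)."""
    module = select_module(outputs)
    if should_query(module, threshold, queries_so_far, query_budget):
        return ("query_user", module.name)
    return ("proceed", None)

outputs = [ModuleOutput("perception", 0.4), ModuleOutput("planning", 0.9)]
print(decide(outputs))                    # ('query_user', 'perception')
print(decide(outputs, queries_so_far=3))  # ('proceed', None)
```

Once the budget is spent, the robot proceeds on its own even when uncertain; that trade-off between task performance and user workload is exactly what the real selectors and querying algorithms are designed to manage more carefully.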

In addition to providing failure-recovery guidelines (which algorithms to use for module selection and querying) for a wide variety of robot applications that vary in complexity (number of modules, module redundancies, confidence scores, noisy experts, user workload, etc.), @rohanbbanerjee deployed his algorithms on a real robot for robot-assisted feeding and evaluated them in 3 real-robot user studies with 22 participants, including 2 individuals with mobility limitations in their own homes. @EmpriseLab @Cornell_Bowers @HRI_Conference

Project website with paper and video (needs sound for narration): emprise.cs.cornell.edu/modularhil/

Rohan Banerjee @rohanbbanerjee

Hi all! I'm at #HRI2026 in Edinburgh this week, presenting our work: A Human-in-the-Loop Confidence-Aware Failure Recovery Framework for Modular Robot Policies. Check out our talk on 3/18 at the Trust and Safety 2 session (Session 6A) @ 11:40am! More in the thread below🧵

Tapomayukh "Tapo" Bhattacharjee retweeted

Calling all researchers! 🤖The CoRL 2026 website is officially live at corl.org with key dates for your submissions:

🗓 May 25: Abstract Submission

🗓 May 28: Full Paper Submission

🗓 Nov 9-12: Conference in Austin, TX

Send us your coolest work!

#RobotLearning

Very excited to be a part of this assistive mobility + manipulation effort!

Kinova @KinovaRobotics

Kinova joins ARPA-H’s $41M RAMMP program to advance assistive mobility + manipulation: smart wheelchair, next-gen Jaco arm, and AI-driven HMIs—boosting independence and safety. More info 👉 bit.ly/4nBrbyY #Kinova #Robotics #Accessibility

Tapomayukh "Tapo" Bhattacharjee retweeted

Tapomayukh Bhattacharjee (@TapoBhat) of @Cornell_Bowers has received up to $2.4M from @ARPA_H to develop a robot-assisted system that will not only prepare meals for people with severe mobility limitations but also feed them and clean up afterward. news.cornell.edu/stories/2025/1…

Tapomayukh "Tapo" Bhattacharjee retweeted

Tapomayukh "Tapo" Bhattacharjee retweetledi

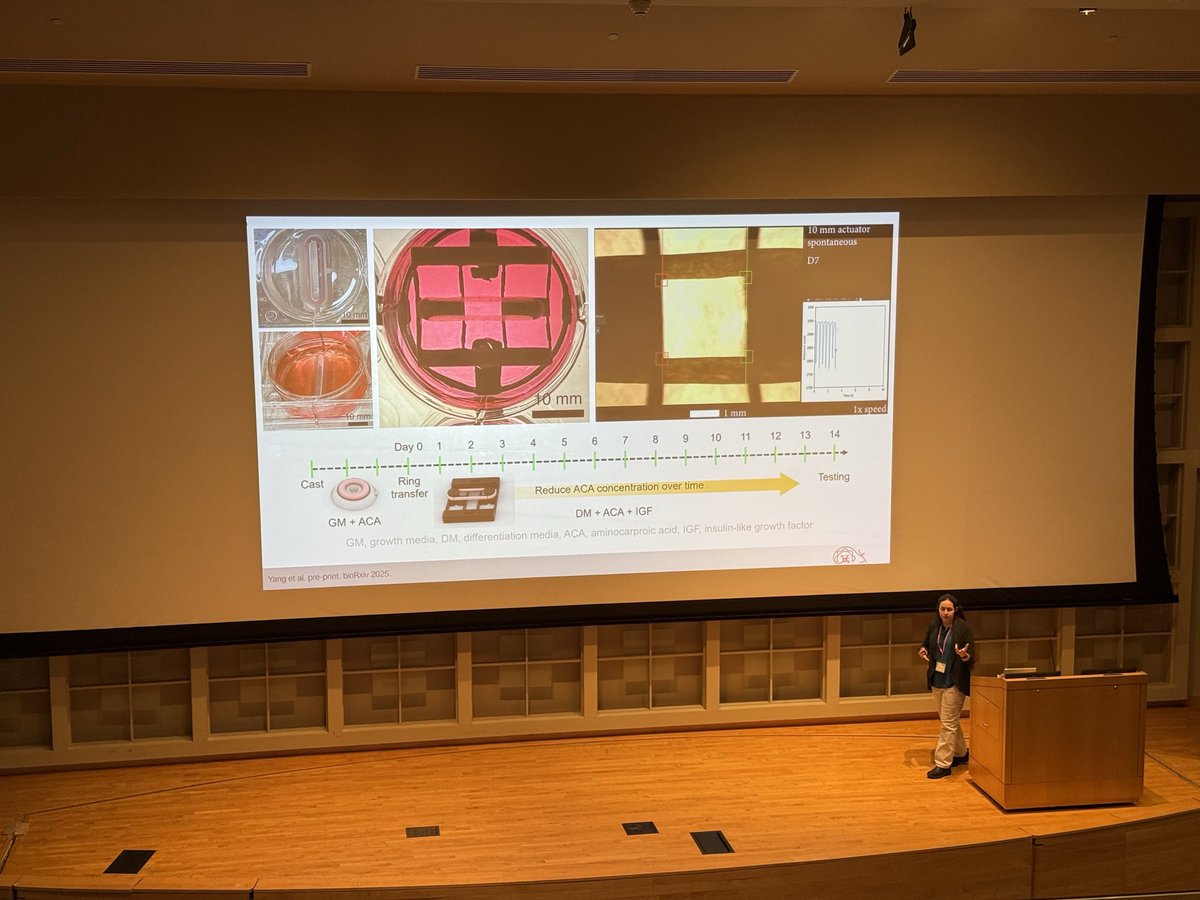

Exciting talks (with show-and-tell from Jeffrey!) happening now from our first two rising stars, @JeffreyILipton and @UksangYoo! #NERC2025

Tapomayukh "Tapo" Bhattacharjee retweeted

Kicking off our last session with an exciting talk from @Majumdar_Ani on robot safety! #NERC2025

Tapomayukh "Tapo" Bhattacharjee retweeted

Only two days left to register for NERC!

Join us Oct 11 at Cornell for an exciting day of robotics talks, posters, and networking.

Register by *this Friday,* Oct 3 to join us in Ithaca next week.

🎟️ events.ces.scl.cornell.edu/event/NERC

(3/3) 🧵

We also evaluate PrioriTouch in environments that result in complex trajectories with multiple contacts to demonstrate its scalability.

🔧 TL;DR

PrioriTouch = Learning-to-rank + Hierarchical Control + Sim-in-the-loop → personalized, multi-contact pHRI that respects comfort without sacrificing the task.

(2/3) 🧵

👥Grounding preferences and real-world user study

We first elicit population-level preferences across 37 body regions (n=98; ages 32–77) to initialize conservative comfort thresholds and a base ranking, then personalize online.

In a real-world user study, 7 out of 8 participants preferred PrioriTouch over a heuristic baseline, citing higher perceived safety and comfort while maintaining task performance.

Robots often rely on whole-arm contact for caregiving tasks such as bed-bathing and transferring. Humans exhibit contact preferences in terms of where and how much force to exert for comfortable interactions. But comfort isn’t one-size-fits-all: preferences vary across people, and for the same person, across different body parts. How can a robot adapt to these preferences on the fly?

Excited to share our work “PrioriTouch: Adapting to User Contact Preferences for Whole-Arm Physical Human-Robot Interaction” led by @rishabhmadan96! #CoRL2025

💡 Core idea

Treat user contact preferences as a ranking over control objectives. PrioriTouch learns this priority ordering online and executes it with Hierarchical Operational Space Control (H-OSC), so higher-priority contacts (the ones closer to causing discomfort) are protected while others yield.

🧠 Learning to rank, safely

We introduce LinUCB-Rank, a contextual bandit that updates the priority ordering from sparse user feedback (“I feel uncomfortable around my abdomen”).
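For readers unfamiliar with LinUCB, here is a minimal sketch of standard disjoint LinUCB (Li et al., 2010) repurposed to output an ordering: every arm gets an upper-confidence-bound score and the arms are sorted by it. This is an assumption-laden illustration, not LinUCB-Rank itself; the body-region arms, the 2-D context vector, and the reward convention (1.0 when feedback says a region should be protected, 0.0 otherwise) are hypothetical.

```python
import numpy as np

class LinUCBRanker:
    """Disjoint LinUCB (one linear model per arm), used here to rank arms
    by their upper-confidence-bound score instead of picking only the top one."""

    def __init__(self, arms, dim, alpha=1.0):
        self.arms = list(arms)
        self.alpha = alpha                            # exploration strength
        self.A = {a: np.eye(dim) for a in self.arms}  # ridge-regression Gram matrix per arm
        self.b = {a: np.zeros(dim) for a in self.arms}

    def score(self, arm, x):
        A_inv = np.linalg.inv(self.A[arm])
        theta = A_inv @ self.b[arm]                   # current reward-model estimate
        return float(theta @ x + self.alpha * np.sqrt(x @ A_inv @ x))

    def rank(self, x):
        """Priority ordering for context x, highest UCB score first."""
        return sorted(self.arms, key=lambda a: self.score(a, x), reverse=True)

    def update(self, arm, x, reward):
        self.A[arm] += np.outer(x, x)
        self.b[arm] += reward * x

ranker = LinUCBRanker(["abdomen", "forearm", "shoulder"], dim=2)
x = np.array([1.0, 0.5])                 # hypothetical context features
ranker.update("shoulder", x, reward=1.0)
ranker.update("abdomen", x, reward=0.0)
print(ranker.rank(x))  # ['shoulder', 'forearm', 'abdomen']
```

The untouched arm ("forearm") keeps a large exploration bonus, so it sits above the arm that received a zero reward; this is the usual optimism-under-uncertainty behavior of UCB methods.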

To keep people safe, risky exploration occurs first in a digital twin (simulation-in-the-loop), and the result is then deployed on the real robot.

📊 What does this buy us?

A sample-efficient way of reasoning about contact preferences. In sim and hardware: fewer force-threshold violations, fewer feedback signals to reach the right ordering, and sustained task efficiency.

@EmpriseLab @ToyotaResearch @Cornell_CS @corl_conf

🗣️ Spotlight: Sep 29 (Session 4)

📊 Poster: Sep 29 (Session 2)

🌐 Website: emprise.cs.cornell.edu/prioritouch

📝 Paper: arxiv.org/abs/2509.18447

(1/3) 🧵

(6/6) 🧵

Finally, we demonstrate the finetuned CLAMP model on a Franka Panda in 3 real-world tasks:

- Sorting recyclables vs. trash: robot separated unseen objects in a cluttered scene

- Retrieving metallic items from a cluttered bag: the visuo-haptic model achieved a 45% success rate where vision alone failed completely

- Sorting ripe vs. overripe bananas: haptic encoder distinguished visually similar foods by compliance

(5/6) 🧵

On this dataset, we train the CLAMP model, a material recognition model that fuses low-dimensional features from a pretrained haptic encoder with features from GPT-4o equipped with in-context examples of material recognition. We show that the CLAMP model:

- Outperformed GPT-4o and a VLM finetuned on physical object properties (PG-VLM, Gao et al., 2024), even on unseen objects.

- Transferred to haptic-only compliance recognition, with no finetuning.

- Generalized to data from three robot embodiments with varying robot and gripper kinematics; finetuned models outperform vision-only baselines with only 15% finetuning data.
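As a rough picture of what "fusing low-dimensional features" from two sources can look like, the sketch below uses simple late fusion: concatenate the two feature vectors and apply a linear layer with a softmax over material classes. The tweet does not specify CLAMP's actual fusion architecture, so concatenation plus a linear head is an assumption. The feature sizes, class count, and random untrained weights are placeholders, and the GPT-4o side is assumed to have already been reduced to a fixed-length vector. `fuse_and_classify` is a hypothetical name.

```python
import numpy as np

def softmax(z):
    z = z - np.max(z)  # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum()

def fuse_and_classify(haptic_feat, vlm_feat, W, b):
    """Late fusion: concatenate both feature vectors, then apply a linear
    layer + softmax over material classes."""
    fused = np.concatenate([haptic_feat, vlm_feat])
    return softmax(W @ fused + b)

rng = np.random.default_rng(0)
n_classes, d_haptic, d_vlm = 5, 8, 4          # hypothetical sizes
haptic_feat = rng.standard_normal(d_haptic)   # stand-in for haptic-encoder output
vlm_feat = rng.standard_normal(d_vlm)         # stand-in for GPT-4o-derived features
W = rng.standard_normal((n_classes, d_haptic + d_vlm))  # untrained weights
b = np.zeros(n_classes)

probs = fuse_and_classify(haptic_feat, vlm_feat, W, b)
print(probs.shape, round(float(probs.sum()), 6))  # (5,) 1.0
```

In practice `W` and `b` would be trained on labeled material data; keeping both inputs low-dimensional makes such a head cheap to finetune, which fits the small finetuning budget mentioned above.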

Introducing CLAMP: a device, dataset, and model that bring large-scale, in-the-wild multimodal haptics to real robots. Haptic/tactile data is more than just force or surface texture, and capturing this multimodal haptic information can be useful for robot manipulation.

Check out @pranavnnt’s work “CLAMP: Crowdsourcing a LArge-scale in-the-wild haptic dataset with an open-source device for Multimodal robot Perception”, at #CoRL2025.

The CLAMP device is an open-source, low-cost (<$200), portable (0.59 kg) tool that can sense 5 haptic modalities along with vision and language. Users can take it home and log haptic data via a PiTFT screen and buttons.

As far as we know, the CLAMP dataset is the largest multimodal haptic dataset in the robotics literature, with a total of 12.3 million data points from 5357 objects in 41 homes, collected by 16 CLAMP devices.

The CLAMP model is a material recognition model that outperformed GPT-4o, CLIP, and PG-VLM in our experiments, and generalized to haptic data from three different robot embodiments (WidowX and Franka with different grippers).

A finetuned CLAMP model enabled a 7-DoF Franka Panda to robustly perform three real-world manipulation tasks involving clutter, occlusion, and visual ambiguity.

@EmpriseLab @Cornell_CS @corl_conf

🗣️ Spotlight presentation at #CoRL2025 on Sep 30 (spotlight session 5)

📊 Poster session at #CoRL2025 on Sep 30 (poster session 3)

🌐 Website: emprise.cs.cornell.edu/clamp/

📄 Paper: arxiv.org/pdf/2505.21495

Check this thread for more details (1/6) 🧵
