Gallil Maimon

@GallilMaimon

Research Scientist intern @ Meta (FAIR); PhD student @CseHuji; Speech Language Modelling

I am excited to open-source PipelineRL - a scalable async RL implementation with in-flight weight updates. Why wait until your bored GPUs finish all sequences? Just update the weights and continue inference! Code: github.com/ServiceNow/Pip… Blog: huggingface.co/blog/ServiceNo…

🚨New paper alert🚨 🧠 Instruction-tuned LLMs show amplified cognitive biases — but are these new behaviors, or pretraining ghosts resurfacing? Excited to share our new paper, accepted to CoLM 2025🎉! See thread below 👇 #BiasInAI #LLMs #MachineLearning #NLProc

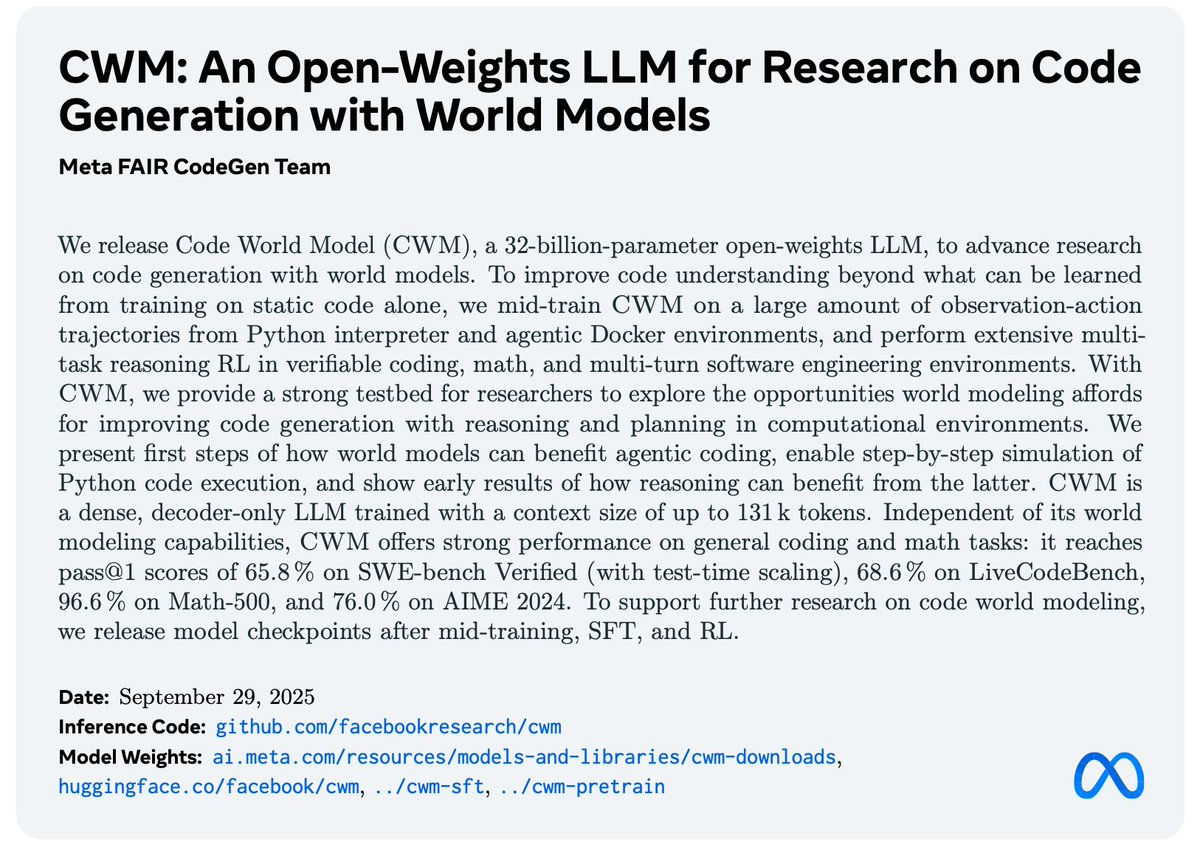

(🧵) Today, we release Meta Code World Model (CWM), a 32-billion-parameter dense LLM that enables novel research on improving code generation through agentic reasoning and planning with world models. ai.meta.com/research/publi…
