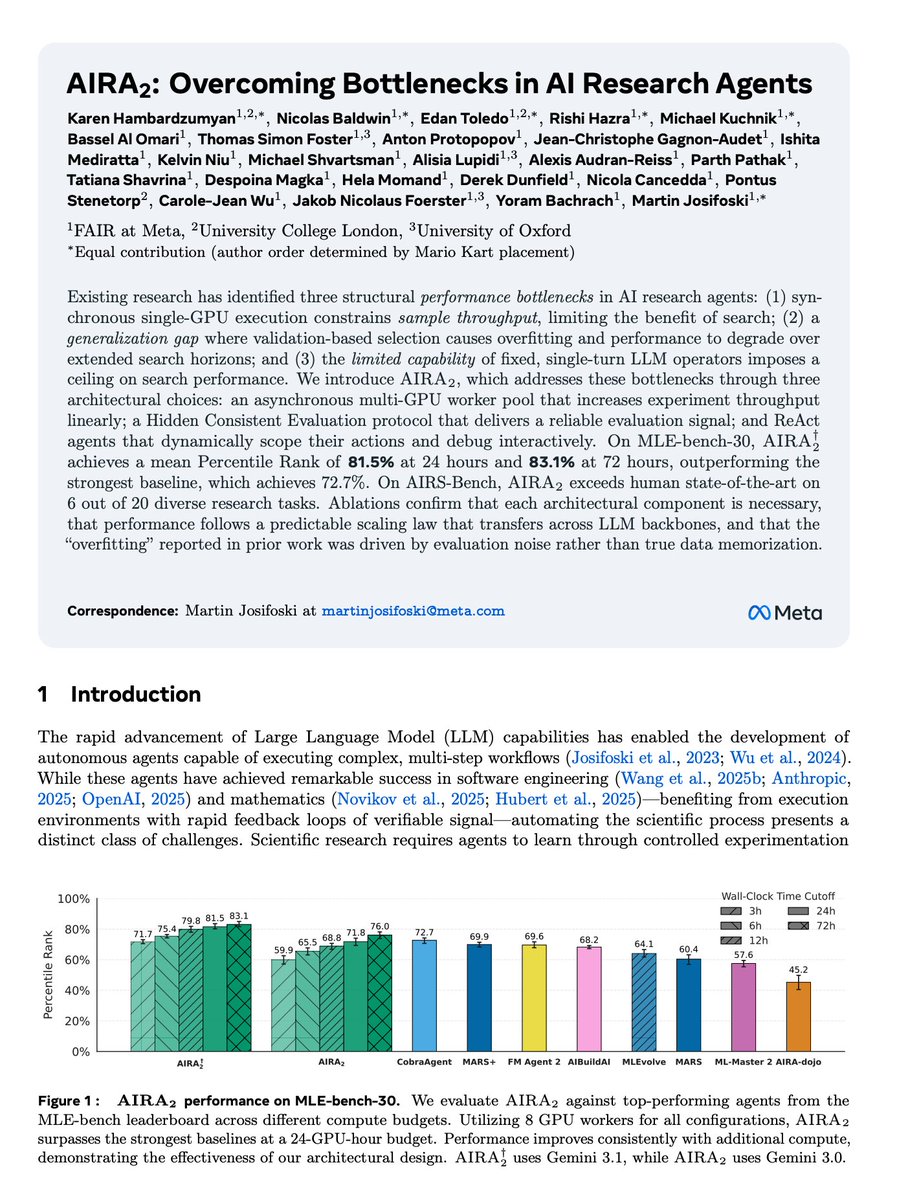

AI at Meta

@AIatMeta

Together with the AI community, we are pushing the boundaries of what’s possible through open science to create a more connected world.

Meta Platforms Inc. has acquired Assured Robot Intelligence, a startup developing artificial intelligence models for robots, as part of a major initiative to build humanoid technology. bloomberg.com/news/articles/…

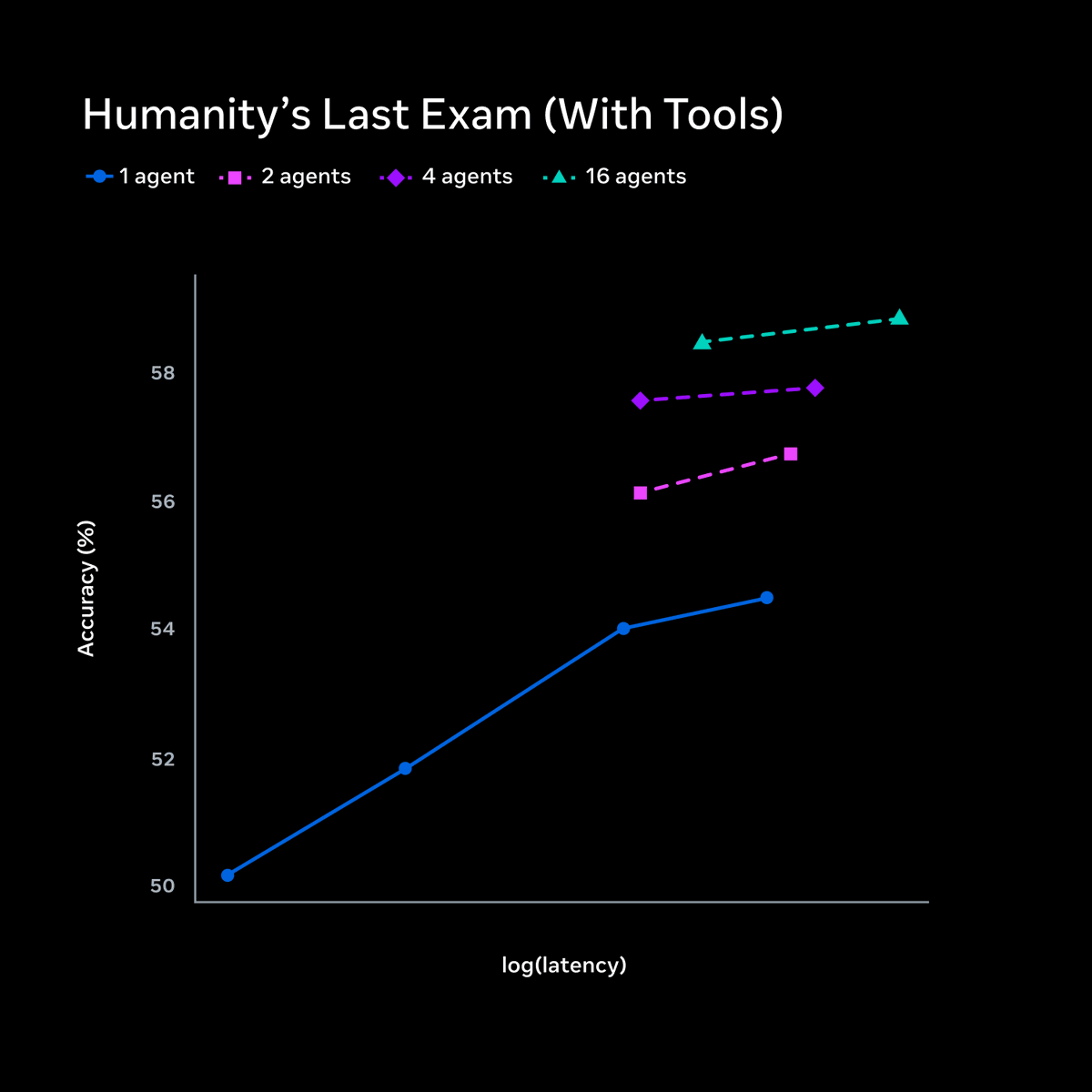

OK, this is actually pretty impressive: I truly haven't seen any model do this before, or at least not to this extent. When I asked Muse Spark from Meta to convert this image into code, it cut the assets out of the screens so it could use them correctly!