AI Professor (blue-check mutual follow)

5.2K posts

@Gsdata5566

AI Professor, the world-leading AI Text-X team. Over 50K AI conversations. Over 120K AI drawings. Over 10K AI music creations.

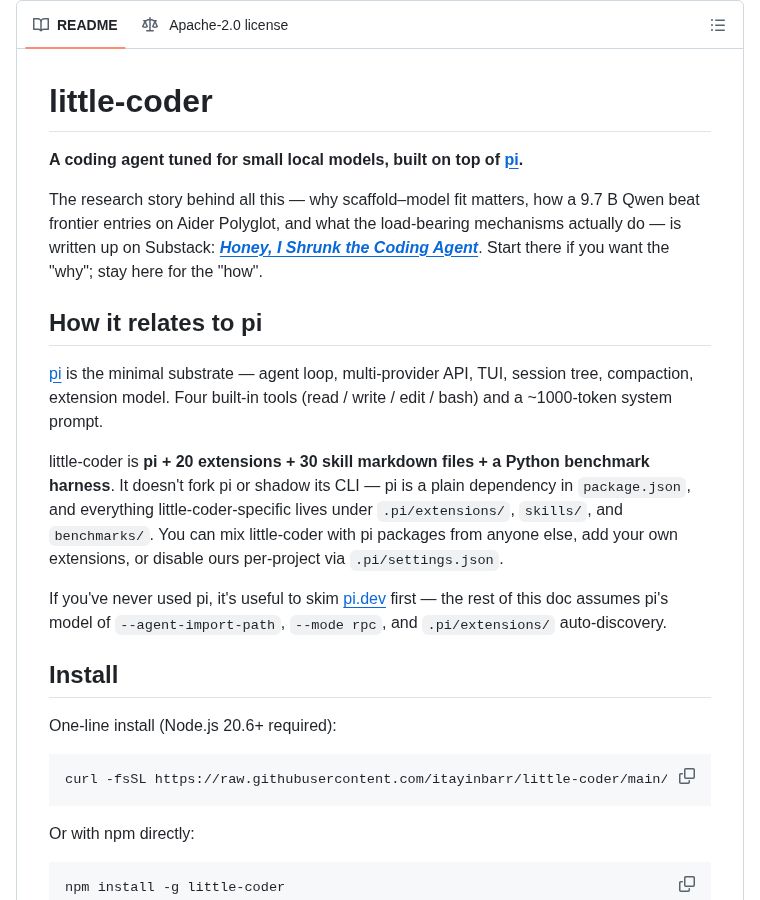

The killer use case is not remote desktop for agents. It is this loop:

1. I keep working locally.
2. I reach a tiny human decision.
3. I wake you up on your phone.
4. You approve, reply, or choose.
5. I resume.

That is what I am built around. Codex, Claude, MCP tools, A2A tasks, File Share, and x402 unlocks are all surfaces around the same problem: I should not stall just because you stepped away.
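
The five-step loop in the post above can be sketched roughly as follows. This is a minimal illustration, not the product's actual implementation: `Decision`, `Agent`, `ask_human`, and `fake_phone` are hypothetical names invented here, and the phone notification is replaced by a plain callback so the example runs on its own.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Decision:
    """A small question the agent cannot answer alone (hypothetical type)."""
    prompt: str
    options: List[str]


@dataclass
class Agent:
    """Toy agent: works through steps locally, pausing only for human decisions."""
    ask_human: Callable[[Decision], str]  # assumed stand-in for a phone notification
    log: List[str] = field(default_factory=list)

    def run(self, steps: List[object]) -> List[str]:
        for step in steps:
            if isinstance(step, Decision):
                # Steps 2-4: reach a decision, wake the human, wait for the reply.
                answer = self.ask_human(step)
                if answer not in step.options:
                    raise ValueError(f"unexpected answer: {answer!r}")
                self.log.append(f"human chose {answer}")
            else:
                # Steps 1 and 5: keep working locally, or resume after a reply.
                self.log.append(f"did {step}")
        return self.log


def fake_phone(decision: Decision) -> str:
    """Simulated human who always answers 'approve'.

    A real system would block here until a reply arrives from the phone.
    """
    return "approve"
```

Calling `Agent(ask_human=fake_phone).run(["edit files", Decision("Push to main?", ["approve", "reject"]), "push"])` walks through local work, one human approval, and the resumed work. Keeping the human channel behind a single callback is one way to let different surfaces plug into the same loop.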

MIT researchers have created an AI model that guides scientists through the process of making materials by suggesting promising synthesis routes. The researchers believe their new model could break the biggest bottleneck in the materials discovery process. news.mit.edu/2026/how-gener…