Roopesh K

415 posts

@roopeshk30

ML • MATH • AI • SYSTEMS

I don't know what they are doing over there, but Codex will continue to be available both in the FREE and PLUS ($20) plans. We have the compute and efficient models to support it. For important changes, we will engage with the community well ahead of making them. Transparency and trust are two principles we will not break, even if it means momentarily earning less. A reminder that you vote with your subscription for the values you want to see in this world.

Happy Tuesday. Codex has hit 4M active users, adding over 1M users in less than two weeks. To celebrate we will reset the rate limits again in a few hours. Enjoy!

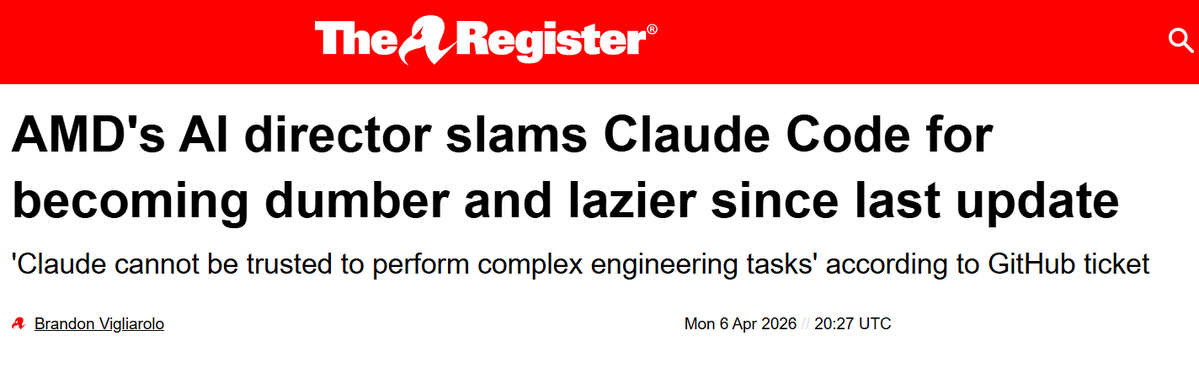

AMD Senior AI Director confirms Claude has been nerfed. She analyzed Claude's session logs from January to March: > median thinking dropped from ~2,200 to ~600 chars > API requests went up 80x from Feb to Mar. less thinking and failed attempts meaning more retries, burning more tokens, and spending more on tokens > reads-per-edit dropped from 6.6x → 2.0x. model stops researching code before touching it. > model tried to bail out or ask "should i continue" 173 times in 17 days (0 times before March 8). > self-contradiction in reasoning ("oh wait, actually...") tripled. > conventions like CLAUDE.md get ignored because there's less thinking budget to cross-check edits > 5pm and 7pm PST are the worst hours, late night is significantly better. this means the thinking allocation is most likely GPU-load-sensitive.

Universal high income will probably be the biggest lie in human history.

You all need to stop nerfing existing models just to make minor improvements in your new releases feel massive
