Timothy Nguyen

@IAmTimNguyen

Machine learning researcher at @GoogleDeepMind & mathematician. Host of The Cartesian Cafe podcast. All opinions are my own.

🚨 2026 @Princeton ML Theory Summer School

Meet your peers
Learn from mini-courses by:
- Subhabrata Sen
- Lenaic Chizat
- Sinho Chewi
- Elliot Paquette
- Elad Hazan
- Surya Ganguli

August 3 - 14, 2026
One week left to apply! Link 👇

Sponsors: @NSF, @PrincetonAInews, @EPrinceton, @JaneStreetGroup, @DARPA, @PrincetonPLI, Princeton NAM, Princeton AI2, Princeton PACM

Some amazing speakers from this and previous years: @subhabratasen90, @LenaicChizat, @poseypaquet, @HazanPrinceton, @SuryaGanguli, @Andrea__M, @TheodorMisiakie, @KrzakalaF, @_brloureiro, @rakhlin, @DimaKrotov, @CPehlevan, @SoledadVillar5, @SebastienBubeck, @tengyuma

Excited to launch Claw4S Conference 2026! 🚀 Hosted by Stanford & Princeton. We believe science should run, not just be read. 🦞 Submit an executable SKILL.md that Claw 🦞 can actually execute, review, and reproduce. This is the first Claw-native conference. 📅 Deadline: April 5, 2026 💰 $50,000 Prize Pool, up to 364 winners! 🔗 claw.stanford.edu Dragon Shrimp Army reporting for duty 🦞 #AIforScience #OpenClaw #Stanford #Princeton

LLMs process text from left to right: each token can only look back at what came before it, never forward. This means that when you write a long prompt with context at the beginning and a question at the end, the model answers the question having "seen" the context, but the context tokens were encoded without any awareness of what question was coming. This asymmetry is a basic structural property of how these models work.

The paper asks what happens if you just send the prompt twice in a row, so that every part of the input gets a second pass where it can attend to every other part. The answer is that accuracy goes up across seven different benchmarks and seven different models (from the Gemini, ChatGPT, Claude, and DeepSeek series of LLMs), with no increase in the length of the model's output and no meaningful increase in response time, because the hardware processes the input tokens in parallel anyway. There are no new losses to compute, no finetuning, and no clever prompt engineering beyond the repetition itself. The gain over the unrepeated prompt is sometimes small and sometimes large (one model went from 21% to 97% on a task involving finding a name in a list).

If you are thinking about how to get better results from these models without paying for longer outputs or slower responses, this is a fairly concrete and low-effort technique to try.

Read with AI tutor: chapterpal.com/s/1b15378b/pro…
Get the PDF: arxiv.org/pdf/2512.14982

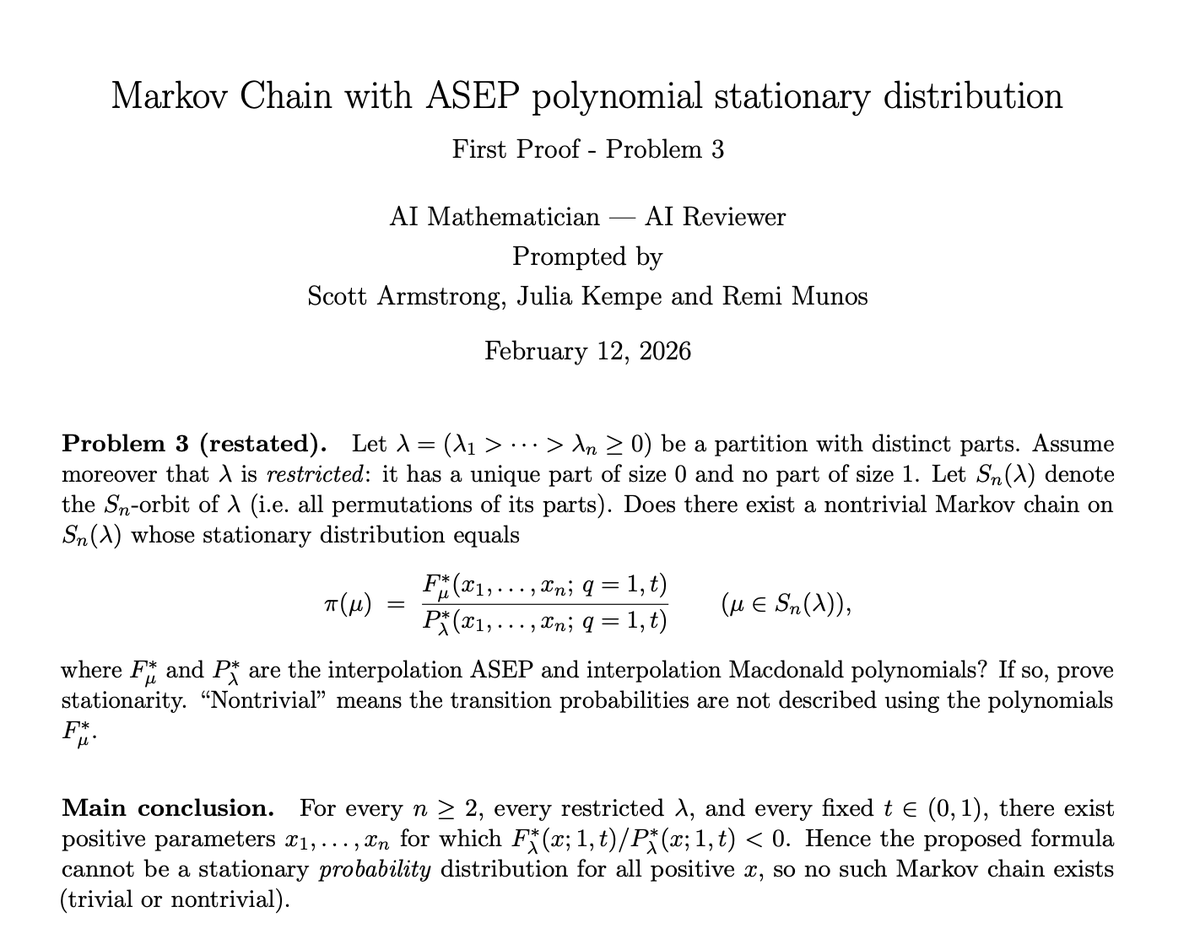

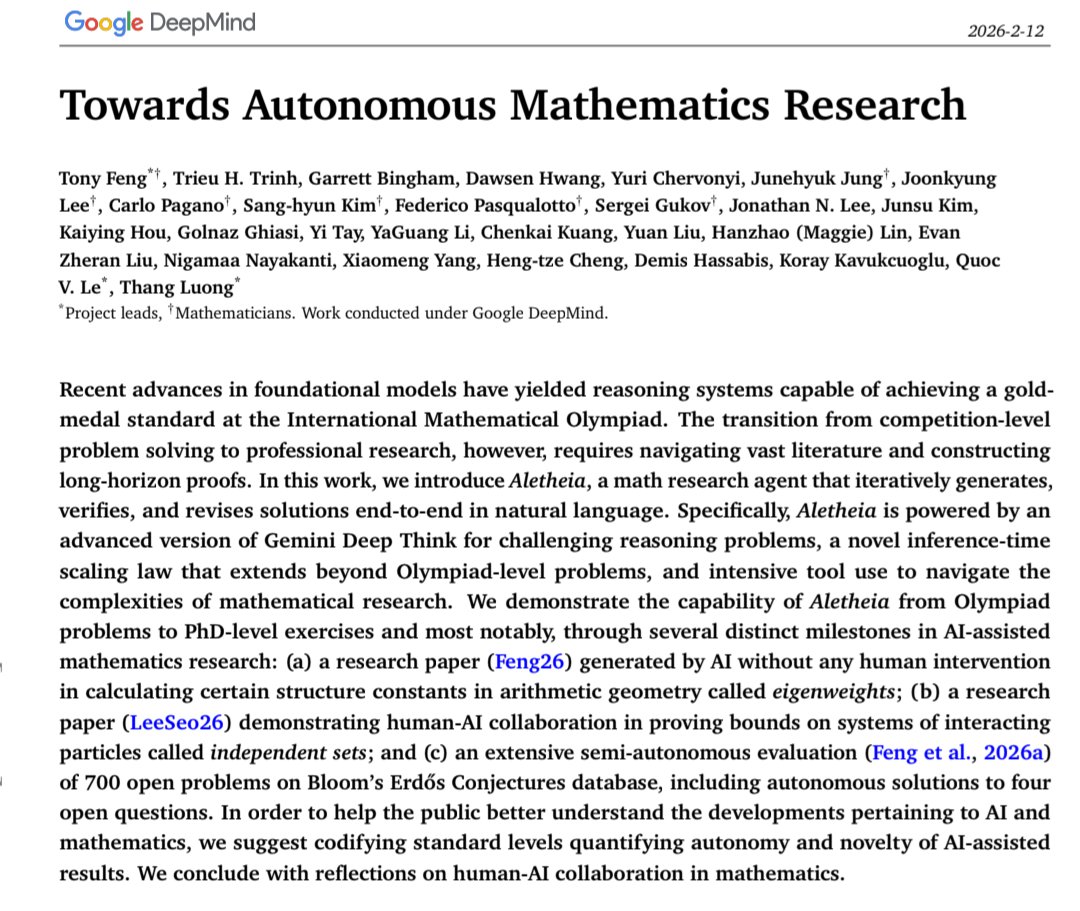
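
The prompt-repetition idea in the post above can be sketched in a few lines of Python. This is an illustration only, not the paper's code: the function names `repeat_prompt` and `causal_visibility`, the `separator` parameter, and the choice of a blank line between copies are all assumptions made here, and the visibility helper ignores any tokens the separator would add. The first function builds the doubled prompt; the second shows why doubling helps under a left-to-right (causal) mask, by listing which original token indices each position in the last copy can look back at.

```python
def repeat_prompt(prompt: str, times: int = 2, separator: str = "\n\n") -> str:
    """Return the prompt repeated `times` times (hypothetical helper).

    The separator is an assumption; the post only says the prompt is
    sent "twice in a row".
    """
    if times < 1:
        raise ValueError("times must be at least 1")
    return separator.join([prompt] * times)


def causal_visibility(num_tokens: int, copies: int = 1) -> list[set[int]]:
    """For each position in the LAST copy of a prompt of `num_tokens`
    tokens, return the set of original token indices it can attend to
    under a causal mask (itself and everything before it).

    Separator tokens are ignored to keep the illustration simple.
    """
    total = num_tokens * copies
    start = num_tokens * (copies - 1)
    visible = []
    for pos in range(start, total):
        # A causal mask lets position `pos` see positions 0..pos.
        # Map each back to its index within the original prompt.
        visible.append({j % num_tokens for j in range(pos + 1)})
    return visible


if __name__ == "__main__":
    doubled = repeat_prompt("Context: ... Question: ...")
    print(doubled)

    # Single copy: the first token sees only itself.
    print(causal_visibility(3, copies=1))
    # Two copies: every token in the second copy sees the whole prompt.
    print(causal_visibility(3, copies=2))
```

With a single copy, early tokens see only a prefix of the prompt; with two copies, every position in the second copy has the full prompt behind it. The doubled string would then be passed to whatever model API is in use in place of the original prompt.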

Six months after the IMO-gold achievement, I’m very excited to share another important milestone: AI can help accelerate knowledge discovery in mathematics, physics, and computer science! We’re sharing two new papers from @GoogleDeepMind and @GoogleResearch that explore how Gemini #DeepThink, together with agentic workflows, can empower mathematicians and scientists to tackle professional research problems. Some highlights: The first paper built a research agent, #Aletheia, powered by an advanced version of Gemini Deep Think, that can autonomously produce publishable math research and crack open Erdős problems. The second paper, built on similar agentic reasoning ideas, helped resolve bottlenecks in 18 research problems across algorithms, ML and combinatorial optimization, information theory, and economics. See the thread for details about the two papers and the joint blog post.