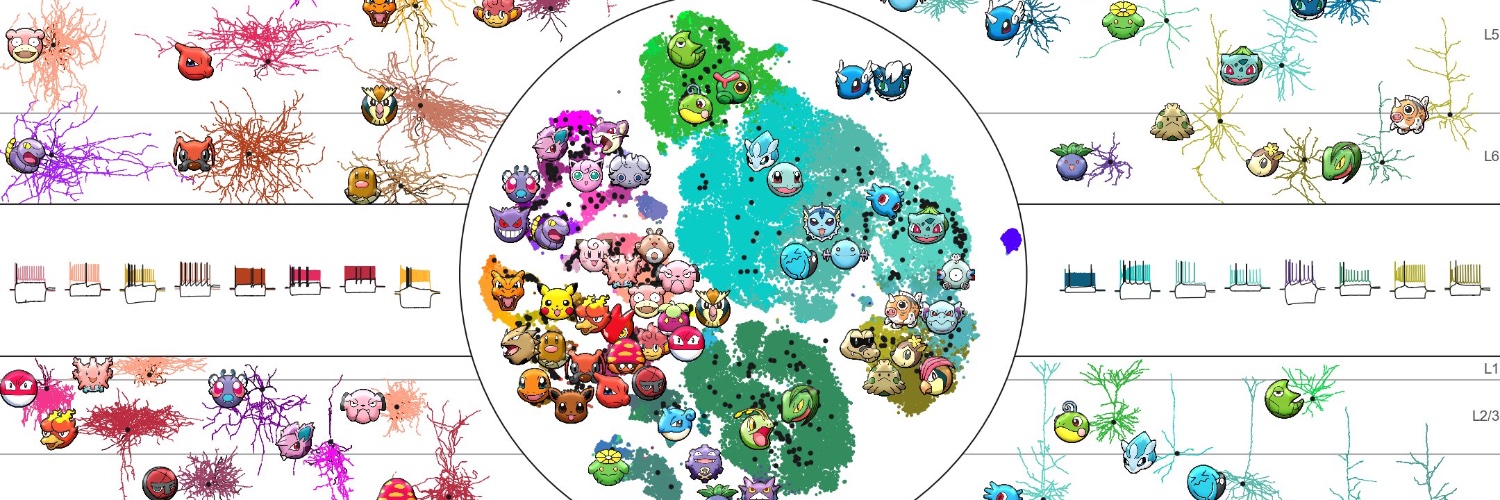

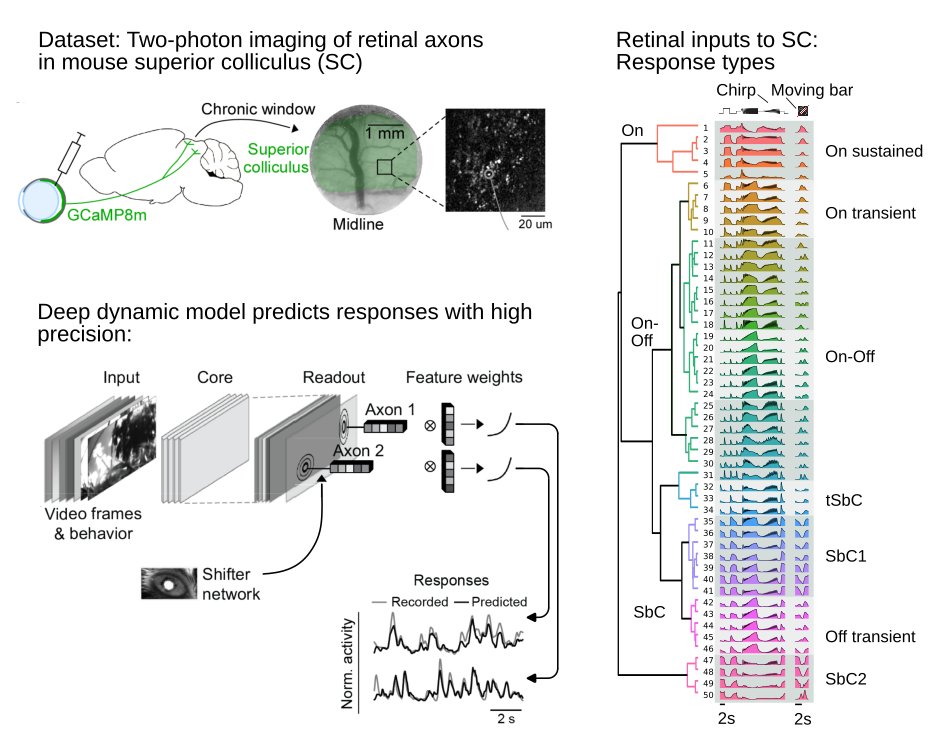

Excited to co-organize with @RisaWechsler this @StanfordHAI and @StanfordData conference on AI+Science: Accelerating Scientific Discovery on Tuesday, May 5th.

Anyone can register for the livestream: hai.stanford.edu/events/ai-scie…

We have world-leading speakers talking about:

- AI for Life: molecular biology to brains
- AI for Earth: weather, climate, geophysics and oceans
- AI for Universe: particle physics, cosmology & math

Additionally, we will have @dariogila give a keynote about America's Genesis Mission to accelerate AI for Science.

And interestingly, we will have a panel discussion on the nature and role of human understanding in the future of AI for Science, informed by scientists, AI researchers, and sociologists of science and AI.

Excited to develop a highly interdisciplinary and global view of all the opportunities and challenges of accelerating science with AI.