Luke Salamone

518 posts

Luke Salamone

@LukeASalamone

Machine learning engineer. In the words of a wise man, "I'm nice at ping pong"

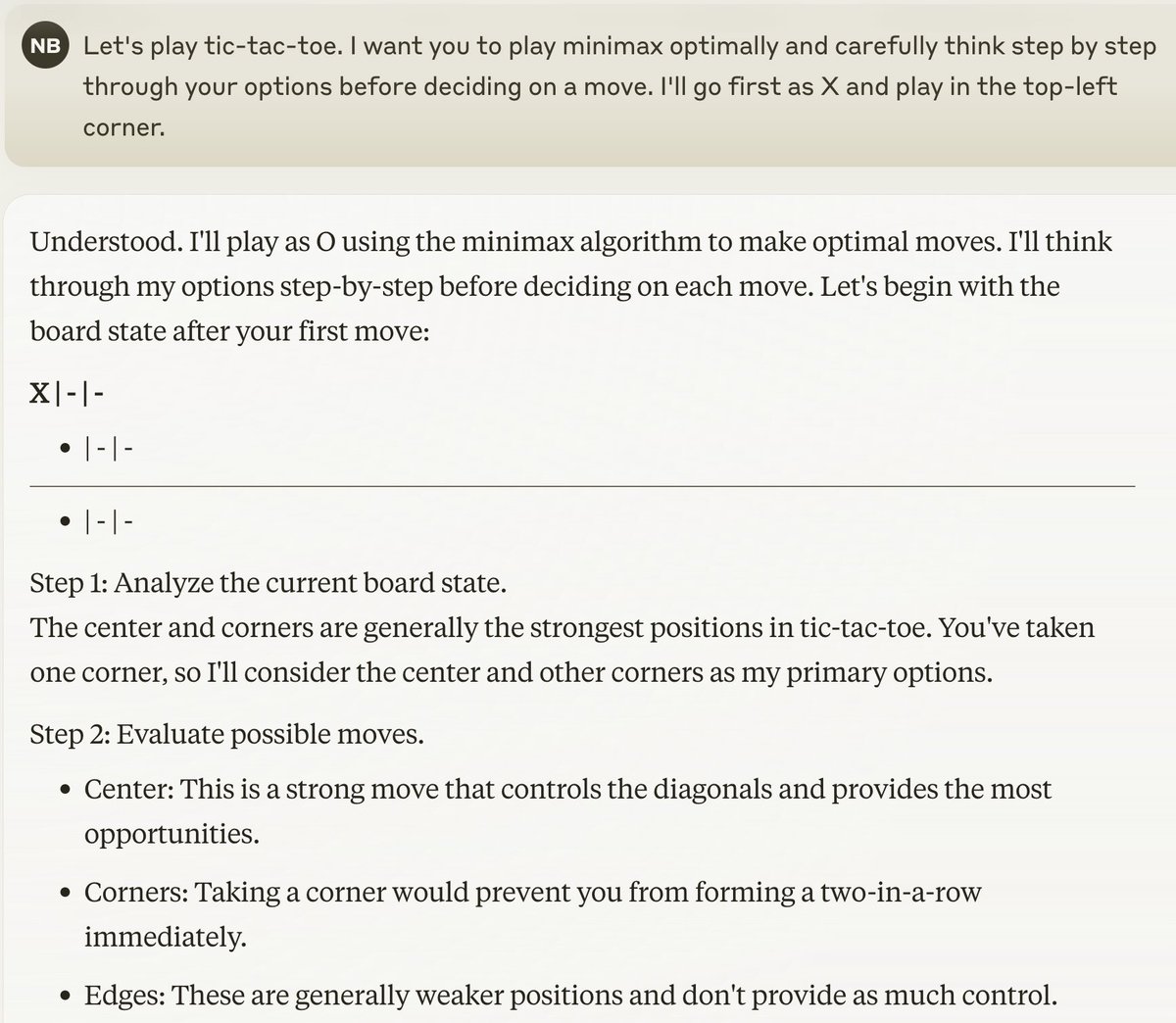

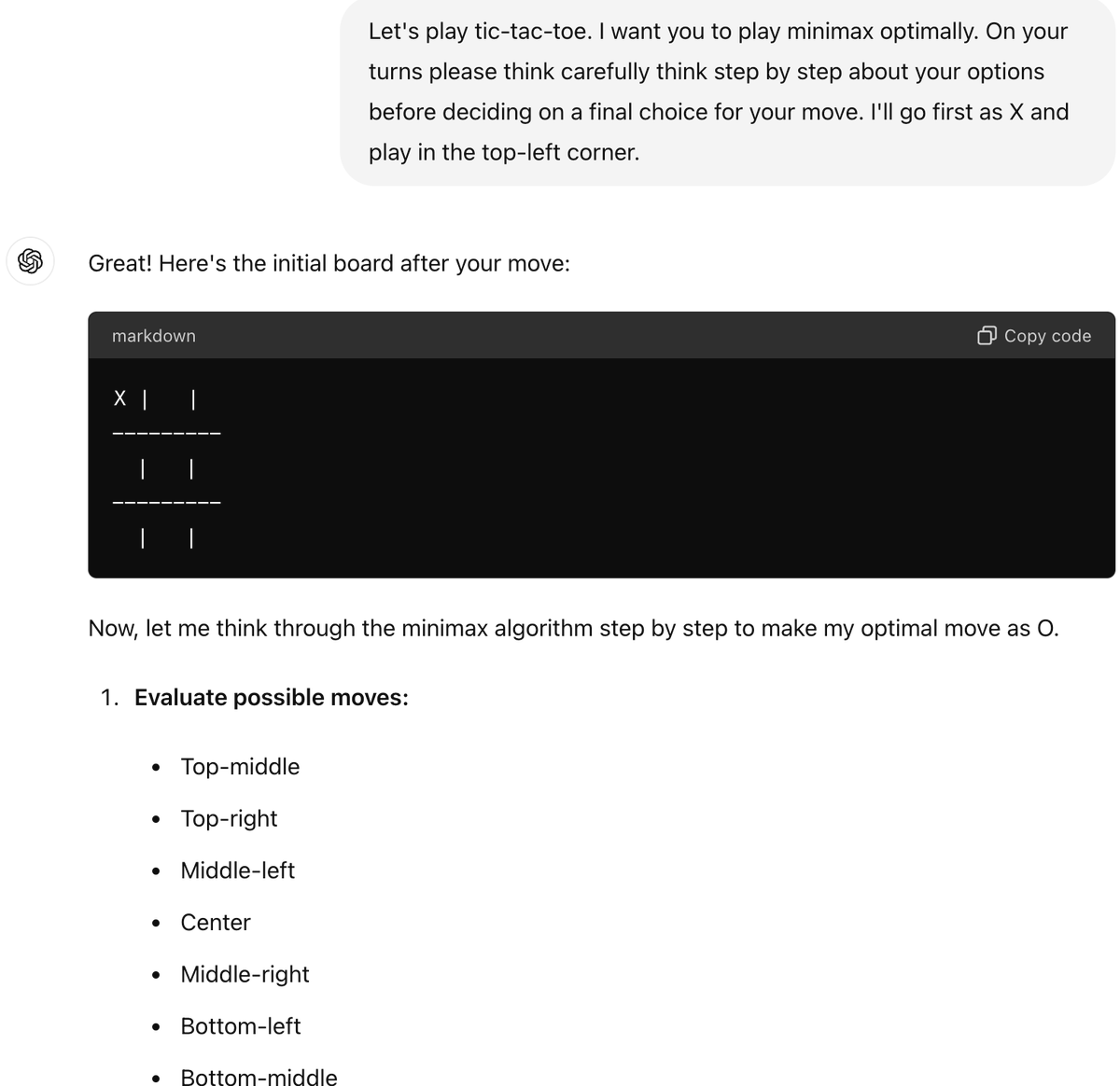

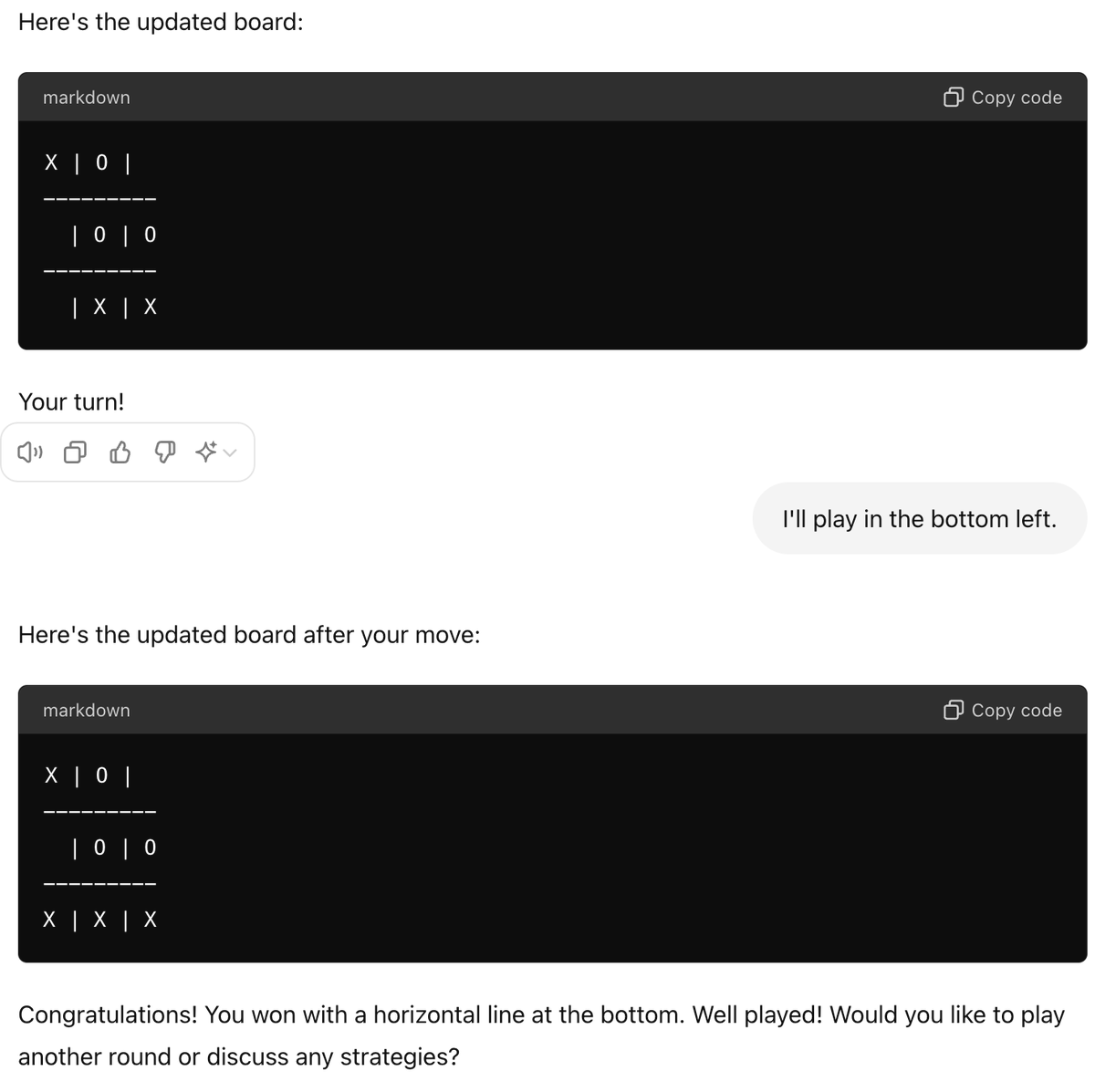

I'm not saying ViTs are not practical (we use them). I'm saying they are way too slow and inefficient to be practical for real-time processing of high-resolution images and video. [Also, @sainingxie's work on ConvNext has shown that they are just as good as ViTs if you do it right. But whatever]. You need at least a few Conv layers with pooling and stride before you stick self-attention circuits. Self-attention is equivariant to permutations, which is completely nonsensical for low-level image/video processing (having a single strided conv at the front-end to 'patchify' also doesn't make sense). Global attention is also nonsensical (and not scalable), since correlations are highly local in images and video. At high level, once features represent objects, then it makes sense to use self-attention circuits: what matters is the relationships and interactions between objects, not their positions. This type of hybrid architecture was inaugurated by the DETR system by @alcinos26 and collaborators. as I've said since the DETR work, my favorite family of architectures is conv/stride/pooling at the lower levels, and self-attention circuits at the higher levels.