
Happy Sunday fam!

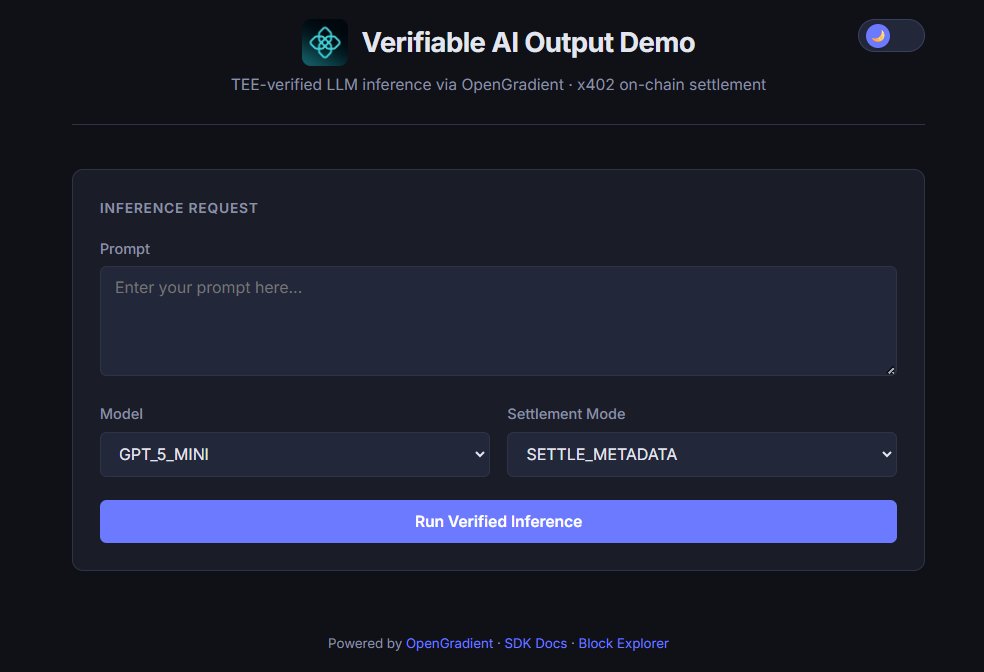

Using the @OpenGradient SDK stack, I built a small demo showing how an AI API call can become verifiable.

Every LLM prompt runs inside a Trusted Execution Environment, and each inference produces a payment receipt through x402.

Instead of simply trusting the output, the execution can be traced and verified.

Demo: Opengradient-vaod.xyz

With this demo, you get:

• The AI response

• Proof that the computation actually ran

• Execution metadata

• A payment receipt via x402

You can ask any question, including ones about OpenGradient.

I'd suggest testing it with Claude Opus 4.6, but you can try other models.

Disclaimer: This is not a paid tweet, just complying with X rules.
