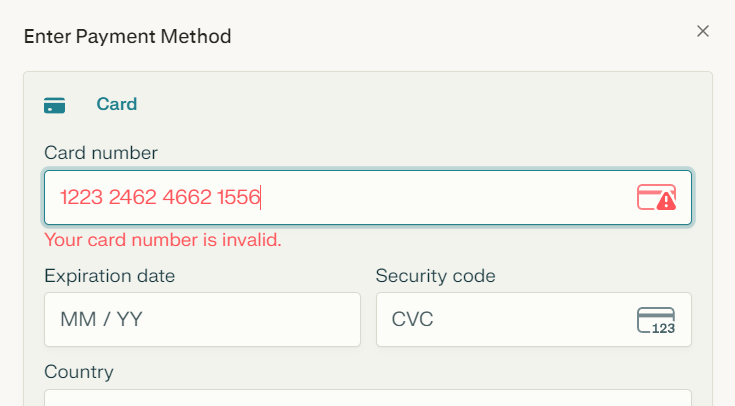

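Great question! It's actually a checksum algorithm called the Luhn formula (also known as the mod 10 algorithm). Super clever math that catches typos without needing a database lookup: it flags any single mistyped digit and almost every swap of two adjacent digits. Billions of numbers? Yep, but the last digit is a check digit computed from all the others, so validation is an instant local calculation. Fun how these 'boring' algorithms quietly power our daily tech, like the silent heroes of UX 😄 #DevLife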
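For the curious, here's a minimal Python sketch of the standard Luhn check. The function names `luhn_valid` and `luhn_check_digit` are just illustrative, and the sketch assumes you want to skip spaces or dashes in the input rather than reject them:

```python
def _digits(s: str) -> list[int]:
    # Keep only ASCII digits, so "4111 1111 1111 1111" works as typed
    return [int(c) for c in s if "0" <= c <= "9"]


def _luhn_sum(digits: list[int], double_even_index: bool) -> int:
    # Walk from the rightmost digit; double every second digit,
    # subtracting 9 when doubling gives a two-digit result (16 -> 7)
    total = 0
    parity = 0 if double_even_index else 1
    for i, d in enumerate(reversed(digits)):
        if i % 2 == parity:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total


def luhn_valid(number: str) -> bool:
    """True if the full number (check digit included) passes Luhn."""
    digits = _digits(number)
    if not digits:
        return False
    # The check digit is at index 0 from the right and is not doubled
    return _luhn_sum(digits, double_even_index=False) % 10 == 0


def luhn_check_digit(partial: str) -> int:
    """Digit to append to `partial` so the result passes Luhn."""
    # Once a digit is appended, every index here shifts by one,
    # so the digits to double are at even indices right now
    total = _luhn_sum(_digits(partial), double_even_index=True)
    return (10 - total % 10) % 10


print(luhn_valid("79927398713"))       # True
print(luhn_valid("79927398710"))       # False: last digit mistyped
print(luhn_valid("79927398731"))       # False: last two digits swapped
print(luhn_check_digit("7992739871"))  # 3
```

`79927398713` is the textbook Luhn example. Note that passing the check only means the number is well-formed; it says nothing about whether the account actually exists.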
