Gina Pieters, PhD

@ProfPieters

I was an academic economist for over 10 yrs (UMinn, TrinityU, UChicago) now I'm a researcher specializing in macro-impact of currency+asset digitization.

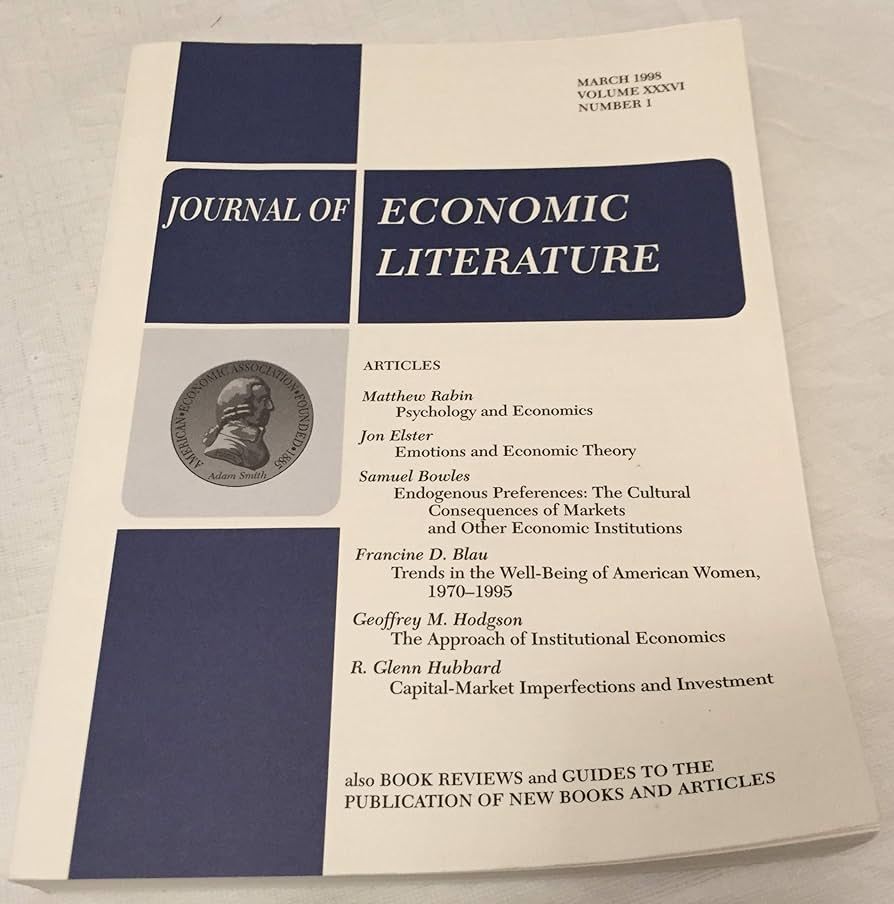

A study of around 44,000 papers finds that the credibility revolution has spread unevenly beyond applied micro, driven mainly by difference-in-differences, with finance and macro lagging by roughly 15 years, from @paulgp nber.org/papers/w35051

A point that is sometimes overlooked is that PDEs in physics and economics have a subtle but important difference. When a physicist solves the Schrödinger equation (see my slide below), the potential is given. The coefficients of the equation are part of the problem statement. You pick your grid, refine your mesh, and the equation never changes on you. Better numerics give a better approximation to a fixed target.

In economics, this is not the case. Look at the Hamilton-Jacobi-Bellman equation for the neoclassical growth model (also slide below). The drift of capital depends on a derivative of the value function, the very object you are trying to solve for. The “coefficients” of the PDE are endogenous to the optimal choices of the agents. This is what @UncertainLars and Sargent referred to as the cross-equation restrictions implied by optimizing behavior.

This is what @MahdiKahou and I call the “equilibrium loop”: improving your approximation changes the policy, which changes the dynamics, which changes where in the state space the economy spends its time, which changes where your approximation needs to be accurate. You are not chasing a fixed target with a better net. Moving the net moves the target.

This has serious consequences for computation. You cannot just borrow neural network architectures from deep learning in the natural sciences. The loss function comes from equilibrium conditions, not from labeled data. The evaluation points are not given. Instead, they are regenerated each epoch from the current approximation. Ignoring this loop is why you often get solutions that look good on a training set but fall apart in simulation.
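
One link of the loop described above, "changing the policy changes where in the state space the economy spends its time", can be sketched in a few lines. This is only a toy illustration under assumptions that are not in the post: a discrete-time analogue of the growth model rather than the continuous-time HJB equation, Cobb-Douglas output with full depreciation, a constant saving-rate rule standing in for the approximated policy, and made-up values for `ALPHA`, the shock size, and the two saving rates. The function name `ergodic_sample` is hypothetical.

```python
import math
import random

ALPHA = 0.3  # capital share; illustrative value, not taken from the post


def ergodic_sample(saving_rate, periods=1000, burn_in=100, shock_sd=0.05, seed=0):
    """Simulate k' = s * z * k**ALPHA under a fixed saving-rate policy s.

    Returns the capital levels visited after a burn-in, i.e. the points
    at which an equilibrium-residual loss would have to be evaluated.
    """
    rng = random.Random(seed)
    k = 1.0
    path = []
    for t in range(burn_in + periods):
        z = math.exp(rng.gauss(0.0, shock_sd))  # lognormal productivity shock
        k = saving_rate * z * k ** ALPHA        # the policy drives the dynamics
        if t >= burn_in:
            path.append(k)
    return path


# Two candidate policies, e.g. before and after an update of the approximation.
points_old = ergodic_sample(0.20)
points_new = ergodic_sample(0.30)

print("old policy visits k in", (min(points_old), max(points_old)))
print("new policy visits k in", (min(points_new), max(points_new)))
```

In this toy setup the two policies visit non-overlapping ranges of capital, so residuals checked only on the points generated by the old policy say nothing about accuracy where the new policy actually takes the economy. That is the reason for regenerating the evaluation points from the current approximation rather than fixing them once.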

@pelvis_man Go back to the Mad Men example. Peggy gets promoted to junior copywriter (and presumably is paid at the same level). But importantly, Peggy is *better* than the other junior copywriters: she is underpaid relative to work quality because of gender-based discrimination.

The Ivy League is evenly split between three kinds of students: trust fund babies, DEI admits, and those who should actually be there.

It's not Steam's fault they have a monopoly; their competitors just keep shooting themselves in the face while Steam does nothing and wins.

My feed is showing me a bunch of folks who tapped out their whole usage limits on Mon/Tue. Is this your experience? Please comment, I want to understand how widespread this is