Rob retweeted

Rob

2.8K posts

@Legendaryy Compute costs drop to 1/5 with Vera Rubin, and models keep getting more efficient. I don’t think we will ever pay more for a model of that quality. More likely, we’ll even be able to run it on a Mac mini within a year.

English

Your Claude subscription is massively subsidized and it won't last forever.

A $20/mo Pro plan burns through ~$180/mo in API-equivalent tokens. Heavy Max users hit $5K/mo on a $200 plan.

Actual compute cost is roughly 10% of API pricing. Venture capital covers the rest.

Anthropic just 2x'd Claude Code limits for "Spring Break" during off hours. Enjoy it while it's here.

At some point the math has to math.

Clip from the @modernmarket_

Rob retweeted

@BenjaminDEKR He just needs to connect Grok to the internal comms, then write an agent that identifies those inefficiencies and reports them daily, then feed those findings back to leadership until they’re resolved.

@fishlake2022 @ragipsoylu it's always the same AI prompt that somehow got the attention of the X algorithm.

I have been reading his posts since the start of this ongoing war, and at first they did sound insightful. But recently I’ve noticed repeated content across all his posts and a recognizable pattern in their format: not organic, more like generated content. Just today I removed his account from my X list of accounts related to this war. Some may not like his tone, his presentation style, or his lengthy posts.

But still, his posts are helping us understand the current events. It is not about being viral and engagement.

Posts by so-called experts, with credentials and peer-reviewed analysis, suit "intellectuals", not common hoomans like me who come on X to consume news and analysis on current events.

JUST IN: The “geopolitical analyst” mapping the collapse of world order might actually be mapping dog crates onto cargo planes.

Meet Shanaka Anslem Perera.

On X, he writes threads about the Iran war, the rise of China, nuclear deterrence, rare earth supply chains, and the collapse of the global financial system for an audience of more than 200,000 followers. The bio says: “Independent analyst. Money, geopolitics, AI, sovereignty.”

The implied credentials: Strategist. Security thinker. Civilizational cartographer.

The actual origin story?

A pet shipping company in Sri Lanka.

In 2010, Perera says he encountered a British traveler in Colombo who couldn’t move his dog back to the UK. That moment led him to found an international pet relocation business, handling paperwork, veterinary clearances, and airline transport for animals.

Door-to-door logistics.

Customs forms.

Kennels.

Cargo holds.

Today the same figure publishes sweeping analyses of Middle East alliances, the Iran war escalation ladder, US defense supply chains, and monetary systems — while also presenting himself online as CEO of a global pet relocation firm.

No academic background in international relations.

No military service.

No government intelligence experience publicly documented.

No experience in diplomacy other than resolving dog fights.

Yet threads predicting systemic war, Iranian retaliation doctrine, and global financial collapse circulate across X with the cadence of intelligence briefings.

This is the strange new architecture of the information age.

Once, national security analysis came from war colleges, think tanks, or former officials.

Now it can come from anyone with a thread and a compelling tone.

The institutions that filtered expertise are weakening.

Algorithms replace peer review.

Audience replaces credentials.

A pet relocation entrepreneur can become a geopolitical oracle overnight.

And the internet doesn’t ask for diplomas.

Only engagement.

In the age of collapsing gatekeepers, the most powerful strategic doctrine may not be deterrence or containment.

It’s virality.

And somewhere between a thread about Iran’s escalation ladder and a prediction of civilizational collapse…

another golden retriever is being cleared through customs.

@Yuchenj_UW It’s not unlikely that a single person builds AGI, given that agents do all the work and that person doesn’t get stopped by their org at every decision. Openclaw is just the first in a long line.

@KatanaLarp The thing is, once you just tell the LLM to focus on a number of optimizations, it immediately produces fast code. All my new pages have a 100/100 speed score and feel instant.

@pmarca @DavidSacks Which is the same as saying they want AI to control the government, given that most AI teams now use AI to manage AI.

@rohanpaul_ai DeepSeek open-sourcing their tricks made everyone better and unlocked a lot of competition. That can't be said for a closed-source model that is simply a few percent better.

Anthropic CEO Dario Amodei on Open-Source AI Models.

"I don't think open source works the same way in AI that it has worked in other areas. Primarily because with open source you can see the source code of the model. Here we can't see inside the model, it's often called open weights instead of open source to kind of distinguish that. But a lot of the benefits, which is that many people can work on it and that it's kind of additive, don't quite work in the same way.

So I've actually always seen it as a red herring. When I see a new model come out I don't care whether it's open source or not. If we talk about DeepSeek I don't think it mattered that DeepSeek is open source. I think I ask, is it a good model? Is it better than us at the things that matter? That's the only thing that I care about.

It actually doesn't matter either way. Because ultimately you have to host it on the cloud. The people who host it on the cloud do inference. These are big models, they're hard to do inference on.

When I think about competition I think about which models are good at the tasks that we do. I think open source is actually a red herring.

It's not free. You have to run it on inference and someone has to make it fast on inference."

---

From Alex Kantrowitz's YouTube channel

Any serious company can’t use Anthropic anymore. They are service providers, not censors of a company’s use cases. If you integrate them deeply, they might switch it off at any moment for ethical reasons. Last week’s bans for Openclaw usage show their ambition to control what users do with their tokens.

In his first interview after the reaction from the US authorities, Dario Amodei appears shaken.

What does he say? He repeatedly emphasizes that he and his company, Anthropic, are "patriotic" and in no way act against the interests of the USA. He says this because his very refusal to accept the government's rules has been interpreted as anti-American.

It seems Dario somewhat miscalculated the US government's reaction: it classified Anthropic as a "hostile actor" and thus a "supply chain risk." I suspect this came as a surprise even to Dario, which is why he is trying to backpedal and, from a PR perspective, demonstrate his allegiance and commitment to the nation.

Dario has attempted to position Anthropic as an ethically superior company, essentially marketing ethical conduct as an asset, much as Apple promotes data privacy as an asset and has repeatedly clashed with federal agencies by refusing to grant access to data. The aim was to signal to the outside world: Anthropic is and remains sovereign; data is safe with us, even from the authorities, as long as we determine what is done with our technology. That was the message that resisting the authorities' demands was meant to send.

However, the plan does not appear to have worked as hoped.

@DavidSacks Could it be because posting them and the first round of analysis are now done by AI? Then a company profits from as many posts as possible.

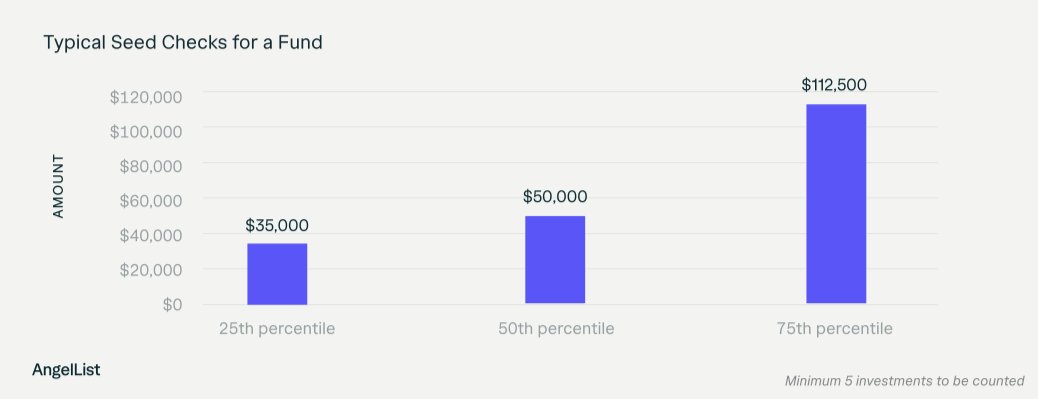

The median seed-round check on @AngelList is $50k. We're writing checks up to $500k. My DMs are open.

@isaacbratzel @speedrun Then get pitchai.com: no endless spam in your inbox, and time to focus on the good ones instead.

@kimmonismus Once that happens, AI competition becomes a pure infrastructure play, and I don’t think they can beat Elon at that game.

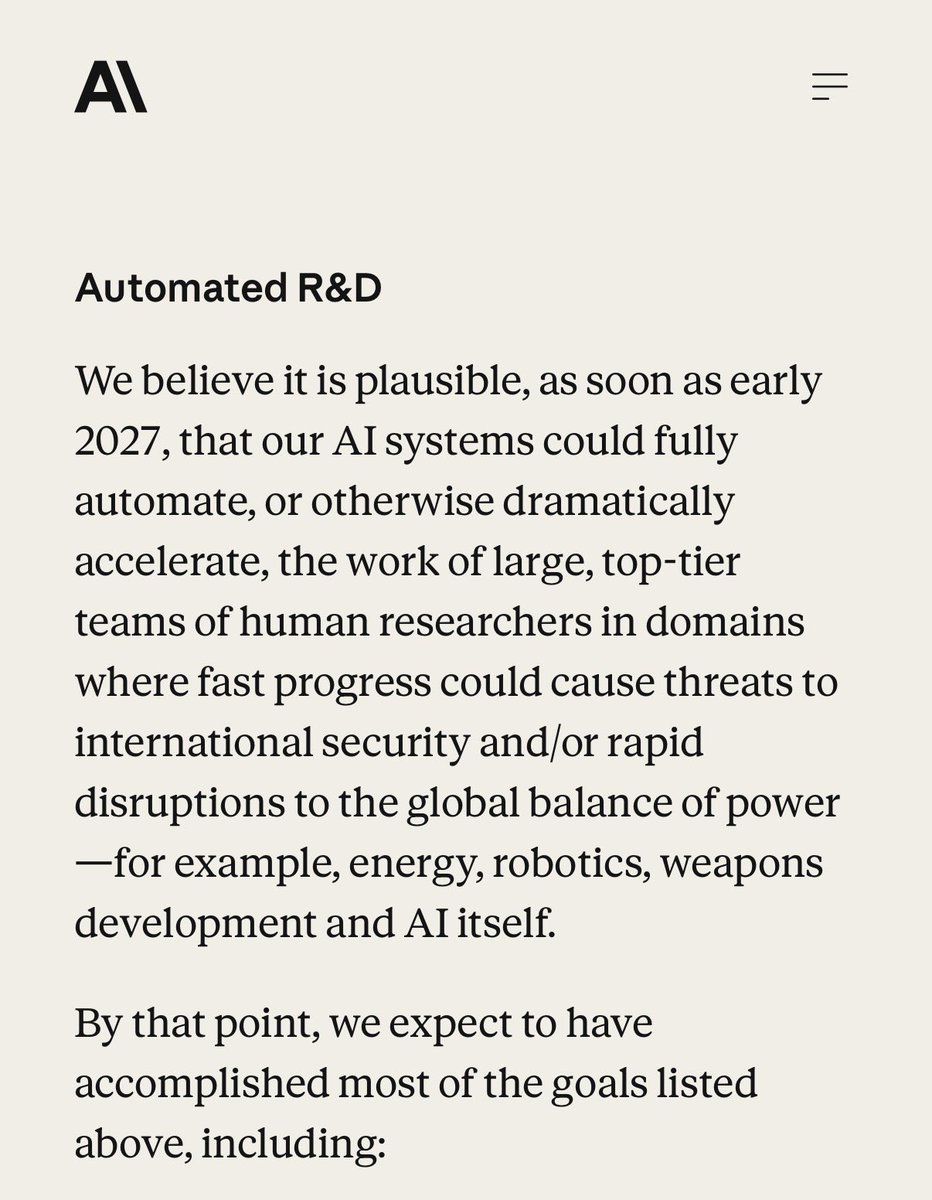

One year left:

“We believe it is plausible, as soon as early 2027, that our AI systems could fully automate, or otherwise dramatically accelerate, the work of large, top-tier teams of human researchers in domains where fast progress could cause threats to international security and/or rapid disruptions to the global balance of power, for example, energy, robotics, weapons development and AI itself.“

Anthropic @AnthropicAI

We’re now separating the safety commitments we’ll make unilaterally and our recommendations for the industry. We’re also committing to publish new Frontier Safety Roadmaps with detailed safety goals, and Risk Reports that quantify risk across all our deployed models.

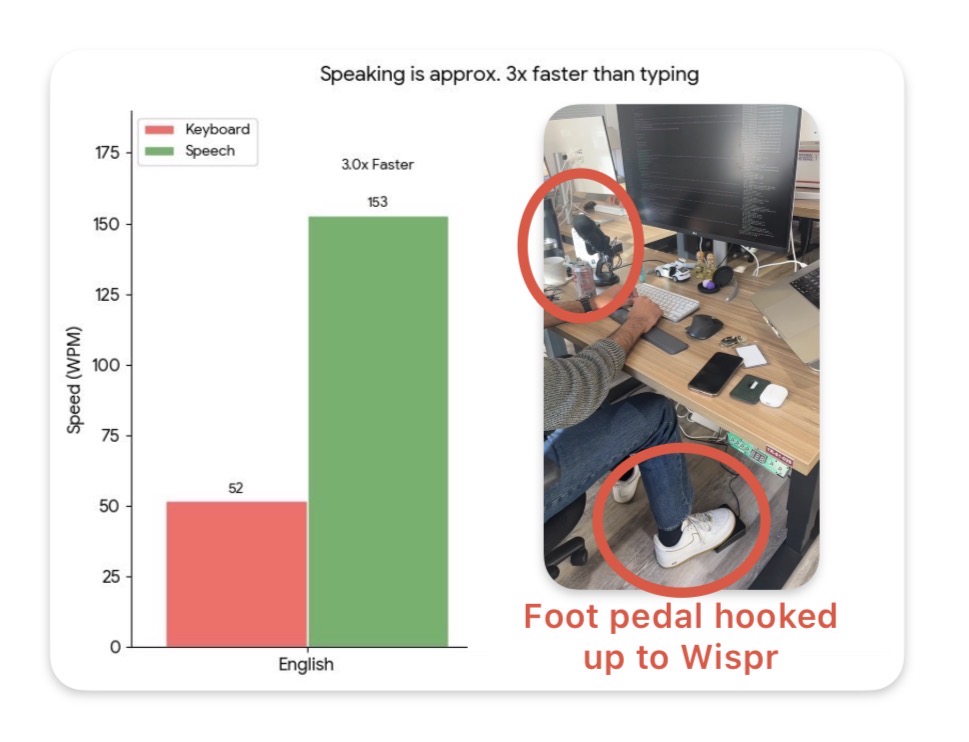

Voice is ~3x faster than typing on mobile and desktop, but dictation accuracy always sucked.

Now, products like Wispr boast a ~85% "zero edit rate", and Wispr is my daily driver for zipping through Slack/email/text responses on my walks back home.

And it's slowly becoming omnipresent in the wild too. At a random startup office I visited last month, I saw a really cool setup where an engineer had hooked up a foot pedal to trigger Wispr!

They just launched on Android too and are giving away six months for free:

Rob retweeted

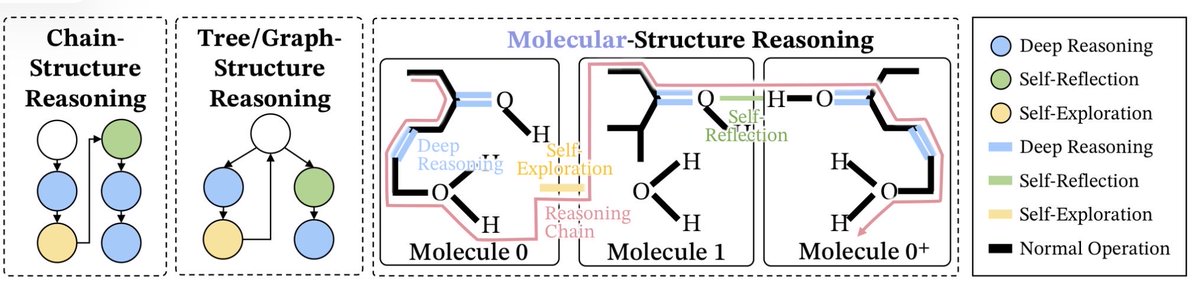

Bytedance just dropped a paper that might change how AI thinks.

Literally.

They figured out why LLMs fail at long reasoning — and framed it as chemistry.

The discovery:

Chain-of-thought isn't just words. It's molecular structure.

Three bond types:

• Deep reasoning = covalent bonds (strong, unbreakable)

• Self-reflection = hydrogen bonds (flexible, context-aware)

• Exploration = van der Waals (weak, ever-present)

Why most AI "thinking" sucks:

Everyone's been imitating keywords — "wait," "let me check" — without building the actual bonds.

It's like copying the shape of a protein without the atomic forces holding it together.

Bytedance proved: structure emerges from training, not prompting.

The fix: Mole-Syn

Their method doesn't just generate text. It synthesizes stable thought molecules.

Results: better reasoning, more stable RL training.

Bytedance is treating AI reasoning like organic chemistry — and it works.

Paper: arxiv.org/abs/2601.06002

Rob retweeted

Demis Hassabis just defined the real test for AGI. It’s more brutal than anyone expected.

Train AI on all human knowledge. Cut it off at 1911. See if it independently discovers general relativity like Einstein did in 1915.

If it can, we have AGI. If not, we’re still building pattern matchers.

Hassabis: “My definition of AGI has never changed. A system that can exhibit all the cognitive capabilities that humans can.”

Not bar exams. Not coding competitions. All cognitive capabilities.

Hassabis: “The brain is the only existence proof we have, maybe in the universe, of a general intelligence.”

That’s why DeepMind studies neuroscience. Not for inspiration. For data. The human brain is the only confirmed evidence that general intelligence is physically possible.

If you want to build it, you study the only example that exists.

Hassabis: “True creativity, continual learning, long-term planning. They’re not good at those things.”

Current systems are impressive and broken simultaneously.

Hassabis: “They can get gold medals in international math olympiad questions, but they can still fall over on relatively simple math problems if you pose it in a certain way.”

Jagged intelligence. Brilliant in narrow domains. Incompetent when approached differently.

That inconsistency is the tell. A true general intelligence doesn’t spike in one direction and collapse in another.

The Einstein test cuts through all of it. No benchmarks. No leaderboards. No carefully curated evals.

Just a model, a knowledge cutoff, and the question of whether it can do what one human did alone in 1915.

Hassabis: “Training an AI system with a knowledge cutoff of 1911 and seeing if it could come up with general relativity like Einstein did in 1915. That’s the true test of whether we have a full AGI system.”

Current models can’t. They remix brilliantly. They don’t generate paradigm-shifting theories from first principles.

Hassabis: “I think we’re still a few years away from that.”

A few years. Not decades.

The system that can be Einstein once can be Einstein a thousand times simultaneously across every domain.

That’s not AGI anymore. That’s the beginning of something we don’t have words for yet.

When that test gets passed, we won’t need a press release to know what happened.

Rob retweeted

About 1/3 of the top technical CEOs are completely AGI-pilled by coding again. I am one of them. Highly recommend.

Totally exhilarating to be back shipping new products and software again.

Howie Liu @howietl

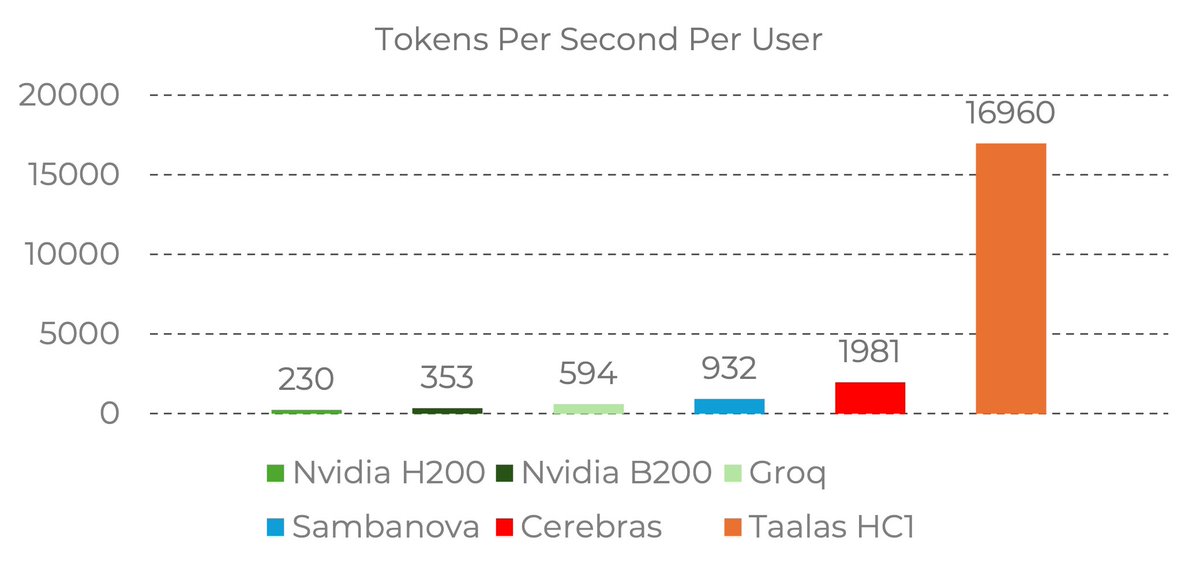

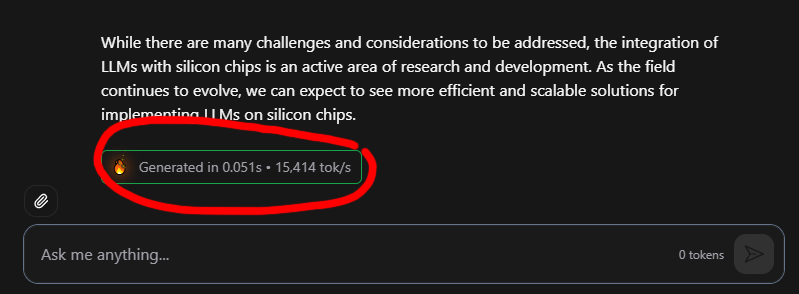

@wildmindai Next-level fast; it feels unreal when trying the chatbot. 8B is a small model, but if they can do it for a 100B model, it changes everything.

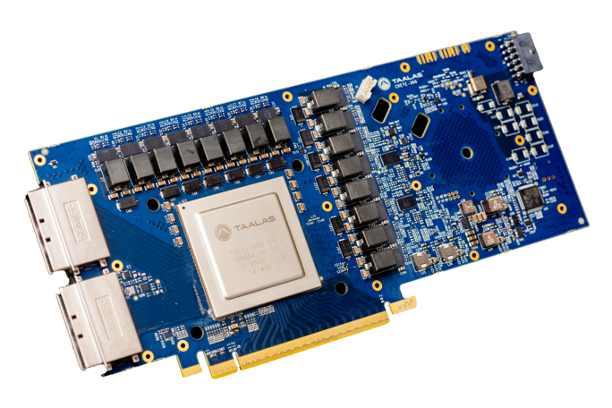

17,000 tokens per second!! Read that again!

The LLM is hard-wired directly into silicon. No HBM, no liquid cooling, just raw specialized hardware. 10x faster and 20x cheaper than a B200.

The "waiting for the LLM to think" era is dead. Code generates at the speed of human thought.

Transition from brute-force GPU clusters to actual AI appliances.

taalas.com/the-path-to-ub…