Shuo Cheng

32 posts

@ShuoCheng94

PhD student @ Georgia Tech | Robotics, Computer Vision, Machine Learning

Atlanta, GA · Joined November 2021

332 Following · 566 Followers

Shuo Cheng retweeted

New essay on robot learning from human data.

I like @karpathy’s idea that LLMs are “ghosts” distilled from human knowledge. In robotics, we are attempting something similar: to summon a sensorimotor ghost.

Our current ritual is teleoperation. It produces data, but strips away the reflexes, priors, and social interactions that make human behavior rich.

My bet: robot learning will scale less with more robots, and more with better models of humans. Right now we lack both the systems and algorithms to model humans well.

If we succeed, the result won’t just be better robots. It may be the first learned theory of how humans act in the physical world. Robots would simply be the first place we deploy it.

Danfei Xu @danfei_xu

🤖 Curious how Sim-and-Real Co-Training with OT (optimal transport) works?

Read the full paper here: arxiv.org/pdf/2509.18631

Website: ot-sim2real.github.io

(n/n)

Shuo Cheng retweeted

What if one unified method could help robots learn from human videos across many tasks and many robots?

Meet ImMimic: Cross-Domain Imitation from Human Videos via Mapping and Interpolation (CoRL 2025 Oral Presentation🏆) @ICatGT

Check it out here: sites.google.com/view/immimic

Shuo Cheng retweeted

Introducing EgoMimic - just wear a pair of Project Aria @meta_aria smart glasses 👓 to scale up your imitation learning datasets!

Check out what our robot can do.

Thread below 👇

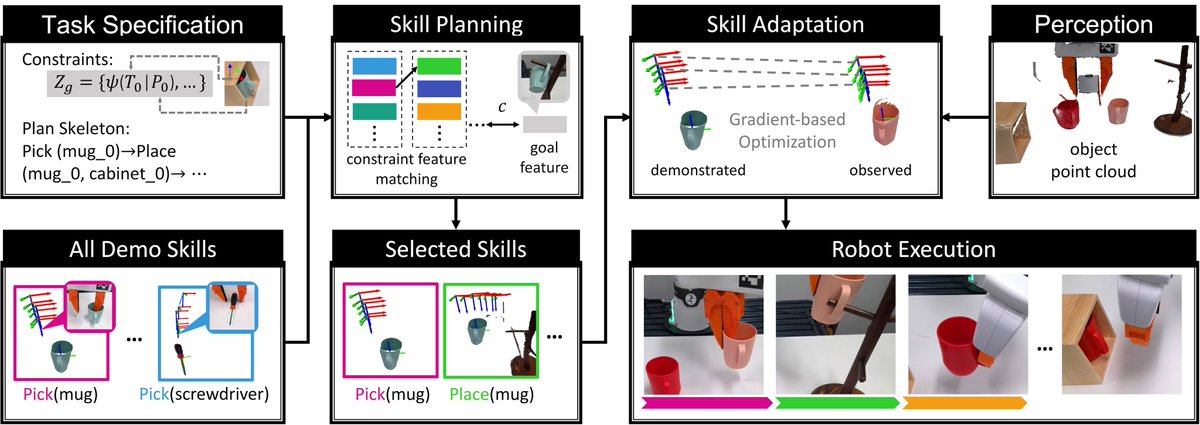

Visit our website for the paper and more details: nodtamp.github.io. Joint work with @CaelanGarrett, @AjayMandlekar and @danfei_xu (N/N)

Congratulations to @ShuoCheng94 for leading LEAGUE, one of 5 papers out of 1200+ to receive an RA-L best paper award honorable mention at ICRA! As the sole student author on a two-person team, in a field trending towards 10+ authors per paper, Shuo's vision and technical prowess shine through.

More exciting work is brewing along this line, so stay tuned.

I'll be at ICRA this year. Email/DM to catch up.

Check out our paper: TuAT7-CC.1, 10:30-12:00

sites.google.com/view/guidedski…

Shuo Cheng retweeted

Since we are entering the "BC is all you need" phase of Robot Learning😜: Robomimic (robomimic.github.io) lets you play with SOTA algorithms (BC-Transformer, DiffusionPolicy, etc.) on challenging tasks. It's also easy to integrate with physical robots!

Shuo Cheng retweeted

How should we represent granular materials for robot manipulation?

Introducing our #CoRL2023 project: Neural Field Dynamics Model for Granular Object Piles Manipulation, a field-based dynamics model for manipulating piles of granular objects.

🌐 arxiv.org/abs/2311.00802

👇 Thread

Shuo Cheng retweeted

If you're at #ICRA2023, come chat with us about our poster on "Generalizable Pose Estimation using Implicit Scene Representations"! Pod 11 at 3pm BST

Read more about our paper: sites.google.com/view/generaliz…