Ajay Mandlekar

@AjayMandlekar

Simulation Research for GR00T @ NVIDIA GEAR Lab | EE PhD @Stanford

Simulations scale for rigid objects, but deformable objects remain an open frontier. SoftMimicGen generates large-scale training data from just a handful of demonstrations. softmimicgen.github.io

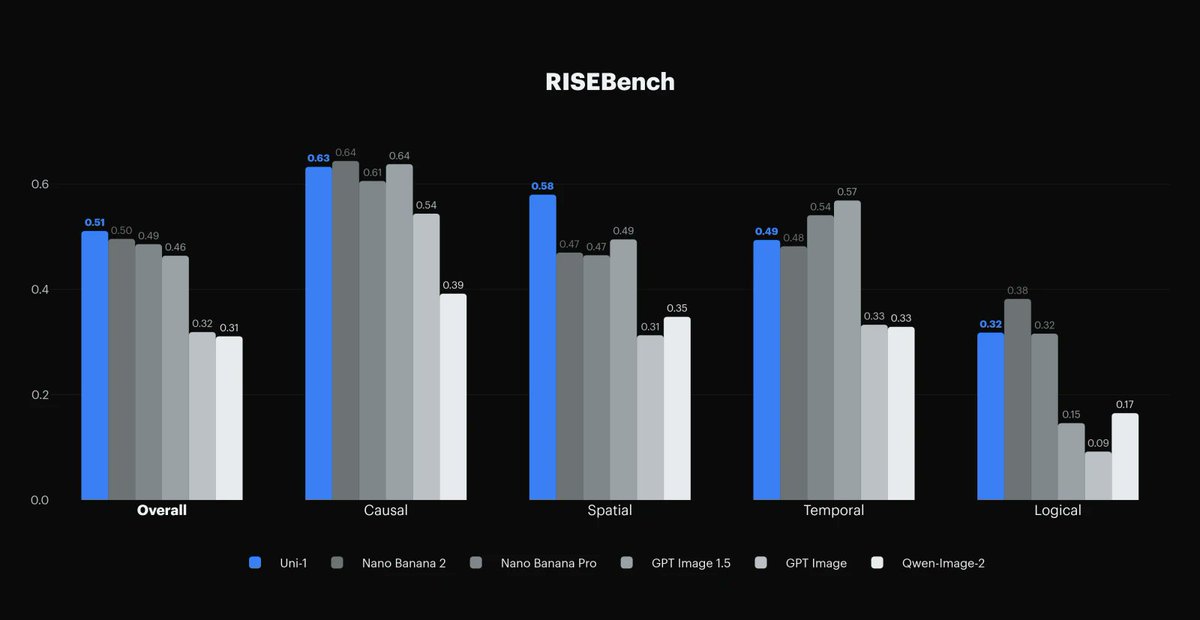

Excited to introduce Uni-1, our new *unified* multimodal model that does both understanding and generation: lumalabs.ai/uni-1 TLDR: I think Uni-1 @LumaLabsAI is > GPT Image 1.5 in many cases, and toe-to-toe with Nano Banana Pro/2. (showcase below)

Proud to introduce EgoScale: We pretrained a GR00T VLA model on 20K+ hours of egocentric human video and discovered that robot dexterity can be scaled, not with more robots, but with more human data. A thread on 🧵what we learned. 👇

Robot foundation models are limited by costly real data, while simulation data is plentiful but visually mismatched to reality. We present Point Bridge, a method that enables zero-shot sim-to-real transfer for robot learning with minimal visual alignment. pointbridge3d.github.io

Here's my enormous round-up of everything we learned about LLMs in 2025 - the third in my annual series of reviews of the past twelve months simonwillison.net/2025/Dec/31/th… This year it's divided into 26 sections! This is the table of contents:

Can large-scale sim data enable real-world generalization?🤔 In our new work, we introduce a generalizable domain adaptation setting, where policies must handle real-world situations never presented in the real training data. (1/n)

🪩The one and only @stateofai 2025 is live! 🪩 It’s been a monumental 12 months for AI. Our 8th annual report is the most comprehensive it's ever been, covering what you *need* to know about research, industry, politics, safety and our new usage data. My highlight reel:

Thanks to everyone who joined the GenPrior workshop yesterday! We had a full house and a stellar lineup of speakers. Huge thanks to our speakers (@gao_young @haroldsoh @GeorgiaChal @Ed__Johns @leto__jean @xiaolonw @AjayMandlekar) for their insightful talks and panel!

Can we scale up mobile manipulation with egocentric human data? Meet EMMA: Egocentric Mobile MAnipulation. EMMA learns from human mobile manipulation + static robot data, with no mobile teleop needed! EMMA generalizes to new scenes and scales strongly with added human data. 1/9

Large robot datasets are crucial for training 🤖foundation models. Yet, we lack systematic understanding of what data matters. Introducing MimicLabs ✅System to generate large synthetic robot 🦾 datasets ✅Data-composition study 🗄️ on how to collect and use large datasets 🧵1/