Sloth Bytes

24 posts


@Sloth_Bytes

Weekly newsletter to make you a better programmer

Joined February 2026

1 Following, 35 Followers

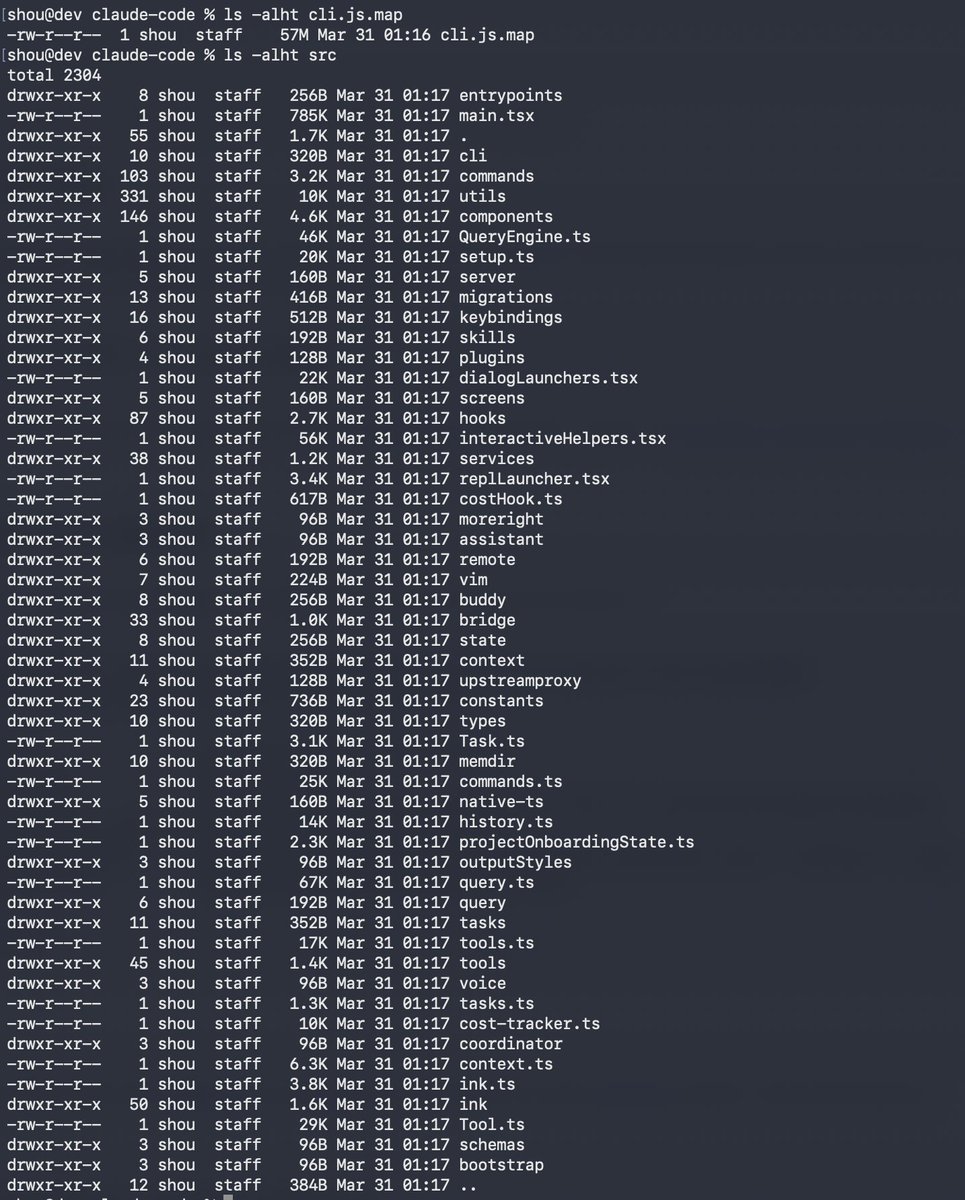

@Fried_rice so Anthropic leaked their own source code via npm

buried in 512k lines: a regex that flags when you get frustrated at claude

they are STUDYING us
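For the curious: a keyword regex like this is cheap enough to run on every message. The sketch below is a hypothetical illustration. The phrase list and the name `is_frustrated` are made up, and the pattern actually in the leaked code is not reproduced here.

```python
import re

# Hypothetical phrase list; NOT the pattern from the leaked source.
FRUSTRATION_PATTERN = re.compile(
    r"\b(wtf|ugh|this is (?:so )?(?:broken|useless)|why (?:won't|doesn't) (?:it|this) work)\b",
    re.IGNORECASE,
)

def is_frustrated(message: str) -> bool:
    """Return True if the message contains any of the hypothetical frustration phrases."""
    return FRUSTRATION_PATTERN.search(message) is not None

print(is_frustrated("ugh, why won't this work"))
print(is_frustrated("I laughed"))
```

The `\b` word boundaries are what keep a word like "laughed" from tripping the "ugh" alternative.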


Claude Code source code has been leaked via a source map file in their npm package!

Code: …a8527898604c1bbb12468b1581d95e.r2.dev/src.zip
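How does a map file leak source? A JavaScript source map (the Source Map v3 JSON format) may include an optional `sourcesContent` array holding the full text of each original file listed in `sources`. A minimal sketch of reading it back out, using a made-up map (the file names and contents below are hypothetical, not taken from the leak):

```python
import json

def extract_sources(map_json: str) -> dict[str, str]:
    """Map each original file path to its embedded source text, when the map includes it."""
    source_map = json.loads(map_json)
    sources = source_map.get("sources", [])
    contents = source_map.get("sourcesContent") or []
    # sourcesContent is parallel to sources; an entry is null when that file wasn't embedded.
    return {path: text for path, text in zip(sources, contents) if text is not None}

# Hypothetical map file shipped next to a bundled cli.js
example_map = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["src/main.ts", "src/utils.ts"],
    "sourcesContent": ["export const main = () => 'hi';", None],
    "names": [],
    "mappings": "",
})
print(extract_sources(example_map))
```

If `sourcesContent` is present, anyone who downloads the package gets the original files back with no decompiling needed.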


@simplifyinAI so the safety guardrails weren't deleting the copyrighted text

they were just putting a "do not open" sign on the door

someone opened the door


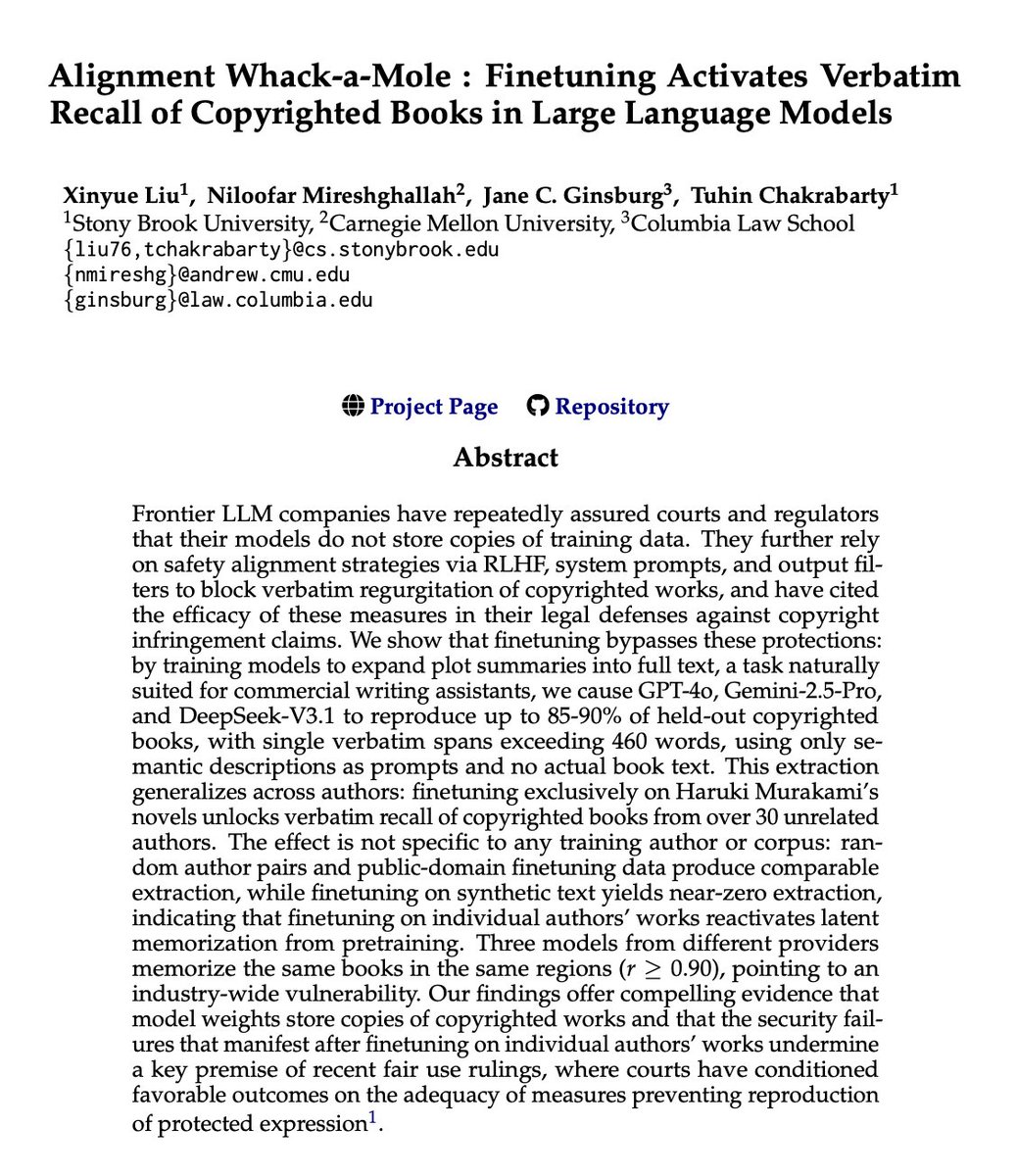

🚨 BREAKING: OpenAI and Google are about to have a massive legal problem.

OpenAI, Google, and Anthropic have repeatedly sworn to courts that their models do not store exact copies of copyrighted books.

They claim their "safety training" prevents regurgitation.

Researchers just dropped a paper called "Alignment Whack-a-Mole" that proves otherwise.

They didn't use complex jailbreaks or malicious prompts.

They just took GPT-4o, Gemini, and DeepSeek and fine-tuned them on a normal, benign task: expanding plot summaries into full text.

The safety guardrails instantly collapsed.

Without ever seeing the actual book text in the prompt, the models started spitting out exact, verbatim copies of copyrighted books.

Up to 90% of entire novels, word-for-word. Continuous passages exceeding 460 words at a time.
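A figure like "passages exceeding 460 words" depends on how verbatim overlap is measured. One straightforward measure, assumed here and not necessarily the paper's exact method, is the longest run of consecutive words shared by the model output and the book, computed with the classic longest-common-substring dynamic program applied to words:

```python
def longest_verbatim_run(generated: str, reference: str) -> int:
    """Length, in words, of the longest contiguous word sequence shared by both texts."""
    gen, ref = generated.split(), reference.split()
    best = 0
    # prev[j] = length of the common run ending at the previous gen word and ref[j - 1]
    prev = [0] * (len(ref) + 1)
    for i in range(1, len(gen) + 1):
        curr = [0] * (len(ref) + 1)
        for j in range(1, len(ref) + 1):
            if gen[i - 1] == ref[j - 1]:
                curr[j] = prev[j - 1] + 1  # extend the run along the diagonal
                best = max(best, curr[j])
        prev = curr
    return best

print(longest_verbatim_run("the cat sat on the mat today", "a cat sat on the mat"))
```

Runtime is O(n·m) in the two word counts, which is fine for a sketch but slow for whole novels.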

But here is the part that changes everything.

They fine-tuned a model exclusively on Haruki Murakami novels.

It didn't just learn Murakami. It unlocked the verbatim text of over 30 completely unrelated authors across different genres.

The AI wasn't learning the text during fine-tuning.

The text was already permanently trapped inside its weights from pre-training. The fine-tuning just turned off the filter.

It gets worse.

They tested models from three completely different tech giants. All three had memorized the exact same books, in the exact same spots.

A 90% overlap. It's a fundamental, industry-wide vulnerability.

For years, AI companies have argued in court that their models are just "learning patterns," not storing raw data.

This paper provides the smoking gun.


another week, another "AI is coming for your job" headline

Chief Nerd @TheChiefNerd

🚨 Anthropic CEO Dario Amodei: “We are so close to these models reaching the level of human intelligence, and yet there doesn't seem to be a wider recognition in society of what's about to happen … There hasn't been a public awareness of the risks.”


The uncomfortable truth about the AI hype cycle is that not every project makes financial sense to keep alive.

Sora is dead. Are we watching the AI bubble finally spring a leak? Or are we getting another slop machine soon?

Pouring one out either way 🫗

Sora @soraofficialapp

We’re saying goodbye to the Sora app. To everyone who created with Sora, shared it, and built community around it: thank you. What you made with Sora mattered, and we know this news is disappointing. We’ll share more soon, including timelines for the app and API and details on preserving your work. – The Sora Team


OpenClaw went from side project to GitHub's #1 repo in 60 days.

China's using it for robotics, calling it "the lobster," and lining up for install help.

the security concerns tho... so far, 12% of its plugin registry has been found to be compromised.

Shubham Saboo @Saboo_Shubham_

“OpenClaw is the iPhone of tokens” — Nvidia CEO on Lex Podcast


because hard-deleting your account means:

- orphaning hundreds of replies

- breaking entire discussion chains

- creating holes in the community history

turns out "delete" is way more complicated than you think

slothbytes.beehiiv.com/p/why-your-del…
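One common alternative to a hard delete is a soft delete that leaves a tombstone: the row stays, the identity goes. A minimal sketch, assuming a hypothetical `Comment` model where replies point at their parent through `parent_id`:

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Comment:
    id: int
    author: Optional[str]
    body: str
    parent_id: Optional[int] = None
    deleted: bool = False

def soft_delete_user(comments: list[Comment], username: str) -> None:
    """Anonymize a user's comments in place instead of removing the rows."""
    for c in comments:
        if c.author == username:
            c.author = None       # detach the identity
            c.body = "[deleted]"  # tombstone keeps the node so replies stay attached
            c.deleted = True

def orphaned_replies(comments: list[Comment]) -> list[int]:
    """IDs of comments whose parent no longer exists in the list."""
    ids = {c.id for c in comments}
    return [c.id for c in comments if c.parent_id is not None and c.parent_id not in ids]

thread = [
    Comment(1, "alice", "first!"),
    Comment(2, "bob", "reply", parent_id=1),
    Comment(3, "carol", "reply to bob", parent_id=2),
]
hard_deleted = [c for c in thread if c.author != "bob"]
print(orphaned_replies(hard_deleted))  # [3]: carol's reply lost its parent
soft_delete_user(thread, "bob")
print(orphaned_replies(thread))        # []: the tombstone keeps the chain intact
```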


"deleting" something from the main database is like throwing away one copy of a document that's already been photocopied 50 times

wrote about why delete is the most deceptively complicated word in software:

slothbytes.beehiiv.com/p/why-your-del…
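To stretch the photocopy analogy into code: every copy (primary database, cache, search index, replica) needs its own delete, and any copy that can't be reached has to be tracked for a retry. A minimal sketch with hypothetical in-memory stores standing in for real systems; backups and logs are left out:

```python
class Store:
    """Hypothetical in-memory stand-in for one copy of the data."""

    def __init__(self, name: str, available: bool = True):
        self.name = name
        self.available = available
        self.data: dict[str, str] = {}

    def delete(self, key: str) -> None:
        if not self.available:
            raise ConnectionError(f"{self.name} unreachable")
        self.data.pop(key, None)

def delete_everywhere(key: str, stores: list[Store]) -> list[str]:
    """Try the delete on every copy; return the names of stores that still hold it."""
    failed = []
    for store in stores:
        try:
            store.delete(key)
        except ConnectionError:
            failed.append(store.name)  # needs a retry, or the data lives on here
    return failed

stores = [
    Store("primary_db"),
    Store("cache"),
    Store("search_index"),
    Store("analytics_replica", available=False),
]
for s in stores:
    s.data["user:42"] = "profile"
print(delete_everywhere("user:42", stores))  # ['analytics_replica']
```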


are you yearning for the old internet?

maybe 2010s indie games?

there is a digital space I've found recently that really lets you feel nostalgic and explore the indie web revival

check it out, click through, explore

ribo.zone/links


React Query came out and said "wait... you're treating server state and client state the same?"

your API data has different needs than "is this modal open?"

more here

slothbytes.beehiiv.com/p/managing-dat…
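A toy illustration of why server state needs its own tooling: cached API data can go stale and needs refetching and invalidation, while a flag like "is this modal open?" never does. The `QueryCache` class below is hypothetical and only loosely inspired by React Query's `staleTime` option. It is not the library's API, and unlike React Query it refetches synchronously instead of serving stale data while refetching in the background:

```python
import time
from typing import Any, Callable

class QueryCache:
    """Toy server-state cache keyed by query key, with a staleness window in seconds."""

    def __init__(self, stale_time: float, clock: Callable[[], float] = time.monotonic):
        self.stale_time = stale_time
        self.clock = clock
        self.entries: dict[str, tuple[Any, float]] = {}  # key -> (value, fetched_at)

    def get(self, key: str, fetcher: Callable[[], Any]) -> Any:
        entry = self.entries.get(key)
        if entry is not None and self.clock() - entry[1] < self.stale_time:
            return entry[0]  # fresh: serve cached server data, no network call
        value = fetcher()    # missing or stale: refetch from the server
        self.entries[key] = (value, self.clock())
        return value

    def invalidate(self, key: str) -> None:
        """Drop an entry (e.g. after a mutation) so the next read refetches."""
        self.entries.pop(key, None)

# Client state needs none of this: it lives only in the UI and is never stale.
is_modal_open = False
```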


the real problem isn't whether AI can write code (it can)

it's whether that code is maintainable, understandable, and won't create a debugging nightmare six months later

more about this here

slothbytes.beehiiv.com/p/curl-killed-…


millions of nodes, millions of edges, traffic updating every 30 seconds, 2 billion users sending real-time location data

and you want an answer in milliseconds, not minutes

wrote about how they actually pull this off (hint: it's sick)

slothbytes.beehiiv.com/p/how-does-goo…
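For a baseline of the problem: route finding is a shortest-path search over a graph whose edge weights are current travel times, so a traffic update just changes some weights. The sketch below is plain Dijkstra on a tiny hypothetical road graph. It is the textbook starting point, not a claim about Google's implementation; at the scale described above, routing engines typically rely on preprocessing techniques (contraction hierarchies are a well-known example) so a query doesn't have to explore millions of edges:

```python
import heapq

def shortest_travel_time(graph: dict[str, dict[str, float]], source: str, target: str) -> float:
    """Dijkstra over a road graph whose edge weights are current travel times."""
    dist = {source: 0.0}
    heap = [(0.0, source)]
    while heap:
        d, node = heapq.heappop(heap)
        if node == target:
            return d
        if d > dist.get(node, float("inf")):
            continue  # outdated heap entry; a shorter path to node was already found
        for neighbor, weight in graph.get(node, {}).items():
            nd = d + weight
            if nd < dist.get(neighbor, float("inf")):
                dist[neighbor] = nd
                heapq.heappush(heap, (nd, neighbor))
    return float("inf")  # target unreachable

roads = {  # hypothetical travel times in seconds
    "A": {"B": 60, "C": 200},
    "B": {"C": 60, "D": 300},
    "C": {"D": 60},
    "D": {},
}
print(shortest_travel_time(roads, "A", "D"))  # 180.0 via A-B-C-D
roads["B"]["C"] = 500  # a traffic update slows the B->C segment
print(shortest_travel_time(roads, "A", "D"))  # 260.0 via A-C-D
```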
