Pinned Tweet

TensorTonic

191 posts

@TensorTonic

Run ML algorithms in a cloud-native sandbox at https://t.co/1f7hGOZw21

Delhi, India · Joined April 2025

1 Following · 7K Followers

Ever wondered how PyTorch actually handles backpropagation?

It builds a computational graph. Every operation you write, every multiply, every add, gets recorded as a node. Then it walks backward through that graph, applying the chain rule at every step.

That's autograd.

Most people treat it like magic. They call loss.backward() and move on.

Read more here on TensorTonic: tensortonic.com/ml-math/graph-…
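
To take some of the magic out, here is a minimal sketch of the idea in plain Python. It is not PyTorch's actual implementation: the `Value` class and its fields are hypothetical, and only add and multiply are supported. Each operation records its parents and local derivatives, and `backward()` walks the graph in reverse, applying the chain rule.

```python
class Value:
    """A scalar that records the operations applied to it (hypothetical toy class)."""

    def __init__(self, data, parents=(), grad_fns=()):
        self.data = data
        self.grad = 0.0
        self._parents = parents      # nodes this value was computed from
        self._grad_fns = grad_fns    # maps upstream grad to each parent's grad

    def __add__(self, other):
        # d(a+b)/da = 1 and d(a+b)/db = 1
        return Value(self.data + other.data, (self, other),
                     (lambda g: g, lambda g: g))

    def __mul__(self, other):
        # d(a*b)/da = b and d(a*b)/db = a
        return Value(self.data * other.data, (self, other),
                     (lambda g: g * other.data, lambda g: g * self.data))

    def backward(self):
        # Topologically sort the graph, then apply the chain rule in reverse.
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent in node._parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        for node in reversed(order):
            for parent, fn in zip(node._parents, node._grad_fns):
                parent.grad += fn(node.grad)


x, w, b = Value(3.0), Value(2.0), Value(1.0)
loss = x * w + b
loss.backward()
print(x.grad, w.grad, b.grad)  # 2.0 3.0 1.0
```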

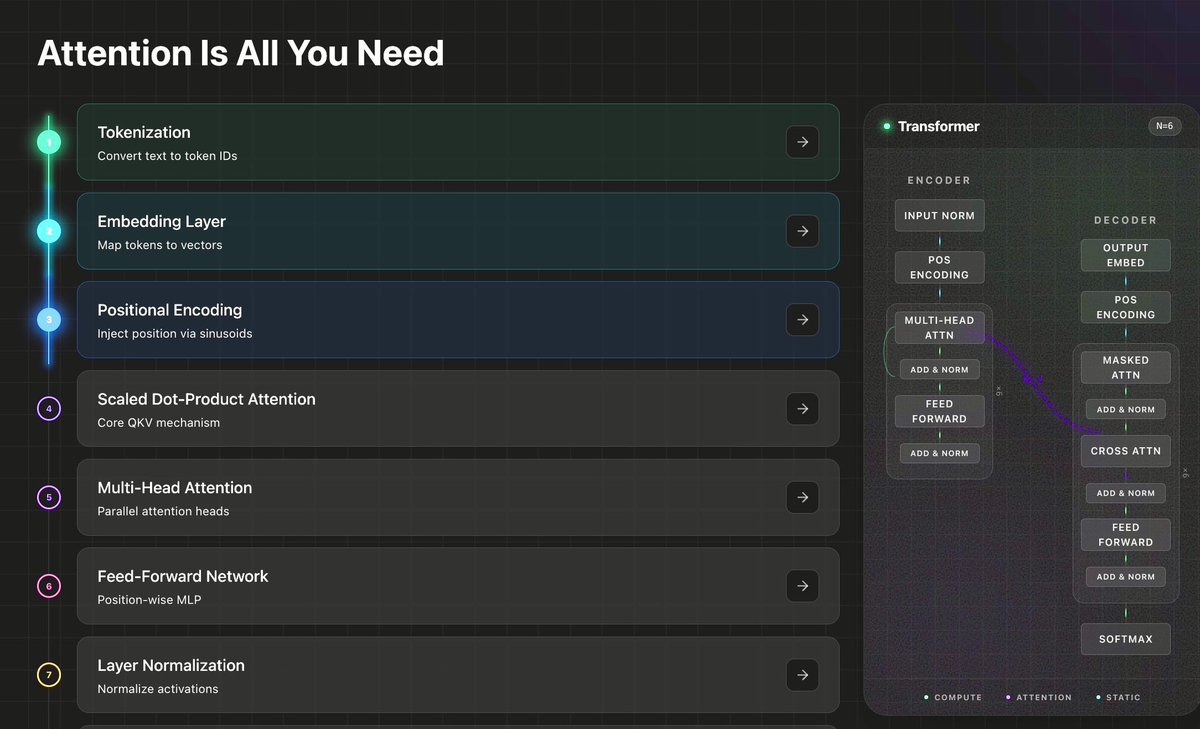

You don't understand transformers until you've built one from scratch.

Tokenization → Embedding → Positional Encoding → Scaled Dot-Product Attention → Multi-Head Attention → Feed-Forward Network → Layer Norm → Encoder → Decoder → Full Transformer.

> Each block is a coding problem.

> Each one runs against real test cases.

> No IDE setup, no environment issues, just open and code.

We broke "Attention Is All You Need" into a set of problems so you can build the entire architecture one piece at a time.

Try it free: tensortonic.com
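
As a taste of one block in that chain, here is a rough sketch of the sinusoidal positional encoding from "Attention Is All You Need", in plain Python lists. The function name and argument names are assumptions for illustration, not the platform's problem signature.

```python
import math


def positional_encoding(seq_len, d_model):
    """Sinusoidal encodings: sin on even dimensions, cos on odd ones."""
    pe = [[0.0] * d_model for _ in range(seq_len)]
    for pos in range(seq_len):
        for i in range(0, d_model, 2):
            # i is the even dimension index, so the exponent is i / d_model
            angle = pos / (10000 ** (i / d_model))
            pe[pos][i] = math.sin(angle)
            if i + 1 < d_model:
                pe[pos][i + 1] = math.cos(angle)
    return pe


pe = positional_encoding(seq_len=4, d_model=6)
```

Position 0 always encodes to alternating 0s and 1s, which makes a handy sanity check.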

TensorTonic retweeted

@TensorTonic

Already in the top 750 after solving 12 questions. The aim is to climb as high as I can by the weekend. This is an awesome platform to practice on.

All you need to start reading ML research papers ->

You need to understand the building blocks that 90% of papers are built on.

Here's the complete list:

Attention Mechanisms

> Scaled dot-product attention

> Multi-head attention

> Self-attention vs cross-attention

> Causal masking

Every modern paper builds on attention. Transformers, vision transformers, diffusion models: all variations of the same core idea.
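
For a feel of that core idea, here is a small sketch of scaled dot-product attention with an optional causal mask, written with plain Python lists. It assumes a single head and no batching, and the function names are illustrative.

```python
import math


def softmax(xs):
    m = max(xs)                       # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(Q, K, V, causal=False):
    """softmax(Q K^T / sqrt(d_k)) V, one query row at a time."""
    d_k = len(K[0])
    out, weights = [], []
    for i, q in enumerate(Q):
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in K]
        if causal:
            # position i may only attend to positions j <= i
            scores = [s if j <= i else float("-inf") for j, s in enumerate(scores)]
        w = softmax(scores)
        weights.append(w)
        out.append([sum(wj * v[c] for wj, v in zip(w, V)) for c in range(len(V[0]))])
    return out, weights


X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out, weights = attention(X, X, X, causal=True)   # self-attention: Q = K = V
```

With the causal mask on, the first token can only attend to itself, so its weight row is `[1.0, 0.0, 0.0]`.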

Loss Functions and Training Objectives

> Cross-entropy and its variants

> Contrastive loss (SimCLR, CLIP)

> Triplet loss

> KL divergence (VAEs, distillation)

> Reconstruction loss

When a paper says "we optimize L_total = L_ce + λL_kl," you need to know what each term does and why it's there.
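
A quick sketch of what such a combined objective can look like, assuming a distillation-style setup where the KL term pulls a student distribution toward a teacher. The distributions and the value of λ below are made up for illustration.

```python
import math


def cross_entropy(probs, target_index):
    """Negative log-probability assigned to the correct class."""
    return -math.log(probs[target_index])


def kl_divergence(p, q):
    """KL(p || q) for two discrete distributions over the same classes."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


student = [0.7, 0.2, 0.1]   # hypothetical model output
teacher = [0.6, 0.3, 0.1]   # hypothetical soft targets
lam = 0.5                   # the λ weight, chosen arbitrarily here

l_ce = cross_entropy(student, target_index=0)
l_kl = kl_divergence(teacher, student)
l_total = l_ce + lam * l_kl
```

The cross-entropy term rewards putting mass on the true label; the KL term, scaled by λ, penalizes drifting away from the reference distribution.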

Regularization and Normalization

> Dropout and why it approximates ensembles

> Layer norm vs batch norm vs RMS norm

> Weight decay

> Label smoothing

> Gradient clipping

Papers mention these in one line and move on. If you don't already know them, you're stuck.
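
As one example of what hides behind a one-line mention, here is a rough comparison of layer norm and RMS norm on a single vector. The learnable scale and bias parameters are left out to keep the sketch short.

```python
import math


def layer_norm(x, eps=1e-5):
    """Subtract the mean, then divide by the standard deviation."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def rms_norm(x, eps=1e-5):
    """Divide by the root mean square; no mean subtraction."""
    mean_sq = sum(v * v for v in x) / len(x)
    return [v / math.sqrt(mean_sq + eps) for v in x]


x = [1.0, 2.0, 3.0, 4.0]
ln = layer_norm(x)   # zero mean, roughly unit variance
rn = rms_norm(x)     # mean is not removed, only the scale is normalized
```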

Optimization and Convergence

> Adam, AdamW, and why the W matters

> Learning rate schedules (warmup, cosine decay)

> Gradient accumulation

> Mixed precision training
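
A minimal sketch of a warmup-plus-cosine schedule, using the kind of hyperparameters papers typically quote (lr=3e-4, 2000 warmup steps). The `total_steps` and `min_lr` values are assumptions, since papers often state them elsewhere, and linear warmup is one common choice among several.

```python
import math


def lr_at(step, base_lr=3e-4, warmup_steps=2000, total_steps=100_000, min_lr=0.0):
    """Linear warmup to base_lr, then cosine decay down to min_lr."""
    if step < warmup_steps:
        return base_lr * (step + 1) / warmup_steps
    progress = min(1.0, (step - warmup_steps) / max(1, total_steps - warmup_steps))
    return min_lr + 0.5 * (base_lr - min_lr) * (1 + math.cos(math.pi * progress))


schedule = [lr_at(s) for s in (0, 1000, 1999, 2000, 51_000, 100_000)]
```

The learning rate climbs linearly for the first 2000 steps, peaks at `base_lr`, and then follows half a cosine wave down.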

"We train with AdamW, lr=3e-4, cosine schedule with 2000 warmup steps." Every paper has this sentence. You should know exactly what it means.

Evaluation Metrics

> Perplexity

> BLEU, ROUGE

> F1, precision, recall (and when each matters)

> AUC-ROC

> FID (for generative models)

If you can't evaluate results, you can't judge whether a paper's claims hold up.
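
For instance, precision, recall, and F1 for a binary classifier come down to counting three kinds of outcomes. The labels below are invented for illustration.

```python
def precision_recall_f1(y_true, y_pred):
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0   # of predicted positives, how many were right
    recall = tp / (tp + fn) if tp + fn else 0.0      # of actual positives, how many were found
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0]
precision, recall, f1 = precision_recall_f1(y_true, y_pred)
# precision = 2/3, recall = 1/2, F1 = 4/7
```

Precision matters most when false positives are costly; recall matters most when misses are costly.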

Architectural Patterns

> Encoder-decoder structure

> Residual connections (and why they solved deep training)

> Positional encodings

> Mixture of Experts

> U-Net skip connections

Sampling and Generation

> Softmax temperature

> Top-k and nucleus (top-p) sampling

> Beam search

> Autoregressive vs non-autoregressive generation
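
The first two items can be sketched in a few lines of plain Python. The function names and the example logits are illustrative, and real decoders work on tensors over a full vocabulary.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Lower temperature sharpens the distribution, higher flattens it."""
    scaled = [l / temperature for l in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_filter(probs, k):
    """Keep the k most likely tokens and renormalize."""
    keep = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    total = sum(probs[i] for i in keep)
    return {i: probs[i] / total for i in keep}


def top_p_filter(probs, p):
    """Keep the smallest set of top tokens whose cumulative mass reaches p."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    keep, cumulative = [], 0.0
    for i in order:
        keep.append(i)
        cumulative += probs[i]
        if cumulative >= p:
            break
    total = sum(probs[i] for i in keep)
    return {i: probs[i] / total for i in keep}


def sample(dist, rng=random):
    tokens = list(dist)
    return rng.choices(tokens, weights=[dist[t] for t in tokens], k=1)[0]


logits = [2.0, 1.0, 0.5, -1.0]
probs = softmax(logits)
token = sample(top_p_filter(probs, p=0.9))
```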

That's it.

You don't need to understand every proof. You need to understand the components well enough that when a paper combines them in a new way, you can follow the logic.

The fastest way to get there: implement each one from scratch. Not read about it. Implement it.

That's what TensorTonic's problems are built for.

Practise them here: tensortonic.com

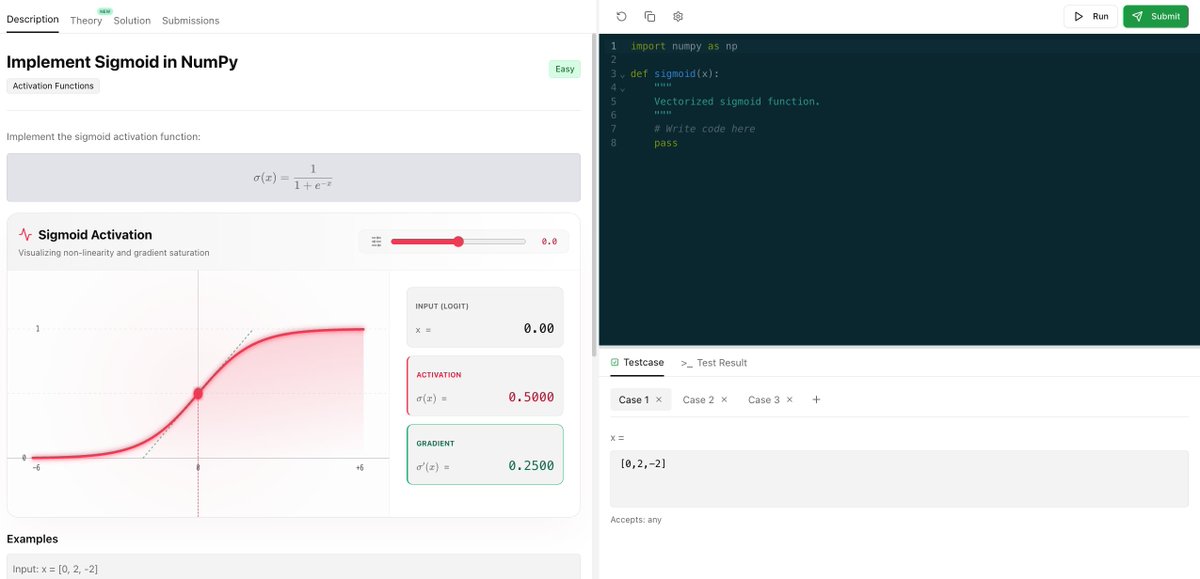

Can you explain why ReLU kills gradients, why GELU is in every transformer, why Softmax turns logits into probabilities?

> Sigmoid

> ReLU

> Tanh

> Softmax

> LeakyReLU

> GELU

> Swish

> ELU

> SELU

Every activation function you'll ever need, explained by implementation.

Practice all of them on TensorTonic: tensortonic.com
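
A few of these fit in a handful of lines of plain Python. This is a scalar sketch rather than a tensor implementation, and GELU is written in its exact erf form.

```python
import math


def relu(x):
    return max(0.0, x)


def relu_grad(x):
    # The gradient is exactly 0 for negative inputs, so those units stop learning.
    return 1.0 if x > 0 else 0.0


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def swish(x):
    return x * sigmoid(x)


def gelu(x):
    # x times the standard normal CDF: a smooth gate instead of a hard cutoff
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def softmax(logits):
    # Exponentiate (always positive), then normalize so the outputs sum to 1.
    m = max(logits)
    exps = [math.exp(l - m) for l in logits]
    total = sum(exps)
    return [e / total for e in exps]


probs = softmax([2.0, 1.0, 0.1])
```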

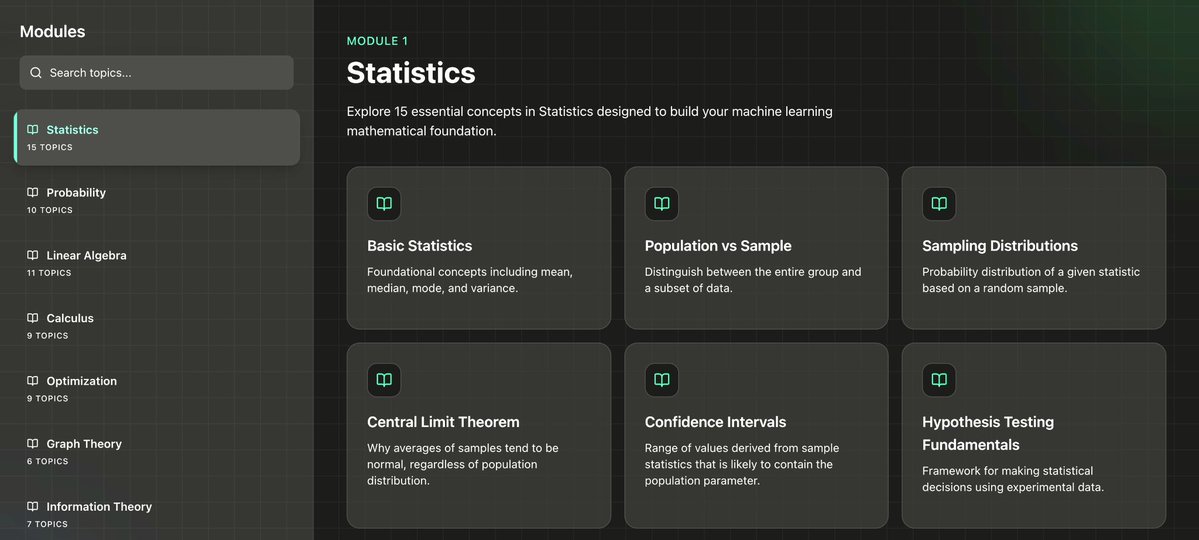

All the math you need to understand Machine Learning:

Linear Algebra

> Vectors, dot products, norms

> Matrix multiplication and transpose

> Eigenvalues and eigenvectors

> Singular Value Decomposition (SVD)

> Matrix inverses and pseudo-inverses

Every dataset is a matrix. Every model transformation is a matrix operation. When you implement PCA from scratch, you stop fearing linear algebra forever.

Read from here: tensortonic.com/ml-math
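
A rough sketch of PCA from scratch, assuming we only want the first principal component: build the covariance matrix, then find its top eigenvector with power iteration. The data points are made up and lie roughly along y = 2x.

```python
import math


def covariance_matrix(data):
    n, d = len(data), len(data[0])
    means = [sum(row[j] for row in data) / n for j in range(d)]
    centered = [[row[j] - means[j] for j in range(d)] for row in data]
    return [[sum(r[a] * r[b] for r in centered) / (n - 1) for b in range(d)]
            for a in range(d)]


def top_component(cov, iters=200):
    """Power iteration: repeatedly multiply by cov and renormalize."""
    v = [1.0] * len(cov)
    for _ in range(iters):
        v = [sum(row[j] * v[j] for j in range(len(v))) for row in cov]
        norm = math.sqrt(sum(x * x for x in v))
        v = [x / norm for x in v]
    return v


data = [[1.0, 2.0], [2.0, 4.1], [3.0, 5.9], [4.0, 8.0]]
pc1 = top_component(covariance_matrix(data))   # points roughly along (1, 2)
```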

Probability and Statistics

> Bayes' theorem

> Distributions: Gaussian, Bernoulli, Multinomial

> Expectation, variance, covariance

> Maximum Likelihood Estimation

> MAP estimation

> Conditional probability

Every ML model is making a probabilistic bet. Naive Bayes, GMMs, VAEs: all just probability applied differently.

Read here: tensortonic.com/ml-math

Calculus

> Derivatives and partial derivatives

> Chain rule (this is literally backpropagation)

> Gradients and Jacobians

> Hessians (second-order intuition, not computation)

> Multivariate function optimization

You need to understand what a gradient means geometrically: which direction makes the loss go down.

Read here: tensortonic.com/ml-math
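
To see the geometric meaning in numbers, here is a small sketch that estimates a gradient by finite differences and takes one step against it. The loss function is an arbitrary bowl chosen for illustration.

```python
def loss(w):
    # A simple bowl with its minimum at (3, -1)
    return (w[0] - 3.0) ** 2 + 2.0 * (w[1] + 1.0) ** 2


def numerical_gradient(f, w, h=1e-6):
    """Central differences: nudge each coordinate and watch the loss change."""
    grad = []
    for i in range(len(w)):
        up, down = list(w), list(w)
        up[i] += h
        down[i] -= h
        grad.append((f(up) - f(down)) / (2 * h))
    return grad


w = [0.0, 0.0]
g = numerical_gradient(loss, w)      # analytic gradient here is [-6, 4]
w_next = [wi - 0.1 * gi for wi, gi in zip(w, g)]
```

The gradient points uphill, so stepping in the opposite direction lowers the loss, here from 11 to about 6.48.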

Optimization

> Gradient descent and its variants (SGD, Adam, RMSProp)

> Learning rates and convergence

> Convexity (and why non-convex still works)

> Loss functions: MSE, cross-entropy, hinge loss

> Regularization: L1, L2, and why they work geometrically

This is where math becomes code.

Read here: tensortonic.com/ml-math

Information Theory

> Entropy

> Cross-entropy (yes, your loss function)

> KL divergence

> Mutual information

Most people skip this. Don't.

Read here: tensortonic.com/ml-math
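
One identity ties the first three items together: cross-entropy equals entropy plus KL divergence. A short sketch in bits, with two made-up distributions:

```python
import math


def entropy(p):
    return -sum(pi * math.log2(pi) for pi in p if pi > 0)


def cross_entropy(p, q):
    return -sum(pi * math.log2(qi) for pi, qi in zip(p, q) if pi > 0)


def kl_divergence(p, q):
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)


p = [0.5, 0.25, 0.25]   # "true" distribution
q = [0.4, 0.4, 0.2]     # model's distribution

h_p = entropy(p)                      # 1.5 bits
gap = cross_entropy(p, q) - h_p       # equals KL(p || q)
```

Since the entropy of the data is fixed, minimizing cross-entropy loss is the same as minimizing the KL divergence between the data and the model.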

That's it.

These five areas, practiced through real implementations, cover 95% of the math behind every ML model from linear regression to transformers.

That's what we built TensorTonic for. 200+ problems where you implement the math from scratch, with interactive visualizations that show you what every operation does geometrically.

Link - tensortonic.com

How to become great at ML interviews in the next 5 months -

You want to be able to:

-> Implement any ML algorithm from scratch

-> Explain the math behind every model you use

-> Solve coding rounds at top AI companies

-> Go beyond .fit() and actually understand what's happening

Month 1: Build your math foundation

> linear algebra (matrix ops, eigenvalues, SVD)

> calculus (gradients, chain rule, Jacobians)

> probability and statistics

> information theory basics (entropy, KL divergence)

TensorTonic has 60+ math articles with step-by-step visualizations that show you what the math actually does geometrically.

Month 2: Core ML algorithms, from scratch

> linear regression, logistic regression

> gradient descent variants (SGD, Adam, RMSProp)

> decision trees, random forests

> SVMs, kernel methods

> k-means, PCA, t-SNE

> cross-validation, bias-variance tradeoff

Don't just call sklearn. Implement the training loop. Write the loss function. Compute the gradient by hand, then in code.
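
Here is roughly what that looks like for logistic regression with one feature: the loss gradient is derived by hand (for binary cross-entropy it is `(p - y) * x`) and then written as a plain training loop. The data, learning rate, and epoch count are arbitrary choices for illustration.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic_regression(xs, ys, lr=0.5, epochs=500):
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in zip(xs, ys):
            p = sigmoid(w * x + b)
            # d(loss)/dw = (p - y) * x and d(loss)/db = (p - y), averaged over the data
            grad_w += (p - y) * x / n
            grad_b += (p - y) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


xs = [-2.0, -1.0, -0.5, 0.5, 1.0, 2.0]
ys = [0, 0, 0, 1, 1, 1]
w, b = train_logistic_regression(xs, ys)
preds = [1 if sigmoid(w * x + b) >= 0.5 else 0 for x in xs]
```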

Month 3: Neural networks and deep learning

> perceptrons, MLPs

> backpropagation (implement it, don't just read about it)

> activation functions and why each one exists

> CNNs: convolution, pooling, feature maps

> RNNs, LSTMs, GRUs

> batch norm, dropout, weight initialization

> loss functions and when to use which

The moment you implement backprop from scratch and watch gradients flow through layers visually, everything clicks.

Month 4: NLP and transformers

> word embeddings (Word2Vec, GloVe)

> attention mechanism from scratch

> transformer architecture

> positional encoding

> tokenization (BPE, WordPiece)

Attention is all you need, but only if you understand why. Implement scaled dot-product attention, see the attention weights visualized, and you'll never forget it.

Month 5: MLOps

> feature engineering pipelines

> model serving and latency tradeoffs

> A/B testing and evaluation metrics

> data drift and monitoring

> scaling training and inference

> recommendation systems

> search and ranking

This is what separates "I know ML" from "I can build ML systems." Every top company asks about these now.

Every month, the pattern is the same: learn the concept, then implement it from scratch, then see what the math is doing visually.

That's exactly what TensorTonic is built for: 200+ problems, interactive visualizations, deep theory, and a structure that follows this roadmap.

Start here: tensortonic.com

Problem of the day: Implement a learning rate scheduler with optimal warmup.

Link: tensortonic.com/problems/linea…

Problem of the day: Swish Activation.

Implement it from scratch on TensorTonic -

tensortonic.com/problems/swish…

TensorTonic retweeted

I ended up at #1 on @TensorTonic after a good sprint of working on the platform... it's pretty good for students who want to master AI at the practical level...

TensorTonic retweeted

🚀 We’re hiring a Machine Learning Intern (Remote) at @TensorTonic

Most people use ML models.

We want someone who can build them from scratch.

Looking for someone who:

• Understands ML math (LA, Calc, Prob)

• Is strong in Python + PyTorch / TF

• Knows LLM concepts (LoRA, KV Cache, GRPO)

• Can implement models without just calling .fit()

You’ll help implement ML algorithms, break down research papers, and build interactive learning modules.

If Transformers, ViT, GANs, DDPM, RLHF excite you — apply 👇

forms.gle/7nDVYfXhoNVoit…

TensorTonic retweeted

Man, if anyone wants to grasp the core intuition of ML models, not through libraries and high-level API calls but through core math and implementations, you should check out the TensorTonic website. It's exceptional and surely worth your time.

tensortonic.com

@TensorTonic
