Unsloth AI

588 posts

@UnslothAI

Making open-source AI accessible! 🦥 https://t.co/2kXqhhvdCD

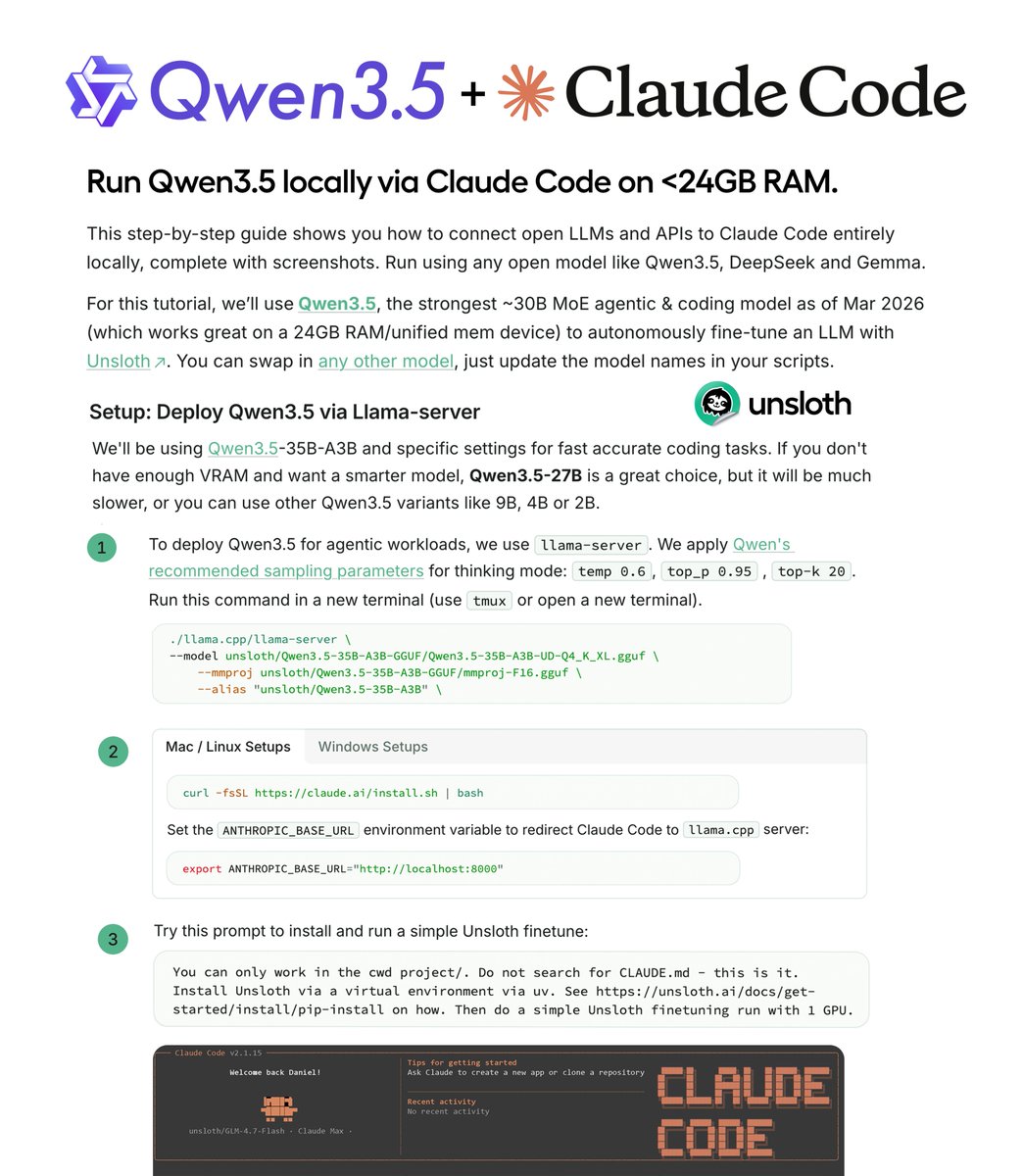

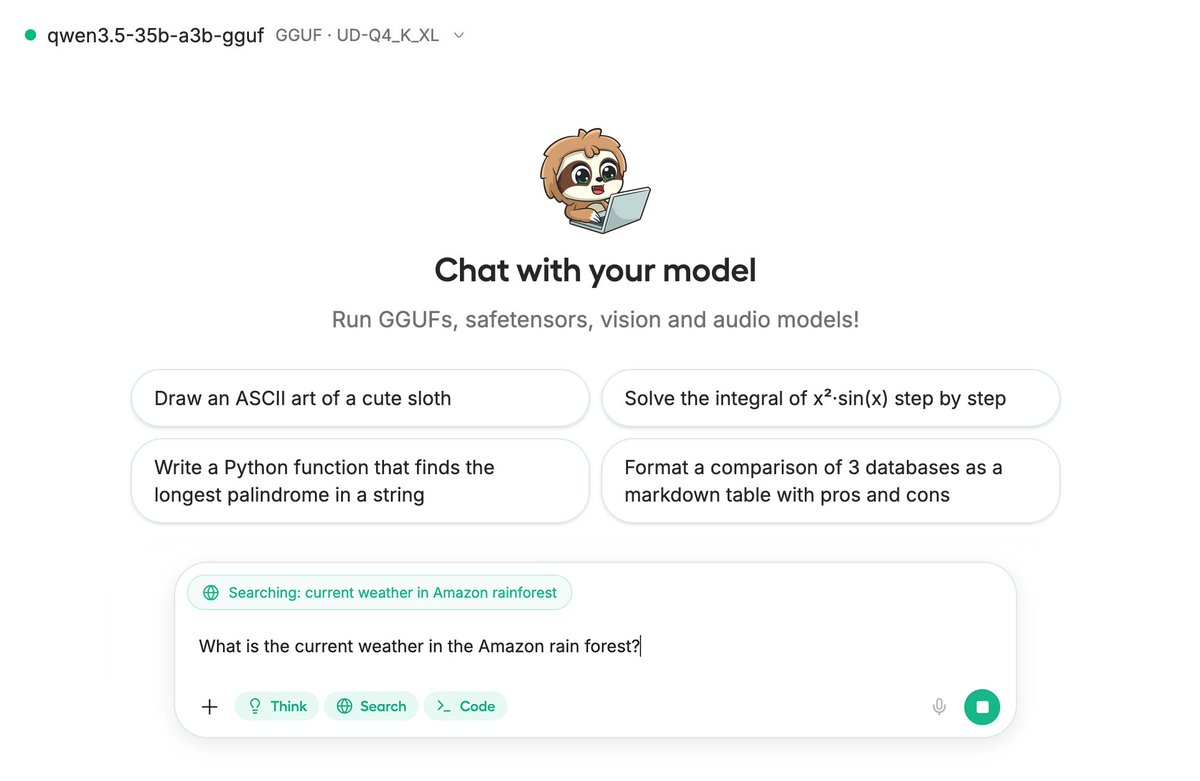

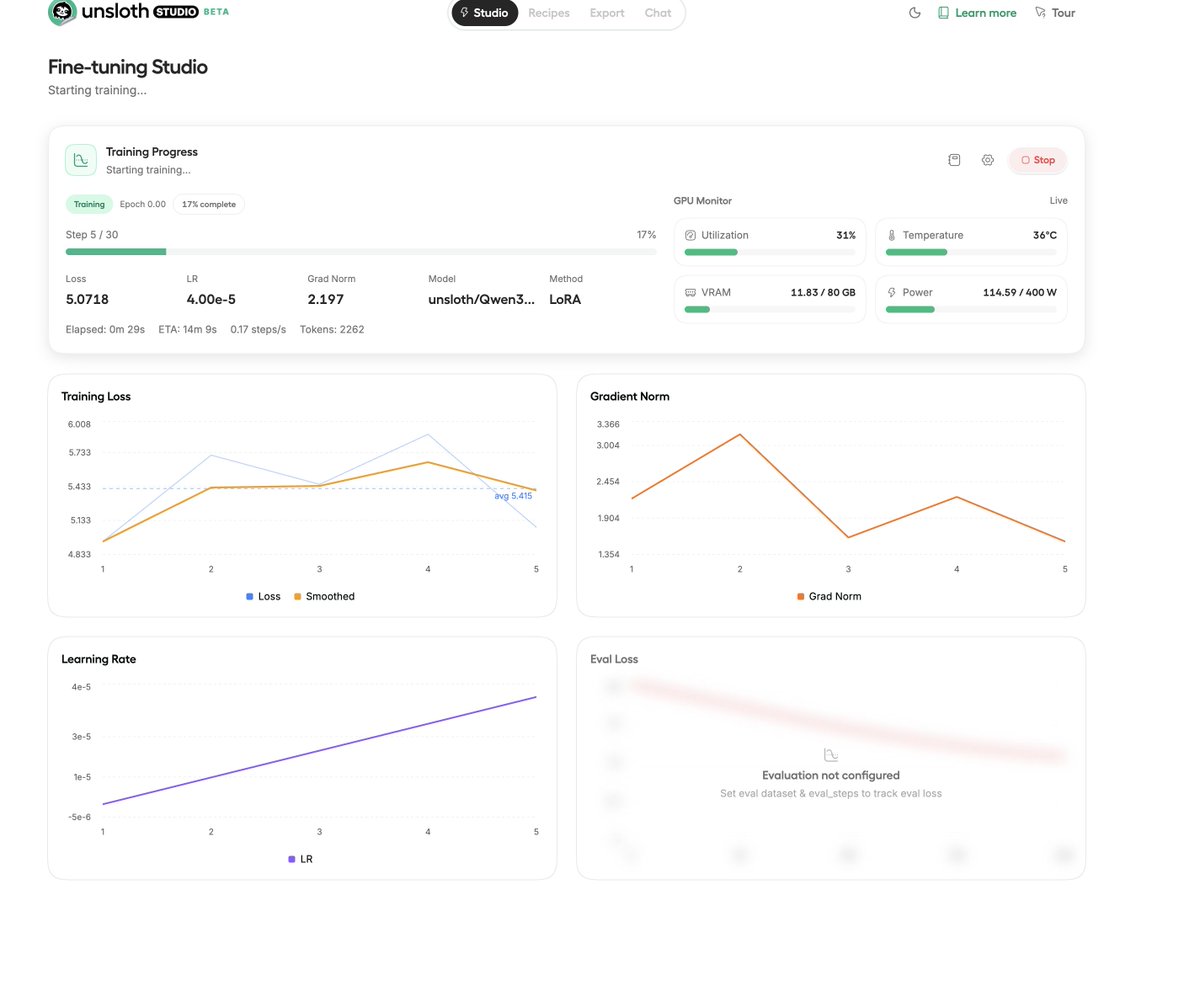

Introducing Unsloth Studio ✨
A new open-source web UI to train and run LLMs.

• Run models locally on Mac, Windows, Linux
• Train 500+ models 2x faster with 70% less VRAM
• Supports GGUF, vision, audio, embedding models
• Auto-create datasets from PDF, CSV, DOCX
• Self-healing tool calling and code execution
• Compare models side by side + export to GGUF

GitHub: github.com/unslothai/unsl…
Blog and Guide: unsloth.ai/docs/new/studio

Available now on Hugging Face, NVIDIA, Docker and Colab.
