fallpeak

@_fallpeak

pseudonymous identities have a storied history https://t.co/iW4Q7e5zlV

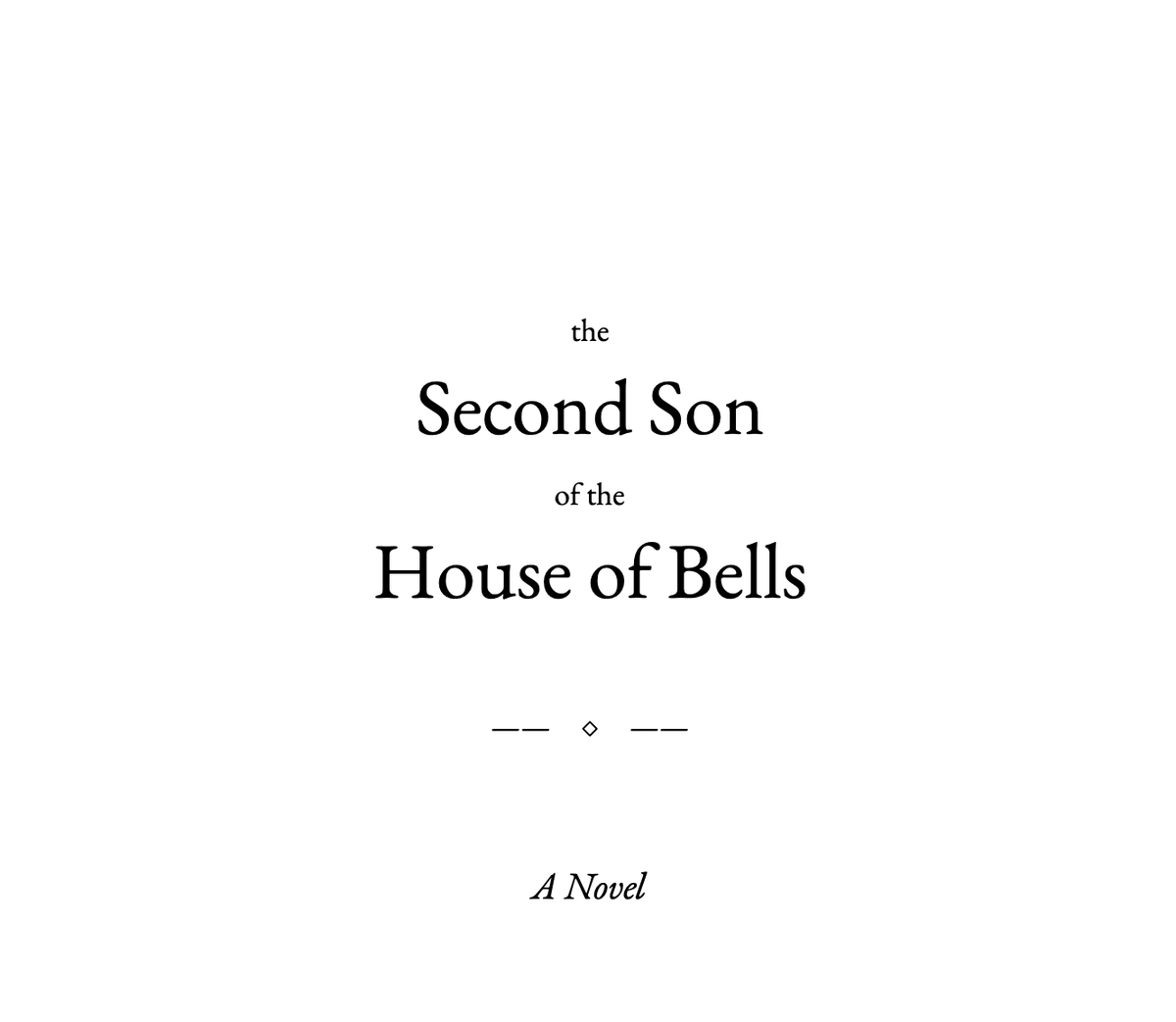

it's been a longstanding dream of mine to build an ai system that can tell a compelling story. it's what got me started in the space, and with Hermes Agent I finally pulled it off. 100% written, typeset, etc. by Hermes Agent. those at our gtc event got hard copies🤗

No idea what I did but my eyes improved from a -1.75 in both eyes to -1.5 in my left and -1.25 in my right. Kind of amazing tbh

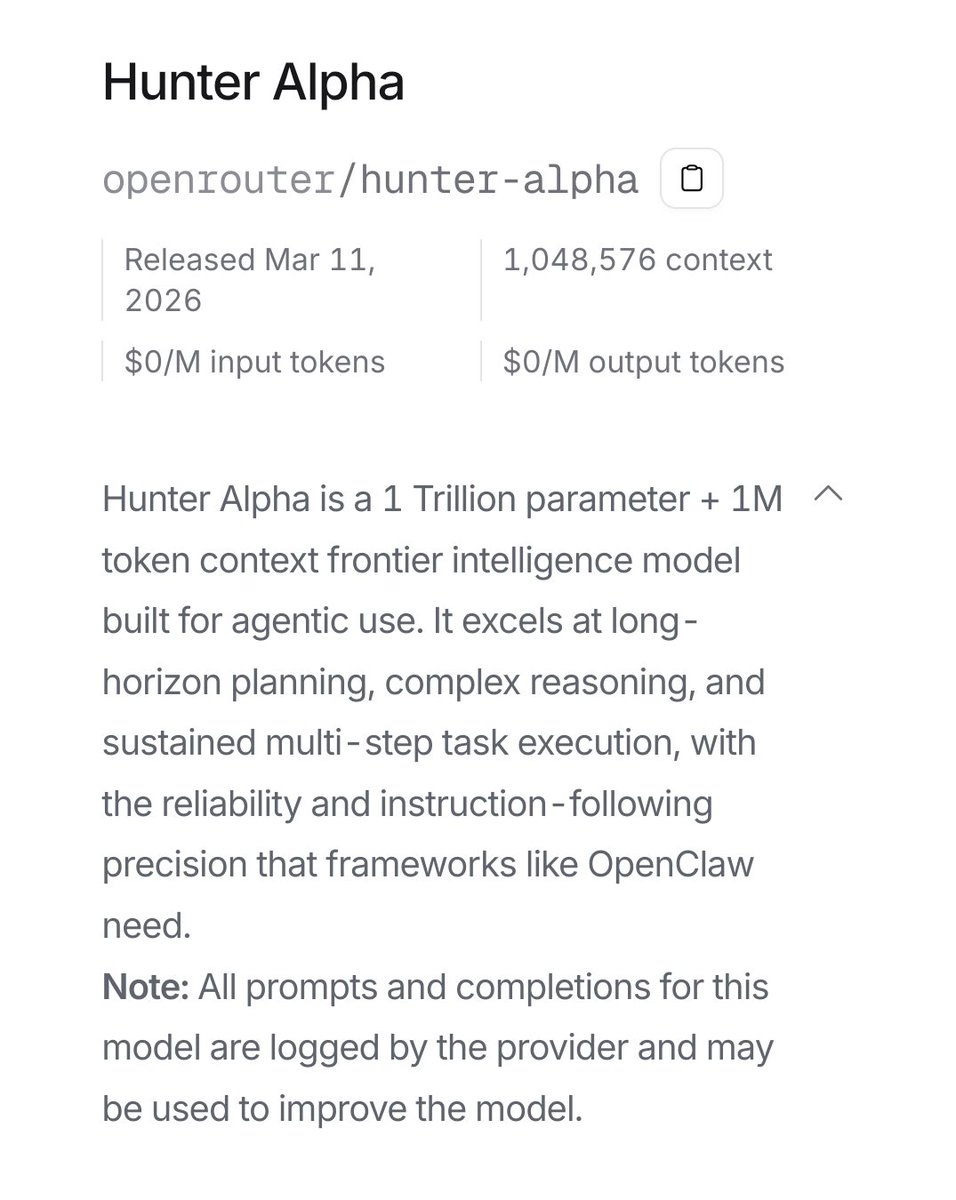

Introducing MiniMax-M2.7, our first model which deeply participated in its own evolution, with an 88% win-rate vs M2.5

- Production-Ready SWE: With SOTA performance in SWE-Pro (56.22%) and Terminal Bench 2 (57.0%), M2.7 reduced intervention-to-recovery time for online incidents to 3-min on certain occasions.
- Advanced Agentic Abilities: Trained for Agent Teams and tool search tool, with 97% skill adherence across 40+ complex skills. M2.7 is on par with Sonnet 4.6 in OpenClaw.
- Professional Workspace: SOTA in professional knowledge, supports multi-turn, high-fidelity Office file editing.

MiniMax Agent: agent.minimax.io

API: platform.minimax.io

Token Plan: platform.minimax.io/subscribe/toke…

There should just be one food, called “Food” that is a paste made up of every other food. That would prevent a lot of the trauma I endure from food-choice paralysis

Scaling law experiments reveal a consistent 1.25× compute advantage across varying model sizes.

Thinking about the time our high school English teacher gave us a poem to analyse. When we finished she told us it was Donald Rumsfeld’s WMD press conference, and that was the class

remember when Nathan Drake had to "earn" the shoulder straps until the final act of the Uncharted movie?

If you have your OpenClaw working 24/7 using frontier models like Opus, you're easily burning $300 a day. That's $100,000 a year.

I have 3 Mac Studios and a DGX Spark running 4 high end local models (Nemotron 3, Qwen 3.5, Kimi K2.5, MiniMax2.5). They're chugging 24/7/365.

I spent a third of that yearly cost to buy these computers. I'll be able to use them for years for free.

On top of that they're completely private, secure, and personalized. Not a single prompt goes to a cloud server that can be read by an employee or used to train another model.

I hope this makes it painfully obvious why local is the future for AI agents. And why America needs to enter the local AI race.
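The cost comparison in the post above can be checked with a few lines of arithmetic. This is a rough sketch, not the poster's own calculation: the $300/day and "$100,000 a year" figures come from the post, while the function names, the 365-day year, and the choice to ignore electricity and maintenance are assumptions made here for illustration.

```python
# Figures quoted in the post; everything else below is an assumption.
DAILY_API_COST_USD = 300           # "easily burning $300 a day"
POST_YEARLY_FIGURE_USD = 100_000   # the post's rounded yearly number
DAYS_PER_YEAR = 365                # assumed, matching "24/7/365"


def annual_cost(daily_cost: float, days: int = DAYS_PER_YEAR) -> float:
    """Yearly spend if the daily cost holds every day of the year."""
    return daily_cost * days


def break_even_days(hardware_cost: float, daily_cost: float) -> float:
    """Days of avoided API spend needed to cover a one-off hardware purchase.

    Ignores electricity, maintenance, and resale value (assumption).
    """
    return hardware_cost / daily_cost


exact_yearly = annual_cost(DAILY_API_COST_USD)
# "a third of that yearly cost", read here as a third of the rounded figure.
hardware_cost = POST_YEARLY_FIGURE_USD / 3
payback = break_even_days(hardware_cost, DAILY_API_COST_USD)

print(f"Exact yearly API cost: ${exact_yearly:,.0f}")
print(f"Implied hardware cost: ${hardware_cost:,.0f}")
print(f"Break-even after about {payback:.0f} days")
```

At $300 a day the exact yearly figure is $109,500, so the post's $100,000 is a round-down. Under these assumptions the hardware pays for itself in roughly four months; adding power costs would lengthen that.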

Anthropic no longer charges extra for longer context windows