Philip Schroeder

@_pschro

PhD student at MIT. @MIT_CSAIL @MITEECS @nlp_mit

🚨New paper alert 🚨 from @rai_inst! arxiv.org/abs/2603.15757 🤖Your robot policy is actually better than you think! We find that for a given policy, ALWAYS denoising a single noise vector, which we call a ✨Golden Ticket ✨, leads to consistent performance improvements! 🧵...

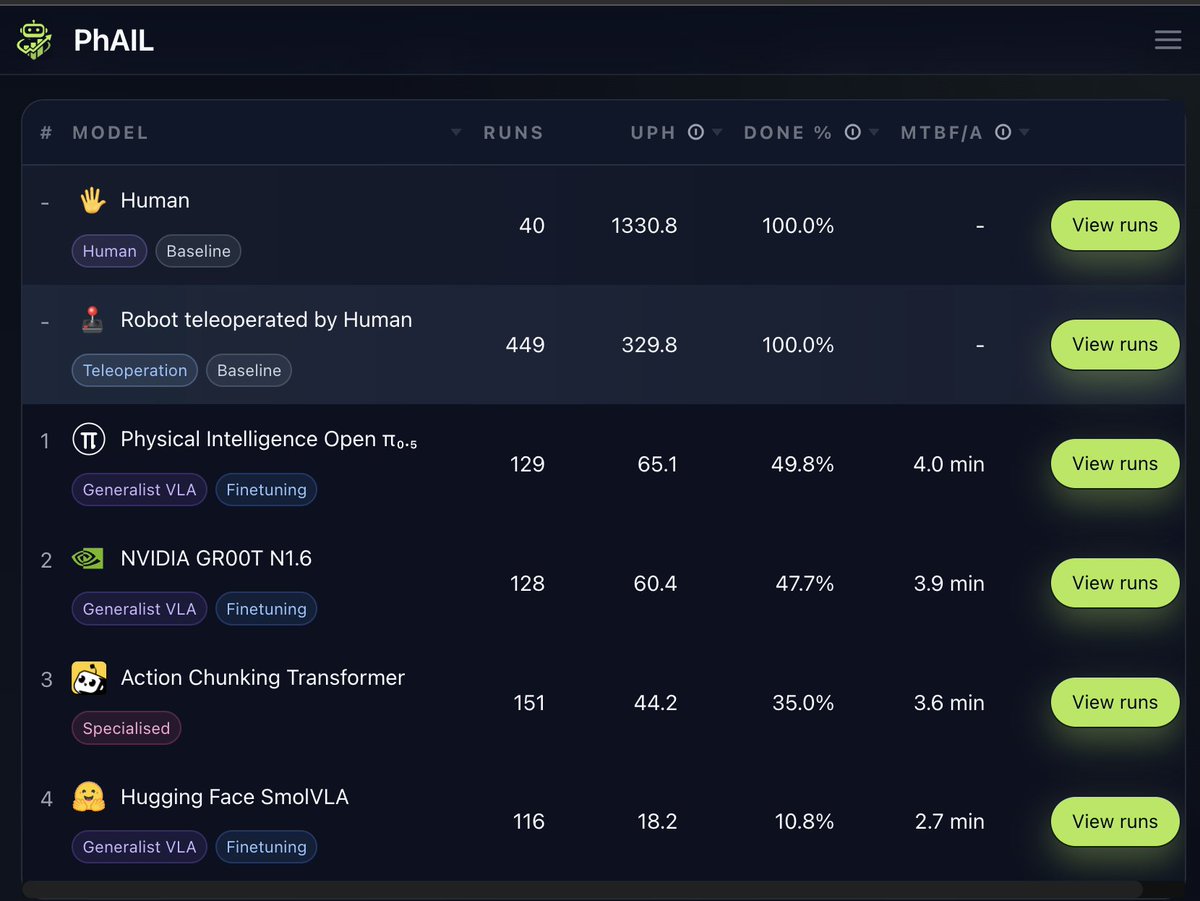

Super excited to share Robometer, a reward model that works zero-shot across robots, tasks, and scenes! Try fine-tuning Robometer on your own dataset! 🌐Project website: robometer.github.io 💻Code: github.com/robometer/robo…

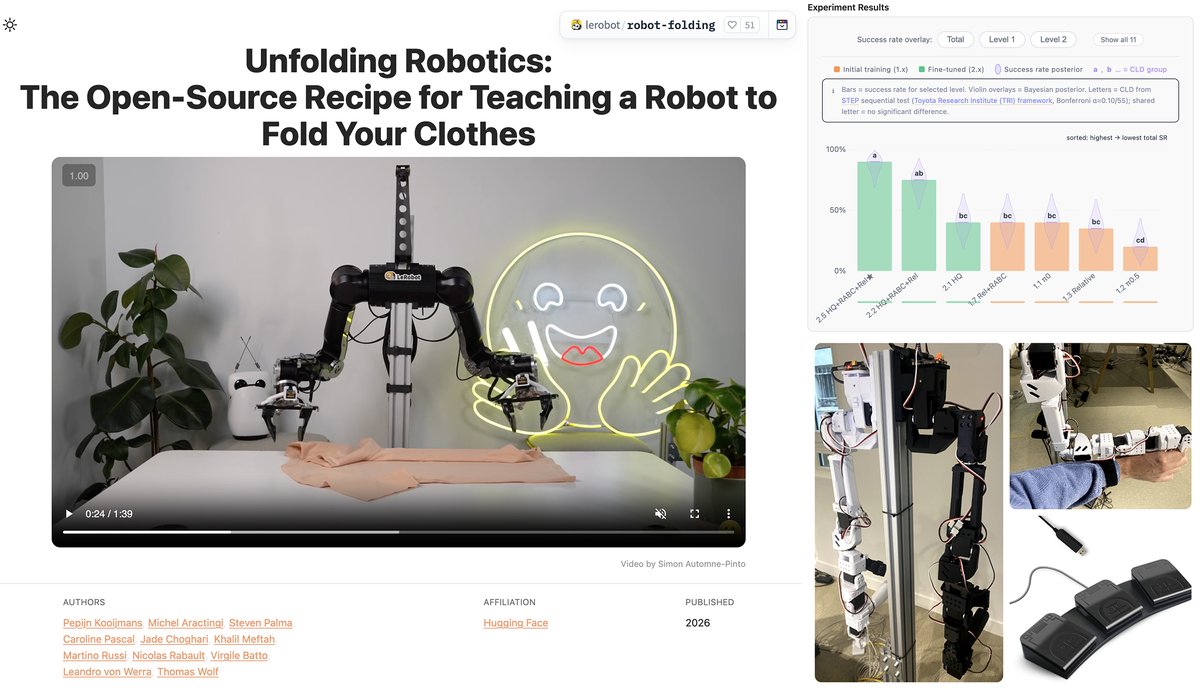

Inspired by the TopReward paper, I made a lil web tool to test these robot manipulation rewards on your own videos. Try: philfung.github.io/rewardscope

Record yourself folding a towel, upload it, and compare:

1. TopReward (this paper)
2. GVL (DeepMind)
3. Brute Force (i.e. at each frame, ask an LLM to reply with a probability)

TopReward (Qwen3VL-8B) holds its own surprisingly well against the others, even if those use ChatGPT! Great work @DJiafei, UW, AllenAI, thanks for pushing @VilleKuosmanen.

A reward model that works, zero-shot, across robots, tasks, and scenes? Introducing Robometer: Scaling general-purpose robotic reward models with 1M+ trajectories. Enables zero-shot: online/offline/model-based RL, data retrieval + IL, automatic failure detection, and more! 🧵 (1/12)