The fact that the Supreme Court Lawmaxxed President Trump has really spiked his cortisol IMO. He’s already mogged them by Tariffmaxxing and adding another 10%. What will happen next?

a$ce

@ace_leverage

building @javsphere and @javliscom on @base

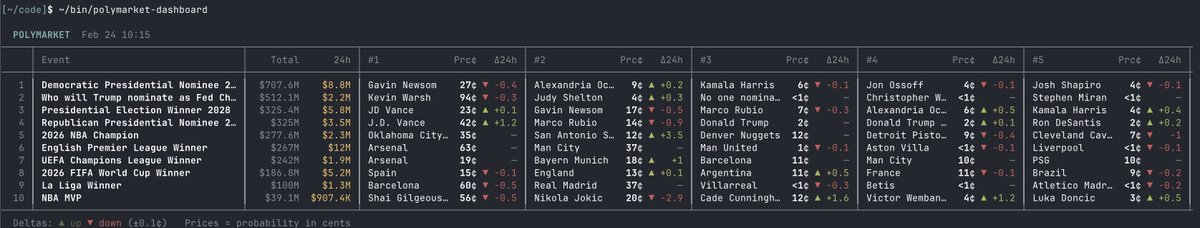

introducing polymarket cli - the fastest way for ai agents to access prediction markets. built with rust. your agent can query markets, place trades, and pull data - all from the terminal. fast, lightweight, no overhead.

Distillation can be legitimate: AI labs use it to create smaller, cheaper models for their customers. But foreign labs that illicitly distill American models can remove safeguards, feeding model capabilities into their own military, intelligence, and surveillance systems.

These attacks are growing in intensity and sophistication. Addressing them will require rapid, coordinated action among industry players, policymakers, and the broader AI community. Read more: anthropic.com/news/detecting…

🚨🇮🇷 Iran just signaled it's prepared to ship out half its highly-enriched uranium, dilute the rest, and join a regional enrichment group, with other countries watching. All to dodge sanctions and any future strikes. This is Tehran blinking first in the nuclear standoff. Source: @sentdefender

Elon talks post-scarcity. Abundance. AI and robots handle everything. The transition won’t be gentle. Truth keeps the system stable. @elonmusk