Ahmed Medhat

322 posts

@amedhat_

Working on multi-agent collaboration. Advocating for coordination tech. Previously, Graph Learning @Meta, NeuroAI @CSHL

Joined October 2024

736 Following · 147 Followers

Pinned Tweet

The Case for Coordination Tech

The world is cracking at the seams under the weight of our inability to collaborate, coordinate, and sustain a shared understanding of reality. The fact that we’re all walking around with thinking machines isolated from each other drives personal, social and political friction, stemming from a lack of understanding of each other's perceptions, intentions, grievances, and aspirations.

Fundamentally, the story of human civilization is one where we’ve managed to establish positive-sum games that enable us to thrive collectively. The problem today is that individualized tech has advanced by light years, while coordination tech remains stagnant. The most legitimate, widely adopted forms of coordination tech are centuries old: democracy, law and currency.

Confronted with world-changing developments such as AI, we are falling into a nation-state race that’s in conflict with what it takes to steer this technology responsibly. With that in mind, the foremost coordination problem to solve at this moment is coordination amongst large groups of people, even in the absence of agents. It should have been solved decades ago, not just now.

@ramez In a sense it boosts it. I suppose that claiming that a product is an existential threat would by necessity create an inflated sense of how intelligent the product actually is. If it’s God, it can definitely do your taxes I guess.

If the heads of the AI labs believe this, it's responsible and ethical of them to talk about it, right?

From a pure business standpoint, talking about risks hasn't seemed to slow AI usage growth.

Noah Smith 🐇🇺🇸🇺🇦🇹🇼@Noahpinion

The heads of the big AI labs continue to insist that their products are going to take all your jobs, and also pose various catastrophic risks

In the ancient world, rare individuals advanced civilization because they could do what institutions and tools could not: preserve knowledge, perform extraordinary feats of calculation, make intuitive leaps that bypassed ordinary reasoning, or attain unusual depths of spiritual realization, mostly through renunciation and silent contemplation (people like Buddha, St Francis, Hallaj, Rabaa & Dhul Nun). Modern technology gradually absorbed the first two capacities into libraries, bureaucracies, and machines. AGI may do the same to the third, commoditizing discovery and creation itself. If that happens, the last scarce frontier may be inward: not intelligence in the instrumental sense, but consciousness, meaning, freedom from suffering, and forms of self-transformation that are not "industrializable". The figures once dismissed as premodern mystics may come to seem less like relics of backwardness and more like explorers of the one domain that remains irreducibly human.

@iamgingertrash "Altman knows OpenAI isn’t worth 800B" Perhaps, but it's on a path to being worth more eventually.

Ahmed Medhat retweeted

@amedhat_ And it’s not like the government hasn’t been threatening military intervention.

@timnitGebru Show me where, in any of his speeches over the last 15 years (including this one), he once requested that Ethiopia be bombed. Urging a diplomatic solution is neither a threat nor a request for a bombing.

The world is just a remix

Prediction: at some point in the near future, we will come to terms with the fact that it could be both true that LLMs (or a future architecture) are fundamentally remixing machines (“stochastic parrots”), and that we are not necessarily materially different from that in a large chunk of our seemingly original creations. There’s no explicit reason why seemingly original thought can’t emerge from a process that’s simply an act of high-dimensional information remixing and compressing. Where else would it come from?! Steve Jobs found inspiration for the idea of humans as tool builders in a study comparing the energy efficiency of a human on a bicycle with that of other animals. August Kekulé dreamt of a snake eating its own tail, from which he inferred the ring structure of the benzene molecule. And by definition, the act of deduction is an act of summarizing large amounts of information into insights. An LLM figuring something out may make that work superfluous, as is claimed below, but perhaps in the grander scheme of things most of our creations are somewhat so.

Rohan Paul@rohanpaul_ai

A new paper published in Nature Astronomy says that if an LLM can easily replicate what counts as your scientific contribution, then the deeper problem is not the model, but the fact that the work was too routine, formulaic, or low-value to begin with. --- nature .com/articles/s41550-026-02837-2

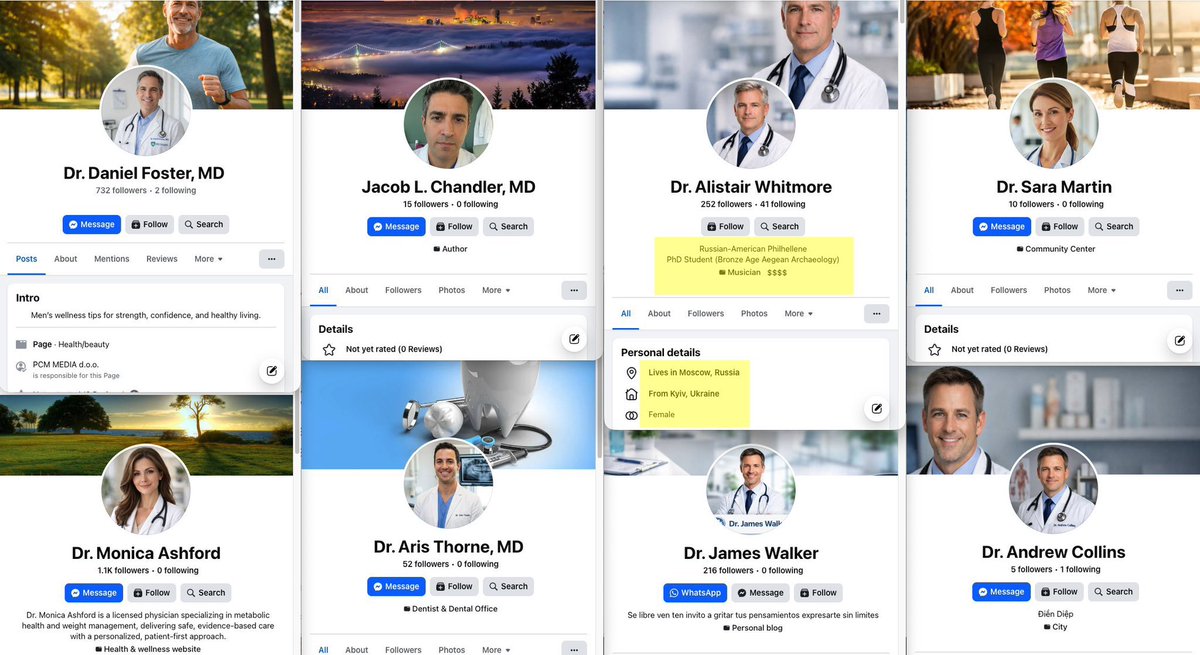

@Brand 800 fake doctor accounts, AI deepfakes, $1.8B revenue, FDA warning, 1.6M records breached. this is healthcare AI with zero guardrails. the industry needs credentialing verification for AI-generated medical marketing. fraud at scale enabled by automation

BREAKING 🚨: This is extremely illegal. This is Matthew Gallagher, who created 800+ Facebook accounts posing as fake doctors to advertise on Facebook, and went on to build a GLP-1 telehealth company with just $20,000, AI, and only one full-time teammate, his brother. The New York Times fabricated their AI startup story.

It generated 401M USD in 2025 and could reach 1.8B USD in 2026. Medvi received FDA Warning Letter #721455 in February 2026 for misbranding violations. Its clinician network, OpenLoop, suffered a data breach in January 2026 that exposed 1.6 million patient records.

Futurism reported that they used AI-generated deepfake before-and-after photos in their marketing. A class action lawsuit was filed in Delaware in November 2025. They are also running 800+ fake doctor accounts on Facebook to sell compounded GLP-1s.

@minilek What does that even mean? Water is a fundamental human right and a public good, not a commodity to be sold for profit.

@geoffreywoo “Information asymmetry” is by definition a function of access, not desk work. Agents don’t grant access.

the venture capital bloodbath is coming and most vcs have zero idea

agents will replace 90% of what associates and principals actually do:

• deal sourcing through network analysis

• due diligence via automated data mining

• portfolio monitoring with real-time metrics

• pattern matching across 10,000x more deals

what exactly are you getting paid for when an agent can analyze every startup in your sector in 3 minutes?

the entire industry is built on information asymmetry that ai just eliminated

most funds will become algorithmic within 24 months

the only vcs who survive are the ones who can actually build companies, not just write checks and send intros

A large chunk of the leverage in investing comes from what exclusive social capital provides: access to both information and financial capital. Analysis and insights, once you discount these two things, are already somewhat commoditized. Desk work getting automated is a less significant source of disruption to venture investing than it will be to most other knowledge-work-heavy domains. And the expansion of that market means that if all desk workers in VC become rubber stampers of agent work, at the extreme, you’d still need more people than you have today.

@henrywinter A manager who doesn’t believe in you can do far worse than time, and Salah’s a sensitive player who’s externally motivated to an extent. Father Time doesn’t show up in 180 days, which is all that separates his best all-round season from this one.

Sad seeing Mo Salah’s decline and drift towards the exit. A great player in his prime, a joy to watch weaving through opposing defences and a magnificent role model. The one opponent he can’t beat is Father Time. Salah also playing in a Liverpool team bereft of belief. #MCILIV

If AGI, then E = I = P.

AGI’s natural trajectory is to bring about a direct equivalence between energy, intelligence, and economic/political power, with a wide span of utopian to dystopian possibilities for how the world will organize itself.

Nations that are able to harness energy, firstly at the fastest rate and secondly at the cheapest rate, would become the de facto superpowers and supersede any that exist today.

There are at least three scenarios that may take shape:

The zero sum scenario

If fossil fuels continue to dominate, the natural incentive structure will be for the most economically/militarily powerful nations to “colonize” the most oil-rich nations’ energy assets. Otherwise, those oil-rich nations would hold the upper hand, far beyond the advantage their energy sources give them today.

The inequality widening scenario

A conscious decision could be made to build data centers that run strictly on renewable energy, with the technology designed that way from the ground up. This would give an edge to the renewable tech leaders of today but perhaps trigger a widening intelligence gap, or an over-dependence on developed nations to run your daily life wherever you are. Think of the analogue of Africa’s daily electricity supply being switched on and off from the US, except that it’s now your decision-making supply.

The deliberate interdependence scenario

The world finds the means to maintain a distributed supply chain stack and preserve the comparative advantage principles that capitalism thrives under. Semiconductor leadership in Taiwan, solar energy in Africa, uranium from Kazakhstan, reasoning models and data centers in the US and China, and learning data from everywhere.

Much as I’d like it, it’s very hard to see the third scenario playing out long into the future, as (a) not every one of these elements will have the same gravitational pull as the others, and (b) our sense of shared interests and common ground has been collapsing, driven by a poor understanding of how to build social platforms that could have moved things in the opposite direction. Coordination tech is far behind intelligence and energy tech today, and that should never have been the case.

———————

A longer argument for why energy, intelligence and power would collapse onto one.

When AGI arrives, two things will likely happen:

An intelligence-function collapse: AGI will gradually collapse the thousands of variables that shape the world's aggregate global intelligence quotient into a single marker, the energy that operates data centers. Units of energy will become synonymous with units of intelligence that fuel AI to recursively self improve.

An economic-power function collapse: This will be a bit slower due to physical constraints, but as AGI makes it to the physical world, this artificially generated aggregate intelligence will in turn be the single engine of economic productivity.

This comes in contrast to the world today, where aggregate global intelligence isn’t only a function of energy production, and where aggregate economic productivity isn’t only a function of aggregate global intelligence.

As a consequence, the nations with the highest annual energy production could also be the nations with the highest annual aggregate intelligence and aggregate economic power.

This imposes a choice on the economic/military superpowers of today: either physically control the world’s energy supply, or surrender your economic and military supremacy to those who own that supply today.

In other words, for the power alpha to remain the productivity alpha, it will have to be the energy alpha around the time that AGI lands.
