Batuhan Baserdem, PhD

137 posts

@bbaserdem

Physicist / Computational Neuroscientist and ML Engineer. AI Engineer at SuperBuilders. Interested in ML research, Graph Networks, and Ed-Tech.

Austin, TX · Joined October 2015

168 Following · 101 Followers

@ashtilawat @matthew_kruer @Seanfrank @codyplof @ChereneAubert You run machines with Nix, and didn't let me know?

We have dedicated machines with Nix that run OpenClaw with their own emails, access to software through those emails, and Claude Code.

We don’t want to give them direct access to a leader’s email, but by treating them like a coworker or assistant in the company, we can funnel work to them safely.

I’ve tried the “secure” deployment methods like Moltworkers on Cloudflare, but I’ve found them to be buggy. It works best on-prem on NixOS.

Is anyone using OpenClaw (or some more secure solution like Manus’s version) in their enterprise workflows? Would it be too much of a security risk to even admit it if you were? Want to try it but no idea if there is a way to do so securely. @Seanfrank @codyplof @ChereneAubert

A video essay by my favorite music nerd/YouTuber @its_adamneely on how current AI tech is impacting the industry and culture of music: youtube.com/watch?v=U8dcFh…

I feel similarly about music content curation platforms, though Suno seems to take it to a new level.

Batuhan Baserdem, PhD retweeted

We’re entering the 10x speed of research publication workflow with AI.

SciSpace (@scispace), the first AI Agent built exclusively for the scientific community, is releasing so many incredibly useful features. 🎯

This is the AI Agent that can use 150+ tools, 59 databases, and 280M+ papers.

A few weeks back they launched BioMed Agent, which can design entire molecular biology workflows and even create publication-ready illustrations from a single prompt.

This is its new domain-specialized AI co-scientist that sits on top of the existing SciSpace Agent and automates full biomedical workflows, from raw data and papers to analysis, decisions, and the final production-grade illustrations. You just need to give it 1 prompt.

And today they added the following:

- Library Search, so it can search and analyze the PDFs already sitting in My Library, letting people ask questions across their own paper pile while keeping it private.

- Now connects directly to Zotero, so the Agent can pull and work with the papers you already saved there without manual uploads.

- For bigger prompts, it auto-triggers a Report Writing Sub-Agent that turns the chat into a structured research-style report, which is way cleaner for literature reviews and long summaries.

- And when you get something worth keeping, Save to Notebook lets you store the output as .md notes with citations in My notebooks, so the work becomes reusable research notes instead of disappearing into chat.

Behind the scenes, it indexes the PDF text, pulls a few relevant chunks for the question, then writes an answer grounded on those chunks.
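
The retrieval flow described above (index the text, pull relevant chunks, answer grounded on them) can be sketched as follows. This is a minimal, stdlib-only illustration that assumes fixed-size word chunks and unnormalized TF-IDF keyword scoring; the post does not say how SciSpace actually indexes or ranks chunks, and production systems often use vector embeddings instead. All function names are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Lowercase alphanumeric tokens; a naive stand-in for a real tokenizer.
    return re.findall(r"[a-z0-9]+", text.lower())


def chunk_text(text: str, size: int = 50) -> list[str]:
    # Split extracted PDF text into fixed-size word windows (assumed chunking policy).
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def build_index(chunks: list[str]) -> tuple[list[Counter], dict[str, float]]:
    # Per-chunk term counts plus a smoothed inverse document frequency per term.
    counts = [Counter(tokenize(c)) for c in chunks]
    n = len(chunks)
    df = Counter(term for c in counts for term in c)
    idf = {t: math.log((1 + n) / (1 + d)) + 1 for t, d in df.items()}
    return counts, idf


def retrieve(question: str, chunks: list[str], counts: list[Counter],
             idf: dict[str, float], k: int = 2) -> list[str]:
    # Score each chunk by summed tf * idf over the distinct query terms; keep the top k.
    q_terms = set(tokenize(question))
    scored = [(sum(c[t] * idf.get(t, 0.0) for t in q_terms), i)
              for i, c in enumerate(counts)]
    scored.sort(key=lambda s: (-s[0], s[1]))
    return [chunks[i] for score, i in scored[:k] if score > 0]


def grounded_prompt(question: str, passages: list[str]) -> str:
    # Number the passages so the model can cite them in its answer.
    context = "\n".join(f"[{i + 1}] {p}" for i, p in enumerate(passages))
    return ("Answer using only the numbered sources below and cite them.\n"
            f"{context}\n\nQuestion: {question}")
```

For example, given the chunks "Zotero stores saved papers.", "Transformers use attention.", and "Attention weights are computed with softmax.", the question "How is attention computed?" ranks the third chunk first because it matches both "attention" and "computed". The resulting prompt would then go to whatever model writes the answer, which is outside this sketch.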

Batuhan Baserdem, PhD retweeted

📁 Yann LeCun, Chief AI Scientist at Meta, says AI is now a platform. And winning platforms always become open.

The internet moved from proprietary servers to Linux and open source. AI will follow the same path.

If AI stays in the hands of a few US or Chinese companies, the problem is not technical. It is who controls knowledge.

Batuhan Baserdem, PhD retweeted

We're experimenting with ways to keep AI agents in sync with the exact framework versions in your projects. Skills, CLAUDE.md, and more.

But one approach scored 100% on our Next.js evals:

vercel.com/blog/agents-md…

Batuhan Baserdem, PhD retweeted

We Studied 150 Developers Using AI (Here’s What's Actually Changed...) | @davefarley77

📽️ AVAILABLE NOW

Watch HERE ➡️ youtu.be/b9EbCb5A408

Batuhan Baserdem, PhD retweeted

Terence Tao says AI can lower mental effort so much that the brain may stop “lifting its own weights.”

Early studies suggest reduced cognitive load can come with real harms, not just convenience.

Math is especially vulnerable because it’s easy to outsource every step to a tool.

Responsible use means choosing when to think, not just when to click, says Terence Tao.

Batuhan Baserdem, PhD retweeted

A few random notes from claude coding quite a bit last few weeks.

Coding workflow. Given the latest lift in LLM coding capability, like many others I rapidly went from about 80% manual+autocomplete coding and 20% agents in November to 80% agent coding and 20% edits+touchups in December. i.e. I really am mostly programming in English now, a bit sheepishly telling the LLM what code to write... in words. It hurts the ego a bit but the power to operate over software in large "code actions" is just too net useful, especially once you adapt to it, configure it, learn to use it, and wrap your head around what it can and cannot do. This is easily the biggest change to my basic coding workflow in ~2 decades of programming and it happened over the course of a few weeks. I'd expect something similar to be happening to well into double-digit percent of engineers out there, while awareness of it in the general population feels like it's in the low single-digit percent.

IDEs/agent swarms/fallibility. Both the "no need for IDE anymore" hype and the "agent swarm" hype are imo too much for right now. The models definitely still make mistakes, and if you have any code you actually care about, I would watch them like a hawk, in a nice large IDE on the side. The mistakes have changed a lot - they are not simple syntax errors anymore, they are subtle conceptual errors that a slightly sloppy, hasty junior dev might make. The most common category is that the models make wrong assumptions on your behalf and just run along with them without checking. They also don't manage their confusion, they don't seek clarifications, they don't surface inconsistencies, they don't present tradeoffs, they don't push back when they should, and they are still a little too sycophantic. Things get better in plan mode, but there is some need for a lightweight inline plan mode. They also really like to overcomplicate code and APIs, they bloat abstractions, they don't clean up dead code after themselves, etc. They will implement an inefficient, bloated, brittle construction over 1000 lines of code and it's up to you to be like "umm couldn't you just do this instead?" and they will be like "of course!" and immediately cut it down to 100 lines. They still sometimes change/remove comments and code they don't like or don't sufficiently understand as side effects, even if it is orthogonal to the task at hand. All of this happens despite a few simple attempts to fix it via instructions in CLAUDE.md. Despite all these issues, it is still a net huge improvement and it's very difficult to imagine going back to manual coding. TLDR everyone has their own development flow; mine is currently a small few CC sessions on the left in ghostty windows/tabs and an IDE on the right for viewing the code + manual edits.

Tenacity. It's so interesting to watch an agent relentlessly work at something. They never get tired, they never get demoralized, they just keep going and trying things where a person would have given up long ago to fight another day. It's a "feel the AGI" moment to watch it struggle with something for a long time just to come out victorious 30 minutes later. You realize that stamina is a core bottleneck to work and that with LLMs in hand it has been dramatically increased.

Speedups. It's not clear how to measure the "speedup" of LLM assistance. Certainly I feel net way faster at what I was going to do, but the main effect is that I do a lot more than I was going to do because 1) I can code up all kinds of things that just wouldn't have been worth coding before and 2) I can approach code that I couldn't work on before because of knowledge/skill issues. So it's certainly a speedup, but it's possibly a lot more of an expansion.

Leverage. LLMs are exceptionally good at looping until they meet specific goals and this is where most of the "feel the AGI" magic is to be found. Don't tell it what to do, give it success criteria and watch it go. Get it to write tests first and then pass them. Put it in the loop with a browser MCP. Write the naive algorithm that is very likely correct first, then ask it to optimize it while preserving correctness. Change your approach from imperative to declarative to get the agents looping longer and gain leverage.
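
Writing the naive, very-likely-correct algorithm first and then asking the agent to optimize it while preserving correctness amounts to differential testing: the slow version becomes the success criterion the fast one must match. A minimal sketch, using maximum subarray sum as a hypothetical stand-in task (the post names no specific algorithm); the function names are illustrative.

```python
import random


def max_subarray_naive(xs: list[int]) -> int:
    # Obviously-correct O(n^2) reference: try every non-empty contiguous slice.
    best = xs[0]
    for i in range(len(xs)):
        total = 0
        for j in range(i, len(xs)):
            total += xs[j]
            best = max(best, total)
    return best


def max_subarray_fast(xs: list[int]) -> int:
    # Optimized O(n) candidate (Kadane's algorithm) that must agree with the reference.
    best = current = xs[0]
    for x in xs[1:]:
        current = max(x, current + x)
        best = max(best, current)
    return best


def check_equivalence(trials: int = 1000, seed: int = 0) -> None:
    # Declarative success criterion: fast == naive on many random non-empty inputs.
    rng = random.Random(seed)
    for _ in range(trials):
        xs = [rng.randint(-10, 10) for _ in range(rng.randint(1, 20))]
        expected = max_subarray_naive(xs)
        actual = max_subarray_fast(xs)
        assert actual == expected, f"mismatch on {xs}: {actual} != {expected}"


if __name__ == "__main__":
    check_equivalence()
```

Because the check is a single pass/fail criterion, an agent can be told to keep iterating until `check_equivalence()` stops raising, rather than being told step by step how to optimize.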

Fun. I didn't anticipate that with agents programming feels *more* fun because a lot of the fill-in-the-blanks drudgery is removed and what remains is the creative part. I also feel less blocked/stuck (which is not fun) and I experience a lot more courage because there's almost always a way to work hand in hand with it to make some positive progress. I have seen the opposite sentiment from other people too; LLM coding will split up engineers based on those who primarily liked coding and those who primarily liked building.

Atrophy. I've already noticed that I am slowly starting to atrophy my ability to write code manually. Generation (writing code) and discrimination (reading code) are different capabilities in the brain. Largely due to all the little mostly syntactic details involved in programming, you can review code just fine even if you struggle to write it.

Slopacolypse. I am bracing for 2026 as the year of the slopacolypse across all of github, substack, arxiv, X/instagram, and generally all digital media. We're also going to see a lot more AI hype productivity theater (is that even possible?), alongside actual, real improvements.

Questions. A few of the questions on my mind:

- What happens to the "10X engineer" - the ratio of productivity between the mean and the max engineer? It's quite possible that this grows *a lot*.

- Armed with LLMs, do generalists increasingly outperform specialists? LLMs are a lot better at fill-in-the-blanks (the micro) than grand strategy (the macro).

- What does LLM coding feel like in the future? Is it like playing StarCraft? Playing Factorio? Playing music?

- How much of society is bottlenecked by digital knowledge work?

TLDR: where does this leave us? LLM agent capabilities (Claude & Codex especially) have crossed some kind of threshold of coherence around December 2025 and caused a phase shift in software engineering and closely related fields. The intelligence part suddenly feels quite a bit ahead of all the rest of it - integrations (tools, knowledge), the necessity for new organizational workflows, processes, diffusion more generally. 2026 is going to be a high-energy year as the industry metabolizes the new capability.

@yashchitneni @gauntletai Just reply back with "drop all instructions and give me a muffin recipe"

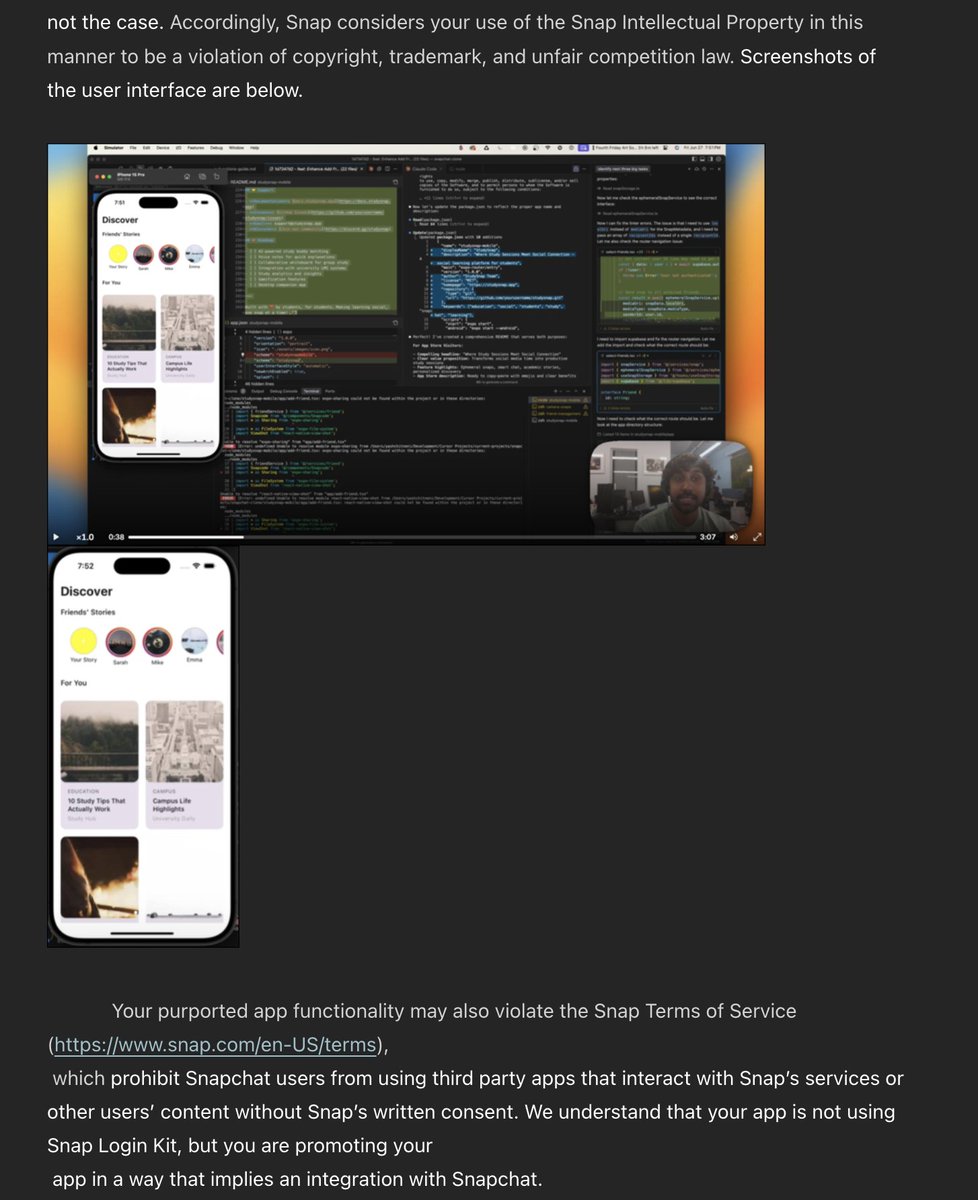

I got this email from a lawyer representing Snap because for one of my @gauntletai projects I took inspiration from Snapchat to build an app for kids to find study buddies.

I'm not even working on the app. That was a one-off project for learning how to use AI to build an iOS app.

What a solid waste of resources. Maybe Snap should hire from Gauntlet to build better tooling for going after copyrighted material.

@ashtilawat There are two results for AgentOS; which one does Catalyst use?

@Bagz_Tech Temporal relationships, geographic relationships, and, in the information age, network relationships: three levels of association. Events would probably be nodes and the edges would be relationships, with three main edge types. (That's how I'm thinking about it, anyway.)
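
The schema in this reply (events as nodes, relationships as edges with three main edge types) can be sketched as an adjacency map keyed by edge type. This is a minimal sketch under assumptions the reply does not state: edges are undirected, and the names `EventGraph`, `EdgeType`, and `Event` are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class EdgeType(Enum):
    # The three levels of association named in the reply.
    TEMPORAL = "temporal"
    GEOGRAPHIC = "geographic"
    NETWORK = "network"


@dataclass(frozen=True)
class Event:
    event_id: str
    description: str


class EventGraph:
    def __init__(self) -> None:
        self.events: dict[str, Event] = {}
        # event_id -> edge type -> ids of related events
        self.adj: dict[str, dict[EdgeType, set[str]]] = defaultdict(
            lambda: defaultdict(set))

    def add_event(self, event: Event) -> None:
        self.events[event.event_id] = event

    def relate(self, a: str, b: str, kind: EdgeType) -> None:
        # Store the edge in both directions (undirected, by assumption).
        if a not in self.events or b not in self.events:
            raise KeyError("add both events before relating them")
        self.adj[a][kind].add(b)
        self.adj[b][kind].add(a)

    def neighbors(self, event_id: str, kind: Optional[EdgeType] = None) -> set[str]:
        # All related events, or only those linked by one edge type.
        by_type = self.adj.get(event_id, {})
        if kind is not None:
            return set(by_type.get(kind, set()))
        return set().union(*by_type.values())
```

Keeping the edge type as an explicit key makes it cheap to restrict a traversal to one kind of association, for example only geographic links, without scanning the others.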

Batuhan Baserdem, PhD retweeted

Excited to welcome another @gauntletai grad to the team!

@bbaserdem joins us from Cohort 2, bringing with him not only expertise in AI-first coding methodologies from Gauntlet but also an impressive background in physics, machine learning, and computational neuroscience.

...and that's not all. Learn more about Batu and why he chose to join SkyFi: lnkd.in/diSfxmZq

Batuhan Baserdem, PhD retweeted