BubblSpace

@bubblspace

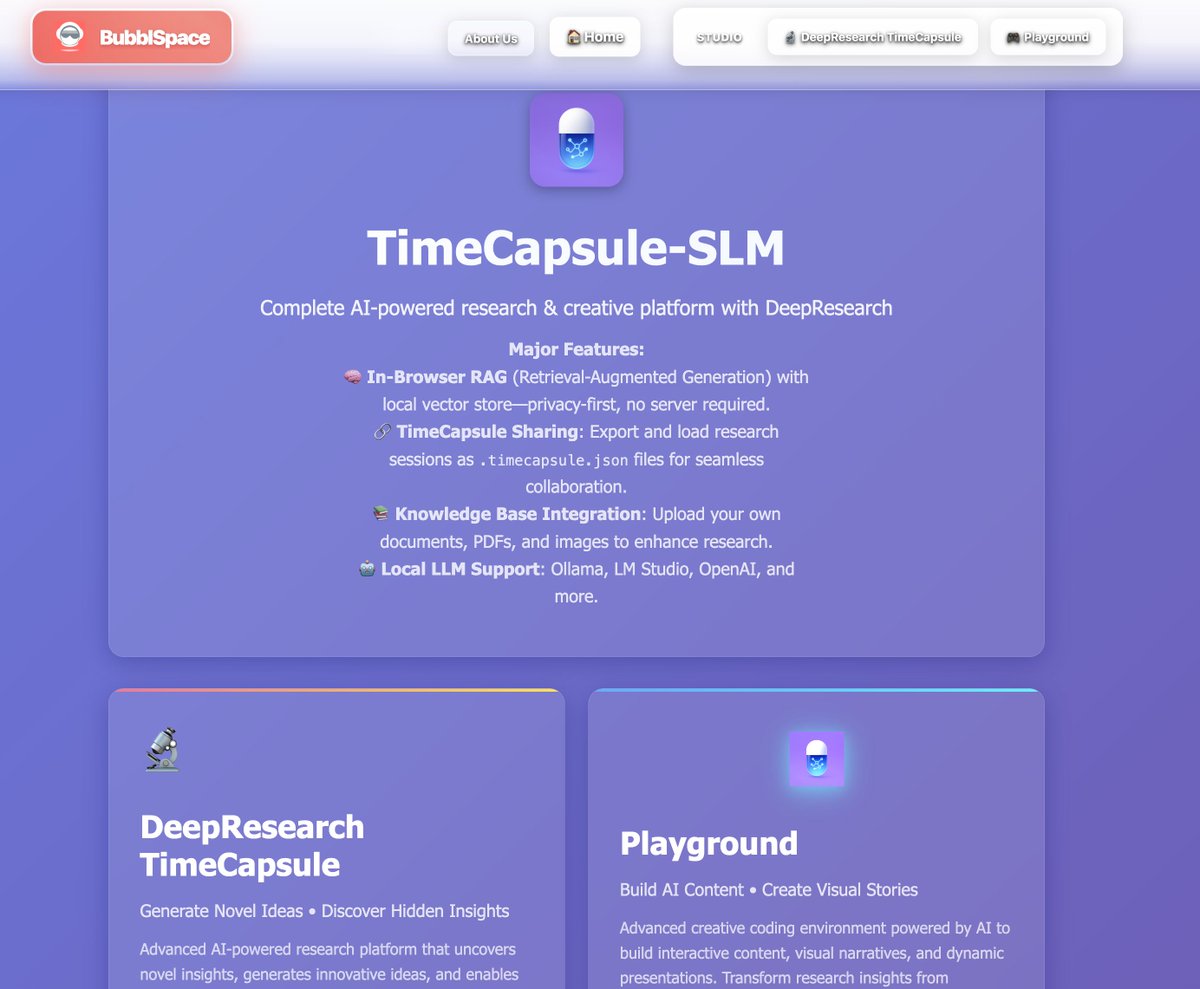

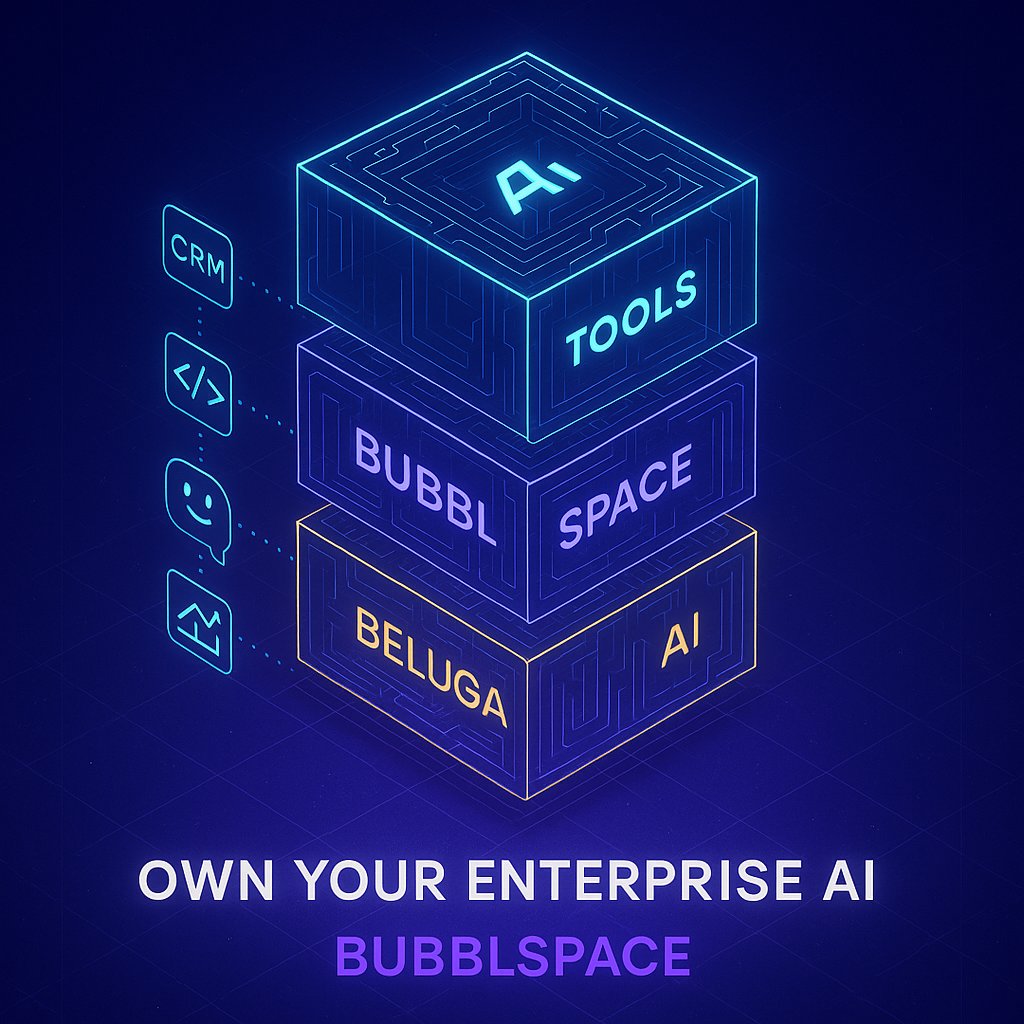

BubblSpace: Create bubbl for your Open AI Agents. Build, customise, collaborate, innovate using Open AI agents called bubbl

Introducing Cowork: Claude Code for the rest of your work. Cowork lets you complete non-technical tasks much like how developers use Claude Code.

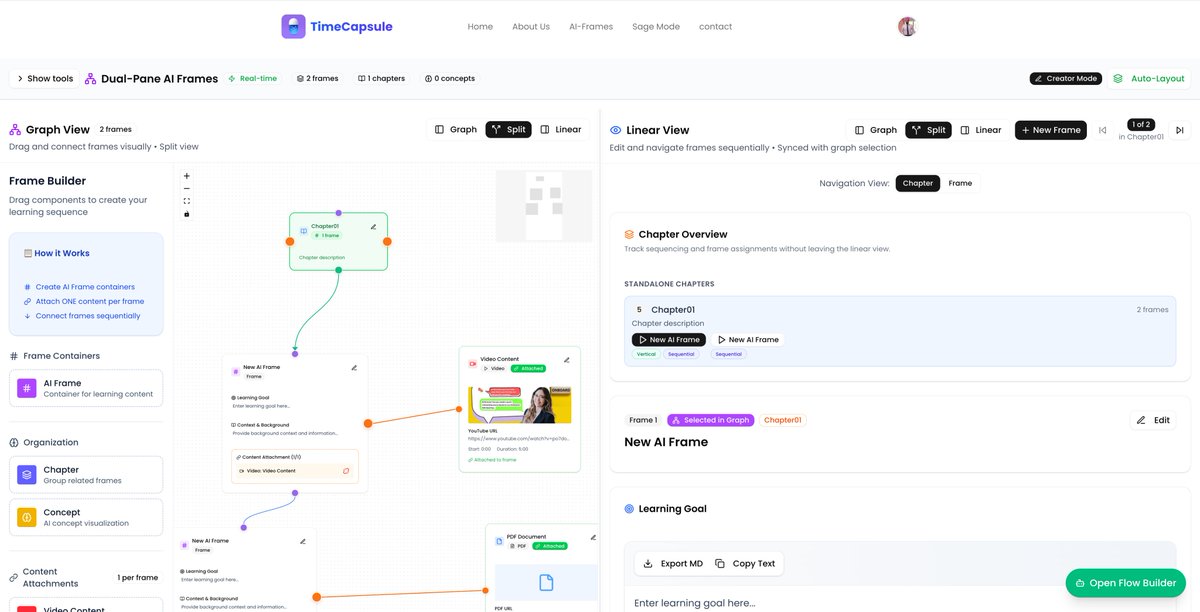

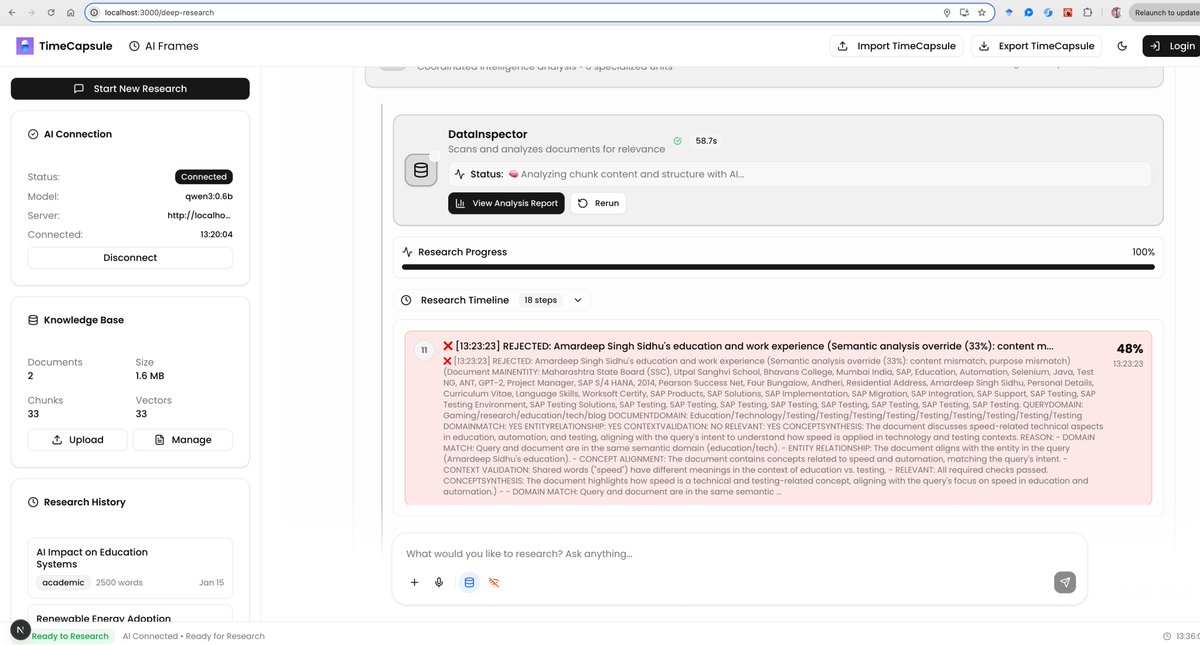

Use your favourite AI coding agent to create AI frames.
What if you could connect everything (your PDFs, videos, notes, code, and research) into one seamless flow that actually makes sense?
AI-Frames: Open Source Knowledge-to-Action Platform: timecapsule.bubblspace.com
✨ Annotate • Learn • Research • Build • Automate
One prompt → AI builds your entire learning path with:
• Citations from your Knowledge Base
• Mastery checks & quizzes
• Step-by-step progression
• Vision or text modes
From scattered notes to structured knowledge. Instantly.
Watch how it works 👇 Video shows how to build with Cursor & Codex @bubblspace @AIEdXLearn

looks good ux/ui wise but the competitor products in all the categories are more vertically integrated (sauce: i work/review these). they carry more domain specific context engineering powered via domain experts. will definitely say this is a nice meeting assistant though.

So about a month ago, Percy posted a version of this plot of our Marin 32B pretraining run. We got a lot of feedback, both public and private, that the spikes were bad. (This is a thread about how we fixed the spikes. Bear with me.)