Nice, yeah, I'm in a similar boat. For the pre-push hooks, are you having an instance of Codex review the change and "approve" it (presumably with exit code 0) before the push goes through? I've been thinking about this because "push" is also the signal that generally triggers the other review bots in CI.
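For concreteness, here's roughly how I picture that gate as a Python pre-push hook. It's a minimal sketch: `REVIEW_CMD` is a hypothetical placeholder for whatever headless reviewer you actually call (I'm not assuming any particular Codex CLI flags). Git runs `.git/hooks/pre-push` with the remote name and URL as arguments and feeds one `<local ref> <local sha> <remote ref> <remote sha>` line per pushed ref on stdin. If the hook exits non-zero, the push is aborted, so "approve" really is just exit code 0.

```python
#!/usr/bin/env python3
"""Sketch of a pre-push gate: run a reviewer per pushed ref, block the push on failure."""
import subprocess
import sys

# Hypothetical placeholder: replace with your headless reviewer's real command.
# The pushed revision range is appended as the last argument; exit 0 means "approve".
REVIEW_CMD = ["echo", "reviewing"]


def refs_to_review(stdin_lines):
    """Parse git's pre-push stdin into (local_ref, local_sha, remote_sha) tuples."""
    refs = []
    for line in stdin_lines:
        parts = line.split()
        if len(parts) != 4:
            continue  # ignore blank or malformed lines
        local_ref, local_sha, _remote_ref, remote_sha = parts
        if set(local_sha) == {"0"}:
            continue  # all-zero local sha means a branch deletion: nothing to review
        refs.append((local_ref, local_sha, remote_sha))
    return refs


def run_gate(refs, review_cmd):
    """Return 0 if every ref passes review, else the first non-zero exit code."""
    for local_ref, local_sha, remote_sha in refs:
        if set(remote_sha) == {"0"}:
            rev_range = local_sha  # new remote branch: no known base to diff against
        else:
            rev_range = f"{remote_sha}..{local_sha}"
        result = subprocess.run([*review_cmd, rev_range])
        if result.returncode != 0:
            print(f"pre-push: review rejected {local_ref}", file=sys.stderr)
            return result.returncode
    return 0


# git invokes the hook as: pre-push <remote-name> <remote-url>
if __name__ == "__main__" and len(sys.argv) == 3:
    sys.exit(run_gate(refs_to_review(sys.stdin), REVIEW_CMD))
```

Save it as `.git/hooks/pre-push` and make it executable. The `len(sys.argv) == 3` check only keeps the file importable outside of git, e.g. for testing the parsing.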

this got me thinking / writing out the full lifecycle. curious if this lines up with your thinking/experience:

1. write code

2. run checks (e.g. unit tests; run by the agent based on AGENTS.md and known best practices)

3. create commit(s) (assume commits may just document incremental progress rather than a complete feature, so testing probably shouldn't run here?)

4. local pre-commit hooks (short-running; linting, formatting, or other static checks)

5. loop on 1-4 until the feature is complete (note: models often prefer to make all their commits at once at the end of the work, which changes when pre-commit hooks, if any, actually run)

6. local pre-push hooks (long-running; in your case, this triggers a headless Codex review; we do the more expensive linting/formatting here)

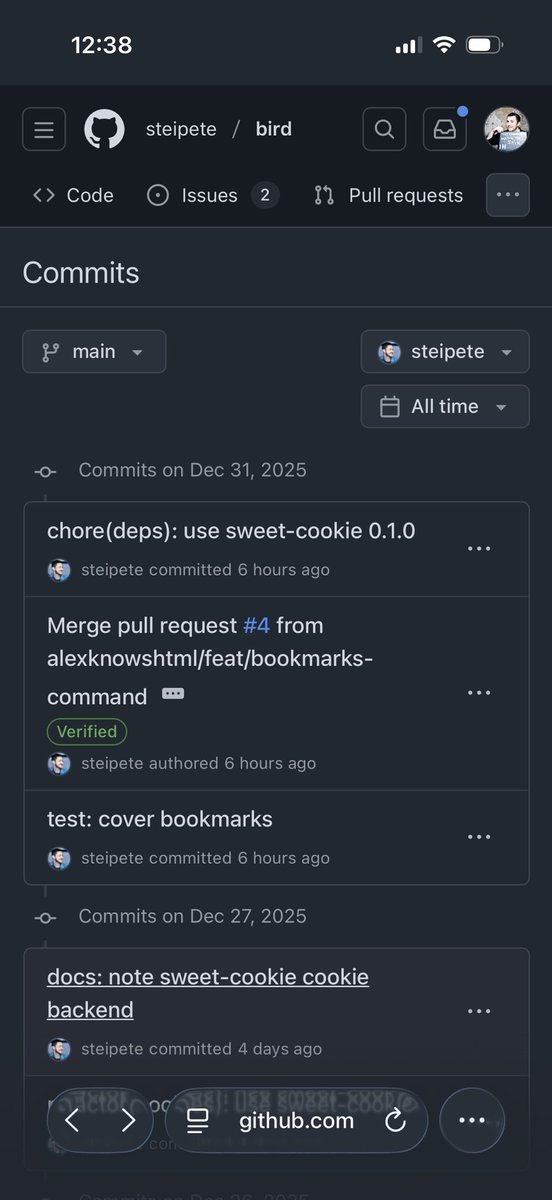

7. CI code review & testing (long-running; push triggers the "review swarm," which culminates in GitHub PR comments)

8. wait (until at least one bot has completed its review; perhaps until all of them are done?)

9. loop on 1-8 (if review bot feedback is substantive, run the whole loop again)
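Here's the same lifecycle as a toy loop in Python, mostly to make the ordering explicit: cheap local checks gate the pre-push checks, which gate the expensive remote review, and any failure sends you back to step 1. Every check, name, and the `Lifecycle` class itself are hypothetical stand-ins; the point is only that a cheap failure never spends CI/review compute.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

Check = Callable[[], bool]  # returns True when the check passes


@dataclass
class Lifecycle:
    """Toy model of steps 1-9; every check here is a hypothetical stand-in."""
    local_checks: list[tuple[str, Check]]     # steps 2 and 4: tests, pre-commit hooks
    pre_push_checks: list[tuple[str, Check]]  # step 6: slower lint, headless review
    remote_review: Callable[[], list[str]]    # steps 7-8: substantive bot/CI findings
    max_rounds: int = 5                       # guard against looping forever
    log: list[str] = field(default_factory=list)

    @staticmethod
    def _first_failure(checks: list[tuple[str, Check]]) -> Optional[str]:
        for name, check in checks:
            if not check():
                return name
        return None

    def run(self, write_code: Callable[[list[str]], None]) -> bool:
        feedback: list[str] = []
        for round_no in range(1, self.max_rounds + 1):
            write_code(feedback)  # step 1, seeded with feedback from the last round
            # Short-circuits: pre-push checks only run once local checks pass.
            failed = (self._first_failure(self.local_checks)
                      or self._first_failure(self.pre_push_checks))
            if failed:
                feedback = [f"{failed} failed"]
                self.log.append(f"round {round_no}: {failed} failed")
                continue  # cheap failure: loop back before paying for CI/review
            feedback = self.remote_review()  # push: the expensive "council" review
            if not feedback:
                self.log.append(f"round {round_no}: clean")
                return True
            self.log.append(f"round {round_no}: {len(feedback)} finding(s)")
        return False
```

The `max_rounds` cap isn't in the lifecycle above; it's just something that seems worth having so a disagreement between the agent and a review bot can't loop indefinitely.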

In this model you have key breakpoints at:

"commit" (documenting some progress)

"push" (trigger a "full review"; perhaps the pre-push hook is more of a "sanity check" before investing the compute in a complete review by the council of review bots)

"review" (one or more signals of PR quality returned by an external system, either a review bot or a CI process that runs some testing loop. FWIW, conceptually I think you can treat CI testing and review bots as both delivering feedback on a change; CI test results take more "work" to turn into a concrete code change, but in both cases thinking, codebase exploration, inference, etc. are required to deliver an improvement)
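A rough sketch of that framing, assuming both CI results and bot reviews can be normalized into one hypothetical `Feedback` record. `ready_to_act` turns the open question in step 8 (wait for the first reviewer vs. all of them) into a policy switch, and `needs_another_loop` treats a failing test and a substantive review comment identically. The reporter names are made up.

```python
from dataclasses import dataclass
from enum import Enum


class Source(Enum):
    REVIEW_BOT = "review_bot"
    CI_TEST = "ci_test"


@dataclass(frozen=True)
class Feedback:
    source: Source
    reporter: str      # hypothetical name of the bot or CI job
    substantive: bool  # True if it should trigger another pass through the loop
    summary: str = ""


def ready_to_act(expected: set[str], received: list[Feedback], policy: str = "any") -> bool:
    """Step 8: stop waiting after the first expected reporter ('any') or all of them ('all')."""
    reported = {fb.reporter for fb in received} & expected
    if policy == "any":
        return bool(reported)
    if policy == "all":
        return reported == expected
    raise ValueError(f"unknown policy: {policy!r}")


def needs_another_loop(received: list[Feedback]) -> bool:
    """Step 9: CI failures and review comments both count as feedback."""
    return any(fb.substantive for fb in received)
```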

where this approach might create challenges is with incremental/WIP pushes: "saving progress" and showing proof-of-work to a team of humans and agents. for example, we encourage devs to sync their work frequently b/c active branches, PRs, etc. get folded into a daily "brief" that summarizes work across the entire company.

(we break some modules into separate repos; still on the fence about this architecture vs. monorepo for agent performance, but so far just having a bunch of cloned projects in a folder has worked well enough)
