David Page

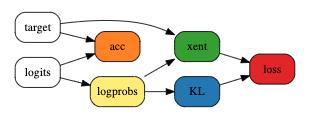

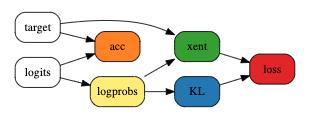

Microsoft and CMU researchers begin to unravel 3 mysteries in deep learning related to ensemble, knowledge distillation & self-distillation. Discover how their work leads to the first theoretical proof with empirical evidence for ensemble in deep learning: aka.ms/AAavp1k

I think it's out: github.com/nanoporetech/b…

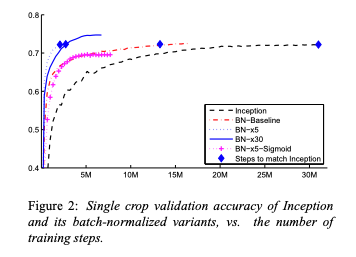

Some base-caller updates coming within 5-10 days. 98%+ modal and many reads above Q20. Note the X-axis. Generally, a significant positive uplift in consensus and mutation detection. Slightly slower speed in the research version.

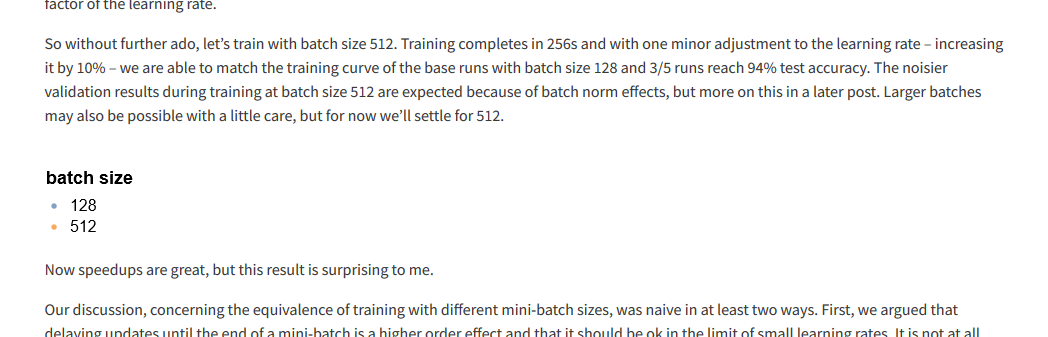

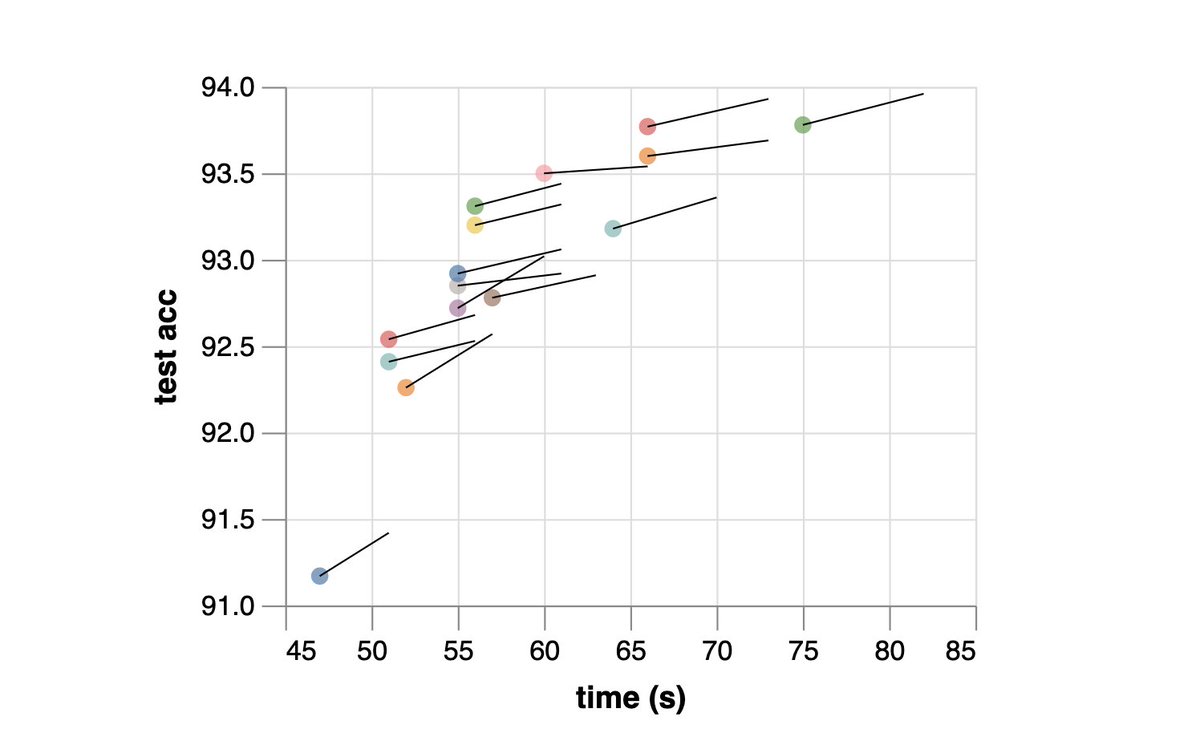

Not everyone can afford to train huge neural models. So, we typically *reduce* model size to train/test faster. However, you should actually *increase* model size to speed up training and inference for transformers. Why? [1/6] 👇 bair.berkeley.edu/blog/2020/03/0… arxiv.org/abs/2002.11794

Introducing SimCLR: a Simple framework for Contrastive Learning of Representations. SimCLR advances previous SOTA in self-supervised and semi-supervised learning on ImageNet by 7-10% (see next). arxiv.org/abs/2002.05709 Joint work with @skornblith @mo_norouzi @geoffreyhinton.