Fern

2.2K posts

@hi_tysam

Neural network speedrunner and community-funded open source researcher. Set the CIFAR-10 record several times. Say hi!

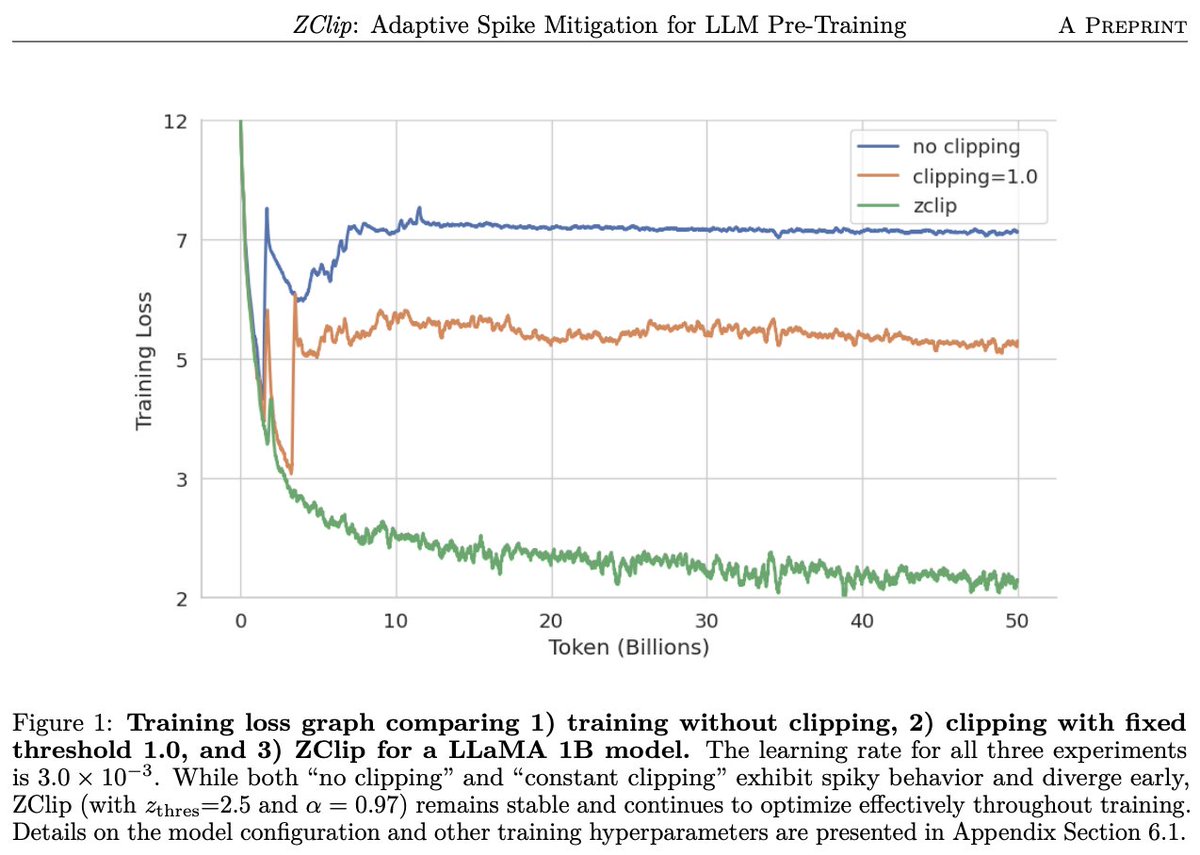

@kalomaze No, you are mixing things up! What's usually clipped is the gradient norm. What the formula above computes (approximates) is the norm of the parameter update (before lr and wd). These are very different things.
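To make the distinction concrete, here is a minimal sketch in plain Python. It assumes an Adam-style optimizer, which the post does not name, and the helpers `l2_norm`, `clip_by_norm`, and `adam_update` are hypothetical names written for this illustration. It is not the formula from the image. It only shows that the gradient norm and the norm of the pre-lr, pre-wd update can differ by orders of magnitude, and that clipping the first barely moves the second.

```python
import math

def l2_norm(xs):
    """Euclidean norm of a flat list of floats."""
    return math.sqrt(sum(x * x for x in xs))

def clip_by_norm(grads, max_norm):
    """Standard gradient-norm clipping: rescale only if the norm exceeds max_norm."""
    norm = l2_norm(grads)
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [g * scale for g in grads]

def adam_update(grads, m, v, step, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam-style step (assumed optimizer). Returns the update BEFORE
    learning rate and weight decay are applied, plus the new moments."""
    m = [beta1 * mi + (1 - beta1) * g for mi, g in zip(m, grads)]
    v = [beta2 * vi + (1 - beta2) * g * g for vi, g in zip(v, grads)]
    m_hat = [mi / (1 - beta1 ** step) for mi in m]  # bias correction
    v_hat = [vi / (1 - beta2 ** step) for vi in v]
    update = [mh / (math.sqrt(vh) + eps) for mh, vh in zip(m_hat, v_hat)]
    return update, m, v

# Made-up gradient values with very different magnitudes.
grads = [100.0, -0.001, 3.0, -40.0]
zeros = [0.0] * len(grads)

grad_norm = l2_norm(grads)
update, _, _ = adam_update(grads, zeros, zeros, step=1)
update_norm = l2_norm(update)

# Clip the gradient norm to 1.0, then take the same first step.
clipped = clip_by_norm(grads, max_norm=1.0)
clipped_update, _, _ = adam_update(clipped, zeros, zeros, step=1)
clipped_update_norm = l2_norm(clipped_update)

print(f"gradient norm:             {grad_norm:.3f}")
print(f"update norm:               {update_norm:.3f}")
print(f"update norm after clipping: {clipped_update_norm:.3f}")
```

On the first step each update entry is roughly the sign of its gradient, so the update norm lands near the square root of the parameter count regardless of how large the gradient was. That is why clipping the gradient norm says little about the size of the parameter update in this setting.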

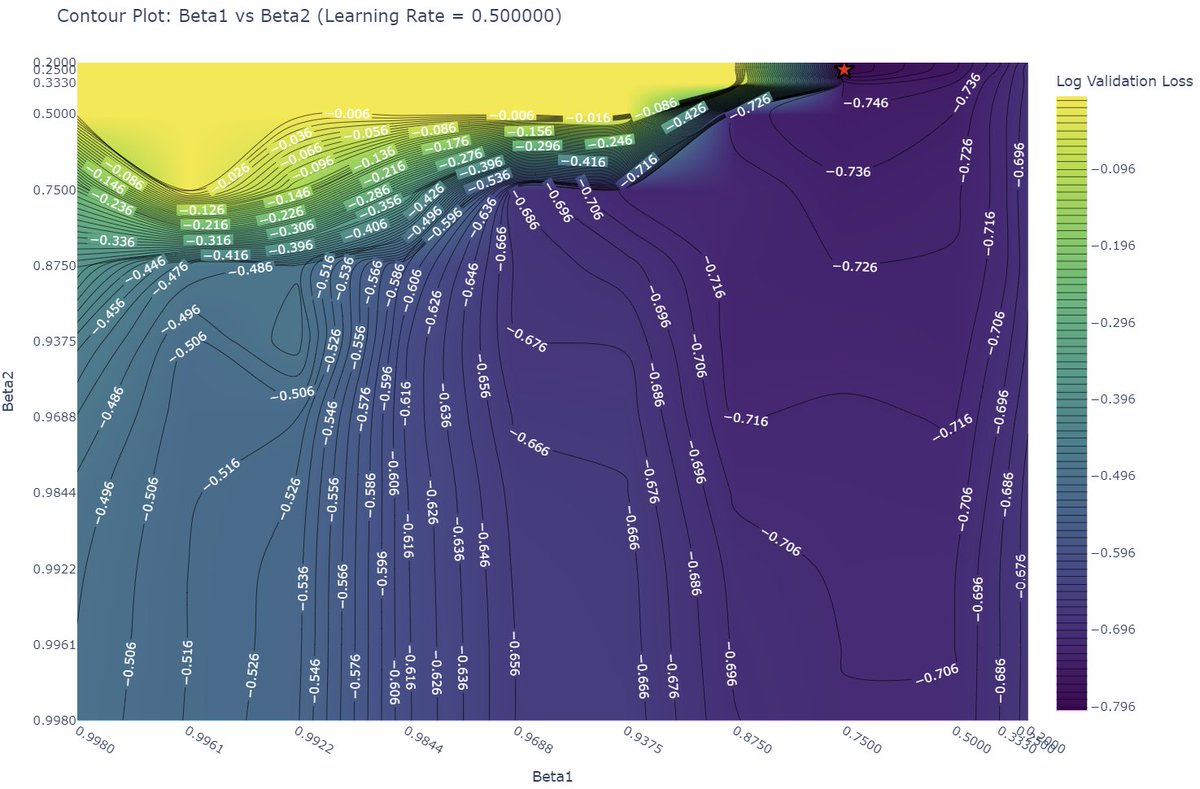

I swept batch size vs beta1 vs beta2 vs lr and plotted on optimal lr and batch size: And what the actual fuck man....

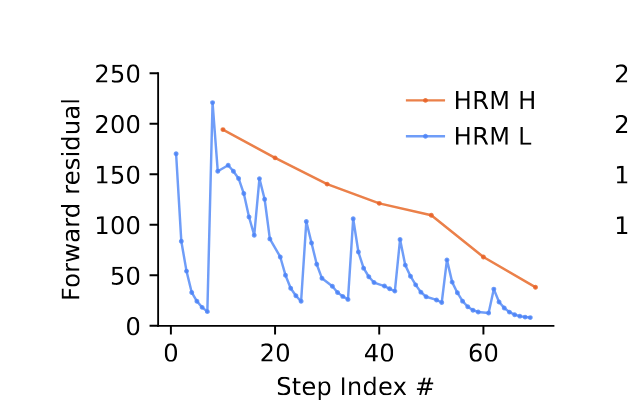

🚀Introducing Hierarchical Reasoning Model🧠🤖 Inspired by the brain's hierarchical processing, HRM delivers unprecedented reasoning power on complex tasks like ARC-AGI and expert-level Sudoku using just 1k examples, no pretraining or CoT! Unlock the next AI breakthrough with neuroscience. 🌟 📄Paper: arxiv.org/abs/2506.21734 💻Code: github.com/sapientinc/HRM

btw, one flaw of HRMs is that the readout q_head will either cause representational collapse, or be ignored, or something in between. What you really should be doing instead is curve-fitting on the abs of the cosine distance of successive vectors to determine halting, or something similar.
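A rough sketch of the signal being described, in plain Python. The post does not say which curve to fit or how to turn it into a halting decision, so this only computes the absolute cosine distance between successive state vectors and applies a fixed threshold as a stand-in. The function names, the threshold value, and the toy states are all assumptions made for illustration, not part of HRM.

```python
import math

def cosine_distance(a, b):
    """1 - cosine similarity; assumes both vectors are nonzero."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)

def successive_distances(states):
    """Absolute cosine distance between each state and the next one."""
    return [abs(cosine_distance(p, q)) for p, q in zip(states, states[1:])]

def halting_step(states, threshold=1e-3):
    """Hypothetical rule: halt at the first state whose distance to its
    predecessor drops below `threshold`. Returns None if it never does."""
    for i, d in enumerate(successive_distances(states), start=1):
        if d < threshold:
            return i
    return None

# Toy trajectory of hidden states that settles toward [0, 1].
states = [[1.0, 0.0], [0.6, 0.8], [0.1, 1.0], [0.01, 1.0], [0.0, 1.0]]

distances = successive_distances(states)
step = halting_step(states)
print("distances:", [round(d, 5) for d in distances])
print("halt at state index:", step)
```

A fitted curve over these distances (for example, a decay fit) could replace the fixed threshold. That choice is left open here because the post does not specify it.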

You don't need to backprop through discrete samples to learn an effective network. Introducing an architecture that achieves an impressive 93.11% on CIFAR10 just by predicting its own future state. This intends to be one key step in replacing RL w/ cross-entropy objectives. 🧵