Fabian David Schmidt

99 posts

@fdschmidt

Member of Technical Staff @ Cohere | PhD from Uni of Würzburg on multilinguality & multimodality | prev. Mila & LTL@UniCambridge

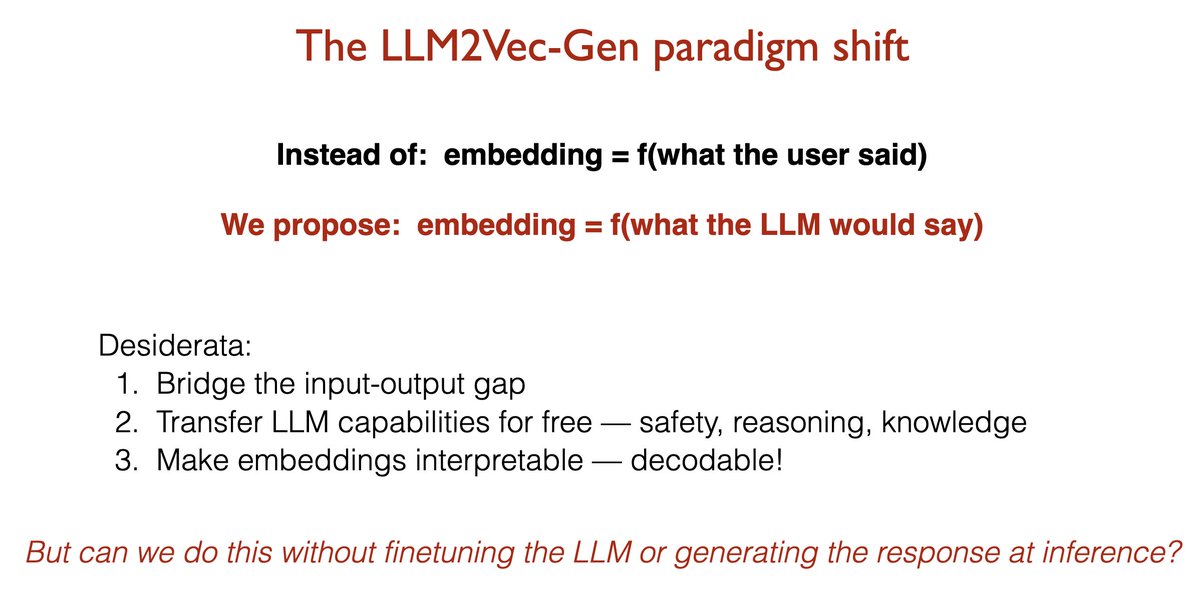

Your LLM already knows the answer. Why is your embedding model still encoding the question? 🚨Introducing LLM2Vec-Gen: your frozen LLM generates the answer's embedding in a single forward pass — without ever generating the answer. Not only that, the frozen LLM can decode the embedding back into text. 🏆 SOTA self-supervised embeddings 🛡️ Free transfer of instruction-following, safety, and reasoning


first paper of the phd 🥳 the Superficial Alignment Hypothesis (SAH) argues that pre-training adds most of the knowledge to a model, and post-training merely surfaces it. however, this hypothesis has lacked a precise definition. we fix this.

Is there such a thing as too many agents in multi-agent systems? It depends! 🧵 Our work reveals 3 distinct regimes where communication patterns differ dramatically. More on our findings below 👇 (1/7)

From political science to archaeology. From astrophysics to marine biology and glaciology. 159 researchers are receiving grants today from Carlsbergfondet for a wide range of basic research initiatives. See which projects have received funding 👉bit.ly/4iK2fV2 #dkforsk

Introducing Global PIQA, a new multilingual benchmark for 100+ languages. This benchmark is the outcome of this year’s MRL shared task, in collaboration with 300+ researchers from 65 countries. This dataset evaluates physical commonsense reasoning in culturally relevant contexts.

Olmo isn’t just open weights—it’s an open research stack. Try it in the Ai2 Playground: playground.allenai.org AMA on Discord: Tues, Oct 28 @ 8:00 AM PT with some of the researchers behind these studies + an Ai2 Olmo teammate. Join: discord.gg/ai2
