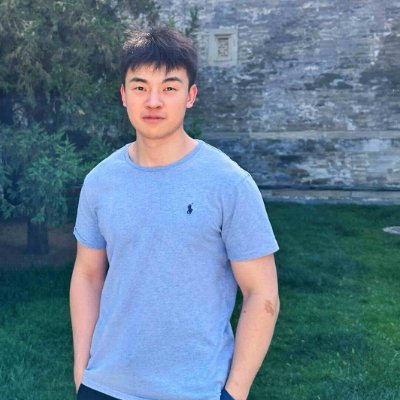

Guoqing Liu

106 posts

@fiberleif

Senior Researcher at @MSFTResearch AI for Science, working on reinforcement learning, large language models, and AI for Science.

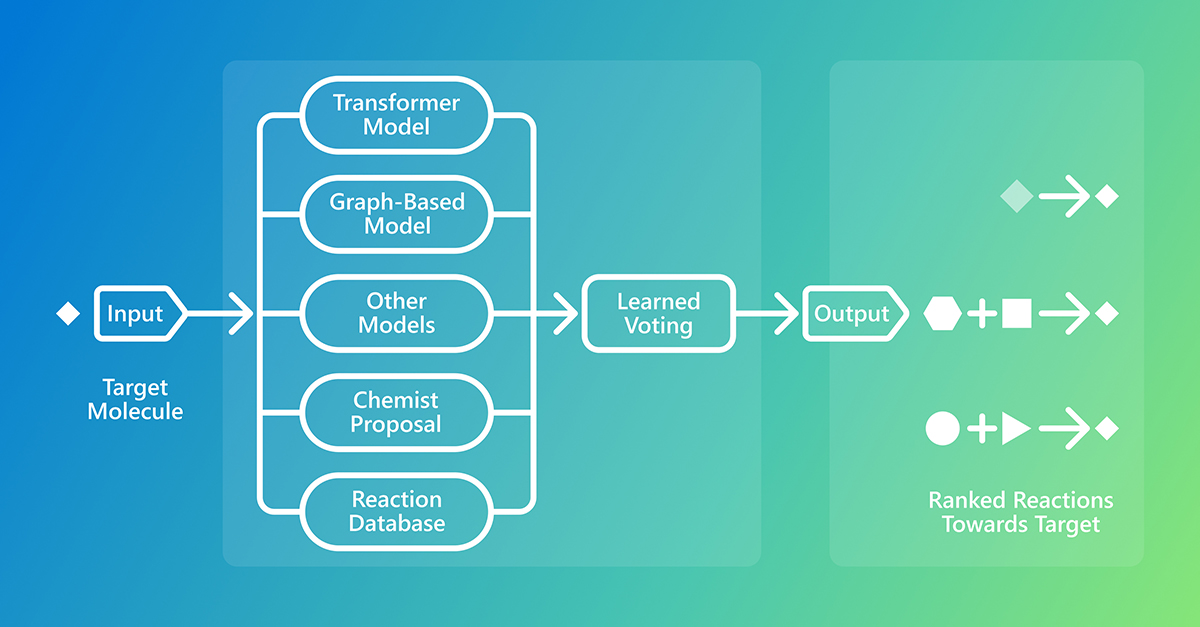

Language models bring new capabilities to chemistry, especially when dealing with both structures and rich natural language, i.e., in synthesis. To this end, we now report our new reasoning model for synthesis procedure generation, with dedicated SFT on CoT and RLVR for the task. 1/2

How GPT-5 thinks, with @OpenAI VP of Research @MillionInt

- 00:00 - Intro
- 01:01 - What Reasoning Actually Means in AI
- 02:32 - Chain of Thought: Models Thinking in Words
- 05:25 - How Models Decide How Long to Think
- 07:24 - Evolution from o1 to o3 to GPT-5
- 11:00 - The Road to OpenAI: Growing up in Poland, Dropping out of School, Trading
- 20:32 - Working on Robotics and Rubik's Cube Solving
- 23:02 - A Day in the Life: Talking to Researchers
- 24:06 - How Research Priorities Are Determined
- 26:53 - OpenAI's Culture of Transparency
- 29:32 - Balancing Research with Shipping Fast
- 31:52 - Using OpenAI's Own Tools Daily
- 32:43 - Pre-Training Plus RL: The Modern AI Stack
- 35:10 - Reinforcement Learning 101: Training Dogs
- 40:17 - The Evolution of Deep Reinforcement Learning
- 42:09 - When GPT-4 Seemed Underwhelming at First
- 45:39 - How RLHF Made GPT-4 Actually Useful
- 48:02 - Unsupervised vs Supervised Learning
- 49:59 - GRPO and How DeepSeek Accelerated US Research
- 53:05 - What It Takes to Scale Reinforcement Learning
- 55:36 - Agentic AI and Long-Horizon Thinking
- 59:19 - Alignment as an RL Problem
- 1:01:11 - Winning ICPC World Finals Without Specific Training
- 1:05:53 - Applying RL Beyond Math and Coding
- 1:09:15 - The Path from Here to AGI
- 1:12:23 - Pure RL vs Language Models

🚀 Research Update! Excited to share HybriDNA: A Hybrid Transformer-Mamba2 Long-Range DNA Language Model, now on arXiv!

DNA is the language of life, but modeling it is challenging: ultra-long sequences, single-nucleotide precision, and the need for both understanding & generation. HybriDNA tackles this with a decoder-only Hybrid Transformer-Mamba2 model, scaling to 131kb context & achieving SOTA across 33 DNA tasks! 🧬

- 🔹 Hybrid Transformer-Mamba2 for long-range genomic modeling
- 🔹 SOTA on BEND, GUE & LRB benchmarks
- 🔹 Scales up to 7B params, improving with size
- 🔹 Efficient long-context DNA modeling

📄 Read here: arxiv.org/abs/2502.10807

🌐 Project page: hybridna-project.github.io/HybriDNA-Proje…

Huge thanks to my co-authors @_albertgu, @tri_dao from Princeton and CMU, and all the researchers at MSR AI for Science. Special gratitude to my mentors @fiberleif and @TaoQin for their continuous support!

#MachineLearning #Genomics #AI4Science #DNA #FoundationModels #HybriDNA