Chi Chen

@chc273

Quantum Applications at IonQ. Views are mine.

Peter Steinberger is joining OpenAI to drive the next generation of personal agents. He is a genius with a lot of amazing ideas about the future of very smart agents interacting with each other to do very useful things for people. We expect this will quickly become core to our product offerings. OpenClaw will live in a foundation as an open source project that OpenAI will continue to support. The future is going to be extremely multi-agent and it's important to us to support open source as part of that.

Watch Cursor build a 3M+ line browser in a week

🚀 Hello, Kimi K2 Thinking! The Open-Source Thinking Agent Model is here. 🔹 SOTA on HLE (44.9%) and BrowseComp (60.2%) 🔹 Executes up to 200 – 300 sequential tool calls without human interference 🔹 Excels in reasoning, agentic search, and coding 🔹 256K context window Built as a thinking agent, K2 Thinking marks our latest efforts in test-time scaling — scaling both thinking tokens and tool-calling turns. K2 Thinking is now live on kimi.com in chat mode, with full agentic mode coming soon. It is also accessible via API. 🔌 API is live: platform.moonshot.ai 🔗 Tech blog: moonshotai.github.io/Kimi-K2/thinki… 🔗 Weights & code: huggingface.co/moonshotai

MLFFs 🤝 Polymers — SimPoly works! Our team at @MSFTResearch AI for Science is proud to present SimPoly (SIM-puh-lee) — a deep learning solution for polymer simulation. Polymeric materials are foundational to modern life—found in everything from the clothes we wear and the food we consume to high-performance materials in aerospace, electronics, and medicine. Today, we introduce a new way to simulate them. We built a machine learning force field (MLFF) to predict macroscopic properties across a broad range of polymers—trained only on quantum-chemical data, with no experimental fitting. Specifically, we accurately compute polymer densities via large-scale MD simulations, achieving higher accuracy than classical force fields. We also capture second-order phase transitions, enabling prediction of glass transition temperatures. These two properties are fundamental to processing and application design. Finally, we created a benchmark based on experimental data for 130 polymers plus an accompanying quantum-chemical dataset—laying the foundation for a fully in silico design pipeline for next-generation polymeric materials. The incredible team: Jean Helie, @temporaer, Yicheng Chen, Guillem Simeon, @a_kzna, @ErnestoCheco, @erunzzz, Gabriele Tocci, @chc273, @yatao_li, @SherryLixueC, @zunwang_msr, Bichlien H. Nguyen, Jake A. Smith, and Lixin Sun. 📄 Preprint: arxiv.org/abs/2510.13696 ⚙️ Data and code release: in progress⏳ #MLFFs #Polymers #AIforScience #DeepLearning #SimPoly #ScientificML #Microsoft #MicrosoftResearch #MicrosoftQuantum

Today, @ekindogus and I are excited to introduce @periodiclabs. Our goal is to create an AI scientist. Science works by conjecturing how the world might be, running experiments, and learning from the results. Intelligence is necessary, but not sufficient. New knowledge is created when ideas are found to be consistent with reality. And so, at Periodic, we are building AI scientists and the autonomous laboratories for them to operate. Until now, scientific AI advances have come from models trained on the internet. But despite its vastness — it’s still finite (estimates are ~10T text tokens where one English word may be 1-2 tokens). And in recent years the best frontier AI models have fully exhausted it. Researchers seek better use of this data, but as any scientist knows: though re-reading a textbook may give new insights, they eventually need to try their idea to see if it holds. Autonomous labs are central to our strategy. They provide huge amounts of high-quality data (each experiment can produce GBs of data!) that exists nowhere else. They generate valuable negative results which are seldom published. But most importantly, they give our AI scientists the tools to act. We’re starting in the physical sciences. Technological progress is limited by our ability to design the physical world. We’re starting here because experiments have high signal-to-noise and are (relatively) fast, physical simulations effectively model many systems, but more broadly, physics is a verifiable environment. AI has progressed fastest in domains with data and verifiable results - for example, in math and code. Here, nature is the RL environment. One of our goals is to discover superconductors that work at higher temperatures than today's materials. Significant advances could help us create next-generation transportation and build power grids with minimal losses. 
But this is just one example — if we can automate materials design, we have the potential to accelerate Moore’s Law, space travel, and nuclear fusion. We’re also working to deploy our solutions with industry. As an example, we're helping a semiconductor manufacturer that is facing issues with heat dissipation on their chips. We’re training custom agents for their engineers and researchers to make sense of their experimental data in order to iterate faster. Our founding team co-created ChatGPT, DeepMind’s GNoME, OpenAI’s Operator (now Agent), the neural attention mechanism, MatterGen; have scaled autonomous physics labs; and have contributed to some of the most important materials discoveries of the last decade. We’ve come together to scale up and reimagine how science is done. We’re fortunate to be backed by investors who share our vision, including @a16z who led our $300M round, as well as @Felicis, DST Global, NVentures (NVIDIA’s venture capital arm), @Accel and individuals including @JeffBezos, @eladgil, @ericschmidt, and @JeffDean. Their support will help us grow our team, scale our labs, and develop the first generation of AI scientists.

🔥 Today we announce the Meta OMol25 Electronic Structures Dataset - 500 TB of molecular data in collaboration with @mshuaibii and team at @AIatMeta. We envision a future where researchers can rapidly design molecules and peptides to treat diseases, discover catalysts to revolutionize synthesis and manufacturing, identify the next electrolyte to store and transport energy to protect the grid, and more. But these breakthrough discoveries require data. Data to train next-generation AI models and interatomic potentials. Data to push the boundaries of what's computationally possible in molecular chemistry and lead the world in AI for science. Data that captures the full complexity of chemical systems, from small organic molecules to massive biomolecular complexes. The OMol25 Electronic Structures dataset includes the raw DFT outputs, electronic densities, wavefunctions, and molecular orbital information for over 4 million high-accuracy quantum chemical calculations. We see this as a transformative opportunity to develop higher quality partial charges, partial spins, and advanced electronic features to unlock the next generation of physics-informed ML models. The Materials Data Facility is proud to make these data available via the Eagle cluster at ALCF through a high-performance Globus endpoint. Given the dataset's unprecedented scale, we're first releasing all output data for a 4M random OMol25 split, with the full multi-petabyte dataset following based on community engagement. For this first release, the data are quite raw, and as-created by the Meta team. There's a significant opportunity for the community to build tools that simplify access to these data, allow data query and browsing, create databases of calculated properties and descriptors, and much more. We intend to work on these topics with all of you. We can't wait to see what you can do with these data!
Access Details: github.com/facebookresear… Eagle was pioneered as the Petrel project, a new way to provide researchers access to high-quality, high-volume data by Ian Foster, Rachana Ananthakrishnan, Kyle Chard, Michael Papka, Rick Stevens, and others. Globus.org provides core platform capabilities (auth, data transfer, workflow automation, and compute) to over 600k researchers. Thanks to support from NIST and James Warren for making the MDF vision of vast troves of open data to fuel discovery possible. @mshuaibii, @zackulissi, @argonne, @argonne_lcf

We’re thrilled to share the release of our 2025 XPRIZE Impact Report. 🚀 From carbon removal to space exploration, our prizes prove philanthropy can deliver 60x ROI in global impact. Learn how we're building the #BusinessOfBreakthroughs: xprize.org/2025-impact-re…

“Can researchers stop AI making up citations?” nature.com/articles/d4158…

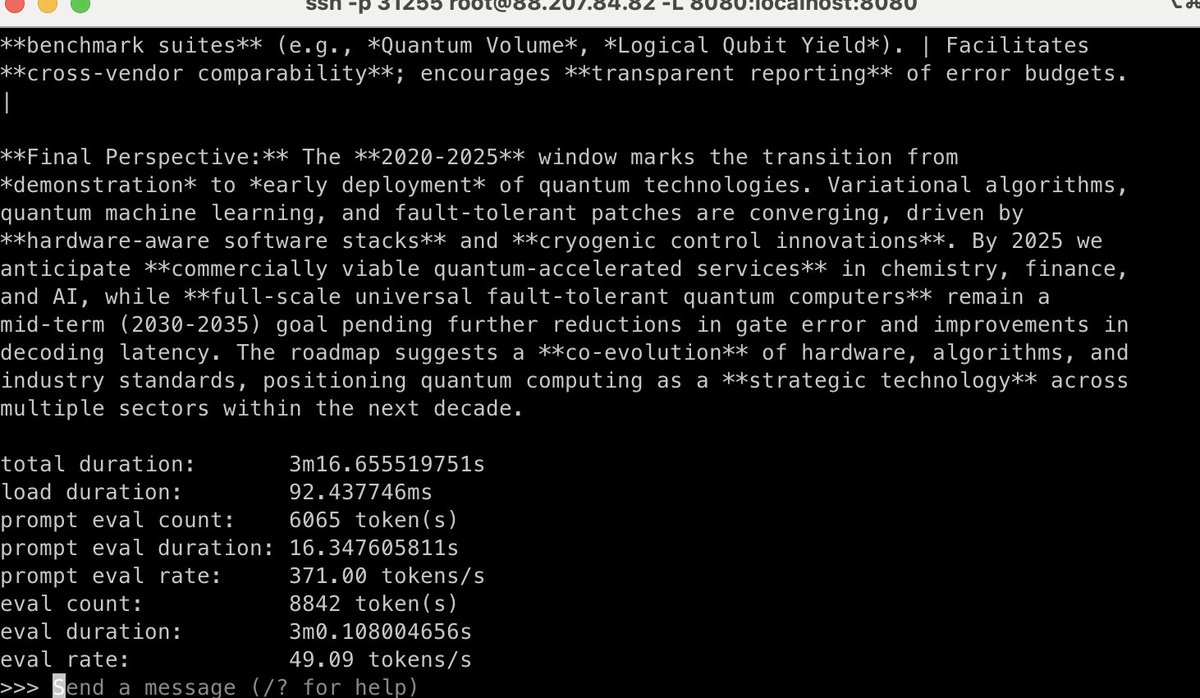

Mind-blowing that anyone can now run o3-level models locally within minutes. Testing gpt-oss:120b on 2x A100 SXM4: ~370 tok/s prompt processing, ~49 tok/s generation. Still more expensive than API calls, but the accessibility is game-changing.