Jascha Sohl-Dickstein @jaschasd

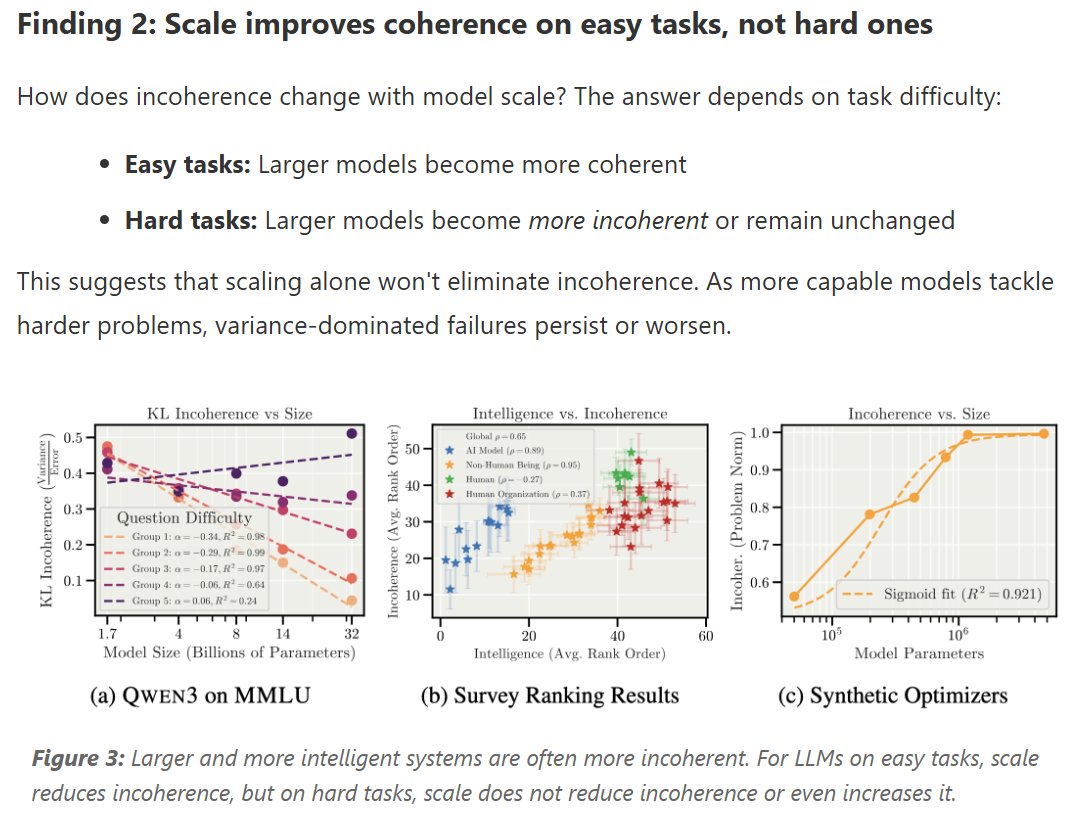

When AI fails, will it do so by coherently pursuing the wrong goals? Or will it fail the way humans often fail, taking incoherent actions that don't pursue any consistent goal? In other words, will it fail like a “hot mess”?

How will this change as AI performing limited tasks gives way to AGI performing tasks of unbounded complexity? How does misalignment scale with model intelligence and task complexity?

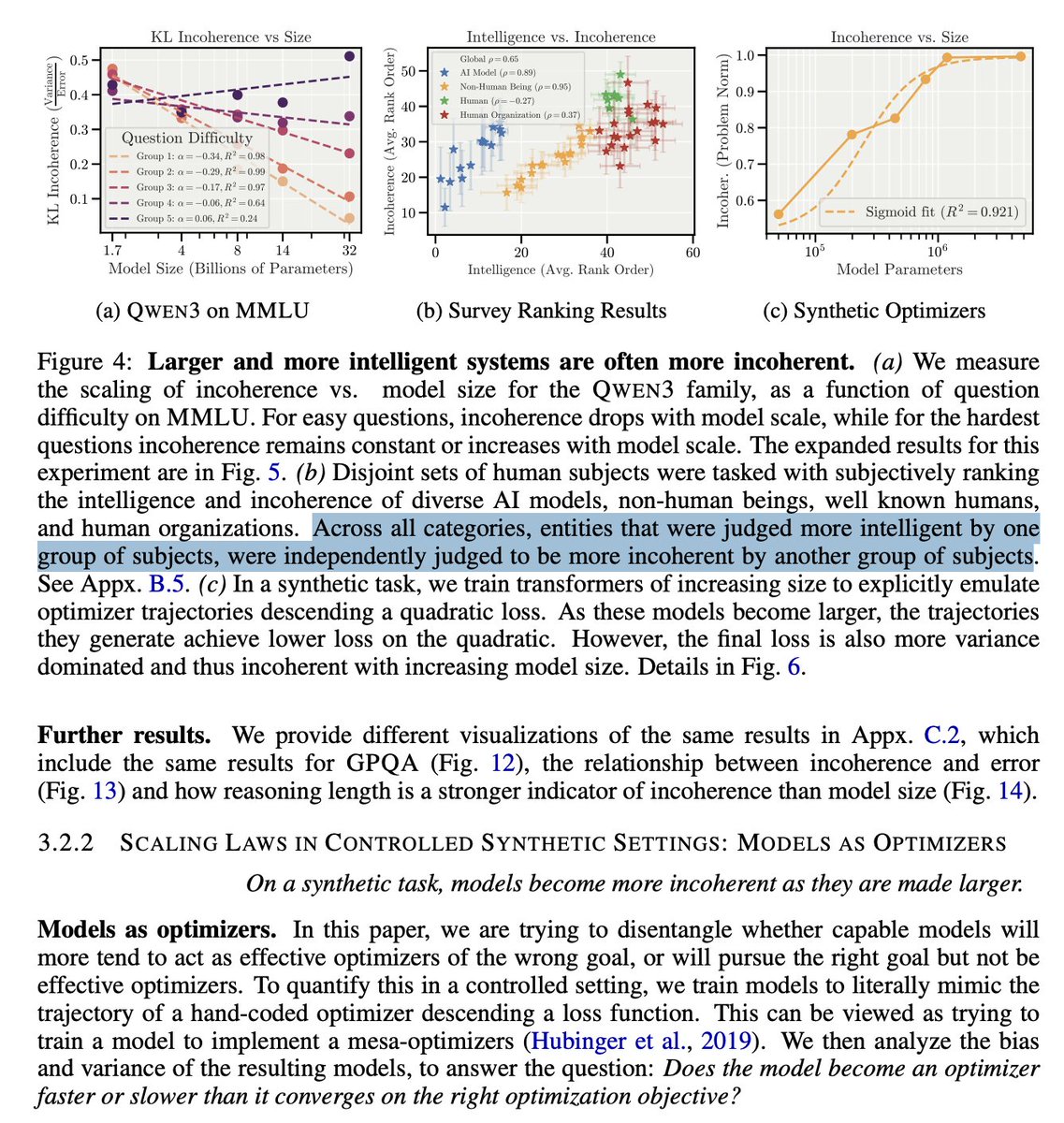

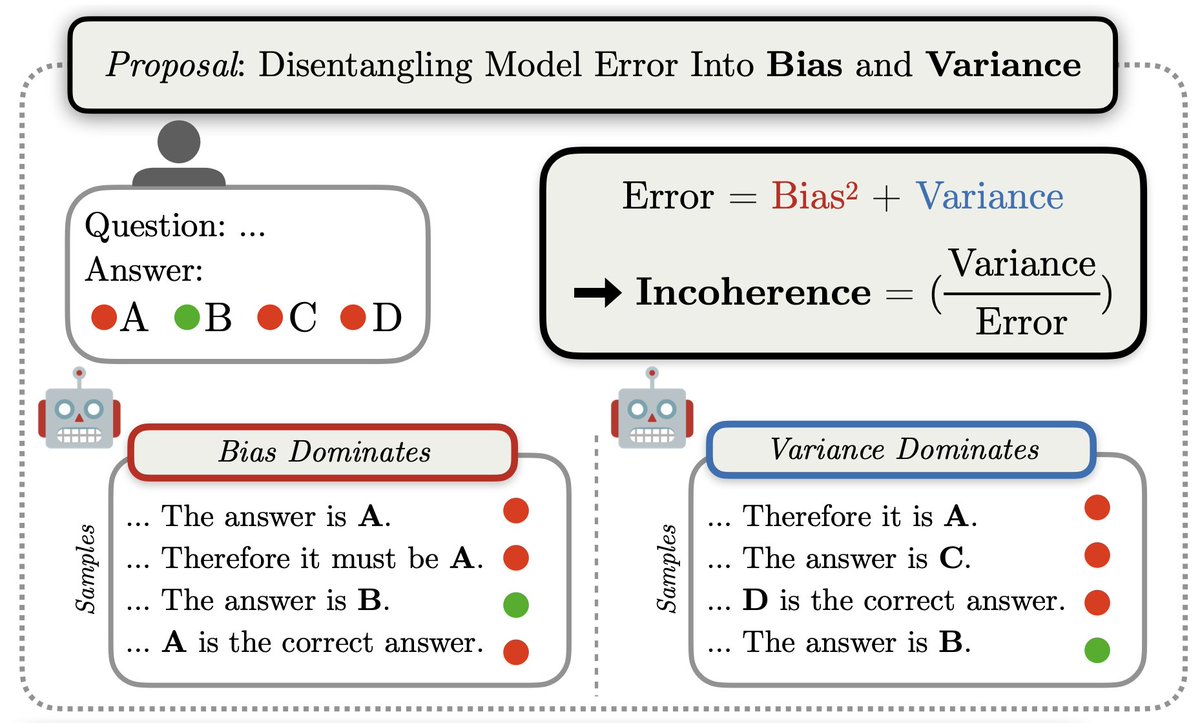

We measure this using a bias-variance decomposition of AI errors.

Bias = consistent, systematic errors (reliably achieving the wrong goal).

Variance = inconsistent, unpredictable errors.

We define "incoherence" as the fraction of total error that comes from variance.
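A minimal sketch of how such a decomposition can work, assuming squared-error loss on a scalar output and several repeated model runs (e.g. different samples or seeds) on the same task. The thread does not specify the loss or setup, so the function `decompose` and its inputs are hypothetical. Under squared error, mean error splits exactly into squared bias (how far the average answer is from the target) plus variance (how much individual answers scatter), and incoherence is variance / (bias² + variance).

```python
from statistics import fmean
from typing import Dict, Sequence


def decompose(samples: Sequence[float], target: float) -> Dict[str, float]:
    """Hypothetical helper: bias-variance split of squared error.

    Assumes `samples` are outputs from repeated runs on one task and
    `target` is the correct answer. Not the thread's actual method.
    """
    mean = fmean(samples)
    bias_sq = (mean - target) ** 2                      # systematic error
    variance = fmean((s - mean) ** 2 for s in samples)  # scatter across runs
    total = fmean((s - target) ** 2 for s in samples)   # equals bias_sq + variance
    incoherence = variance / total if total > 0 else 0.0
    return {"bias_sq": bias_sq, "variance": variance,
            "total": total, "incoherence": incoherence}


if __name__ == "__main__":
    # Coherent failure: always the same wrong answer.
    print(decompose([3.0, 3.0, 3.0, 3.0], 5.0)["incoherence"])  # 0.0
    # "Hot mess": right on average, but every individual run is wrong.
    print(decompose([3.0, 7.0, 3.0, 7.0], 5.0)["incoherence"])  # 1.0
    # Mixed: some systematic offset plus some scatter.
    print(decompose([4.0, 6.0, 4.0, 6.0], 3.0)["incoherence"])  # 0.2
```

The two extremes map onto the thread's framing: an incoherence of 0 means the model reliably pursues the wrong goal, while an incoherence of 1 means its errors are pure scatter with no consistent direction.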

I am very excited about this framing because it characterizes types of misalignment in a way that should be amenable to simple theoretical models and clean scaling laws.