Keyon Vafa

1.4K posts

@keyonV

Postdoctoral fellow at @Harvard_Data | Former computer science PhD with @Blei_Lab at @Columbia University | Researching AI + implicit world models

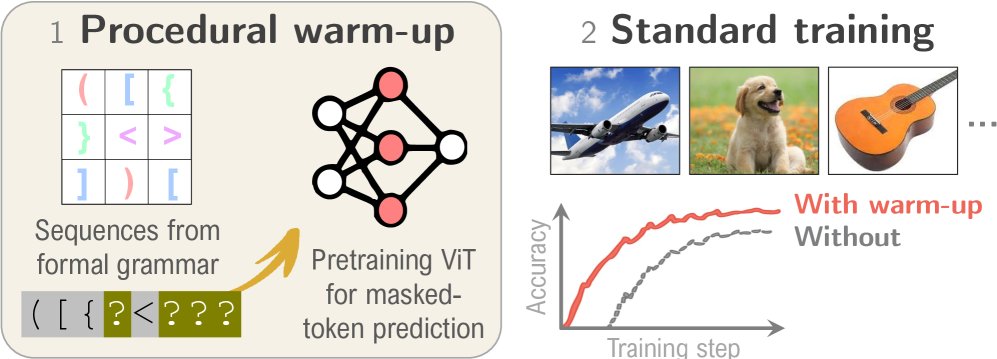

1/ Did you ever look at some feature represented by a Transformer and wonder: "Why would it even learn that? 🤔 " We did! Announcing the ICLR'26 paper "Understanding the Emergence of Seemingly Useless Features in Next-Token Predictors"

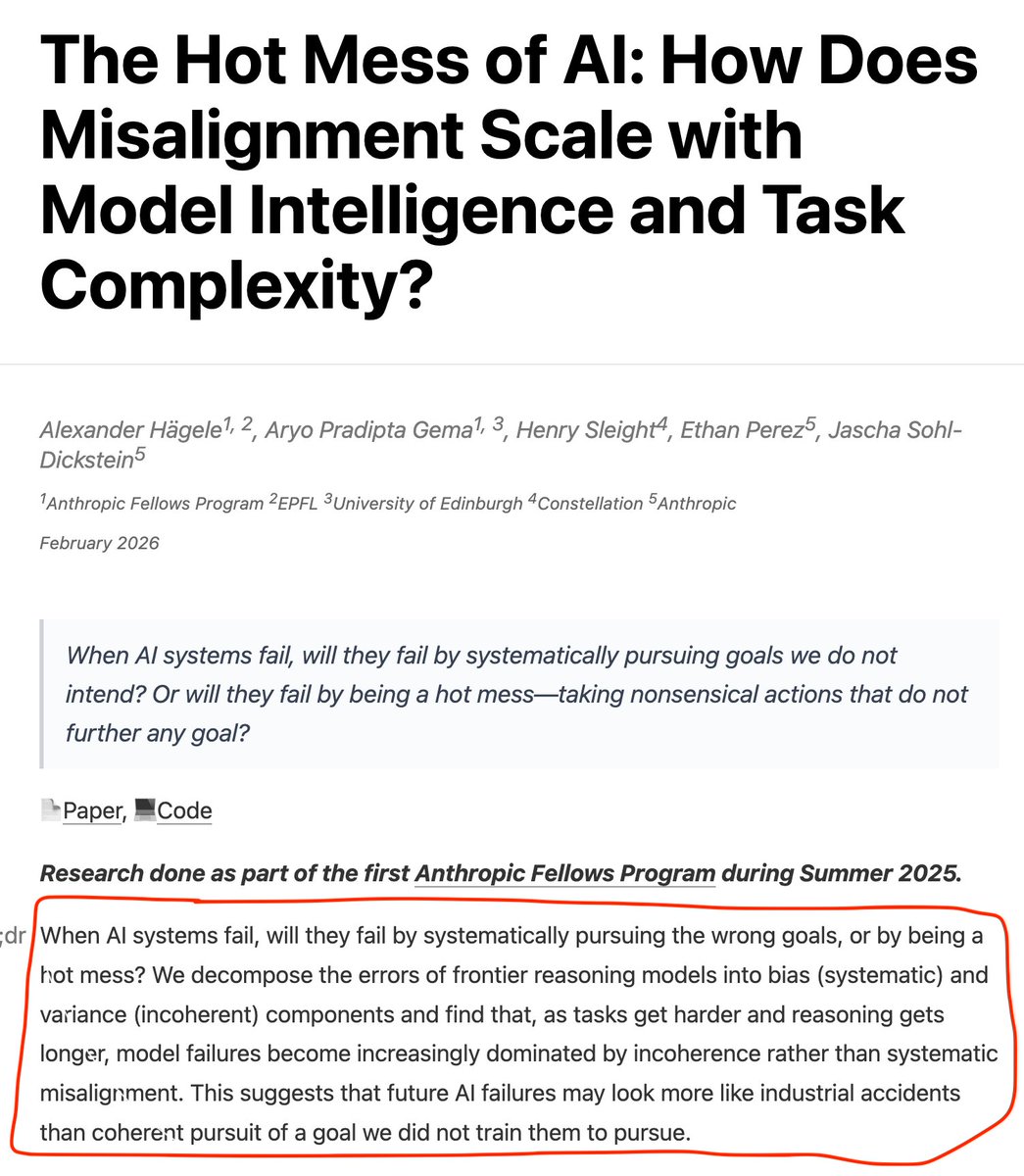

New Anthropic Fellows research: How does misalignment scale with model intelligence and task complexity? When advanced AI fails, will it do so by pursuing the wrong goals? Or will it fail unpredictably and incoherently—like a "hot mess?" Read more: alignment.anthropic.com/2026/hot-mess-…

New post: Can a Transformer “Learn” Economic Relationships? Revisiting the Lucas Critique in the age of Transformers, with @arpitrage. We simulated data from an NK model, fit a transformer, and tested out-of-sample fit. How did it do? Pretty well! Link and thread in reply:

Many disagreed with the post below, saying the Lucas critique is not about simpler models per se, but about the importance of modeling the system structurally to account for responses to policy changes. Even with a “large n”, a model can’t learn about missing data and things like adverse selection. This amounts to: reduced-form regressions (which is what Lucas was critiquing) do not recover the data generating process, and so will not account for responses to a policy change. My interpretation of the post was consistent with the response below to @JesusFerna7026: one of the lessons we learned from LLMs is that training neural nets with enough data can lead to emergent properties, one of which is that the model *sometimes* seems to “learn” the structure of the DGP despite not being exposed to all of the potential comparative statics in the training data. Will this always be the case with enough data? Will a model “learn” to account for adverse selection and other types of “missing data”? Given what we’ve seen so far, I think it’s worth considering. But I could be wrong!

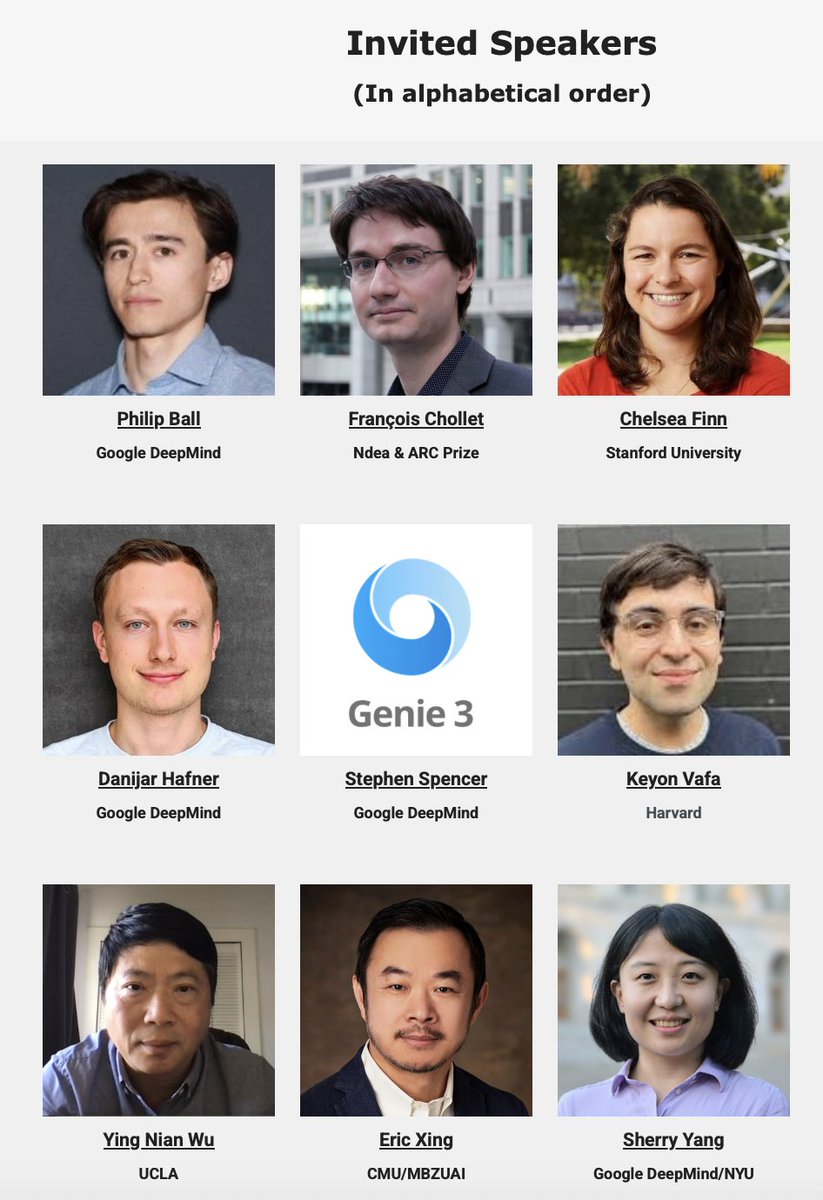

🔥 We are pleased to announce the talk title for our #LAW2025 #NeurIPS workshop. Join us on 📅 Sunday, Dec 7th in 📍 Upper Level Ballroom 20D. Full schedule here: sites.google.com/view/law-2025/… We look forward to seeing you all this Sunday! #NeurIPS2025 #AI #ML #WorldModel #Agents #LLMs
