Ivan Rocha retweetet

Gemopus-4-26B-A4B from Jackrong is LIVE!

Happy to have benched this one pretty hard (see my benches in the model card) and it is an excellent finetune of an already exceptional model! My friend Jackrong is always cooking the greatest!

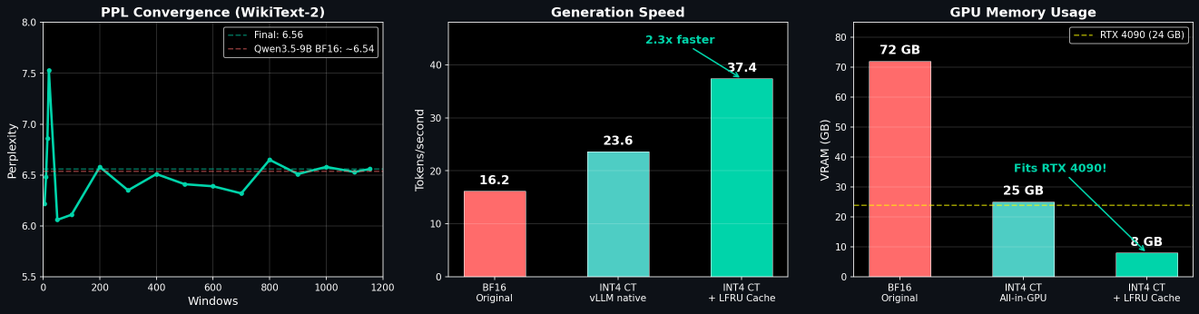

It rocks at one-shot requests over long contexts, and runs incredibly fast thanks to the MOE architecture while not seeming to take as much of a hit vs dense models as in the Qwen 3.5 series. It also crushed my simple needle-in-the-haystack tests all the way out to an extended context of 524k!

If you're VRAM starved, or running on unified memory, this one should run much more usably offloaded to system ram or in unified memory pools; even if you're running a 10GB or less GPU! It would be my daily driver for this purpose!

That said, the dense 31B Gemopus 4 is finalising now, I will post it here when it's live, so follow me for the official launch, and follow Jakcrong on Hugging Face! It will also be an incredible model!

As with the base Gemma 4 models, there are some idiocycracies especially in harnesses, if you have problems, please let us know! If you make something cool with it, please comment that below, too. We'd love to see it!

huggingface.co/Jackrong/Gemop…

English