heyy i’m chau & i’m excited to be joining @instinct_inc as chief of staff! still in high school and just relocated to sf looking for more friends in the tech / startup scene!

Ryan Benmalek

208 posts

@lildesertmouse

ceo @talusrobotics 🤖🦊 | scout @a16z @speedrun 🚀 (dm me!) | before: @GoogleDeepMind, @Mila_Quebec, @NSF Fellow PhD @Cornell: Vision, NLP, and some RL

I’m just waiting for someone to make me a little stuffie like the one my little kids use. Imagine if instead of man/woman purses we started carrying these AI stuffies/blankies!! It would regulate us, talk to us, comfort us, teach us, and so much more!

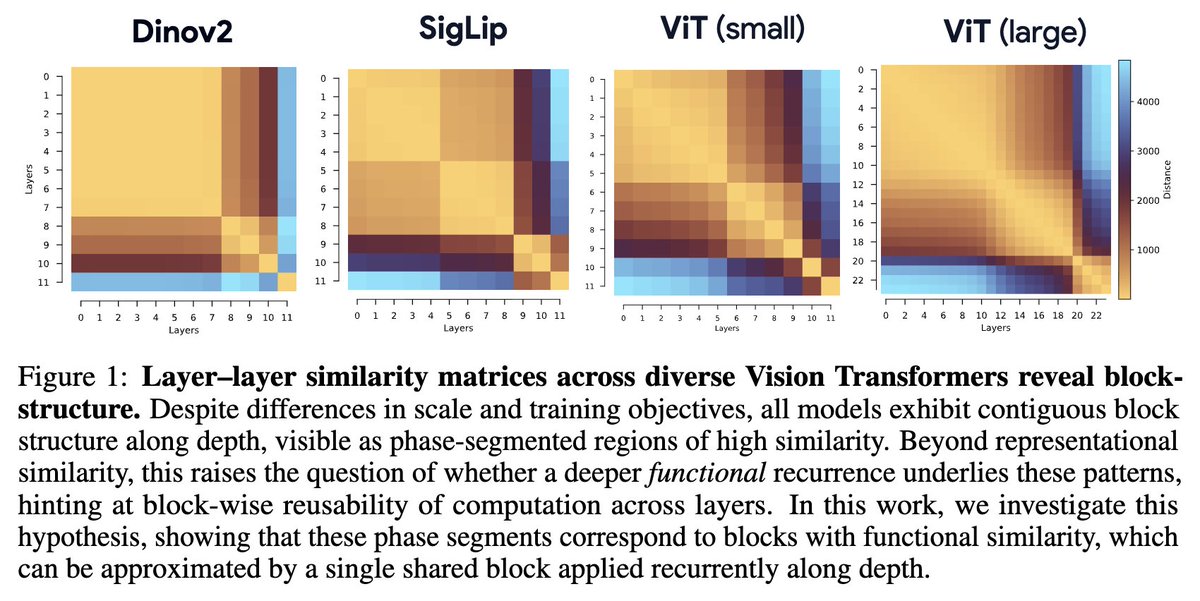

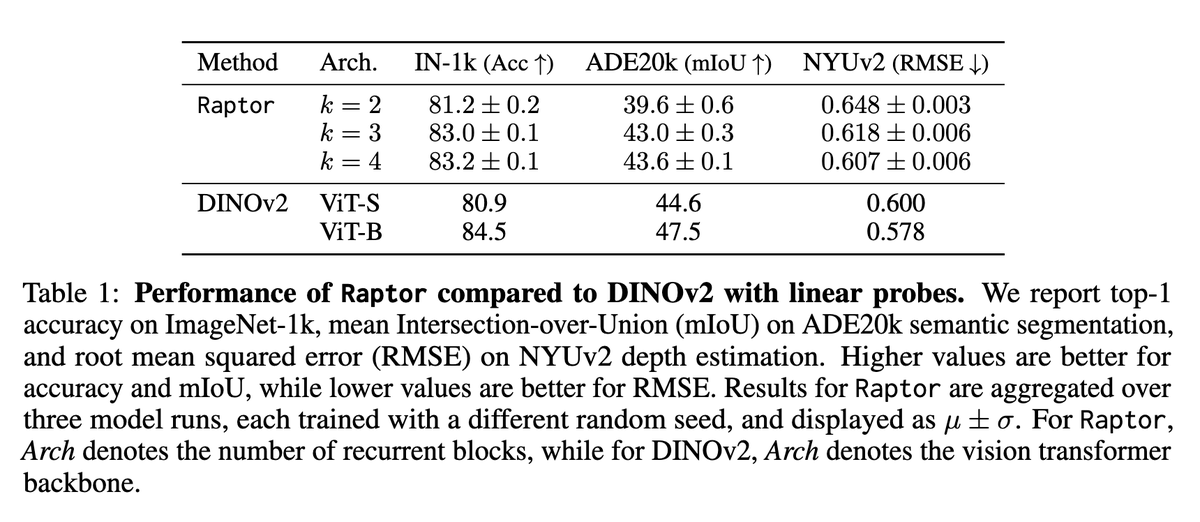

1/ Deep learning is going to have a scientific theory. We can see the pieces starting to come together, and it's looking a lot like physics! We're releasing a paper pulling together these emerging threads and giving them a name: learning mechanics. 🔨 arxiv.org/pdf/2604.21691 🔧

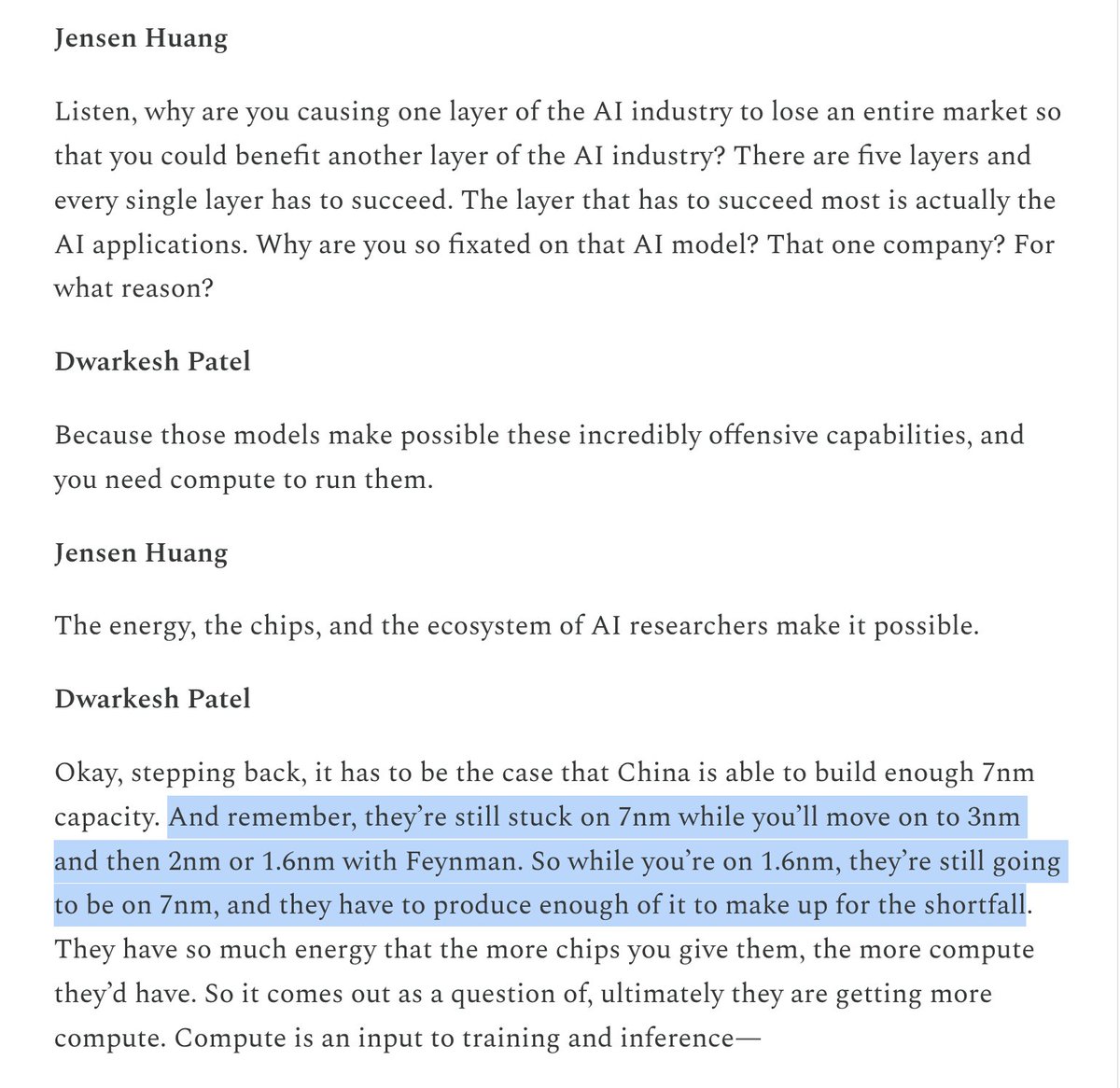

@teortaxesTex The only real failure mode is that China develops an alternative to CUDA and future cutting edge research (which all closed labs leech from without seeding) gets developed on Chinese chips and kernels. Jacketman spent basically half the podcast trying to explain this to dwarkesh

The story of @lildesertmouse is absolutely insane. He skipped high school, started college at 14, got his PhD from Cornell at 23, and is now building robot companions that feel like dragons and fennec foxes. Enjoy the new episode of No Fixed Route, the first ever podcast to be filmed inside @zoox. Link to Episode: youtu.be/6Pg29io7ZuQ My 6 takeaways with @lildesertmouse in the replies.

📢Current world models aren't really modeling the world; they're modeling one agent's view of it. Partial observations ≠ world state. Future world models will be independent of any one agent's perspective. You will be able to “drop in” any number of agents at any point in time, and a persistent world state will evolve with their interactions. Imagine a neural MMORPG server. 🧵[1/10]

The average adult human brain is 1,300,000 mm³. If there are 150M synapses/mm³, we've got ~195,000,000,000,000 (195 trillion) synapses. If each synapse maps to a neural net weight* then that's a ~200T model, at which point it'll start sounding truly human!
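
A quick sanity check of that arithmetic, as a minimal Python sketch. The two inputs are the figures quoted in the post; the constant and function names are made up for illustration, and the one-weight-per-synapse mapping is the post's own assumption rather than an established equivalence.

```python
# Back-of-the-envelope check of the synapse estimate in the post.
# Both inputs are taken directly from the post; names are illustrative.
BRAIN_VOLUME_MM3 = 1_300_000      # average adult brain volume in mm^3
SYNAPSES_PER_MM3 = 150_000_000    # synapse density per mm^3


def estimate_synapses(volume_mm3: int, density_per_mm3: int) -> int:
    """Total synapses = volume * density (simple uniform-density assumption)."""
    return volume_mm3 * density_per_mm3


total = estimate_synapses(BRAIN_VOLUME_MM3, SYNAPSES_PER_MM3)

# Assuming one weight per synapse, this is also the hypothetical parameter count.
print(f"{total:,} synapses (~{total / 1e12:.0f} trillion)")
# prints "195,000,000,000,000 synapses (~195 trillion)"
```

So the "200T" figure is 195 trillion rounded up.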

Happy to announce our NeurIPS ’25 paper, real-world RL of active perception behaviors! I am pretty excited about this project - I learned that real-world robot RL is actually quite straightforward. Details below: