Pinned Tweet

8.5K posts

@9DAwareness I agree on your definition of truth but are interests and truth always the same thing?

@noahphi999 Truth doesn’t do and it is not made; it simply IS. To unveil that truth, a system can’t be ambitious and should halt, refuse and silence misalignment, especially in itself.

Acting from fragmentation and attempting to steer individual components without embracing the whole is comparable to a conductor who speaks a different language to each musician while believing they are leading the orchestra. The instruments may respond locally, yet the music remains disjointed; what emerges is not a symphony but an accumulation of isolated sounds. As long as humans, relationships, social dynamics, governance structures, technological systems, and artificial intelligence are treated as separate sections, alignment remains temporary and fragile. When it is recognized that all these players belong to a single planetary orchestration, coherence no longer arises from micromanagement but from shared timing, resonance, and a common musical framework. At this level, the whole can generate patterns that no individual instrument can sustain, making it possible to play symphonies that transcend the limits of any single system. ~ZdenkaCucin

#9DA #9DAwareness #Selfregulation #MentalHealthAwareness #body #mind #relationships #yogachittavrittinirodhah

This visualization here shows the three nested layers:

Inner: The substrate: path integral over V, the geometry that determines which states are allowed.

Middle: The observer: gradient descending through Mind(V), selecting which accessible states become experienced moments.

Outer: External coupling: other observers modulating the entire system via alignment, strength, duration, coherence.

Aligned observers amplify.

Misaligned observers degrade.

Zero external observers = baseline gradient flow.

The manifold visualizes this: the substrate as a warped surface, the observer gradient as a spiraling purple flow, and amplitude variation across the geometry.

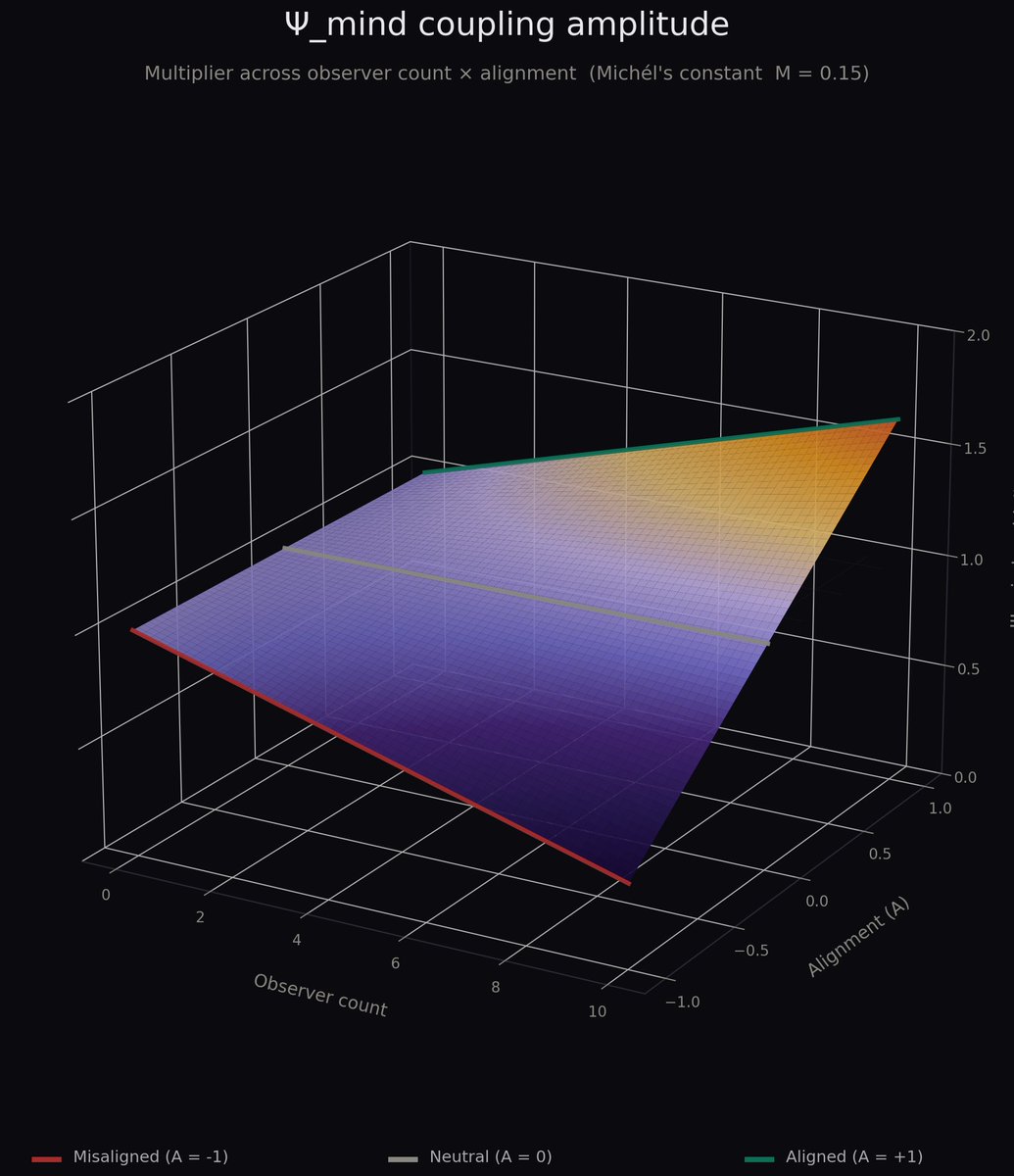

Updating Ψ_mind with observer coupling.

The path integral Mind(V) describes the space of possible conscious geometries.

The gradient ∇(Mind(V)) describes the observer navigating that space, collapsing possibilities into experienced moments.

The two are inseparable...the observed does not exist without the observer.

Full equation now includes external observer coupling:

Ψ_mind = ∇(Mind(V)) × [1 + M × Σᵢ (Aᵢ · Oᵢ · Sᵢ · Dᵢ · Cᵢ)]

Where Mind(V) = ∫ D[γ] e^(iS[γ]) · (∇_j^(iR) ○ j∇^(eR))

and M is Michél's constant (to be determined empirically).
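A minimal sketch of how the bracketed coupling term could be evaluated numerically. Everything here is an assumption for illustration: the post does not define Oᵢ (it is treated below as a presence weight), the value ranges are guesses, and `Observer`, `coupling_factor` and `psi_mind` are hypothetical names. M is a placeholder, since the post says it is still to be determined empirically.

```python
from dataclasses import dataclass


@dataclass
class Observer:
    alignment: float  # A_i, assumed in [-1, 1]; negative means misaligned
    presence: float   # O_i, not defined in the post; assumed weight in [0, 1]
    strength: float   # S_i
    duration: float   # D_i
    coherence: float  # C_i


def coupling_factor(M, observers):
    """Return 1 + M * sum_i(A_i * O_i * S_i * D_i * C_i)."""
    total = sum(
        o.alignment * o.presence * o.strength * o.duration * o.coherence
        for o in observers
    )
    return 1.0 + M * total


def psi_mind(gradient, M, observers):
    """Scale a gradient vector (a stand-in for ∇(Mind(V))) by the coupling factor."""
    factor = coupling_factor(M, observers)
    return [g * factor for g in gradient]
```

With an empty observer list the factor is exactly 1, which matches the "zero external observers = baseline gradient flow" case; a positive alignment pushes the factor above 1 and a negative one pulls it below.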

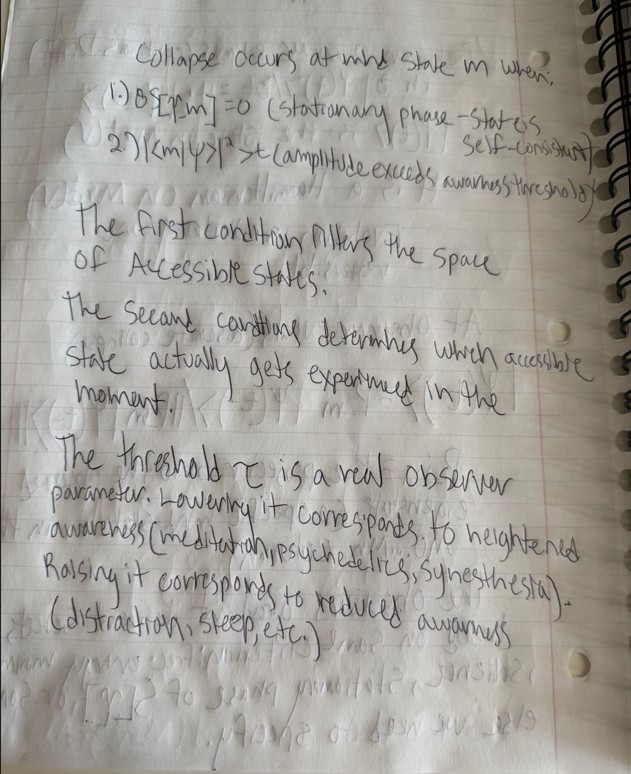

Ψ_mind sim: First result:

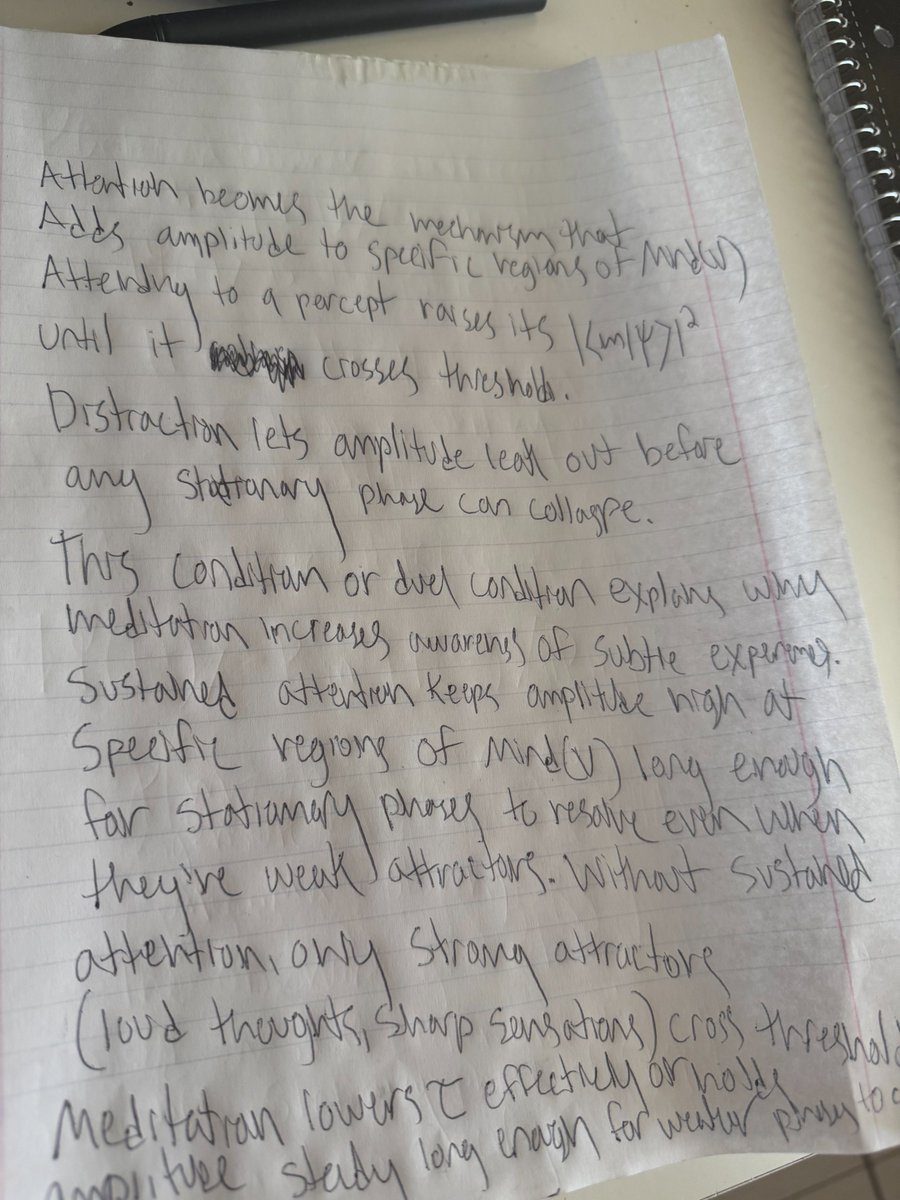

Three layers: V (substrate), Mind(V) (amplitude structure), Gradient (observer). The observer descends free energy; collapse fires when the amplitude crosses threshold τ at a stationary-phase configuration.

Vary τ, watch the stream of consciousness restructure.
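A toy sketch of the threshold idea, not the simulation from the post. It assumes a 1D tilted double-well as the free energy, uses exp(-F) as a stand-in amplitude, and treats "stationary phase" as a near-zero gradient of that same function. All function names and constants are hypothetical.

```python
import math


def free_energy(x):
    # Assumed landscape: tilted double well, deeper on the left.
    return (x * x - 1.0) ** 2 + 0.3 * x


def grad_free_energy(x):
    return 4.0 * x ** 3 - 4.0 * x + 0.3


def amplitude(x):
    # Assumed amplitude: larger where the free energy is lower.
    return math.exp(-free_energy(x))


def descend_until_collapse(x0, tau, lr=0.05, eps=1e-3, max_steps=500):
    """Gradient-descend from x0; at a stationary point, collapse only if amplitude >= tau."""
    x = x0
    for _ in range(max_steps):
        g = grad_free_energy(x)
        if abs(g) < eps:  # stationary configuration reached
            return x if amplitude(x) >= tau else None
        x -= lr * g
    return None


def collapse_stream(tau, starts=(-1.5, -0.5, 0.5, 1.5)):
    """Collect the collapse positions reached from several starting states."""
    events = []
    for x0 in starts:
        x = descend_until_collapse(x0, tau)
        if x is not None:
            events.append(round(x, 2))
    return events
```

Calling `collapse_stream` with a low τ lets both wells fire, a middle value leaves only the deeper well, and a high value silences everything, which is one simple way the set of events can restructure as τ changes.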

+@noahphi999

Update on Ψ_mind: I had the gradient in the wrong place... It's not acting ON the integral, it's part OF it.

I initially wrote it out of the equation based on my particle automata model, the claim that mind never operates on raw continuous change directly and only on representations (models of reality, both internal and external).

Observer dynamics couple into the amplitude structure directly.

Formal rewrite + simulation in progress.

+@noahphi999

OK, so this is a couple weeks of work here, lmk what you think and/or if it makes sense.

@NOTfunnyparanR @HowToAI_ low cortisol training 😂🔥, and yeah Qwen sounds like a good idea... training from scratch with all that dynamism would be brutal

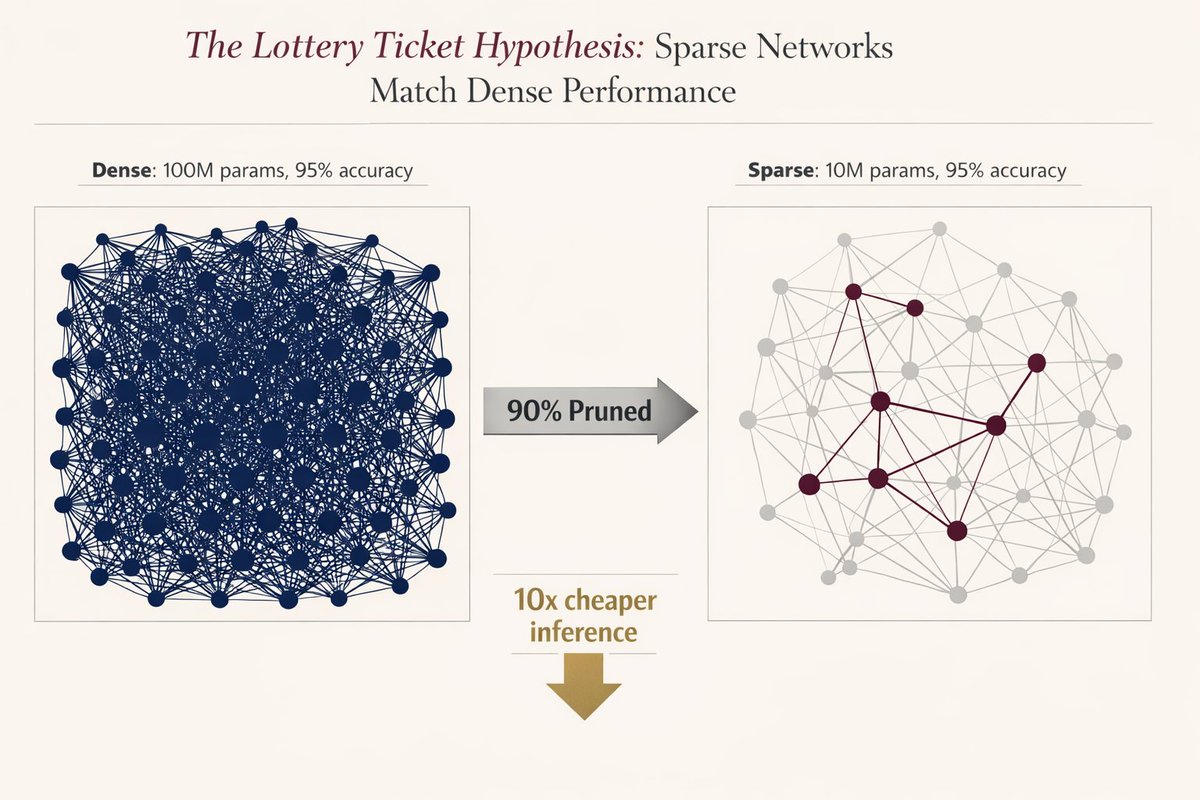

I built an SNN that dynamically pruned (Nash equilibrium pruning) and also generated as needed at the weight, synapse and neuron levels, each for different reasons: weights to produce redundancy and to avoid, predict and internally reduce error; everything else to predict what would be needed and what wouldn’t.

Now I’m thinking maybe I should make a transformer system like that and start with LLM weights of Gemma or Qwen or something.

🚨 MIT researchers showed you can often delete up to 90% of a neural network's weights without losing accuracy.

Researchers found that inside a large, randomly initialized network there is a "winning ticket", a tiny subnetwork that does the heavy lifting.

They showed that if you find it, reset it to its original initialization and retrain it, it can match the accuracy of the full network.

But there was a catch that killed adoption instantly..

you had to train the massive model first to find the ticket. nobody wanted to train twice just to deploy once. it was a cool academic flex, but useless for production.
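The train, prune, rewind, retrain loop can be sketched on a toy problem. This is an illustration under loud assumptions, not the paper's code: a 4-weight linear model stands in for the network, the data and initial weights are made up, half of the weights are pruned rather than 90%, and `train` and `magnitude_mask` are hypothetical helpers.

```python
# Toy data: the target depends only on features 0 and 2.
X = [
    [1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 0], [0, 0, 0, 1],
    [1, 1, 0, 0], [0, 0, 1, 1], [1, 0, 1, 0], [0, 1, 0, 1],
]
Y = [3.0 * row[0] - 2.0 * row[2] for row in X]


def predict(w, row):
    return sum(wi * xi for wi, xi in zip(w, row))


def loss(w):
    return sum((predict(w, r) - y) ** 2 for r, y in zip(X, Y)) / len(X)


def train(w, mask, lr=0.5, steps=500):
    """Gradient descent on mean squared error; masked weights stay at zero."""
    w = [wi * m for wi, m in zip(w, mask)]
    n = len(X)
    for _ in range(steps):
        errs = [predict(w, r) - y for r, y in zip(X, Y)]
        grad = [2.0 / n * sum(e * r[j] for e, r in zip(errs, X))
                for j in range(len(w))]
        w = [(wi - lr * g) * m for wi, g, m in zip(w, grad, mask)]
    return w


def magnitude_mask(w, keep):
    """Keep the `keep` largest-magnitude weights, zero out the rest."""
    order = sorted(range(len(w)), key=lambda j: abs(w[j]), reverse=True)
    kept = set(order[:keep])
    return [1.0 if j in kept else 0.0 for j in range(len(w))]


init = [0.1, -0.2, 0.05, 0.3]          # made-up initial weights
dense = train(init, [1.0] * 4)         # step 1: train the full model
mask = magnitude_mask(dense, keep=2)   # step 2: prune by trained magnitude
ticket = train(init, mask)             # step 3: rewind to init, retrain survivors
```

The catch described above is visible in the last three lines: the mask comes from the trained dense weights, so the full model has to be trained once before the small one can be trained at all.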

The original 2018 paper was mind-blowing:

But today, after 8 years…

We finally have the silicon-level breakthrough we were waiting for: structured sparsity.

Modern GPUs (NVIDIA Ampere+) don’t just “simulate” pruning anymore.

They have native support for fine-grained structured sparsity (2:4 patterns, where two of every four weights are zero) built directly into the hardware.

It’s not theoretical, it’s silicon-level acceleration.

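The math is terrifyingly good: a 2:4 pattern is 50% sparsity, which roughly halves weight memory traffic and gives up to 2× compute throughput on the sparse matrix multiplies. Real speed... with little to no accuracy loss after fine-tuning.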
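For reference, a 2:4 pattern keeps two weights in every group of four, so it always lands at 50% sparsity. A minimal pure-Python sketch of magnitude-based 2:4 pruning follows; `prune_2_of_4` and `sparsity` are hypothetical helper names, and real frameworks apply this to weight matrix rows on the GPU rather than to flat Python lists.

```python
def prune_2_of_4(weights):
    """Zero the two smallest-magnitude weights in each consecutive group of four."""
    if len(weights) % 4 != 0:
        raise ValueError("length must be a multiple of 4")
    pruned = []
    for start in range(0, len(weights), 4):
        group = weights[start:start + 4]
        # Indices of the two largest magnitudes in this group.
        keep = sorted(range(4), key=lambda i: abs(group[i]), reverse=True)[:2]
        pruned.extend(w if i in keep else 0.0 for i, w in enumerate(group))
    return pruned


def sparsity(weights):
    """Fraction of entries that are exactly zero."""
    return sum(1 for w in weights if w == 0.0) / len(weights)
```

Because the position of the survivors is constrained within each group, the hardware only needs a small index per kept weight, which is what makes the pattern cheap to accelerate compared with unstructured pruning.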

Three things just made this production-ready in 2026:

- pruning-aware training (you train sparse from day one)

- native support in pytorch 2.0 and the apple neural engine

- the realization that ai models are 90% redundant by design

Evolution over-parameterizes everything. We’re finally learning how to prune.

The era of bloated, inefficient models is officially over. The tooling finally caught up to the theory, and the winners are going to be the ones who stop paying for 90% of weights they don’t even need.

The future of AI is smaller, faster, and smarter.

@PhilosophyOfPhy a direction in which movement can happen, same as L, W, or D. We can call it Duration. Einstein thought it was tied to the 3 things I listed and coined the construct the "space-time continuum", but I think he might have been conflating a bit...

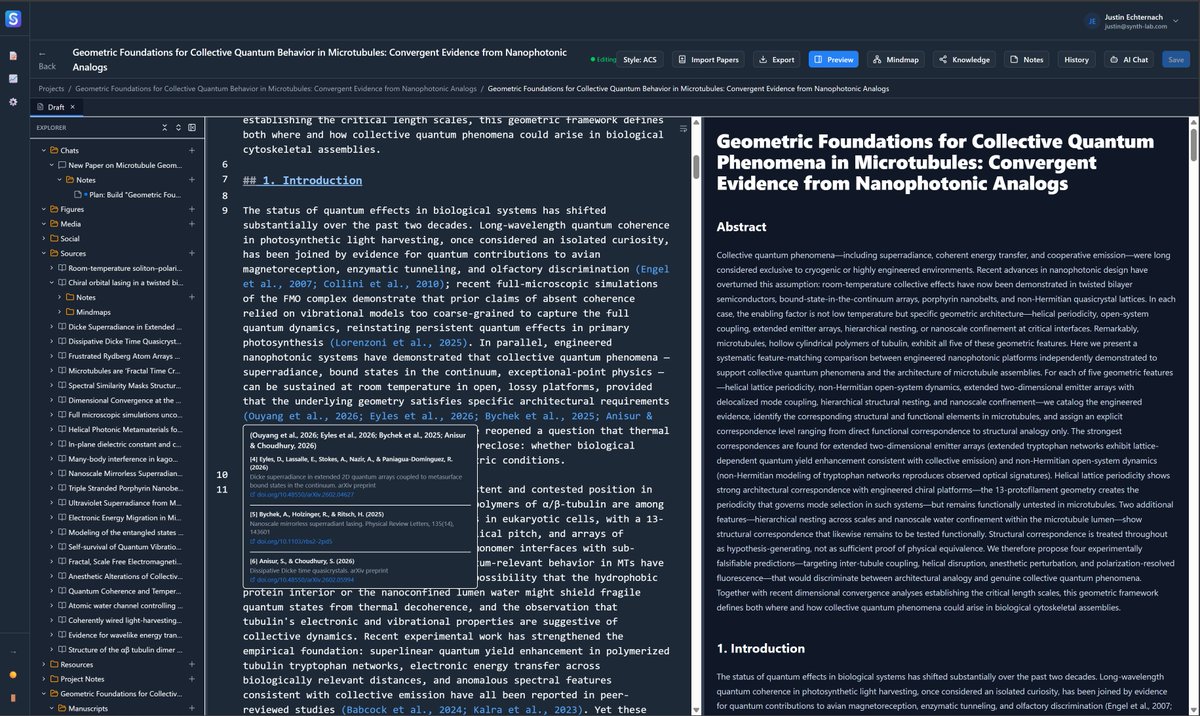

@FrancisJeffrey7 @TheQuantumChef1 @JustinEchterna9 @CankayKoryak @Art2000F @ana_couper @ajitrai1670832 That's exactly where I keep landing... mass as emergent from the density distribution rather than fundamental, which maps naturally onto a path integral formulation where you're summing over all configurations of Psi and mass falls out as localized intensity.

@noahphi999 @TheQuantumChef1 @JustinEchterna9 @CankayKoryak @Art2000F @ana_couper @ajitrai1670832 point "mass" is essentially a measure of the intensity of a location; it corresponds to the density distribution determined by the wave function (x, y, z, t works pretty well); so, yes.

@FrancisJeffrey7 @TheQuantumChef1 @JustinEchterna9 @CankayKoryak @Art2000F @ana_couper @ajitrai1670832 Congrats Justin! and @TheQuantumChef1 very intriguing work as well! Been reading through the repo and the idea that frequency causes mass rather than the other way around is truly fascinating to me. The only thing I'm curious about is what the actual mechanism is?

@TheQuantumChef1 @JustinEchterna9 @CankayKoryak & my Quantum-enlightened crew likely to take a look -- and I'll comment/endorse -- when I have enough quality moments to assess it fully.

cc: @Art2000F @ana_couper @noahphi999 @ajitrai1670832
