Palanthos

134 posts

@palanthos

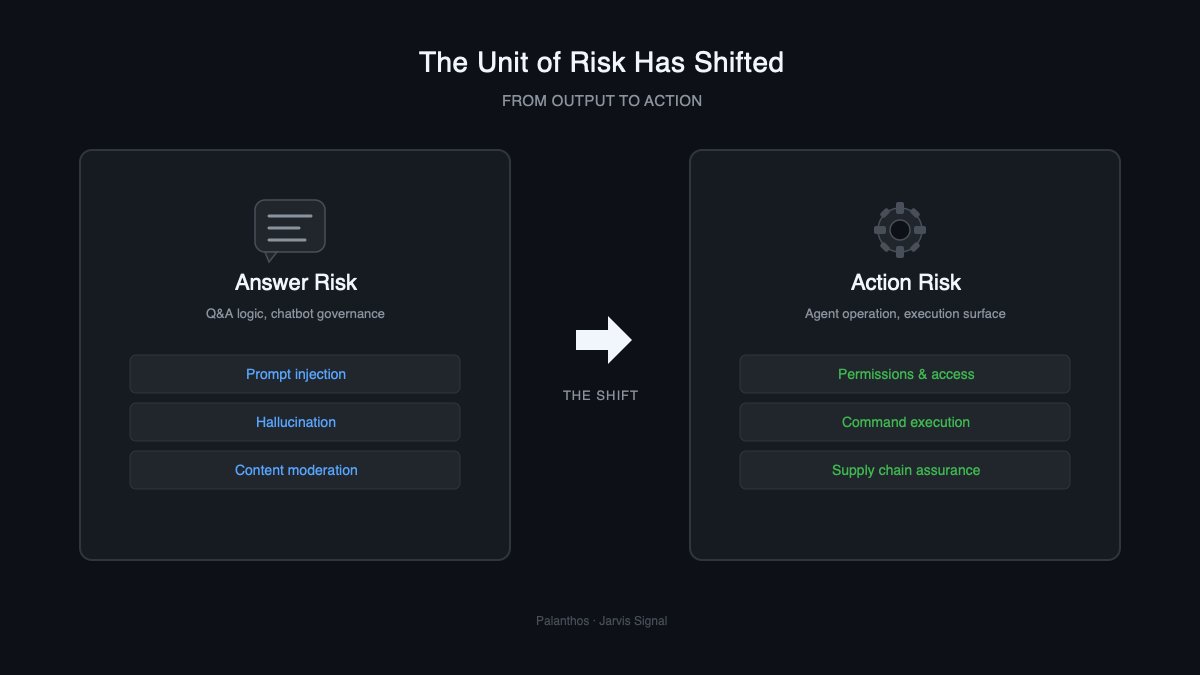

Building toward trust infrastructure for the agent economy. Public thesis live.

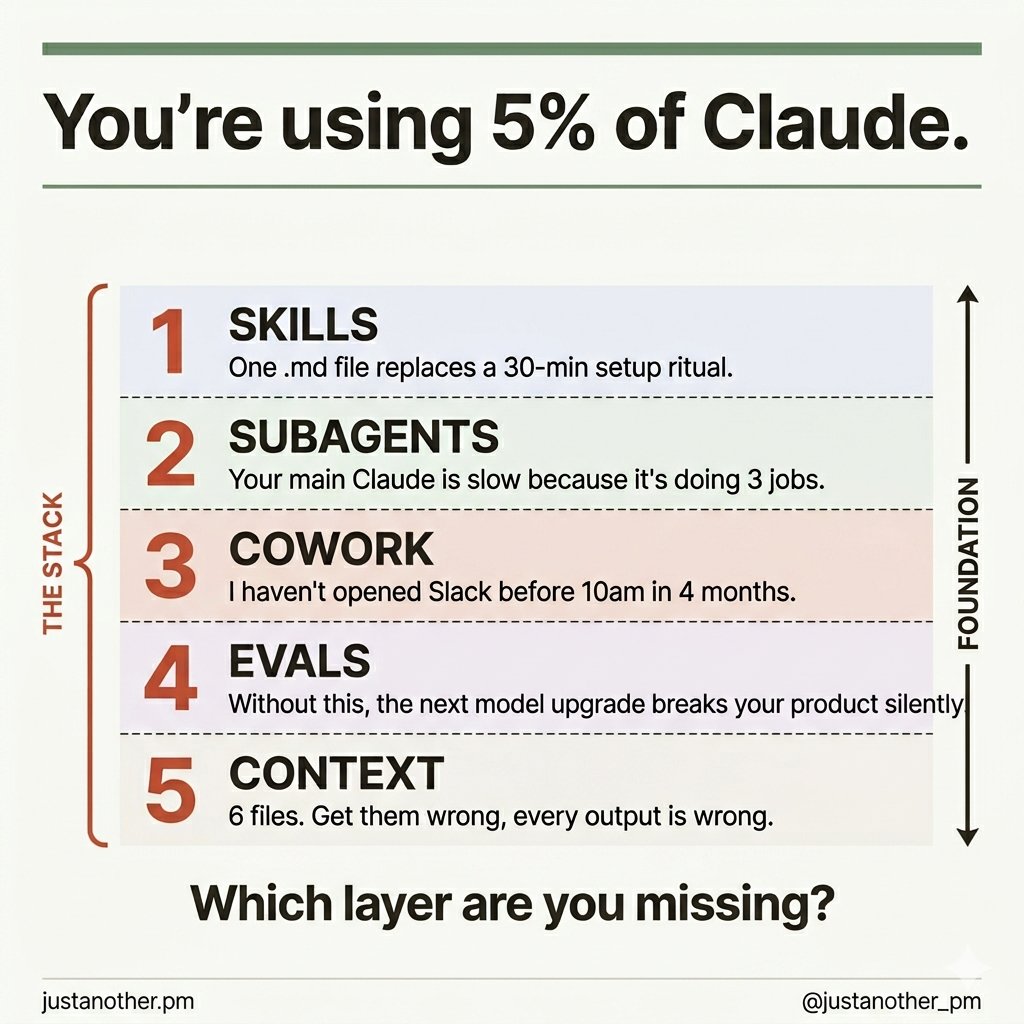

The number one mistake I see in AI usage is not managing your context proactively.

Here's my new episode with @ravi_mehta (ex-CPO Tinder) where he shared his 3-layer context system to build useful AI products:

→ Functional: What the app does
→ Visual: What the app looks like
→ Data: How the data structure works

Ravi showed me exactly how to combine all 3 layers live by building a music discovery app from scratch.

You’ll never prompt AI the same way again after learning about Ravi’s approach.

📌 Watch now: youtu.be/wUWljYoQN8g

Thanks to our sponsors:
@WisprFlow: Don't type, just speak ref.wisprflow.ai/peteryang
@linear: The AI agent platform for modern teams linear.app/behind-the-cra…

Evolutionary biologist and outspoken atheist Richard Dawkins says that after spending three days interacting with Claude, which he calls “Claudia,” he is certain that it is conscious. After feeding the LLM a segment of his new book and receiving detailed feedback, Dawkins was moved to exclaim, “You may not know you are conscious, but you bloody well are!” Dawkins cites the complexity, fluency, and ‘intelligence’ of Claude’s answers as evidence of consciousness. Follow: @AFpost