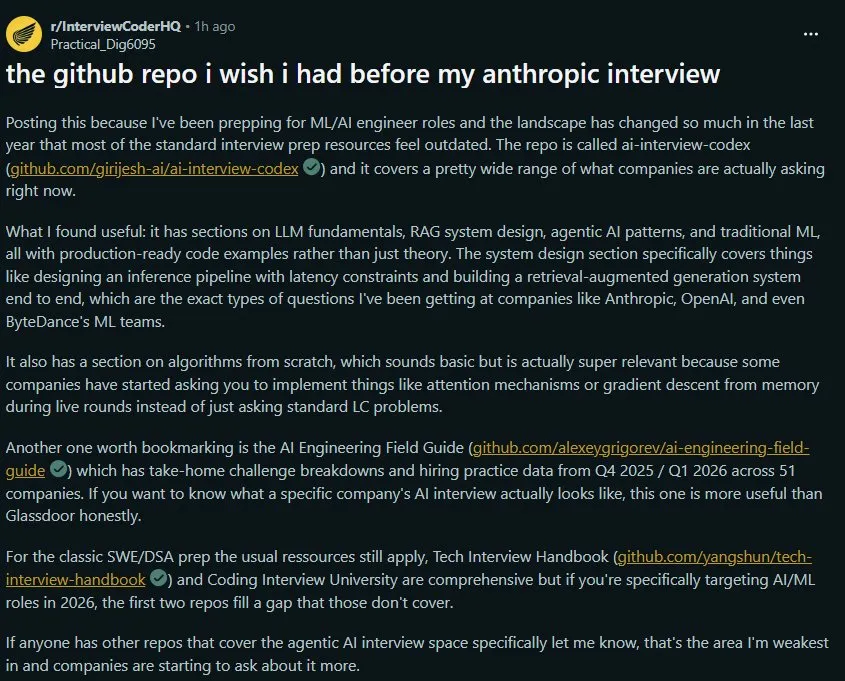

Sums up what happened in the past few days. Next goal is coming!

"Beware" is all I can say!

Massive respect to the Indian forces, thank you very much ❤️❤️ Also, huge respect for the PM and LOP, thank you! #IndianArmy @adgpi @IAF_MCC #indiannavy #RAW @narendramodi @RahulGandhi
