Weronika Ormaniec

🎓 Looking for MSc or PhD opportunities in Machine Learning for Fall 2026? Join my group at @Concordia and @Mila_Quebec! 🔍 Focus: autodiff, second-order optimization, and Hessian-based methods for LLMs & scientific ML. 📅 Apply by Dec 1: mila.quebec/en/prospective…

1/6 Hessian approximations are ubiquitous in deep learning, but working with them can get quite involved. We argue for using a linear operator interface for neural network curvature matrices and implement this in PyTorch in our library curvlinops. arxiv.org/abs/2501.19183/

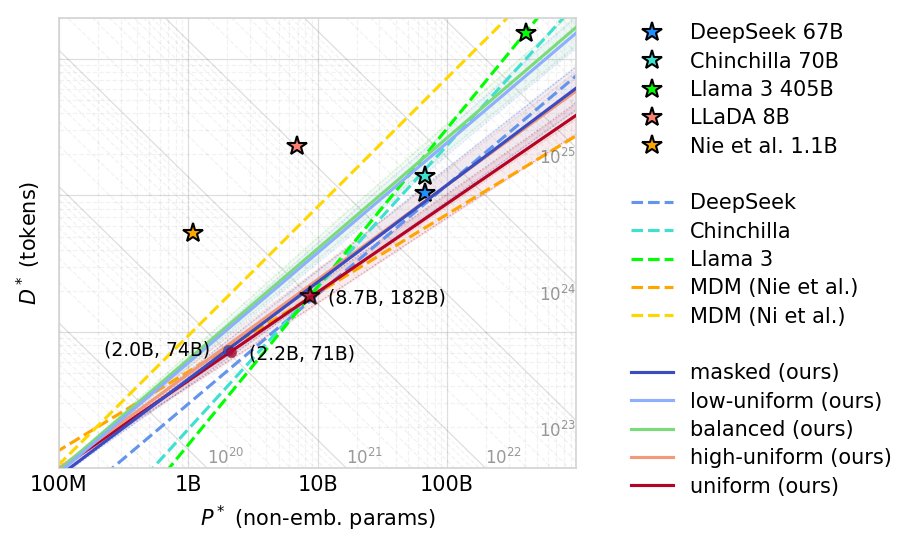

🚨 NEW PAPER DROP! Wouldn't it be nice if LLMs could spot and correct their own mistakes? And what if we could do so directly from pre-training, without any SFT or RL? We present a new class of discrete diffusion models, called GIDD, that are able to do just that: 🧵1/12