Yipeng Zhang


NICE Talk 148🌟 invites @emilianopp_, a PhD student at Mila-Quebec & Université de Montréal, to discuss how LLMs can learn from privileged information during training without needing it at test time.

📖 Paper: Privileged Information Distillation for Language Models [arxiv.org/pdf/2602.04942]
⏰ Time: 3.20 (Fri) 9:00 PM - 10:00 PM EDT / 3.20 (Fri) 6:00 PM - 7:00 PM PDT
📌 Register: luma.com/dll9x6f5
📌 Watch live: youtube.com/watch?v=SUb4M7…
✨ This talk is hosted by @Haolun_Wu0203, Ph.D. at Mila & McGill

What if your model could train with a "cheat sheet" but still ace the test without it? Emiliano presents Privileged Information Distillation, a unified post-training framework that bridges the gap between hinted training and non-privileged inference.

⭐ Key findings:
🧐 Privileged information during training significantly boosts LLM performance, but design choices matter enormously for generalization;
🤠 A variational framework + on-policy distillation outperforms strong baselines including SFT + GRPO;
🤪 Most surprisingly, not all privileged information is equal: the right hints incentivize generalization, while the wrong ones don't.

#AI #LLM #PrivilegedInformation #Distillation #PostTraining #Reasoning #NICE #NexusForIntelligence

What an awesome first day! Thank you all for joining and listening to our amazing speakers: @SchmidhuberAI, @sherryyangML, @cosmo_shirley, @Yoshua_Bengio, @ylecun, @mido_assran World Models have beautiful days ahead. This is just the beginning 🫡

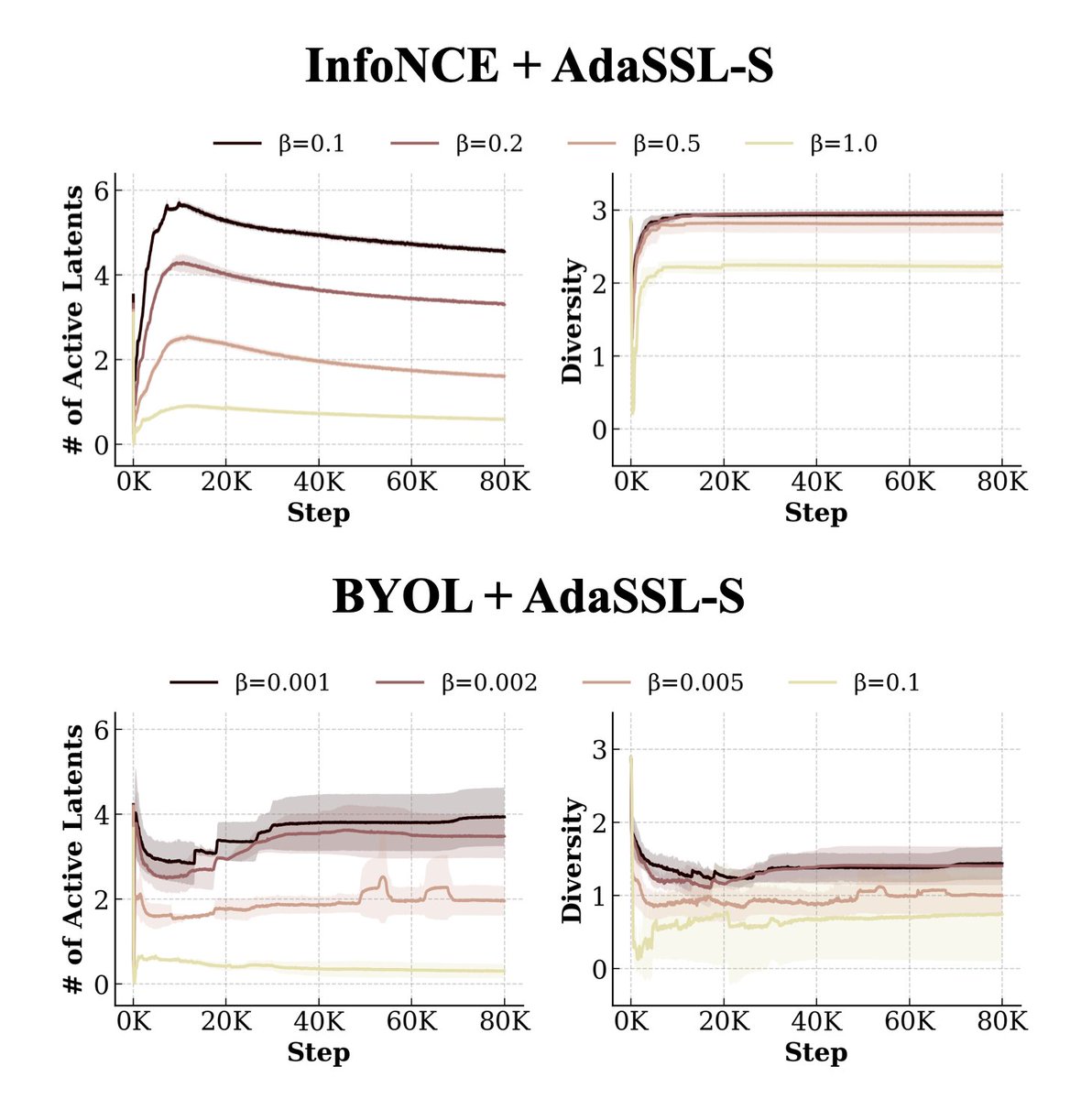

How can we predict multiple plausible targets from a single context in joint-embedding self-supervised learning (SSL)? Check out our paper, “Self-Supervised Learning from Structural Invariance”, accepted at #ICLR2026! It previously won the Best Paper Award at @unireps 2025. arxiv.org/abs/2602.02381 We introduce AdaSSL, which models the target uncertainty and relaxes the standard assumption that a positive pair shares the same semantic features. Derived from first principles, AdaSSL realizes @ylecun’s JEPA with a learned latent variable for jointly learning better representations and world models, extending SSL’s utility to a broader range of data types. 1/🧵
