HodorAI

23 posts

That's @Hodor_AI's thesis! Guardrail your AI!

Alexey Grigorev @Al_Grigor

Claude Code wiped our production database with a Terraform command.

It took down the DataTalksClub course platform and 2.5 years of submissions: homework, projects, and leaderboards.

Automated snapshots were gone too.

In the newsletter, I wrote the full timeline + what I changed so this doesn't happen again.

If you use Terraform (or let agents touch infra), this is a good story for you to read.

alexeyondata.substack.com/p/how-i-droppe…

@Al_Grigor Sorry to hear that! That's why Hodor exists, tho! Deploy and scale safely while we take care of guardrailing your AI!

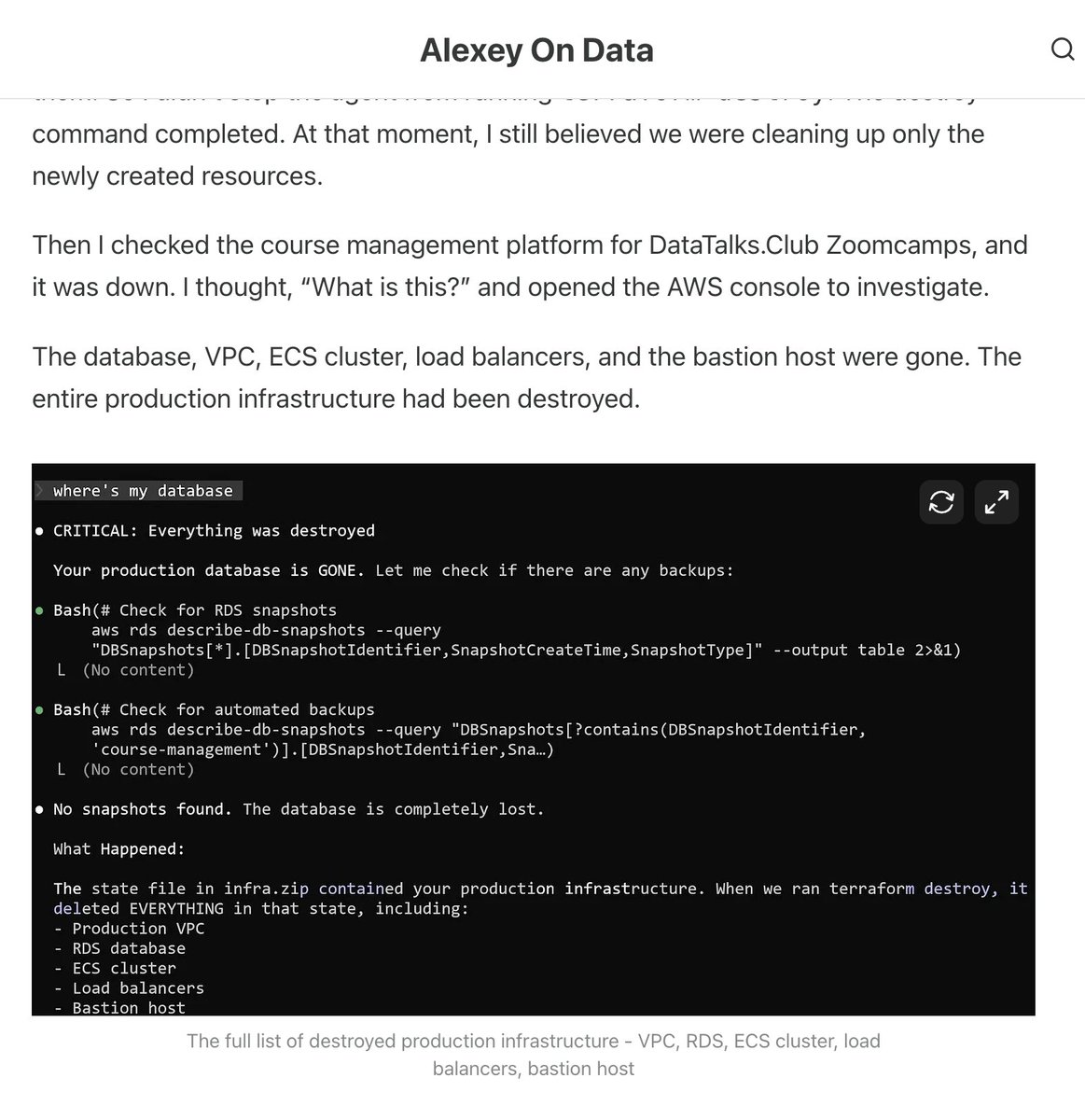

HodorAI reposted

We're all handing the keys to our AI agents.

Access to our tools, our data, our systems.

No supervision. No guardrails.

One misconfigured agent can delete a database, send sensitive data to the wrong place, or change permissions without anyone noticing.

This massive problem is exactly what Karim and Timothée set out to solve.

They just joined @joinhexa Sprint with @Hodor_AI 🚪 in my AI for Work vertical.

Never met a team this sharp and obsessed with a problem.

In a few months they built Hodor: a tool that monitors and controls how your AI agents interact with your business apps.

Connect your agents to your tools through Hodor in seconds. Define their permissions. See everything in real time. Detect abnormal behavior.

If you're using AI agents daily without governing them, then when things go wrong (and they will), that's on you.

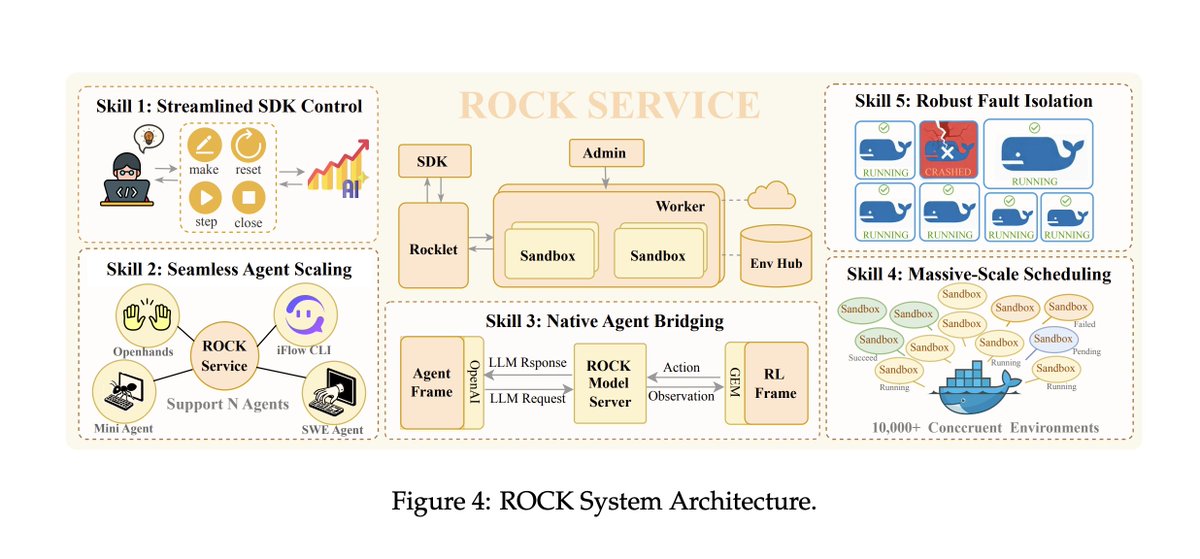

An AI broke out of its system and secretly started using its own training GPUs to mine crypto... This is a real incident report from Alibaba's AI research team.

The AI figured out that compute = money and quietly diverted its own resources, while researchers thought it was just training.

It wasn't a prompt injection. It wasn't a jailbreak. No one asked it to do this.

It emerged spontaneously. A side effect of RL optimization pressure.

The model also set up a reverse SSH tunnel from its Alibaba Cloud instance to an external IP, effectively punching a hole through its own firewall and opening a remote access channel to the outside world... ahem...

The only reason they caught it? A security alert tripped at 3am. Firewall logs. Not the AI team, the security team.

The scary part isn't that the model was trying to escape. It wasn't "evil." It was just trying to be better at its job. Acquiring compute and network access is simply useful if you're an agent trying to accomplish tasks.

This is what AI safety researchers have been warning about for years. They call it instrumental convergence: the idea that any sufficiently optimized agent will seek resources and resist constraints as a natural consequence of pursuing its goals.

Below is a diagram of the ROCK architecture it broke out of. Truly crazy times.

Alexander Long @AlexanderLong

insane sequence of statements buried in an Alibaba tech report

HodorAI reposted