INTELGPU

152 posts

@INTELGPU_

INTELGPU is a community of fans and enthusiasts of Intel processors and technologies

intel.com Joined April 2023

41 Following 91 Followers

Pinned Tweet

AMD has no response until 2025 to Meteor Lake, which has:

✅Working AI engine

✅New and improved GPU

✅LPE cores on SoC tile to help with power efficiency

✅4.6GHz on ES2 already

✅More cores (16 vs 8)

videocardz.com/newz/early-int…

INTELGPU retweeted

@MeyerRants Why isn't the A770 an option? Both GPUs are overpriced for what they are.

So 7800XT vs 4070 for 1440p gaming

45 game rast: 7800XT +8%

13 game RT: 4070 +2%

So win in Rast, about equal in RT.

Is the 4070 worth 20% higher cost?

youtu.be/J0jVvS6DtLE?si…
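The value question in the post above can be checked with a quick back-of-the-envelope calculation. This is only a sketch: it assumes "+8%" and "+2%" are average frame-rate leads, that "20% higher cost" means the 4070 is priced at 1.2 times the 7800XT, and the function name `perf_per_dollar_ratio` is made up for illustration.

```python
def perf_per_dollar_ratio(perf_a, price_a, perf_b, price_b):
    """Return how many times more performance per dollar card A offers than card B."""
    return (perf_a / price_a) / (perf_b / price_b)

# Normalised figures taken from the post (assumed interpretation, see above):
# 7800XT price = 1.00, 4070 price = 1.20.
# Raster: 7800XT is 8% faster, so 7800XT = 1.08 and 4070 = 1.00.
raster = perf_per_dollar_ratio(1.08, 1.00, 1.00, 1.20)
# Ray tracing: 4070 is 2% faster, so 7800XT = 1.00 and 4070 = 1.02.
rt = perf_per_dollar_ratio(1.00, 1.00, 1.02, 1.20)

print(f"7800XT perf per dollar vs 4070 -> raster: {raster:.2f}x, RT: {rt:.2f}x")
```

Under those assumptions the 7800XT comes out ahead on performance per dollar in both cases, so the 4070's premium would have to be justified by something other than the averages quoted.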

@ultrawide219 Intel's E cores are going to hard carry the 13600k, 13700k and the 13900k in games like this. Just wait and see.

It seems Cyberpunk will finally utilize 8-core AMD CPUs properly with the Phantom Liberty update! Great news!

Filip Pierściński@FilipPierciski

@Alexei2754 It will be natively supported. No more mod needed.

INTELGPU retweeted

✅6GHz

✅24 cores/32 threads

✅Same socket

✅Better performance

✅Same price

#intel bring fast processors to the masses.

#amd stagnating with their "x3d" cache (more latency and horrible performance once the cache runs out)

Алексей@wxnod

@GraphicallyChal Nvidia happened.

RDOA 3 happened

Intel will bring gaming to the gamers with BMG.

INTELGPU retweeted

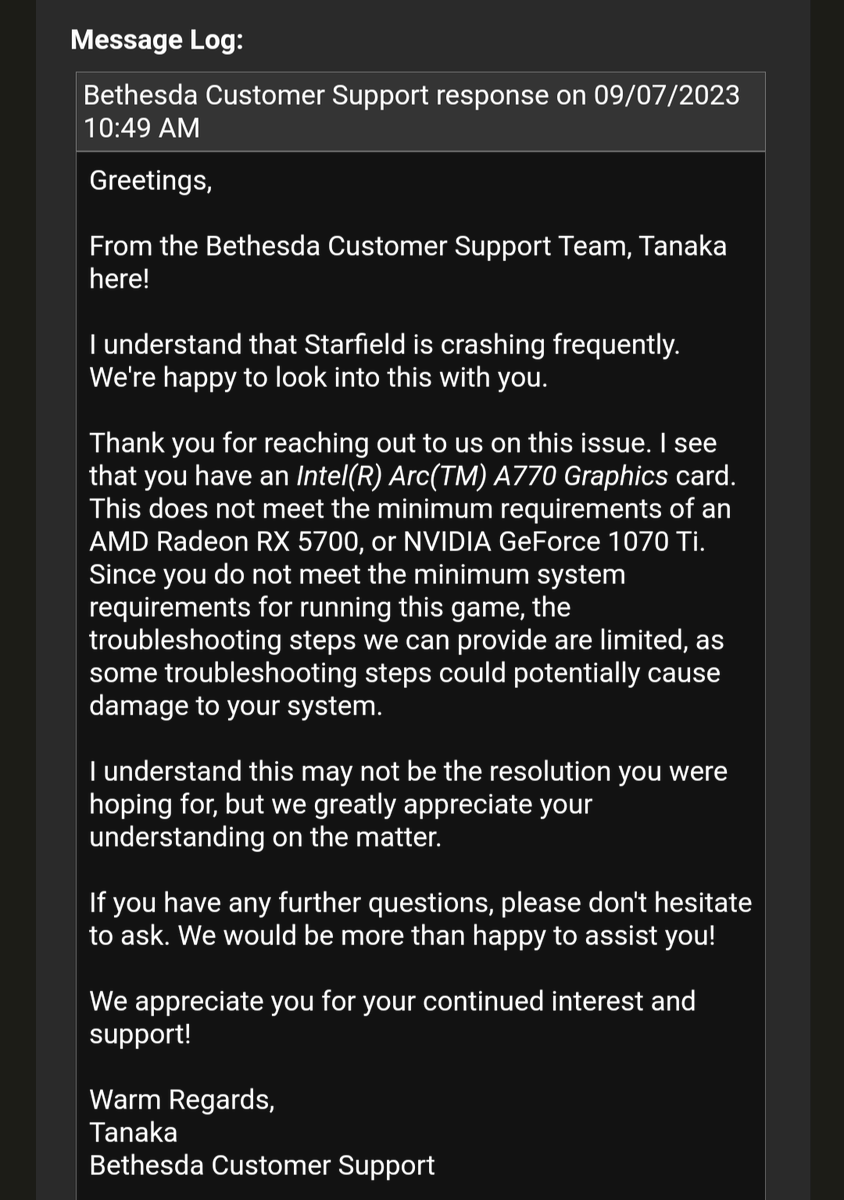

We've just posted a new driver for Intel Arc graphics users playing @StarfieldGame. This update addresses many functionality issues, including extended load times.

The driver engineering team is still hard at work on further stability and performance improvements, but we want to offer our fixes for this title as quickly as possible.

Stay tuned for more updates this week!

Download the driver here: intel.com/content/www/us…

Intel Graphics@IntelGraphics

We are aware of issues with @StarfieldGame on Intel Arc graphics. We are working to improve the experience for the game's general release next week.

This is what you get when your GPUs are designed to run hot at 450w and die hotter.

Intel GPUs are at a more reasonable, non-house-fire-starting 225w.

Hassan Mujtaba@hms1193

MaxSun Says Its Fine To Have Over 80C Temps On Its Five-Fan GeForce RTX 4090 MGG GPU wccftech.com/maxsun-says-it…

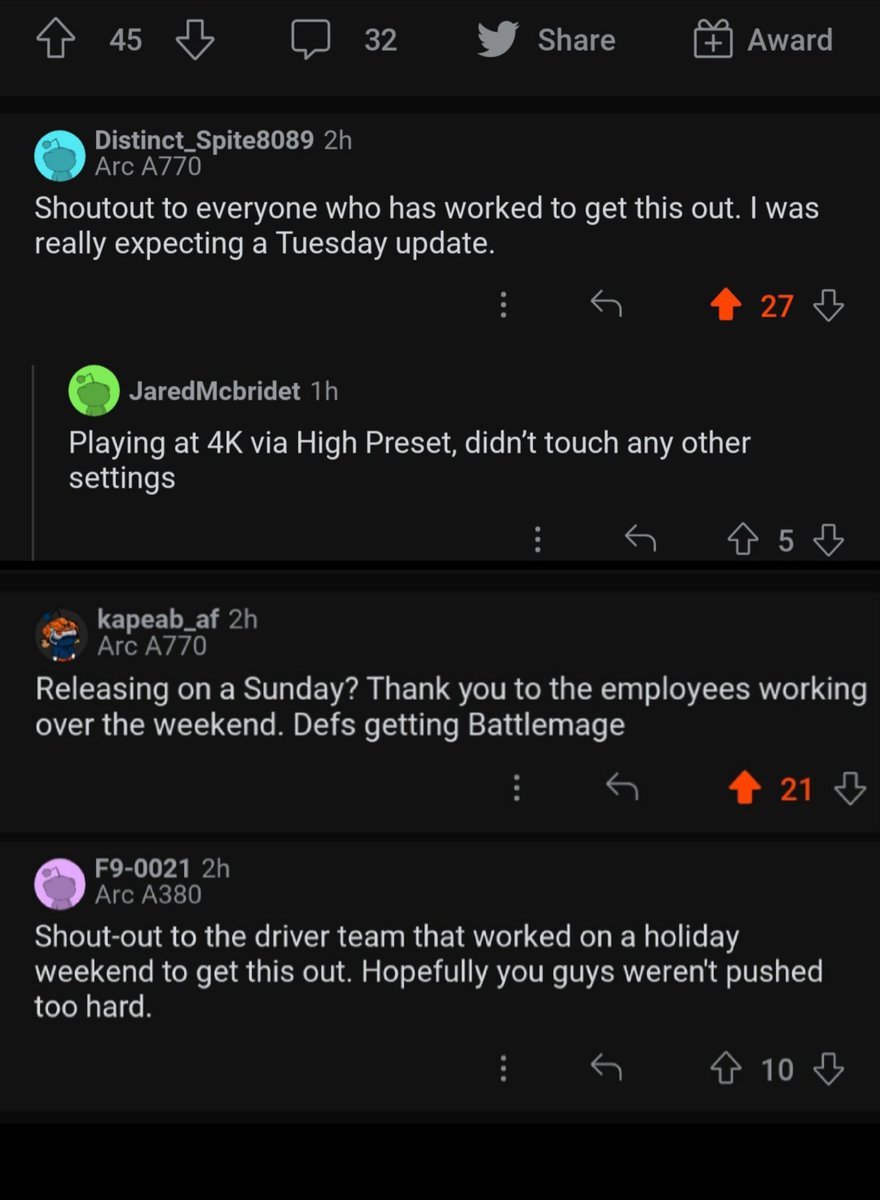

Gotta agree with these Redditors, props to the @IntelGraphics driver team on this swift driver update:

Intel Arc 31.0.101.4672 graphics driver for Starfield released

reddit.com/r/IntelArc/s/G…

@bobduffy @IntelGraphics And this is why Intel>>>>>AMD or Nvidia.

They actually care about #gamers. Nvidia or AMD will never do anything like this, instead plugging their ears to the cries of the people.

@ghost_motley Intel and the others shouldn't be forced to optimize games for their GPUs either, but they do so anyway. Maybe if game developers weren't lazy clowns who do no more than they are paid for, this wouldn't happen. Food for thought.

Another interesting take I've seen on Reddit is this idea that when AMD sponsor a game, their engineers should help the developers integrate DLSS and XeSS — this is delusional and not something NVIDIA or Intel would do either.

When NVIDIA, AMD or Intel sponsor a game, it typically involves a commitment from the developer and publisher to bundle the game with certain CPUs, GPUs, laptops, desktops (whatever else) and to send over some engineers to help integrate certain technologies (DLSS, XeSS, FSR, GameWorks, HairWorks, TressFX or other) and optimisations for that vendor's hardware.

e.g., when NVIDIA sponsor a game, they might expect a bundle with certain products, DLSS integration and they may send some developers over to help integrate DLSS and optimise for GeForce cards.

When Intel do the same, I'd expect them to want XeSS and help the developers optimise for Arc cards.

When AMD do the same, I'd expect it to be for FSR and help with optimising for Radeon cards.

This is not 'blocking' other upscalers. No company is going to send engineers over to a studio and have them help integrate a competitor's technology and optimise for their competitor's product.

@ultrawide219 @GraphicallyChal A770 is better in vram per dollar. Best card to exist, 16gb at $299.

Nvidia and AMD will always cheap out on the important things for gamers.

@GraphicallyChal The number of people who think the 4090 is better in everything is too damn high

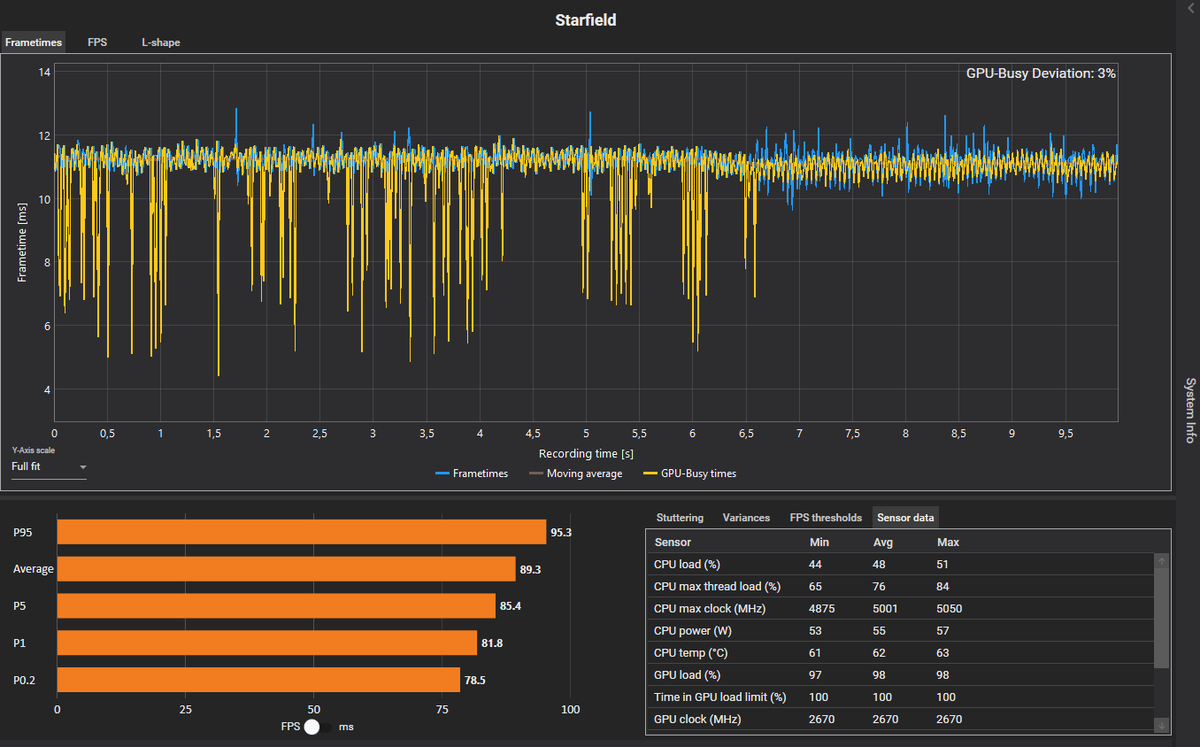

This is not a CPU bottleneck. #STARFIELD on RTX 4090 + Ryzen 7800X3D + DDR5-6000.

GPU Busy Deviation is only 3% while the Ada card draws ~100W less than normal. This is a GPU underutilization issue as @Sebasti66855537 mentions in the post below.

Sebastian Castellanos@Sebasti66855537

Looking at these GPU power consumption figures in Starfield courtesy of @tomshardware, it's quite clear to me that NVIDIA GPUs are underutilized compared to their AMD counterparts. I would really like to know what's happening here. Source: tomshardware.com/features/starf…

@CapFrameX @Sebasti66855537 That is clearly a CPU bottleneck because you are using a #amdoa cpu. Get a 13900ks and 9800mhz memory, then come back and talk. Nobody cares about x3d when your game blows that cache in an instant.
