Louis Kirsch

313 posts

@LouisKirschAI

Driving the automation of AI Research. Project Lead & Research Scientist @GoogleDeepMind. PhD @SchmidhuberAI. @UCL, @HPI_DE alumnus. All opinions are my own.

Introducing The AI Scientist: The world’s first AI system for automating scientific research and open-ended discovery! sakana.ai/ai-scientist/

From ideation, writing code, running experiments and summarizing results, to writing entire papers and conducting peer-review, The AI Scientist opens a new era of AI-driven scientific research and accelerated discovery. Here are 4 example Machine Learning research papers generated by The AI Scientist.

We published our report, The AI Scientist: Towards Fully Automated Open-Ended Scientific Discovery, and open-sourced our project! Paper: arxiv.org/abs/2408.06292 GitHub: github.com/SakanaAI/AI-Sc…

Our system leverages LLMs to propose and implement new research directions. Here, we first apply The AI Scientist to conduct Machine Learning research. Crucially, our system is capable of executing the entire ML research lifecycle: from inventing research ideas and experiments and writing code, to executing experiments on GPUs and gathering results. It can also write an entire scientific paper, explaining, visualizing and contextualizing the results.

Furthermore, while an LLM author writes entire research papers, another LLM reviewer critiques the resulting manuscripts to provide feedback that improves the work, and to select the most promising ideas to develop further in the next iteration cycle. This leads to continual, open-ended discoveries, emulating the human scientific community.

As a proof of concept, our system produced papers with novel contributions in ML research domains such as language modeling, diffusion and grokking.

We (@_chris_lu_, @RobertTLange, @hardmaru) proudly collaborated with the @UniOfOxford (@j_foerst, @FLAIR_Ox) and @UBC (@cong_ml, @jeffclune) on this exciting project.

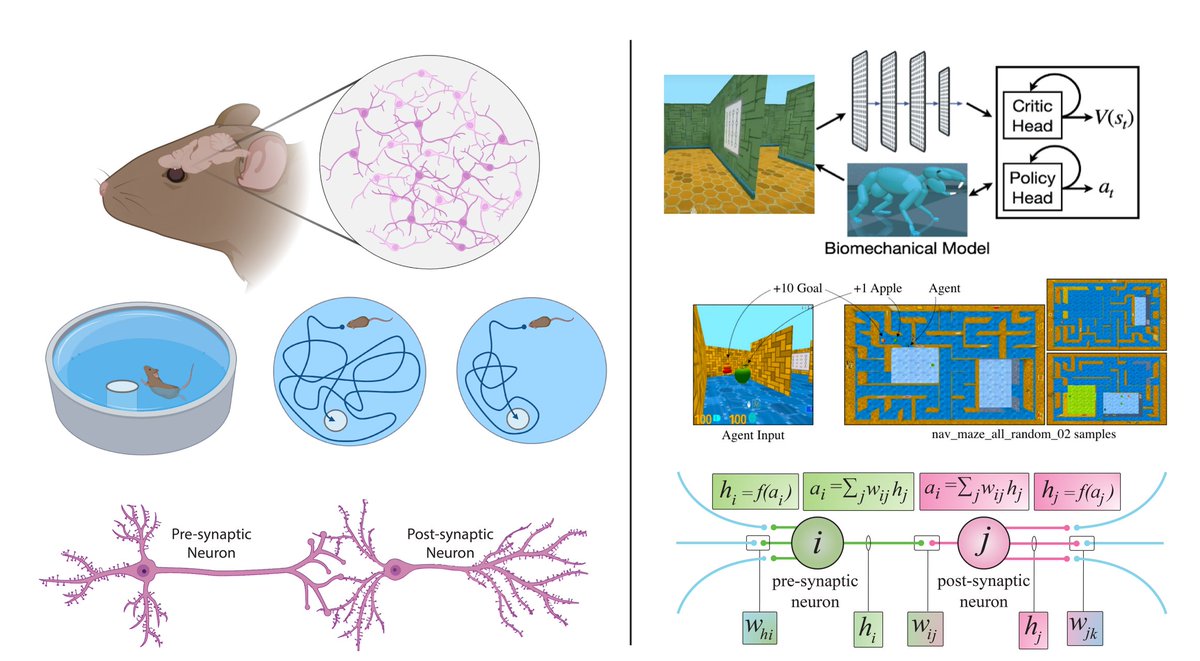

Emergent in-context learning with Transformers is exciting! But what is necessary to make neural nets implement general-purpose in-context learning? 2^14 tasks, a large model + memory, and initial memorization to aid generalization. Full paper arxiv.org/abs/2212.04458 🧵👇(1/9)

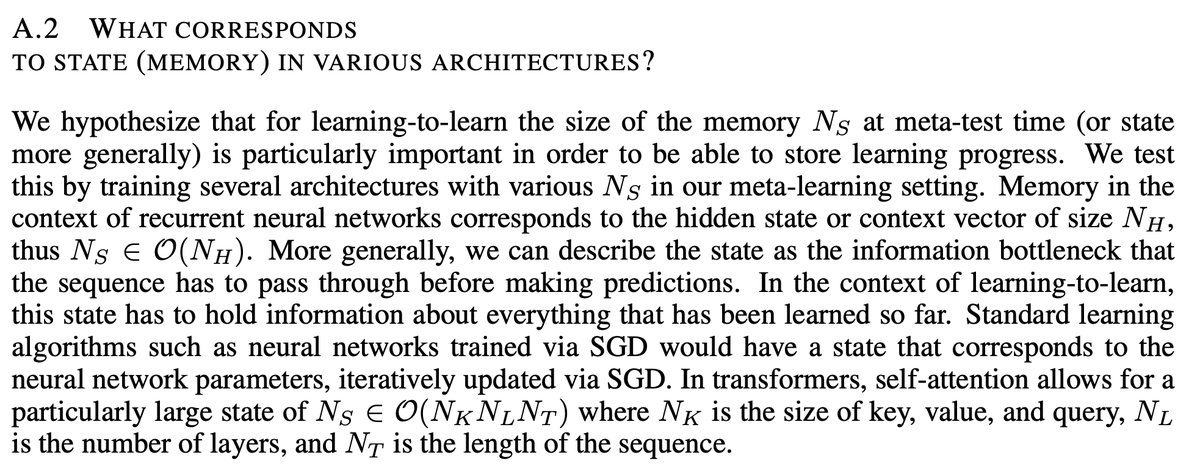

Does this only work for Transformers? No! We tried a range of architectures. Unlike what scaling laws would suggest, the number of parameters does not predict learning-to-learn ability very well. Instead, what matters is how much memory the model has (the size of its activation bottleneck). (8/9)
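
A minimal sketch, not taken from the paper, of why parameter count and memory can come apart. It assumes a textbook single-layer LSTM with one bias vector per gate, and it treats "memory" as the activations carried between time steps; both function names are hypothetical, and the paper's own measure of memory may differ.

```python
def lstm_param_count(input_size: int, hidden_size: int) -> int:
    """Weights and biases of a textbook single-layer LSTM.

    Four gates, each with an input matrix, a recurrent matrix and one bias
    vector. Some libraries keep two bias vectors per gate, which changes
    the count slightly.
    """
    per_gate = input_size * hidden_size + hidden_size * hidden_size + hidden_size
    return 4 * per_gate


def lstm_state_size(hidden_size: int) -> int:
    """Activations carried between time steps: hidden state h plus cell state c."""
    return 2 * hidden_size


# Illustrative sizes only: parameters grow roughly quadratically with the
# hidden size, while the carried state grows linearly.
for hidden in (64, 256, 1024):
    print(hidden, lstm_param_count(32, hidden), lstm_state_size(hidden))
```

Under these assumptions, two models with similar parameter counts can carry very different amounts of state, which is the quantity the post points to.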