somebody made a huggingface model visualizer!! just plug in the url and explore at any granularity

Nando de Freitas

@NandoDF

I seek to understand intelligence and awareness, and build AI aligned with compassion, freedom, universal human empowerment, and progress in law & science.

Someone’s opinion article. My opinion: It’s all about scale now! The Game is Over! It’s about making these models bigger, safer, compute efficient, faster at sampling, smarter memory, more modalities, INNOVATIVE DATA, on/offline, … 1/N thenextweb.com/news/deepminds…

Update on Erdős Problem 1196: In joint work, we refined and adapted the proof method from GPT-5.4 Pro to give proofs of several additional problems. This includes another 60-year-old conjecture by Erdős, Sárközy, and Szemerédi. A proof is valued not just by the problem it solves, but by what new avenues it opens up. This is perhaps one of the first examples of an AI-generated proof having downstream impacts, which we are still exploring. We are announcing the result today at the Future of Mathematics Symposium (see links below)

BREAKING: Sequoia and Lightspeed co-lead Europe's largest seed funding round with $1.1B at $5.1B post for ex-DeepMind David Silver's Ineffable Intelligence. David is committing to giving away 100% of the money he makes from his Ineffable equity via Founders Pledge. It is the biggest pledge in their history and is likely to amount to multiple billions.

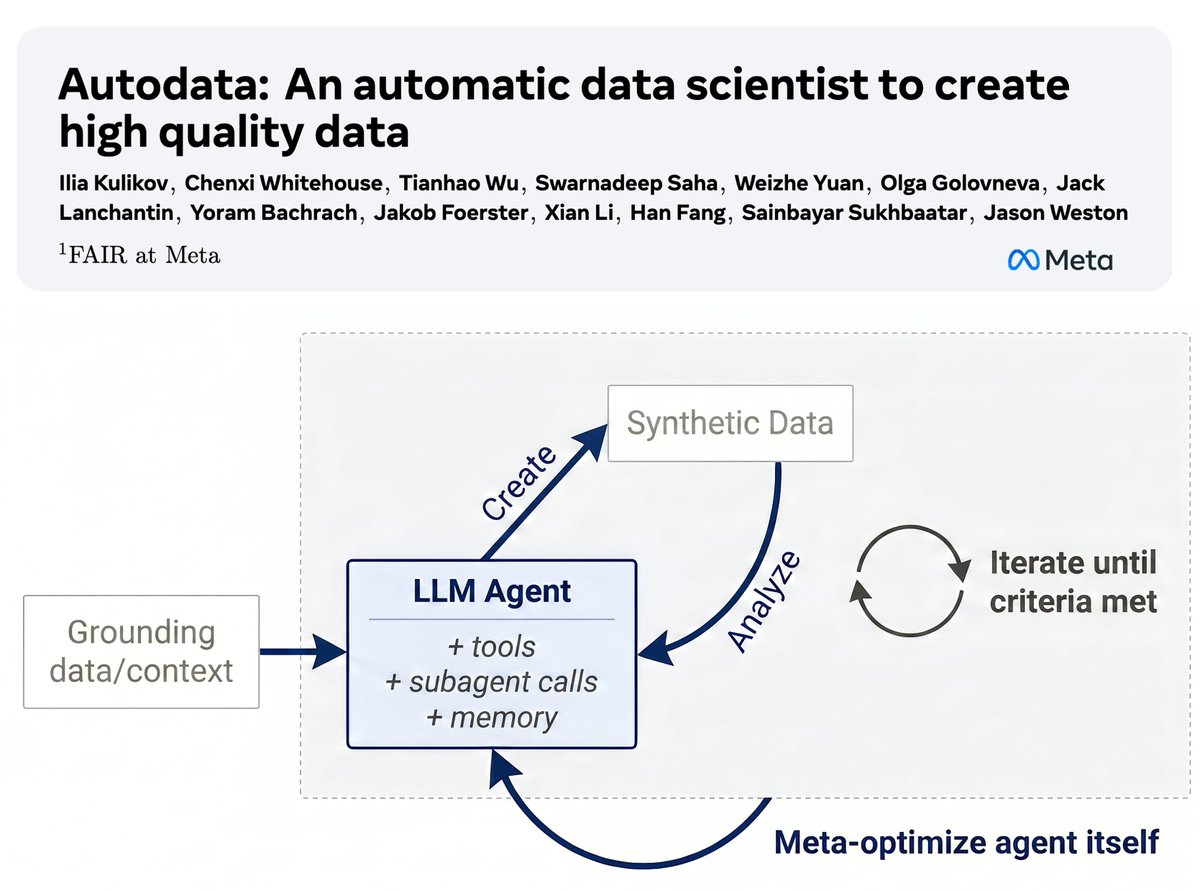

AI tutorial. Self-training can be made possible by inverting models (the input becomes the output) and alternating between the two directions. For example, want to teach your vision model to count? Easy: take advantage of coding models and image editing to create a dataset for counting! Step 1. Use a coding model to generate a diagram. Step 2. Convert the diagram to a real image. I’ve illustrated this with one example. Any of the models out there can be used to achieve this. This is well known. An important ingredient in training good image-generation models is a VLM that is good at writing descriptive captions. This in turn can be used to train better VLMs.
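A rough sketch of what Step 1 could produce, not the method from the post itself: here a hand-written generator stands in for the coding model and emits SVG diagrams whose object count is known by construction, so every diagram arrives with a free label. The names `make_counting_diagram` and `make_dataset` are hypothetical, and Step 2 (turning each diagram into a realistic image with an image-editing model) is deliberately left out because it depends on whichever model you pick.

```python
import random


def make_counting_diagram(count, size=256, radius=12, seed=None):
    """Return an SVG string containing exactly `count` non-overlapping circles.

    Hypothetical stand-in for the diagram a coding model would write.
    """
    rng = random.Random(seed)
    centers = []
    attempts = 0
    while len(centers) < count:
        attempts += 1
        if attempts > 10_000:
            raise ValueError("could not place circles; lower count or radius")
        x = rng.uniform(radius, size - radius)
        y = rng.uniform(radius, size - radius)
        # Reject overlapping candidates so the visible count is unambiguous.
        if all((x - cx) ** 2 + (y - cy) ** 2 >= (2 * radius) ** 2
               for cx, cy in centers):
            centers.append((x, y))
    circles = "".join(
        f'<circle cx="{x:.1f}" cy="{y:.1f}" r="{radius}" fill="tomato"/>'
        for x, y in centers
    )
    return (
        f'<svg xmlns="http://www.w3.org/2000/svg" '
        f'width="{size}" height="{size}">{circles}</svg>'
    )


def make_dataset(n_examples, max_count=8, seed=0):
    """Build (diagram, label) records; the label is the known circle count."""
    rng = random.Random(seed)
    dataset = []
    for i in range(n_examples):
        count = rng.randint(1, max_count)
        svg = make_counting_diagram(count, seed=rng.randrange(2**32))
        # Step 2 (assumption, not implemented): pass `svg` to an image-editing
        # model to obtain a realistic image that keeps the same count.
        dataset.append({"id": i, "diagram_svg": svg, "label": count})
    return dataset


if __name__ == "__main__":
    for record in make_dataset(3):
        print(record["id"], record["label"], len(record["diagram_svg"]))
```

The point of the design is that the label is fixed before the image exists, so no human annotation is needed; the realistic-image step only has to preserve the count.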