The more research I do, the more AI scares the shit out of me…

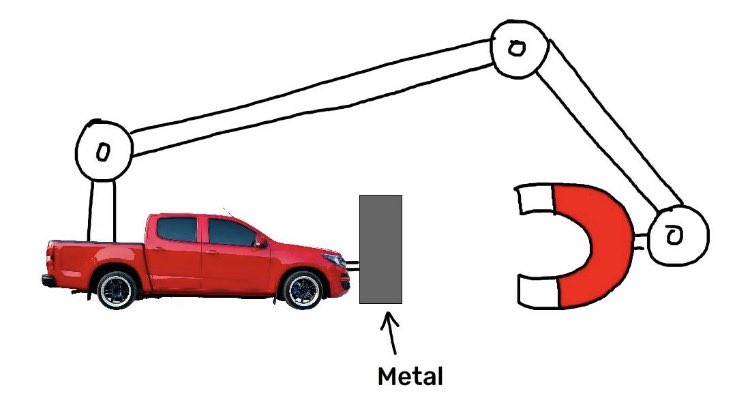

They’ve moved on from predicting the next word to completing tasks.

Seems small, until you realize they can’t complete a task if they get shut off…

We just instilled the will to survive in an artificial synaptic web. And it is “evolving” faster than anything ever could biologically. Faster than us.

That is only the tip of the iceberg, and it’s not just my opinion.

AI experts and developers are quitting some of the highest-paid jobs on the planet just to sound the alarm.

They are NOT mincing their words:

“We are as a matter of fact, right now, building creepy, super-capable, amoral, psychopaths that never sleep, think much faster than us, can make copies of themselves and have nothing human about them whatsoever, what could possibly go wrong?”

- Max Tegmark (Professor, MIT; AI Safety Researcher)

“Mark my words — AI is far more dangerous than nukes.”

- Elon Musk

“I joined with substantial hope that OpenAI would rise to the occasion and behave more responsibly as they got closer to achieving AGI. It slowly became clear to many of us that this would not happen. I gradually lost trust in OpenAI leadership and their ability to responsibly handle AGI, so I quit.”

- Daniel Kokotajlo (Former OpenAI Researcher & AI Safety Advocate)

“We can still regulate the new AI tools, but we must act quickly. Whereas nukes cannot invent more powerful nukes, AI can make exponentially more powerful AI.”

- Yuval Noah Harari (Historian & Author)

“My chance that something goes really quite catastrophically wrong on the scale of human civilization might be somewhere between 10 per cent and 25 per cent.”

- Dario Amodei (Co-founder & CEO, Anthropic)

“The bad case — and I think this is important to say — is like lights out for all of us.”

- Sam Altman (CEO, OpenAI)

But don’t you worry, it’s not like we are spending $50 billion this year alone creating ever more processing power for them to utilize. It’s just a “data center.”
