@PrefShares you can say this when SPX -15%, not -5%

We sold $META over a year ago during a record-breaking 20-plus day winning streak. Our conviction only strengthened recently when the company posted blockbuster earnings last quarter, only for the stock to totally disregard it.

“If all the news is great and the stock is not acting well, GET THE HELL OUT, which is a pretty simple thing most analysts don't know.” - Stanley Druckenmiller

We still believe the share price will disappoint investors for years to come after rising more than 8.5X from its lows in late 2022. We would become interested buyers again, but only closer to $350 per share (plus or minus).

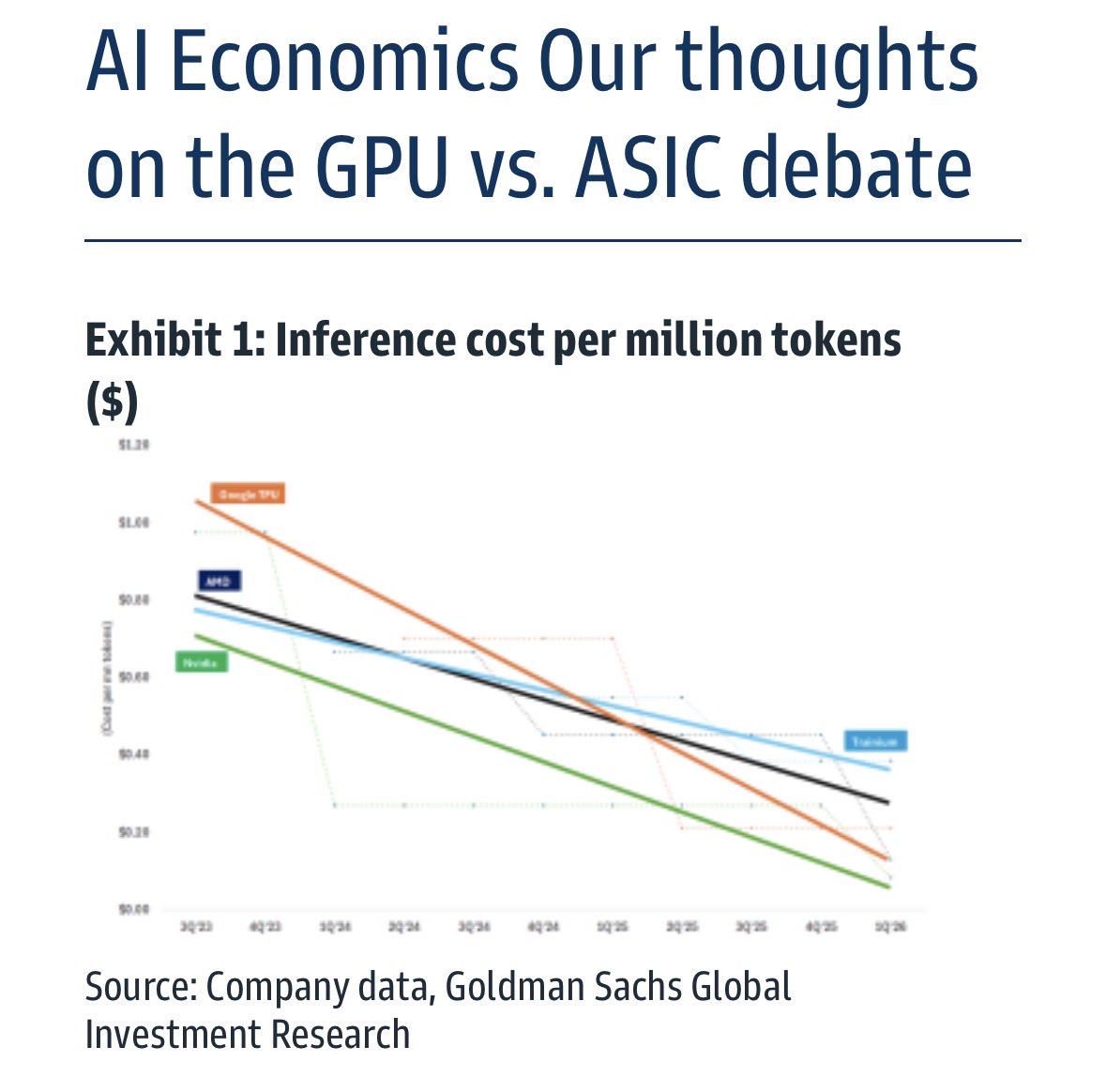

.@dylan522p lays out how we know the hard upper bound on how much compute can be produced annually by 2030: around 200 GW/year. That’s a crazy number (there’s about 20 GW of AI deployed in the world right now), but it’s nowhere near enough to satisfy Sam/Elon/Dario/Demis’s ambitions.

Lots of things in the supply chain can be scaled up over 4 years, including things that other people think are bottlenecks, like datacenter power or fab clean room space. But the thing that’s inflexible over that timeline is the number of EUV tools. Dylan forecasts that production of ASML’s EUV tools will scale from 60 per year now to about 100 per year by the end of the decade - which means something like 700 total machines running in 2030.

For a fab to make a GW worth of the Rubin chips that NVIDIA is deploying later this year, it needs to make 55,000 3nm wafers, 6,000 5nm wafers, and 170,000 memory wafers. Each 3nm wafer needs about 20 EUV passes, so about 1.1 million passes per GW for 3nm alone. Adding on 5nm and memory, you need two million passes. Each tool can do 75 passes per hour, so with 90% uptime that’s around 600k passes per year - so a single machine can make less than a third of a GW in a year.

So in 2030, we have 700 total machines, each making 0.3ish GW a year, which means we can produce 200 GW of compute a year. That’s a lot. But Sam Altman wants a gigawatt a week by the end of the decade. Anthropic and Google will be wanting about the same. And Elon wants to be putting 100 GW in space every year. Any one of these players could maybe get what they need, but not all of them.
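
The arithmetic in that post can be checked with a minimal Python sketch. Every input is a figure quoted in the post; the function and constant names are made up for illustration. The demand side is a rough assumption: "a gigawatt a week" is read as 52 GW/year, and "about the same" for Anthropic and Google is read as 52 GW/year each.

```python
# Back-of-envelope check of the EUV-limited compute ceiling described in the post.
# All inputs are the post's quoted figures; names here are illustrative only.

EUV_TOOLS_2030 = 700              # total EUV machines running in 2030
WAFERS_3NM_PER_GW = 55_000        # 3nm wafers per GW of Rubin chips
EUV_PASSES_PER_3NM_WAFER = 20     # approximate EUV passes per 3nm wafer
TOTAL_PASSES_PER_GW = 2_000_000   # post's rounded total incl. 5nm and memory
PASSES_PER_HOUR = 75              # per tool
UPTIME = 0.90


def passes_3nm_per_gw() -> int:
    """EUV passes needed for the 3nm wafers in one GW."""
    return WAFERS_3NM_PER_GW * EUV_PASSES_PER_3NM_WAFER


def passes_per_tool_per_year() -> float:
    """EUV passes one tool completes in a year at the stated uptime."""
    return PASSES_PER_HOUR * 24 * 365 * UPTIME


def gw_per_tool_per_year() -> float:
    """GW of compute one tool can support per year."""
    return passes_per_tool_per_year() / TOTAL_PASSES_PER_GW


def annual_gw_capacity(tools: int = EUV_TOOLS_2030) -> float:
    """Upper bound on GW of compute producible per year with `tools` machines."""
    return tools * gw_per_tool_per_year()


# ASSUMPTION: demand figures are a loose reading of the post, not sourced data.
DEMAND_GW_PER_YEAR = {
    "OpenAI": 52,      # "a gigawatt a week"
    "Anthropic": 52,   # "about the same"
    "Google": 52,      # "about the same"
    "Elon": 100,       # "100 GW in space every year"
}

if __name__ == "__main__":
    print(f"3nm passes per GW:      {passes_3nm_per_gw():,}")
    print(f"Passes per tool-year:   {passes_per_tool_per_year():,.0f}")
    print(f"GW per tool-year:       {gw_per_tool_per_year():.2f}")
    print(f"2030 capacity (GW/yr):  {annual_gw_capacity():.0f}")
    print(f"Combined demand (GW/yr): {sum(DEMAND_GW_PER_YEAR.values())}")
```

Under these inputs the unrounded ceiling comes out slightly above the post's round 200 GW, and the assumed combined demand exceeds it, while each single player's ask fits under it.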

Interesting research on whether free cash flow generation is "real" once you account for stock-based compensation (SBC). The results are not what most would think or may want to hear. SBC doesn't matter to free cash flow. And in fact, unadjusted FCF metrics have generated better alpha than metrics that adjust out SBC.

The $NVDA numbers are getting so big and absurd and execution has been so strong that the only real bear case left is “how much bigger can it get?” or “how much capex is left?” Which probably means it eventually goes higher than most people expect.

If we are going to create ASI, why can’t the ASI make a better company than OpenAI?