Ruoyu Sun

130 posts

@RuoyuSun_UI

Associate Prof at CUHK-Shenzhen. Prev: assistant prof @UofIllinois; postdoc @Stanford; visitor @AIatMeta. Work on optimization of machine learning, DL, LLM.

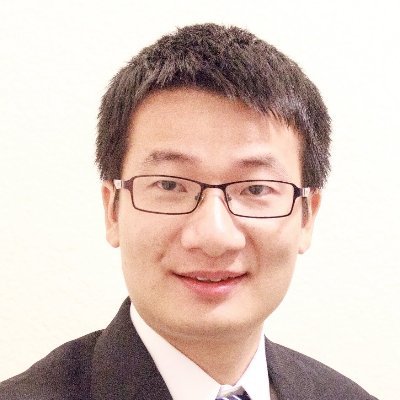

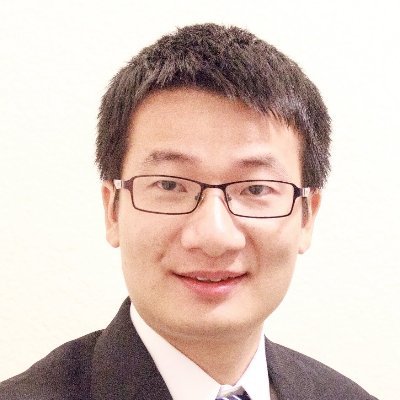

We’re excited to share our work "A Model Can Help Itself: Reward-Free Self-Training for LLM Reasoning". An earlier version of this work has been on arXiv for a few months. We added more experiments and revised it to this new title. The recipe is simple: the model samples its own responses at low temperature, learns from them with ordinary SFT training, and repeats. No reward. No verifier. No fancy objective beyond standard SFT. On Qwen2.5-Math-7B, mean Pass@1 over 6 math benchmarks improves 22.7 → 39.5. Note that mean Pass@32 also improves 61.0 → 67.9, suggesting that this simple reward-free procedure unlocks more of the model’s existing reasoning potential. See the updated paper directly at: github.com/ElementQi/SePT… The arXiv link is: arxiv.org/abs/2510.18814 The updated version will appear on arXiv shortly. @Phanron_xli
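The sample, SFT, repeat loop described above can be illustrated with a toy sketch. This is not the paper's code: the "model" here is just a categorical distribution over three candidate responses, and every name and setting (`self_train`, `sft_step`, the temperature, learning rate, and sample count) is a hypothetical choice made for illustration. It only shows the mechanics: sampling at a temperature below 1 yields a sharper distribution than the model's own, and fitting those samples with a plain cross-entropy step pulls the model toward it.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Convert logits to probabilities at the given sampling temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_responses(logits, temperature, n, rng):
    """Draw n response indices from the model at the given temperature."""
    probs = softmax(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=n)


def sft_step(logits, samples, lr):
    """One gradient step on the mean cross-entropy of the sampled responses.

    For a categorical model the gradient w.r.t. the logits is
    (model probability at T=1) minus (empirical frequency of the samples).
    """
    probs = softmax(logits)
    counts = [0] * len(logits)
    for s in samples:
        counts[s] += 1
    n = len(samples)
    return [l - lr * (p - c / n) for l, p, c in zip(logits, probs, counts)]


def self_train(logits, rounds=5, temperature=0.5, n_samples=256, lr=1.0, seed=0):
    """Repeat: sample from the current model at low temperature, then fit the samples."""
    rng = random.Random(seed)
    for _ in range(rounds):
        samples = sample_responses(logits, temperature, n_samples, rng)
        logits = sft_step(logits, samples, lr)
    return logits


if __name__ == "__main__":
    start = [1.0, 0.5, 0.0]
    print("before:", softmax(start))
    print("after: ", softmax(self_train(start)))
```

No reward or verifier appears anywhere in the loop; the only training signal is the model's own low-temperature samples. Whether and why this helps a real LLM on reasoning benchmarks is what the paper studies, and the toy says nothing about that.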


Got hit with a score-2 reviewer at ICML who clearly did not read the paper carefully. We wrote a 5,000-word rebuttal and got back a single line saying "thanks for the reply, however." There really should be some kind of reviewer reputation or credit system. Too many people seem to treat reviewing like a place to take out their frustration after their own papers get rejected.